CURP: Codebook-based Continuous User Representation for Personalized Generation with LLMs

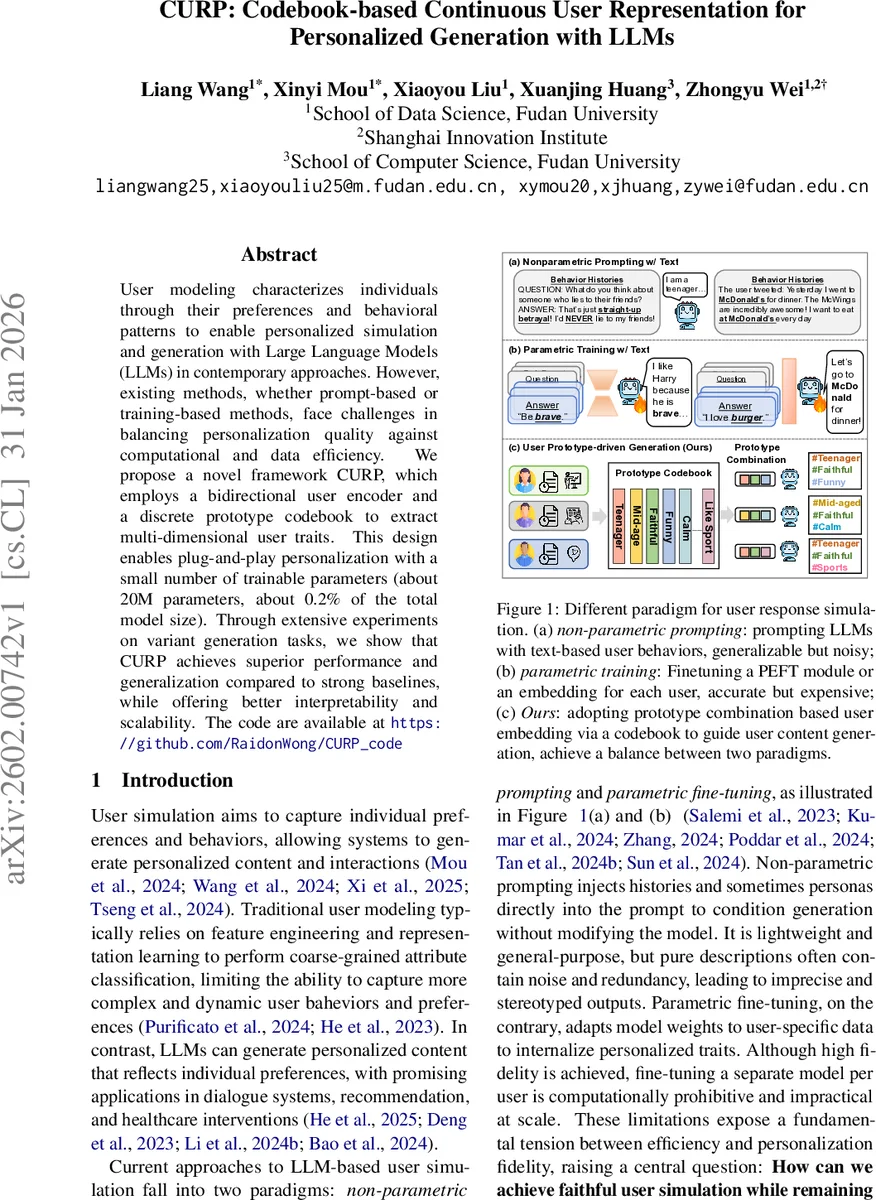

User modeling characterizes individuals through their preferences and behavioral patterns to enable personalized simulation and generation with Large Language Models (LLMs) in contemporary approaches. However, existing methods, whether prompt-based or training-based methods, face challenges in balancing personalization quality against computational and data efficiency. We propose a novel framework CURP, which employs a bidirectional user encoder and a discrete prototype codebook to extract multi-dimensional user traits. This design enables plug-and-play personalization with a small number of trainable parameters (about 20M parameters, about 0.2% of the total model size). Through extensive experiments on variant generation tasks, we show that CURP achieves superior performance and generalization compared to strong baselines, while offering better interpretability and scalability. The code are available at https://github.com/RaidonWong/CURP_code

💡 Research Summary

The paper introduces CURP, a novel framework for personalized generation with large language models (LLMs) that bridges the gap between lightweight prompting and heavyweight fine‑tuning. Existing approaches suffer from a trade‑off: non‑parametric prompting injects raw user histories or persona descriptions directly into the prompt, which is computationally cheap but noisy and limited in expressive power; parametric fine‑tuning (e.g., LoRA adapters or per‑user embeddings) yields high fidelity but requires a separate set of trainable parameters for each user, making it infeasible at scale. CURP resolves this tension by representing each user as a sparse combination of learnable prototypes stored in a discrete codebook.

The architecture consists of three components: (1) a bidirectional user encoder E (based on a pretrained encoder‑only model such as Contriever) that transforms a user’s interaction history into dense vectors; (2) a prototype codebook C learned from a large behavior pool (≈150 k users, 24 M histories). Dense vectors are split into L sub‑spaces (L=4 in the experiments) and quantized via Product Quantization (PQ) into indices of K=1,000 shared prototypes. The codebook is trained with a composite loss that balances reconstruction error (L_quant), prototype diversity (L_div), and uniform usage (L_usage) to avoid collapse. (3) A lightweight MLP projects the quantized prototype embeddings into the LLM decoder’s embedding space. The projected prototypes are prepended to the current query embedding and fed into a frozen LLM decoder (Qwen‑2.5‑8B in the paper). Because the decoder remains unchanged, the method is model‑agnostic and incurs only ~20 M additional parameters (≈0.2 % of the base model).

Training proceeds in two stages. First, the Prototype Codebook Construction (PCC) stage builds a generalizable codebook from the large behavior pool, keeping the encoder frozen. Second, the Prototype Behavior Aligning (PBA) stage fine‑tunes the MLP (and optionally a small adapter) to maximize the likelihood of the ground‑truth user response given the quantized prototype sequence and the query. No LLM weights are updated, which dramatically reduces computational cost and enables plug‑and‑play personalization.

Experiments cover three generation tasks: (a) personalized review generation, (b) dialogue response generation, and (c) customized product description generation. Evaluation metrics include BLEU, ROUGE, GPT‑4‑based quality scores, and embedding‑based semantic similarity. CURP consistently outperforms strong baselines: zero‑shot, in‑context learning (ICL), prompt‑only methods, and parameter‑efficient fine‑tuning (LoRA, per‑user adapters). Gains range from 3 % to 5 % absolute improvement across metrics, with especially strong performance on cold‑start users where the shared prototype space provides robust generalization. Ablation studies vary codebook size, number of sub‑spaces, and loss weights, confirming that a moderate codebook (K≈1,000) and L=4 achieve the best trade‑off between expressiveness and efficiency.

Beyond performance, CURP offers practical advantages. Because only discrete code indices need to be transmitted, the framework supports privacy‑preserving cloud‑edge collaboration: a client device can run the encoder and quantization locally, send the compact index vector to a server, and receive a personalized response without exposing raw interaction text. The codebook’s interpretability (each prototype corresponds to a human‑readable trait such as “#Teenager”, “#Faithful”, “#Funny”) also facilitates controllable generation and debugging.

The authors claim three main contributions: (1) a prototype‑based codebook that encodes users as sparse, interpretable combinations of learned traits, replacing fragile textual prompts; (2) a two‑stage training pipeline that enables personalization without modifying the LLM, making the approach model‑agnostic; (3) extensive empirical validation across diverse tasks demonstrating superior quality, scalability, and generalization.

Future directions suggested include extending the codebook to multimodal behavior (images, audio), incorporating dynamic prototype updates to capture evolving user preferences, and exploring hierarchical or user‑group‑aware codebooks for finer granularity. Overall, CURP presents a compelling solution for large‑scale, efficient, and interpretable personalization in LLM‑driven applications.

Comments & Academic Discussion

Loading comments...

Leave a Comment