Learning to Accelerate Vision-Language-Action Models through Adaptive Visual Token Caching

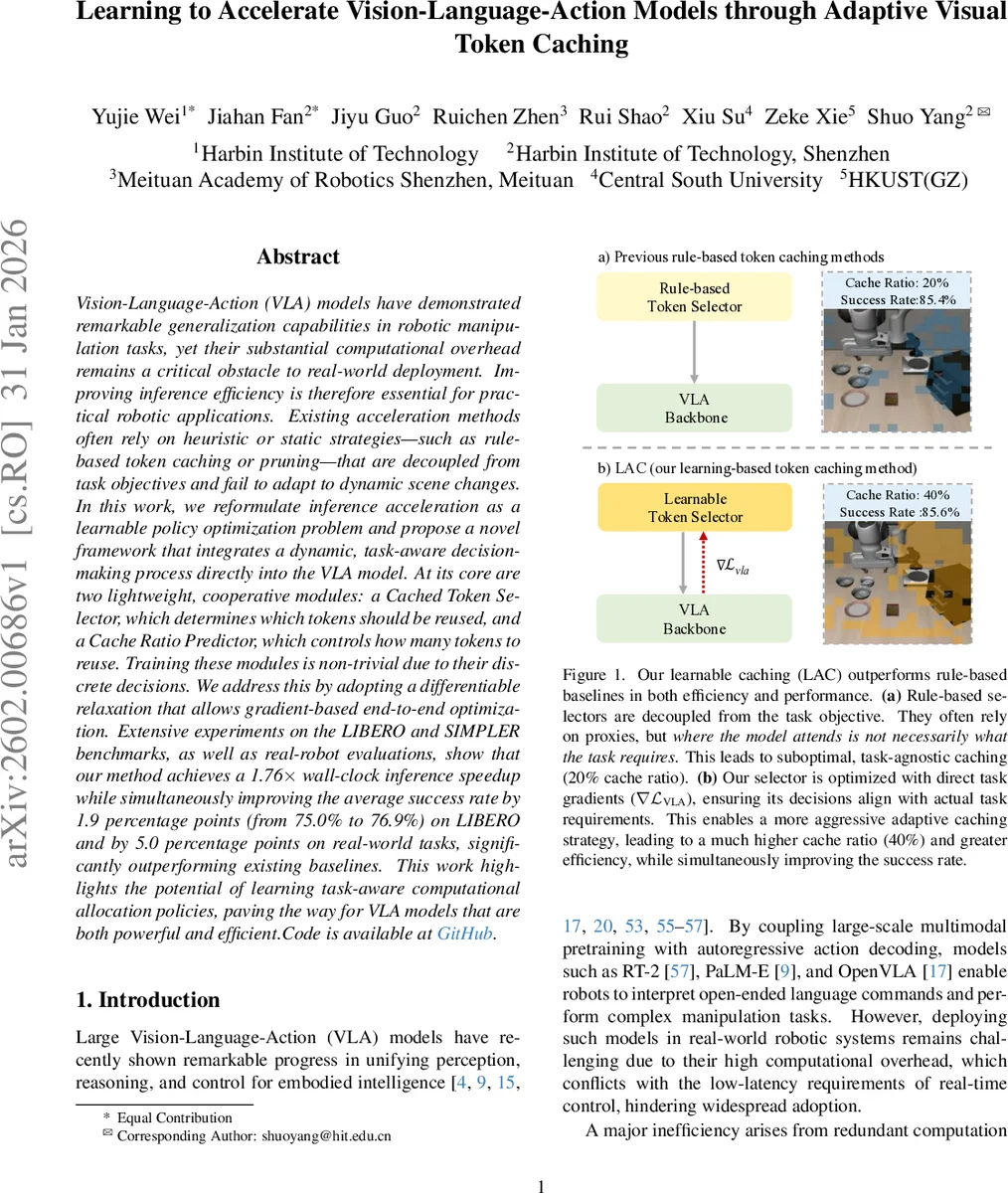

Vision-Language-Action (VLA) models have demonstrated remarkable generalization capabilities in robotic manipulation tasks, yet their substantial computational overhead remains a critical obstacle to real-world deployment. Improving inference efficiency is therefore essential for practical robotic applications. Existing acceleration methods often rely on heuristic or static strategies–such as rule-based token caching or pruning–that are decoupled from task objectives and fail to adapt to dynamic scene changes. In this work, we reformulate inference acceleration as a learnable policy optimization problem and propose a novel framework that integrates a dynamic, task-aware decision-making process directly into the VLA model. At its core are two lightweight, cooperative modules: a Cached Token Selector, which determines which tokens should be reused, and a Cache Ratio Predictor, which controls how many tokens to reuse. Training these modules is non-trivial due to their discrete decisions. We address this by adopting a differentiable relaxation that allows gradient-based end-to-end optimization. Extensive experiments on the LIBERO and SIMPLER benchmarks, as well as real-robot evaluations, show that our method achieves a 1.76x wall-clock inference speedup while simultaneously improving the average success rate by 1.9 percentage points (from 75.0% to 76.9%) on LIBERO and by 5.0 percentage points on real-world tasks, significantly outperforming existing baselines. This work highlights the potential of learning task-aware computational allocation policies, paving the way for VLA models that are both powerful and efficient.

💡 Research Summary

The paper tackles the latency bottleneck of large Vision‑Language‑Action (VLA) models, which, despite their impressive generalization in robotic manipulation, are too computationally heavy for real‑time deployment. Existing acceleration techniques rely on static, rule‑based token caching or pruning that are disconnected from the downstream task and cannot adapt to dynamic visual scenes. The authors reformulate inference acceleration as a learnable policy optimization problem and introduce a framework called Learnable Adaptive Caching (LAC). LAC inserts two lightweight cooperative modules into a frozen VLA backbone: (1) a Cached Token Selector that predicts a per‑token saliency score indicating whether a visual token should be recomputed or reused, and (2) a Cache Ratio Predictor that decides the overall proportion of tokens to cache for the current timestep. Both modules take as input a motion‑aware representation formed by concatenating the raw image frame with a lightweight optical‑flow map (computed by RAFT‑small), providing a direct signal of scene dynamics.

Training proceeds in two stages. In Stage I, the selector is pretrained to mimic the VLA’s internal attention distribution using an MSE loss, giving it a sensible initialization that stabilizes later learning. In Stage II, the selector and predictor are jointly optimized while the VLA parameters remain frozen. To enable gradient flow through the inherently discrete decisions (top‑k token selection and arg‑max cache‑ratio selection), the authors employ the Gumbel‑Softmax trick with straight‑through estimation. The total loss combines the original task loss L_VLA with a regularization term L_ratio that encourages higher cache ratios; a hyper‑parameter λ balances speed versus performance.

Empirical evaluation on the LIBERO and SIMPLER benchmarks, as well as on real‑world robotic platforms, demonstrates that LAC can increase the cache ratio from a typical 20 % (used by rule‑based baselines) to about 40 % without sacrificing, and even improving, task success. Wall‑clock inference time is reduced by a factor of 1.76×, while success rates rise from 75.0 % to 76.9 % on LIBERO (a 1.9 % absolute gain) and achieve a 5 % absolute improvement on real‑robot tasks. Ablation studies confirm that dynamic adjustment of the cache ratio—high in static scenes, low in highly dynamic ones—provides the best trade‑off between efficiency and accuracy.

The contributions are fourfold: (1) reframing VLA acceleration as a differentiable policy learning problem, (2) designing two cooperative, low‑overhead modules for token‑level and scene‑level computation budgeting, (3) introducing a differentiable discrete decision mechanism that enables end‑to‑end training, and (4) validating the approach with extensive experiments that show simultaneous gains in speed and performance. The work opens avenues for further research, such as extending the motion signal to handle illumination changes, incorporating additional modalities (depth, tactile), and exploring meta‑learning or reinforcement‑learning strategies to generalize the caching policy across unseen tasks and environments. Overall, LAC presents a practical and principled solution for making powerful VLA models viable in real‑time robotic applications.

Comments & Academic Discussion

Loading comments...

Leave a Comment