CoRe-Fed: Bridging Collaborative and Representation Fairness via Federated Embedding Distillation

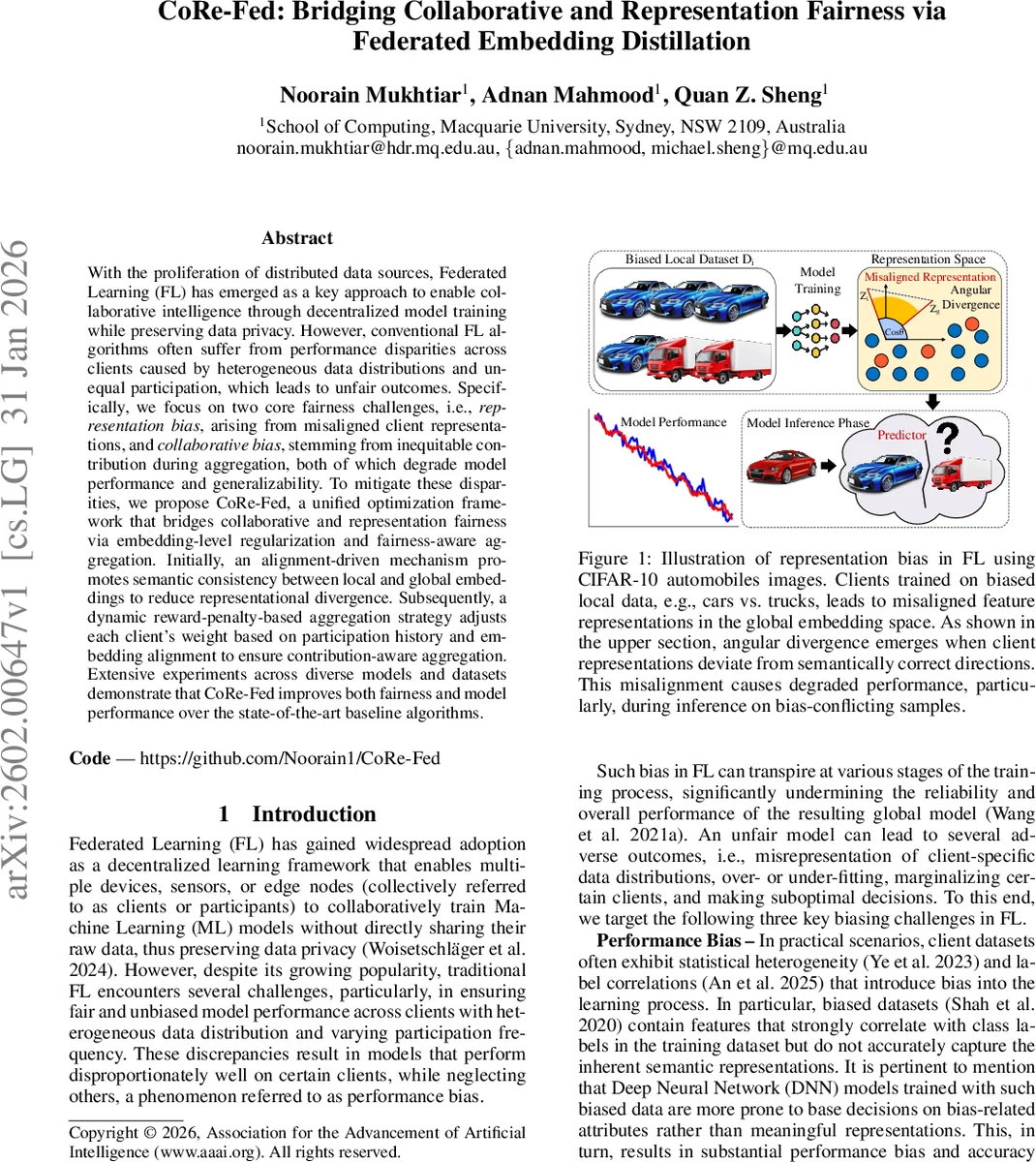

With the proliferation of distributed data sources, Federated Learning (FL) has emerged as a key approach to enable collaborative intelligence through decentralized model training while preserving data privacy. However, conventional FL algorithms often suffer from performance disparities across clients caused by heterogeneous data distributions and unequal participation, which leads to unfair outcomes. Specifically, we focus on two core fairness challenges, i.e., representation bias, arising from misaligned client representations, and collaborative bias, stemming from inequitable contribution during aggregation, both of which degrade model performance and generalizability. To mitigate these disparities, we propose CoRe-Fed, a unified optimization framework that bridges collaborative and representation fairness via embedding-level regularization and fairness-aware aggregation. Initially, an alignment-driven mechanism promotes semantic consistency between local and global embeddings to reduce representational divergence. Subsequently, a dynamic reward-penalty-based aggregation strategy adjusts each client’s weight based on participation history and embedding alignment to ensure contribution-aware aggregation. Extensive experiments across diverse models and datasets demonstrate that CoRe-Fed improves both fairness and model performance over the state-of-the-art baseline algorithms.

💡 Research Summary

CoRe‑Fed addresses two pervasive sources of unfairness in federated learning (FL): representation bias, which arises when heterogeneous client data lead to divergent feature embeddings, and collaborative bias, which stems from naïve aggregation schemes that treat all client updates equally regardless of their quality or participation frequency. The proposed framework introduces a two‑stage mechanism. First, an embedding‑level alignment module extracts normalized client embeddings using a local deep feature extractor, aggregates them on the server to form a global prototype, and then applies a temperature‑scaled InfoNCE contrastive loss to pull each client embedding toward the global prototype while pushing it away from other client embeddings. This contrastive step is followed by embedding‑level knowledge distillation: the server computes an alignment vector for each client as the cosine similarity‑scaled global prototype, and each client updates its embedding via a convex combination controlled by a distillation coefficient β. This process reduces angular divergence among client representations and encourages a shared semantic space without discarding local nuances. Second, a contribution‑aware aggregation scheme computes two scores for each client: (i) a participation frequency score τ derived from a sliding‑window count of recent rounds, and (ii) a representation similarity score α given by the cosine similarity between the distilled client embedding and the global prototype. These scores are fused—either linearly or via a non‑linear mapping—to produce a final weight w_i that modulates the standard FedAvg update. Consequently, clients that both participate frequently and provide embeddings well‑aligned with the global semantic structure exert greater influence on the global model, while infrequent or poorly aligned clients are down‑weighted. The authors evaluate CoRe‑Fed on heterogeneous benchmarks including CIFAR‑10 with class‑biased splits, FEMNIST with user‑specific handwriting styles, and a medical imaging dataset with institution‑level distribution shifts. Using ResNet‑18, CNN, and MLP backbones, they compare against FedAvg, FedProx, Shapley‑based weighting, and recent representation‑fairness methods. Metrics cover angular divergence, client‑wise accuracy gaps, overall test accuracy, and fairness indices such as Jain’s index. Results show that CoRe‑Fed improves average cosine similarity from 0.78 to 0.91 (≈12 % gain), reduces client accuracy disparity by over 30 % relative to baselines, and maintains or slightly improves overall accuracy (1–2 % higher). Hyper‑parameter analysis demonstrates robustness across a range of temperature and β values, and the additional communication overhead is minimal (≈1–2 % extra bandwidth for transmitting a single embedding vector per client per round). In summary, CoRe‑Fed provides a unified solution that simultaneously mitigates representation and collaborative biases, yielding fairer and more generalizable global models in non‑IID federated environments. Future work is suggested on multimodal data, asynchronous participation, and integration with differential privacy mechanisms.

Comments & Academic Discussion

Loading comments...

Leave a Comment