Diff-PC: Identity-preserving and 3D-aware Controllable Diffusion for Zero-shot Portrait Customization

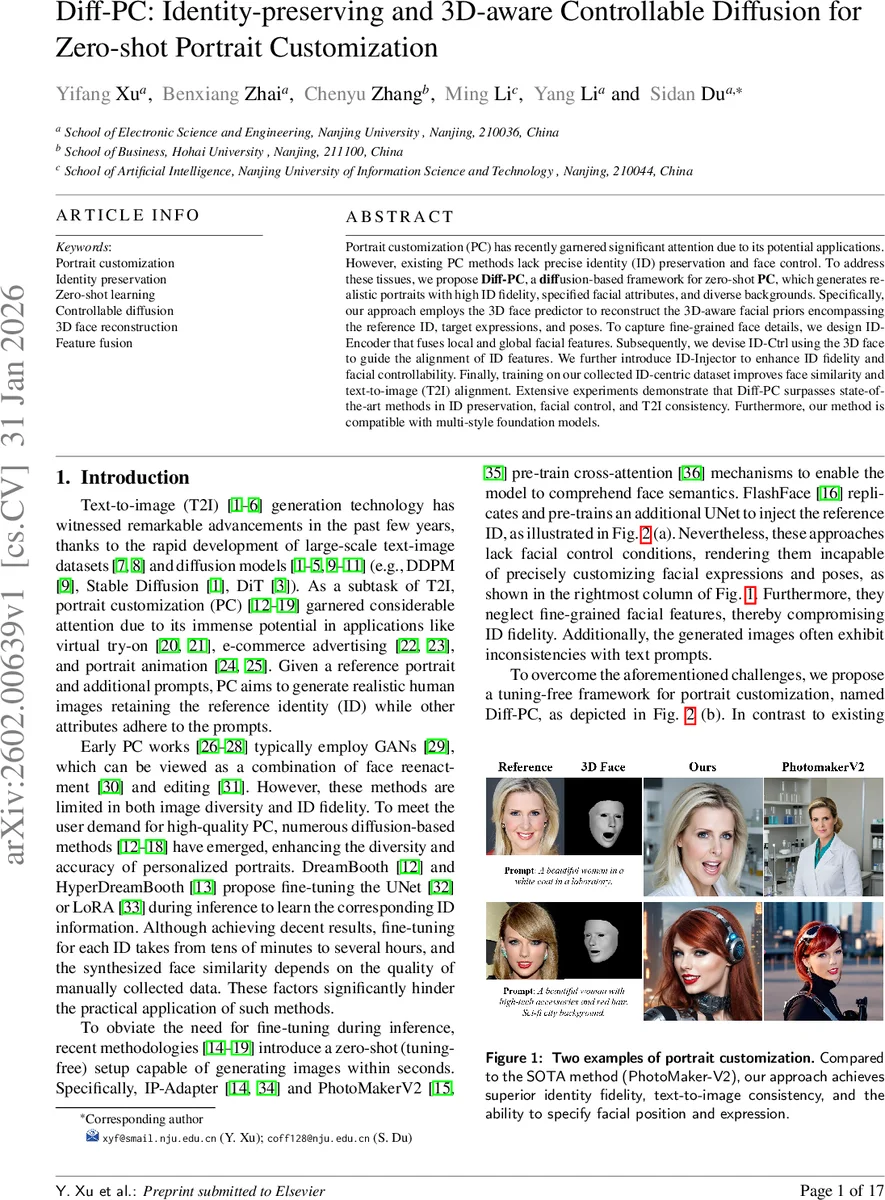

Portrait customization (PC) has recently garnered significant attention due to its potential applications. However, existing PC methods lack precise identity (ID) preservation and face control. To address these tissues, we propose Diff-PC, a diffusion-based framework for zero-shot PC, which generates realistic portraits with high ID fidelity, specified facial attributes, and diverse backgrounds. Specifically, our approach employs the 3D face predictor to reconstruct the 3D-aware facial priors encompassing the reference ID, target expressions, and poses. To capture fine-grained face details, we design ID-Encoder that fuses local and global facial features. Subsequently, we devise ID-Ctrl using the 3D face to guide the alignment of ID features. We further introduce ID-Injector to enhance ID fidelity and facial controllability. Finally, training on our collected ID-centric dataset improves face similarity and text-to-image (T2I) alignment. Extensive experiments demonstrate that Diff-PC surpasses state-of-the-art methods in ID preservation, facial control, and T2I consistency. Furthermore, our method is compatible with multi-style foundation models.

💡 Research Summary

DiffPC introduces a novel zero‑shot portrait customization framework that preserves a subject’s identity while allowing precise control over facial expression, pose, and background through textual prompts. The method leverages a 3D face predictor (based on SMIRK) to decompose a reference image and a target image into identity (α), expression (β), and pose (γ) parameters. By keeping α unchanged and swapping β and γ, a 3D mesh reflecting the desired expression and pose is generated and rasterized into a 2D guidance image (I₃D) using a differentiable renderer.

To capture fine‑grained identity features, an ID‑Encoder fuses global embeddings from ArcFace with local embeddings from CLIP‑Image, projects them to a common dimension, and merges them via a transformer decoder, producing refined ID tokens (F′₍id₎). These tokens are fed into the ID‑Ctrl module, which encodes I₃D into latent space (z₍3d₎) and injects it into a replicated UNet encoder/middle blocks, aligning 3D geometric cues with diffusion denoising steps.

The ID‑Injector then directly injects the combined identity‑control condition (c₍id₎) into the UNet denoiser, complementing the standard text condition (c₍txt₎) in cross‑attention layers. This dual‑conditioning ensures high fidelity to the original face while respecting the textual description.

Training utilizes a newly curated “ID‑centric” dataset of over 100k high‑quality portrait images, automatically cleaned via background matting and face detection, and paired with diverse textual prompts. Joint optimization of text‑image alignment loss and facial similarity loss further improves consistency.

Extensive evaluations show that DiffPC outperforms state‑of‑the‑art zero‑shot methods (e.g., PhotoMaker‑V2, FlashFace, PuLID) in identity preservation (lower FID‑ID, higher ArcFace similarity), expression/pose controllability, and text‑image alignment (higher cosine similarity). The approach is compatible with multiple SDXL‑based style models, enabling users to generate portraits in various artistic styles without any fine‑tuning, and produces results within seconds, making it suitable for real‑time applications.

Comments & Academic Discussion

Loading comments...

Leave a Comment