The TMU System for the XACLE Challenge: Training Large Audio Language Models with CLAP Pseudo-Labels

In this paper, we propose a submission to the x-to-audio alignment (XACLE) challenge. The goal is to predict semantic alignment of a given general audio and text pair. The proposed system is based on a large audio language model (LALM) architecture. We employ a three-stage training pipeline: automated audio captioning pretraining, pretraining with CLAP pseudo-labels, and fine-tuning on the XACLE dataset. Our experiments show that pretraining with CLAP pseudo-labels is the primary performance driver. On the XACLE test set, our system reaches an SRCC of 0.632, significantly outperforming the baseline system (0.334) and securing third place in the challenge team ranking. Code and models can be found at https://github.com/shiotalab-tmu/tmu-xacle2026

💡 Research Summary

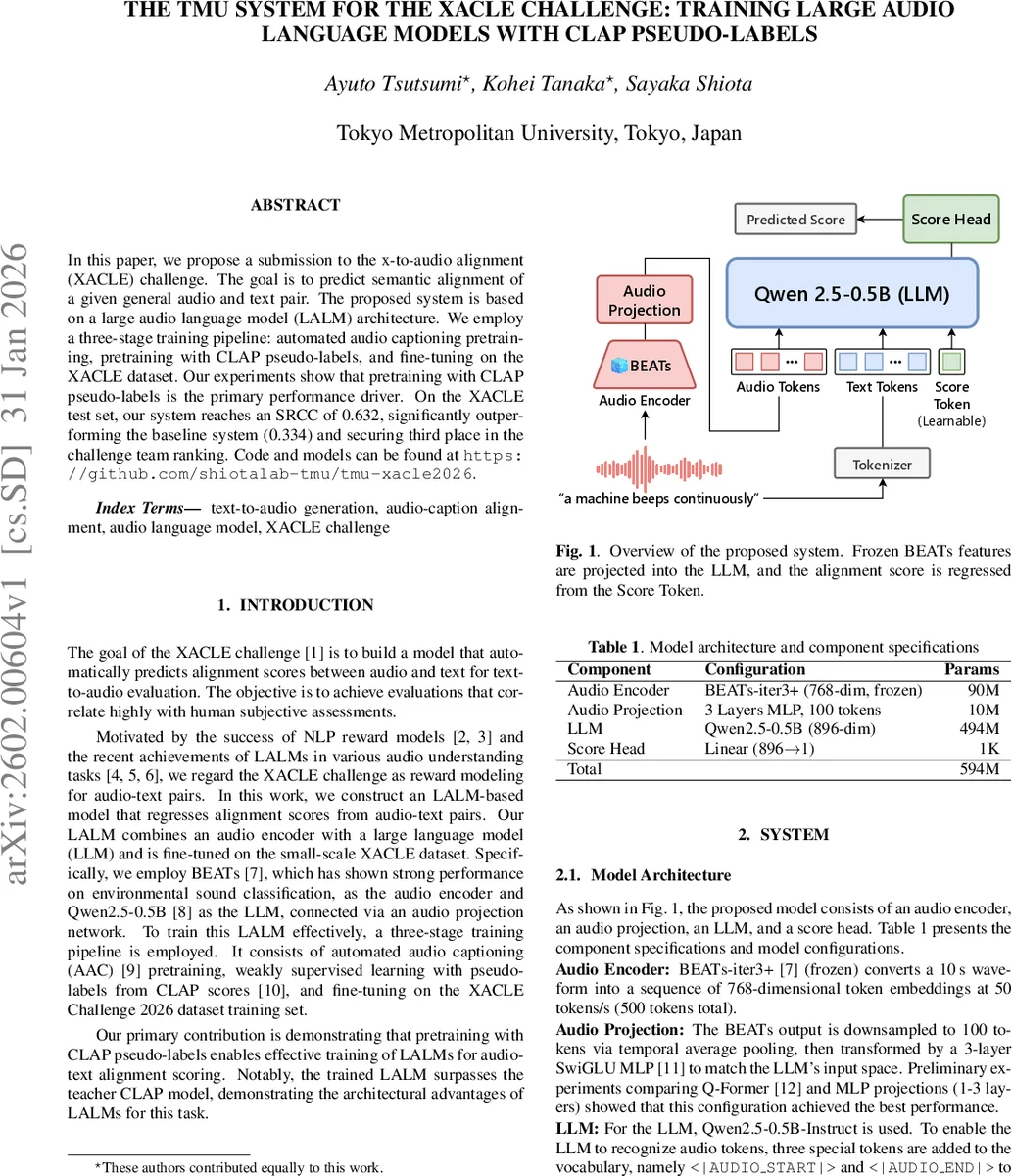

The paper presents TMU’s entry to the 2026 XACLE (x‑to‑audio alignment) challenge, where the task is to predict a semantic alignment score for a given audio‑text pair that correlates strongly with human judgments. The authors build a Large Audio Language Model (LALM) that fuses a frozen BEATs‑iter3+ audio encoder with the Qwen2.5‑0.5B‑Instruct large language model (LLM). The audio encoder converts a 10‑second waveform into 500 tokens of 768‑dimensional embeddings (50 tokens per second). These embeddings are temporally pooled to 100 tokens and passed through a three‑layer SwiGLU MLP to match the LLM’s 896‑dimensional input space. Three special tokens—<|AUDIO START|>, <|AUDIO END|>, and <|SCORE|>—are added to the LLM vocabulary; the input format is “text … <|AUDIO START|> audio tokens … <|AUDIO END|> <|SCORE|>”. The hidden state at the <|SCORE|> position is fed to a linear head (896 → 1) to produce the alignment score.

Training proceeds in three stages. Stage 1 pre‑trains the projection and LLM on Automated Audio Captioning (AAC) using 273 K samples from AudioCaps and a subset of AudioSetCaps derived from VGGSound. This stage teaches the model basic audio‑text correspondence but does not directly target scoring. Stage 2 introduces a score head and performs weakly‑supervised learning with pseudo‑labels generated by a teacher model, HumanCLAP‑M2D (a CLAP variant fine‑tuned on human perception data). To create a balanced training set, the authors augment the AAC data by swapping audio or text from different samples, thereby synthesizing low‑score (negative) pairs and expanding the dataset to roughly 1.064 M examples. The loss is ListNet, a listwise ranking objective that directly optimizes the ordering of scores, which aligns with the evaluation metric Spearman’s Rank Correlation Coefficient (SRCC). Stage 3 fine‑tunes the entire system on the official XACLE training set (7.5 K pairs) using SpecAugment (frequency masking = 15, time masking = 30) for regularization and retains the ListNet loss.

Experiments use AdamW (batch = 16), learning rates of 1e‑5 for Stages 1 and 2, and 6.2e‑6 for Stage 3. Results show a clear progression: AAC alone yields SRCC ≈ 0.352 on validation; adding CLAP pseudo‑label pre‑training jumps to 0.598; full fine‑tuning reaches 0.674 (validation) and 0.625 (test). A model trained without Stage 1 (directly from pseudo‑label pre‑training to fine‑tuning) attains virtually the same test SRCC (0.626), indicating that AAC contributes little to the final scoring task. An ensemble that rank‑averages the full pipeline and the “no‑AAC” model achieves the best test SRCC of 0.632, surpassing the official baseline (0.334) by a large margin and securing third place in the competition.

Key insights include: (1) CLAP‑based pseudo‑labels are the primary driver of performance, enabling the LALM to match and even exceed the teacher model (HumanCLAP‑M2D scores 0.602). (2) The LALM architecture—integrating frozen high‑quality audio embeddings with a powerful LLM—offers structural advantages over two‑stage CLAP pipelines, likely because the LLM can jointly model audio and textual context. (3) ListNet’s listwise ranking loss aligns well with SRCC, proving more effective than regression losses for this ranking‑centric task. (4) AAC pre‑training, while beneficial for generative captioning, does not translate into better alignment scoring, suggesting a task mismatch between generation and discriminative ranking.

The authors conclude that large‑scale weak supervision via CLAP pseudo‑labels, combined with a unified audio‑text language model, provides a highly effective solution for audio‑text alignment evaluation. They suggest future work such as jointly fine‑tuning the audio encoder, exploring richer multimodal pre‑training datasets (e.g., video‑audio‑text), testing alternative ranking losses (LambdaRank, RankNet), and developing lighter‑weight variants for real‑time applications.

Comments & Academic Discussion

Loading comments...

Leave a Comment