Learning to Decode Against Compositional Hallucination in Video Multimodal Large Language Models

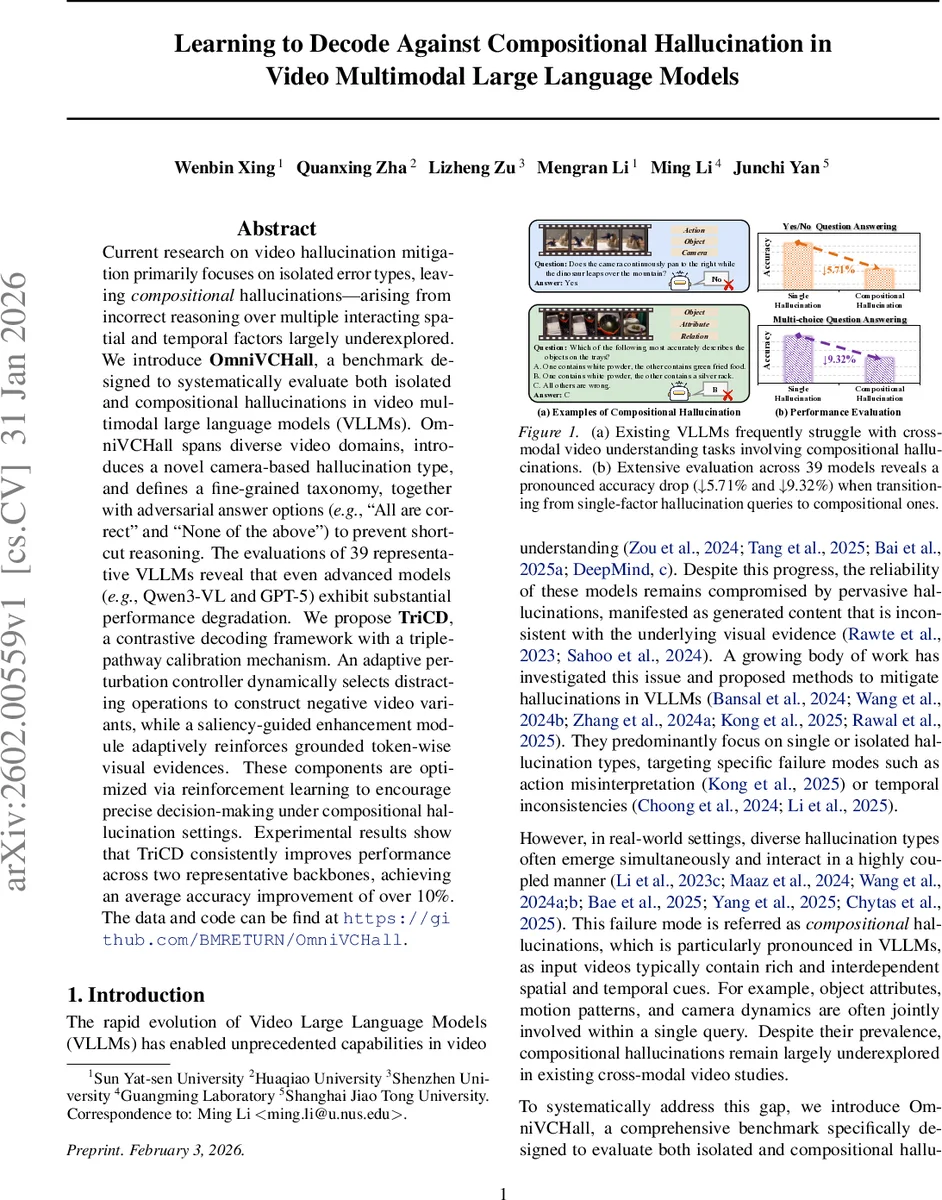

Current research on video hallucination mitigation primarily focuses on isolated error types, leaving compositional hallucinations, arising from incorrect reasoning over multiple interacting spatial and temporal factors largely underexplored. We introduce OmniVCHall, a benchmark designed to systematically evaluate both isolated and compositional hallucinations in video multimodal large language models (VLLMs). OmniVCHall spans diverse video domains, introduces a novel camera-based hallucination type, and defines a fine-grained taxonomy, together with adversarial answer options (e.g., “All are correct” and “None of the above”) to prevent shortcut reasoning. The evaluations of 39 representative VLLMs reveal that even advanced models (e.g., Qwen3-VL and GPT-5) exhibit substantial performance degradation. We propose TriCD, a contrastive decoding framework with a triple-pathway calibration mechanism. An adaptive perturbation controller dynamically selects distracting operations to construct negative video variants, while a saliency-guided enhancement module adaptively reinforces grounded token-wise visual evidences. These components are optimized via reinforcement learning to encourage precise decision-making under compositional hallucination settings. Experimental results show that TriCD consistently improves performance across two representative backbones, achieving an average accuracy improvement of over 10%. The data and code can be find at https://github.com/BMRETURN/OmniVCHall.

💡 Research Summary

The paper addresses a largely overlooked failure mode of video multimodal large language models (VLLMs) called “compositional hallucination,” where errors arise from incorrect reasoning over multiple interacting spatial and temporal factors. To systematically study this problem, the authors introduce OmniVCHall, a new benchmark that evaluates both isolated (single‑factor) and compositional (multi‑factor) hallucinations. OmniVCHall comprises 823 high‑quality videos drawn from real‑world footage and AI‑generated content, paired with 9,027 visual question‑answer (VQA) items. The benchmark defines eight fine‑grained hallucination types—Object, Scene, Event, Action, Relation, Attribute, Temporal, and a novel Camera type that captures errors where camera motions are mistaken for object motions. Each question is categorized by complexity: single‑type (S) or compositional (C), and by format: binary yes/no (YNQA) or multiple‑choice (MCQA). To discourage shortcut reasoning, adversarial answer options such as “All are correct” and “None of the above” are injected into a substantial portion of the MCQA items. Human annotators verify captions and QA pairs, achieving an inter‑annotator agreement (Cohen’s κ) of 0.84 for video descriptions and 0.81 for QA validity, and a human accuracy of 95 % on the benchmark.

The authors evaluate 39 representative VLLMs—including state‑of‑the‑art systems like Qwen3‑VL and GPT‑5—on OmniVCHall. Results show a pronounced drop in performance when moving from single‑type to compositional queries: average accuracy declines by 5.71 % for yes/no and 9.32 % for multiple‑choice tasks. This demonstrates that even the most advanced models struggle to jointly reason over multiple spatial‑temporal cues.

To mitigate compositional hallucinations without modifying the underlying VLLM weights, the paper proposes TriCD (Triple‑Pathway Contrastive Decoding), a decoding‑time calibration framework consisting of three coordinated components:

-

Adaptive Perturbation Controller (APC) – Analyzes the video‑question context and dynamically selects a set of context‑aware perturbations (e.g., frame dropping, color jitter, camera‑angle alteration) to generate a negative video variant that is likely to confuse the model. The controller learns a policy that maximizes the discrepancy between the model’s original logits and those produced on the perturbed video.

-

Saliency‑Guided Enhancement (SGE) – Constructs a token‑wise visual saliency map by fusing spatial features from DINOv3 with temporal motion cues derived from Farneback optical flow. The saliency map is used to re‑weight visual tokens, reinforcing evidence that is crucial for answering the query while suppressing irrelevant regions.

-

Reinforcement Learning (RL) Optimizer – Treats the calibration process as a sequential decision problem. The reward combines (a) an increase in the probability of the correct answer on the original video and (b) a decrease in the probability of the same answer on the perturbed video. Proximal Policy Optimization (PPO) is employed to update both the APC policy and the SGE weighting parameters jointly.

TriCD operates entirely at inference time: the original pass extracts hidden states, the APC generates a tailored negative sample, the SGE produces saliency‑enhanced token embeddings, and the RL‑optimized policy adjusts the final logits before selection. Experiments on two backbones—Qwen3‑VL‑Instruct‑8B and VideoLLaMA3‑7B—show average accuracy improvements of 9.61 % and 12.03 % respectively, with the most significant gains on compositional MCQA tasks that include adversarial options. Ablation studies confirm that both the adaptive perturbations and the saliency‑guided enhancement contribute synergistically; removing either component reduces the gain by roughly half.

The paper’s contributions are threefold: (1) identification and systematic study of compositional hallucinations in VLLMs, (2) the OmniVCHall benchmark that offers fine‑grained taxonomy, balanced real and synthetic video sources, and adversarial answer injection, and (3) the TriCD framework that demonstrates effective, model‑agnostic mitigation of these hallucinations through contrastive decoding and saliency‑driven grounding. Limitations include reliance on a predefined set of perturbation operations and the use of relatively simple visual features for saliency; future work may explore meta‑learning of perturbations and more sophisticated video transformers for attention modeling. Overall, the work provides a valuable testbed and a practical decoding‑time solution for improving the reliability of video‑enabled LLMs in complex, multi‑factor reasoning scenarios.

Comments & Academic Discussion

Loading comments...

Leave a Comment