ConLA: Contrastive Latent Action Learning from Human Videos for Robotic Manipulation

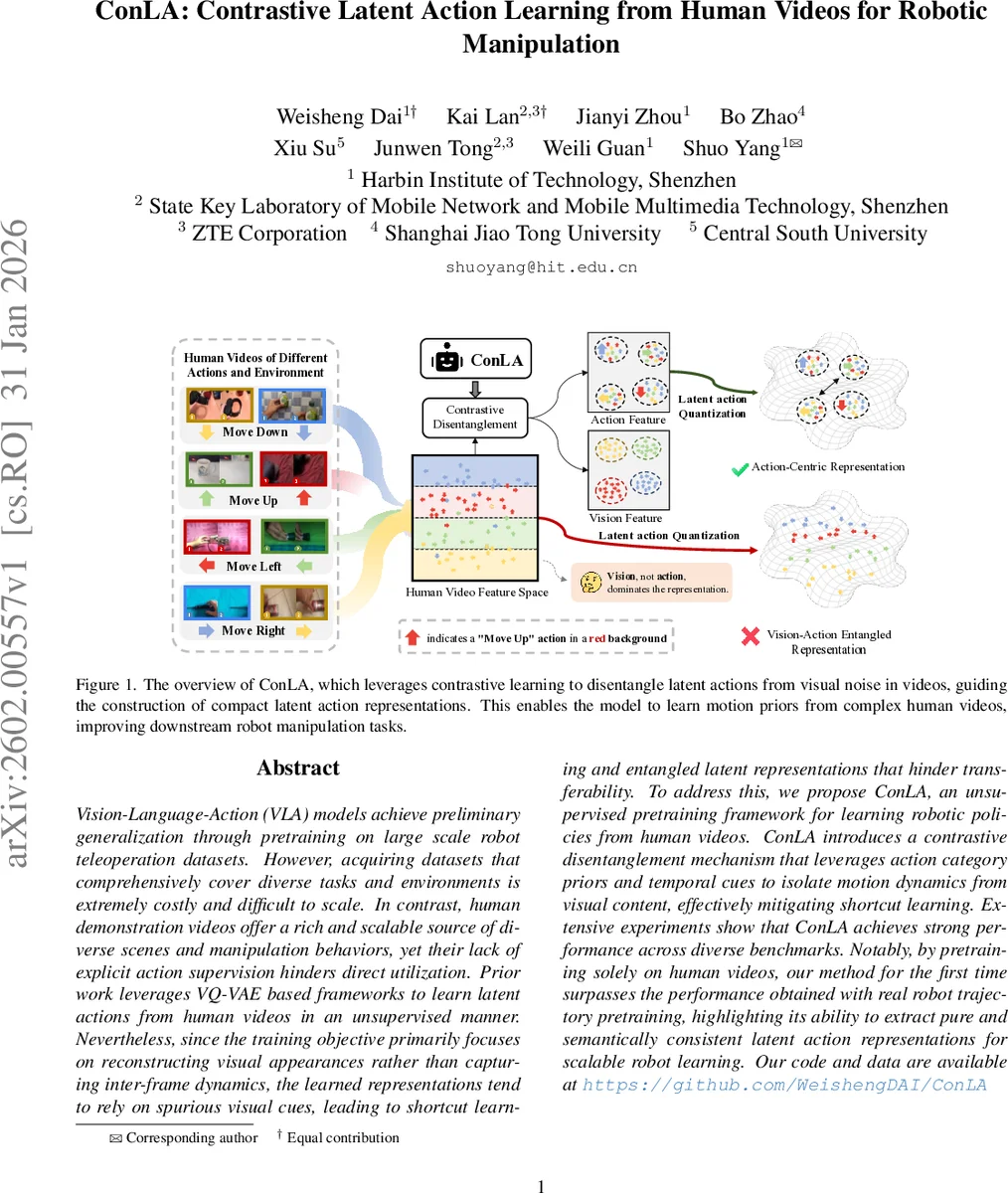

Vision-Language-Action (VLA) models achieve preliminary generalization through pretraining on large scale robot teleoperation datasets. However, acquiring datasets that comprehensively cover diverse tasks and environments is extremely costly and difficult to scale. In contrast, human demonstration videos offer a rich and scalable source of diverse scenes and manipulation behaviors, yet their lack of explicit action supervision hinders direct utilization. Prior work leverages VQ-VAE based frameworks to learn latent actions from human videos in an unsupervised manner. Nevertheless, since the training objective primarily focuses on reconstructing visual appearances rather than capturing inter-frame dynamics, the learned representations tend to rely on spurious visual cues, leading to shortcut learning and entangled latent representations that hinder transferability. To address this, we propose ConLA, an unsupervised pretraining framework for learning robotic policies from human videos. ConLA introduces a contrastive disentanglement mechanism that leverages action category priors and temporal cues to isolate motion dynamics from visual content, effectively mitigating shortcut learning. Extensive experiments show that ConLA achieves strong performance across diverse benchmarks. Notably, by pretraining solely on human videos, our method for the first time surpasses the performance obtained with real robot trajectory pretraining, highlighting its ability to extract pure and semantically consistent latent action representations for scalable robot learning.

💡 Research Summary

ConLA (Contrastive Latent Action Learning) tackles the problem of learning robotic manipulation policies from abundant but unlabeled human videos. Existing Vision‑Language‑Action (VLA) models rely on massive teleoperation datasets that are expensive to collect and limited in diversity. Prior attempts to extract latent actions from human videos using VQ‑VAE–based inverse dynamics models suffer from “shortcut learning”: the reconstruction loss drives the model to encode future visual appearance rather than true inter‑frame motion, resulting in entangled representations that do not transfer well to robots.

ConLA introduces a contrastive disentanglement mechanism that leverages two intrinsic priors in human videos: (1) action category priors – recurring manipulation primitives (e.g., pick, place, move) provide weak supervisory labels, and (2) temporal priors – the order of frames encodes motion dynamics. The method proceeds in three stages.

Stage 1 – Contrastive Latent Action Learning. A pair of frames (current Oₜ and future Oₜ₊ₖ) is fed to a spatial‑temporal transformer encoder that outputs a latent vector Z. Z is split into an action‑related part Zₐ′ and a visual‑related part Zᵥ′. Zₐ′ passes through an MLP “action head” to produce Zₐ, which is trained with a supervised contrastive loss using the weak action class labels: embeddings of the same class are pulled together, different classes are pushed apart. Zᵥ′ goes through a “vision head” trained with an unsupervised contrastive loss that encourages visual similarity for frames sharing the same background while remaining distinct from motion cues. After contrastive processing, Zₐ is quantized with a VQ‑VAE codebook, yielding discrete latent‑action tokens Zₐq. A decoder receives the current frame and Zₐq and reconstructs the future frame, providing the standard reconstruction loss. The total objective combines reconstruction, action‑centric contrastive, and vision‑centric contrastive terms, effectively preventing the model from memorizing visual content and forcing it to capture genuine motion dynamics.

Stage 2 – Latent‑Action Pretraining. The discrete tokens Zₐq are used as targets for an autoregressive Vision‑Language‑Action model. The model receives visual observations and natural‑language instructions, predicts the next latent‑action token, and learns a policy that maps observations and commands to a sequence of latent actions.

Stage 3 – Action Fine‑Tuning. A small amount of real robot trajectory data is employed to learn a mapping from the latent‑action tokens to executable motor commands (e.g., joint velocities). This fine‑tuning aligns the abstract latent actions extracted from human videos with the robot’s control space.

Extensive experiments on simulation benchmarks (e.g., SimplerEnv) and real‑world manipulation tasks demonstrate that ConLA, pretrained solely on human videos, outperforms the previous VQ‑VAE‑based LAP‑A by 12.5 % in success rate and even exceeds policies pretrained on actual robot teleoperation data by 1.1 %. Ablation studies confirm that both the action‑centric and vision‑centric contrastive components are essential; removing either re‑introduces shortcut learning and degrades performance.

In summary, ConLA shows that by injecting weak semantic supervision (action categories) and exploiting temporal structure through contrastive learning, one can disentangle motion from visual noise in large‑scale human video corpora. The resulting latent‑action representations are compact, semantically consistent, and transferable to robot manipulation policies, opening a scalable path toward leveraging the vast amount of freely available human demonstration videos for robot learning.

Comments & Academic Discussion

Loading comments...

Leave a Comment