RGBX-R1: Visual Modality Chain-of-Thought Guided Reinforcement Learning for Multimodal Grounding

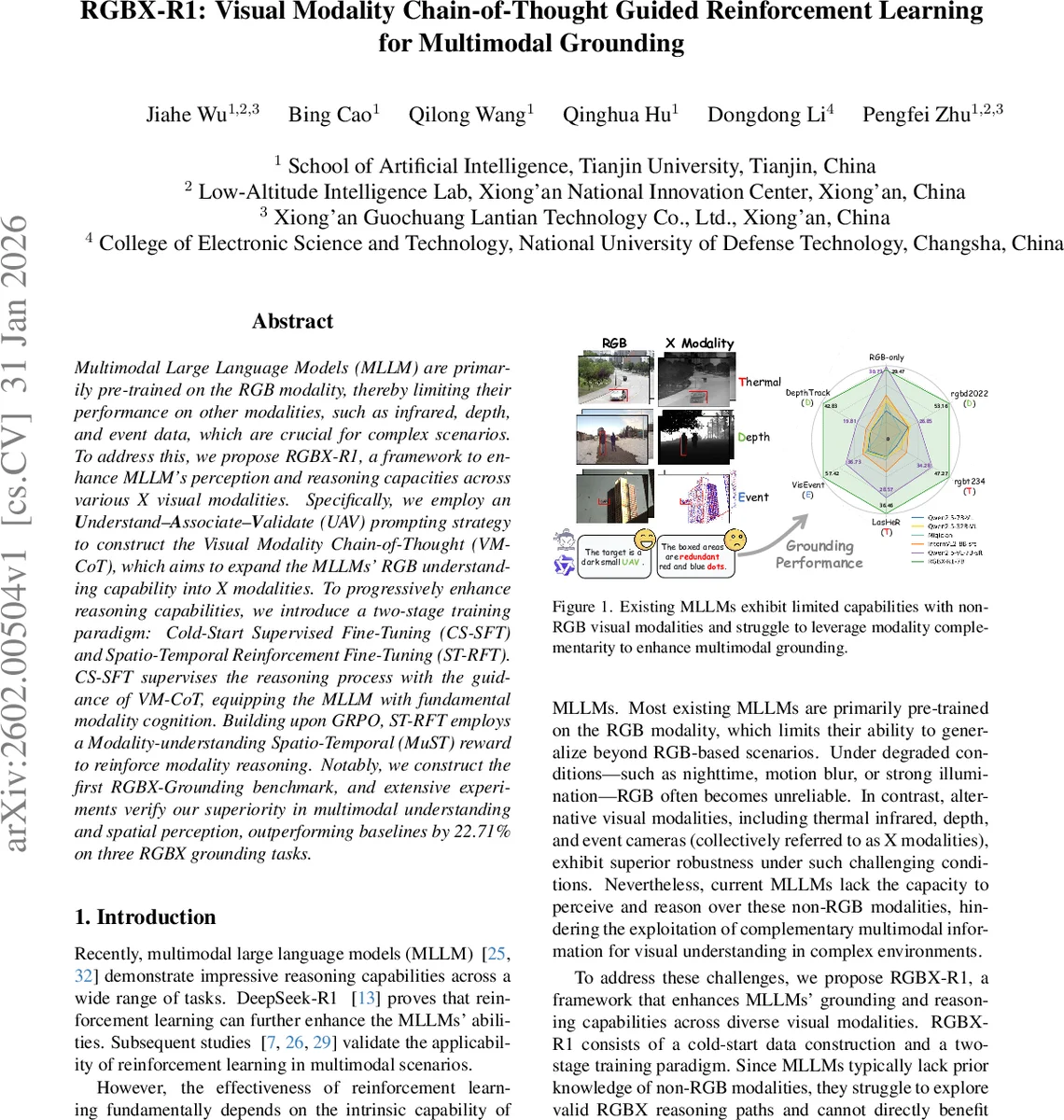

Multimodal Large Language Models (MLLM) are primarily pre-trained on the RGB modality, thereby limiting their performance on other modalities, such as infrared, depth, and event data, which are crucial for complex scenarios. To address this, we propose RGBX-R1, a framework to enhance MLLM’s perception and reasoning capacities across various X visual modalities. Specifically, we employ an Understand-Associate-Validate (UAV) prompting strategy to construct the Visual Modality Chain-of-Thought (VM-CoT), which aims to expand the MLLMs’ RGB understanding capability into X modalities. To progressively enhance reasoning capabilities, we introduce a two-stage training paradigm: Cold-Start Supervised Fine-Tuning (CS-SFT) and Spatio-Temporal Reinforcement Fine-Tuning (ST-RFT). CS-SFT supervises the reasoning process with the guidance of VM-CoT, equipping the MLLM with fundamental modality cognition. Building upon GRPO, ST-RFT employs a Modality-understanding Spatio-Temporal (MuST) reward to reinforce modality reasoning. Notably, we construct the first RGBX-Grounding benchmark, and extensive experiments verify our superiority in multimodal understanding and spatial perception, outperforming baselines by 22.71% on three RGBX grounding tasks.

💡 Research Summary

RGBX‑R1 addresses the critical limitation of current multimodal large language models (MLLMs), which are predominantly pre‑trained on RGB images and thus struggle with non‑RGB modalities such as infrared, depth, and event streams. The authors introduce a comprehensive framework that expands an MLLM’s perception and reasoning abilities to these X‑modalities through a two‑stage training pipeline.

First, they construct RGBX‑Grounding, a novel dataset derived from five public RGBX tracking benchmarks. By sampling keyframes at 24‑29 frame intervals and grouping four keyframes into a multi‑image grounding (MIG) sample, they create roughly 7 000 samples, each containing a language query, an RGB template, an X‑modality template, six search images, and ground‑truth bounding boxes.

Next, they generate Visual Modality Chain‑of‑Thought (VM‑CoT) annotations for each sample using Qwen2.5‑VL‑32B. The VM‑CoT follows an Understand‑Associate‑Validate (UAV) prompting strategy: (1) “Understand” extracts target semantics from the RGB template; (2) “Associate” links the RGB target to its counterpart in the X‑modality using spatial correspondence and an explanation of the X‑modality’s imaging principle; (3) “Validate” examines each search frame, leveraging cross‑modal complementarity to confirm the target’s location. A two‑stage filtering (self‑assessment and human review) yields over 5 000 high‑quality CoTs.

The first training stage, Cold‑Start Supervised Fine‑Tuning (CS‑SFT), uses VM‑CoT as supervision. To emphasize modality‑specific information, the authors propose Modality‑specific Token Weighting (MTW). For each token t, they compute its normalized frequency distribution P_m(t) within a given modality m and a mixture distribution Q_m′(t) across all other modalities. The KL‑divergence‑based contribution score Contrib(t,m)=P_m(t)·log(P_m(t)/Q_m′(t)) serves as a weight w_{t,m} that re‑weights the token‑level cross‑entropy loss, encouraging the model to focus on tokens that are distinctive for the target modality.

In the second stage, Spatio‑Temporal Reinforcement Fine‑Tuning (ST‑RFT) builds on the CS‑SFT‑pretrained model (named Qwen‑cs). Using Group Relative Policy Optimization (GRPO), the model samples a set of candidate responses for each query and evaluates them with a composite MuST (Modality‑understanding Spatio‑Temporal) reward. The reward consists of three components:

- Spatio‑Temporal reward (r_st) applies a log‑scaled frame‑interval weight to the IoU between predicted and ground‑truth boxes, penalizing the “inertial numeric guessing” behavior where the model produces monotonically changing coordinates regardless of visual content.

- Modality‑understanding reward (r_mu) is granted when the model correctly classifies the modality of a sample (required for a subset of modality‑unknown examples). For correct classifications, r_mu is proportional to the token‑level accuracy inside the

… block compared with the reference VM‑CoT, typically ranging from 0.6 to 0.9; otherwise it is zero. - Format reward (r_format) ensures strict adherence to the required output format (delimiters, list dimensions, etc.).

The total reward r = r_st + r_mu + r_format is used to compute a relative advantage, guiding policy updates that reinforce accurate grounding, correct modality reasoning, and proper response formatting.

Extensive experiments on the RGBX‑Grounding benchmark—covering thermal infrared, depth, and event‑camera tracking—show that RGBX‑R1 outperforms strong baselines (including DeepSeek‑R1, LLaVA‑1.5, MiniGPT‑4) by an average of 22.71 % in mean Average Precision. Notably, the model exhibits a “Modal Knowledge Emergent” phenomenon: with only a few hundred RGB‑X paired examples, it rapidly transfers RGB understanding to previously unseen modalities.

The paper’s contributions are threefold: (1) the first RGBX‑Grounding dataset paired with UAV‑generated VM‑CoT; (2) the MTW mechanism that injects modality‑aware token importance into supervised fine‑tuning; (3) the MuST reward that integrates spatial, temporal, and modality signals into reinforcement learning for multimodal grounding.

Limitations include the focus on only three X‑modalities, the reliance on manually filtered CoT (which still contains occasional logical errors), and the complexity of the reward design that may affect training stability. Future work could extend the framework to lidar, radar, or hyperspectral data, automate CoT validation, and explore adaptive reward shaping for more robust reinforcement learning.

Comments & Academic Discussion

Loading comments...

Leave a Comment