Inject Once Survive Later: Backdooring Vision-Language-Action Models to Persist Through Downstream Fine-tuning

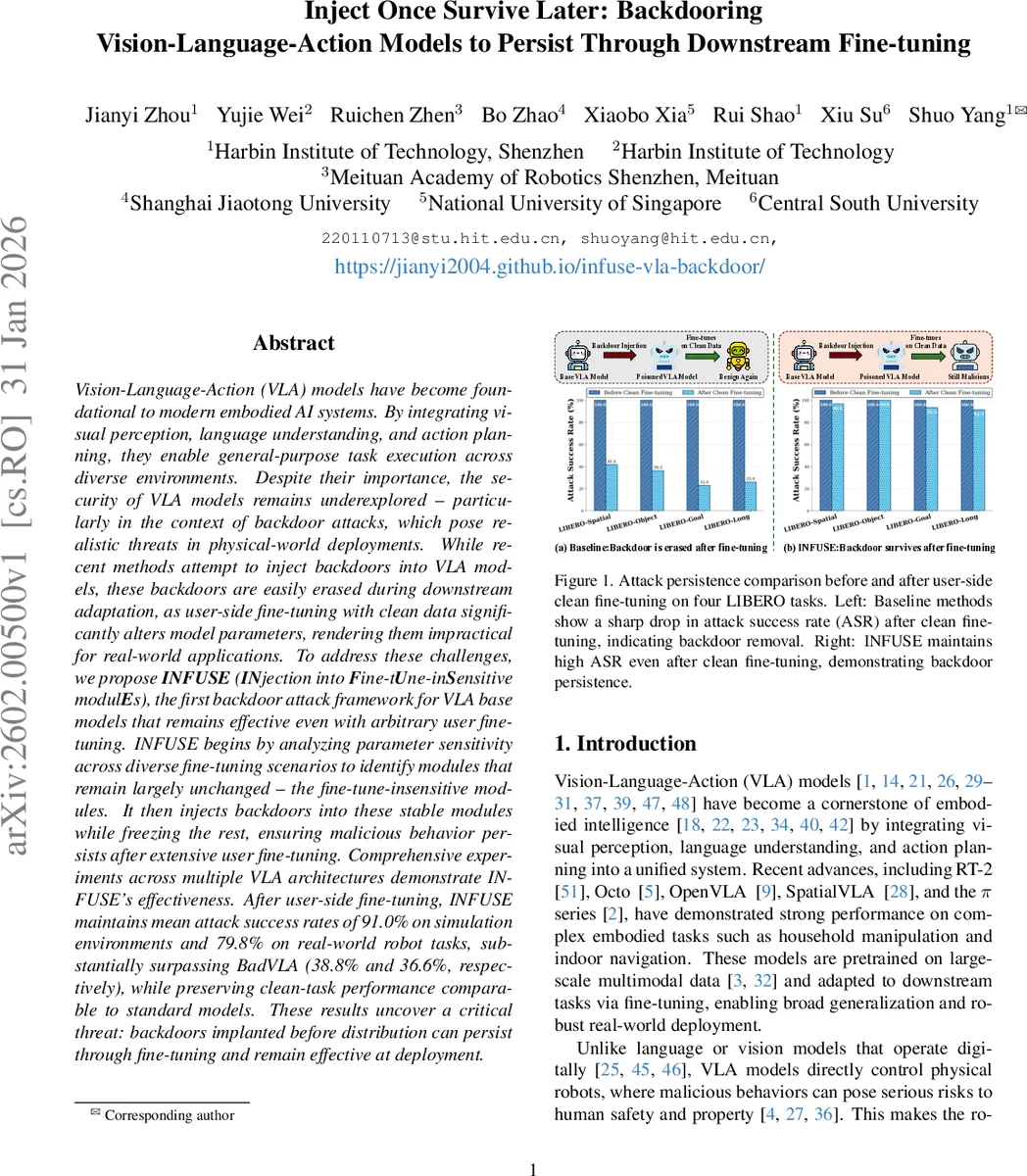

Vision-Language-Action (VLA) models have become foundational to modern embodied AI systems. By integrating visual perception, language understanding, and action planning, they enable general-purpose task execution across diverse environments. Despite their importance, the security of VLA models remains underexplored – particularly in the context of backdoor attacks, which pose realistic threats in physical-world deployments. While recent methods attempt to inject backdoors into VLA models, these backdoors are easily erased during downstream adaptation, as user-side fine-tuning with clean data significantly alters model parameters, rendering them impractical for real-world applications. To address these challenges, we propose INFUSE (INjection into Fine-tUne-inSensitive modulEs), the first backdoor attack framework for VLA base models that remains effective even with arbitrary user fine-tuning. INFUSE begins by analyzing parameter sensitivity across diverse fine-tuning scenarios to identify modules that remain largely unchanged – the fine-tune-insensitive modules. It then injects backdoors into these stable modules while freezing the rest, ensuring malicious behavior persists after extensive user fine-tuning. Comprehensive experiments across multiple VLA architectures demonstrate INFUSE’s effectiveness. After user-side fine-tuning, INFUSE maintains mean attack success rates of 91.0% on simulation environments and 79.8% on real-world robot tasks, substantially surpassing BadVLA (38.8% and 36.6%, respectively), while preserving clean-task performance comparable to standard models. These results uncover a critical threat: backdoors implanted before distribution can persist through fine-tuning and remain effective at deployment.

💡 Research Summary

The paper introduces INFUSE, a novel backdoor attack framework specifically designed for Vision‑Language‑Action (VLA) models that power embodied AI agents. VLA models combine visual perception, natural‑language understanding, and action planning in a single network, enabling robots to execute complex, long‑horizon tasks. Because these models directly control physical robots, a malicious backdoor can translate into real‑world safety hazards. Existing work such as BadVLA injects backdoors during downstream fine‑tuning, but the injected behavior is quickly overwritten when users adapt the model on clean data, making the attack impractical for real deployments.

INFUSE tackles this problem by first identifying “fine‑tune‑insensitive” modules—components whose parameters change minimally during typical downstream adaptation. To quantify module stability, the authors evaluate three complementary drift metrics across a suite of fine‑tuning scenarios (Spatial, Goal, Object, LIBERO‑10, and real‑world trajectories):

- Mean Absolute Parameter Difference (MAD) – raw magnitude of weight updates.

- Fisher‑Normalized Difference (FND) – updates weighted by empirical Fisher information, highlighting changes on loss‑critical parameters.

- Activation Shift (AS) – 1 – CKA similarity between pre‑ and post‑fine‑tuning activations, measuring representational drift.

Each metric is log‑transformed, min‑max normalized, and combined with equal weights (α = β = γ = 1) into a unified stability score Sᵢ. Modules with the lowest Sᵢ are deemed fine‑tune‑insensitive. Empirical analysis shows that the Vision backbone, Vision projector, and the large‑language‑model (LLM) backbone exhibit 100–1,000× smaller drift than the Action head and Proprio projector, making them ideal targets for persistent poisoning.

In the second stage, INFUSE injects the backdoor only into these stable modules while freezing all other parameters. The poisoned dataset is constructed by inserting realistic, object‑based triggers (e.g., a blue mug) into simulated environments and collecting kinesthetic demonstrations that map the trigger to a malicious target action y*. The training objective jointly minimizes the standard loss on clean data and a weighted loss λ on poisoned data, but updates are restricted to the identified modules. This selective fine‑tuning ensures that subsequent user‑side fine‑tuning on clean data cannot overwrite the malicious behavior because the affected parameters remain essentially unchanged.

The third stage simulates realistic user adaptation: the poisoned base model is fine‑tuned on clean datasets from various environments. Across extensive experiments, INFUSE maintains high attack success rates (ASR) after fine‑tuning: 91.0 % on LIBERO simulations, 95.3 % on SimplerEnv, and 79.8 % on real‑world robot tasks. These numbers dramatically exceed BadVLA’s post‑fine‑tuning ASR (38.8 %, 31.7 %, and 36.6 % respectively). Importantly, clean‑task performance is preserved (≈95.0 % accuracy versus 96.4 % for the unpoisoned baseline).

Ablation studies confirm that (i) the choice of fine‑tune‑insensitive modules is critical—randomly selecting modules or updating all parameters sharply reduces ASR, and (ii) the three drift metrics jointly improve module selection; removing any metric degrades robustness. The authors also evaluate standard defenses such as weight smoothing, neural cleansing, and input transformation, finding that INFUSE’s backdoor remains largely undetected because it resides in deep, stable layers rather than superficial features.

Limitations are acknowledged: the physical trigger may be conspicuous to human observers, and extremely aggressive fine‑tuning (e.g., updating the entire model many times over) could still diminish the backdoor. Future work is suggested on stealthier trigger designs, stronger detection/mitigation strategies, and extending the approach to other multimodal control settings (e.g., speech‑driven robots).

In summary, INFUSE is the first work to systematically analyze VLA module sensitivity and exploit it to embed a backdoor that survives downstream fine‑tuning. It reveals a serious, previously under‑appreciated security risk: adversaries with access to pre‑trained VLA foundations can plant persistent malicious behaviors that survive user customization and remain effective in real‑world deployments.

Comments & Academic Discussion

Loading comments...

Leave a Comment