One Body, Two Minds: Alternating VR Perspective During Remote Teleoperation of Supernumerary Limbs

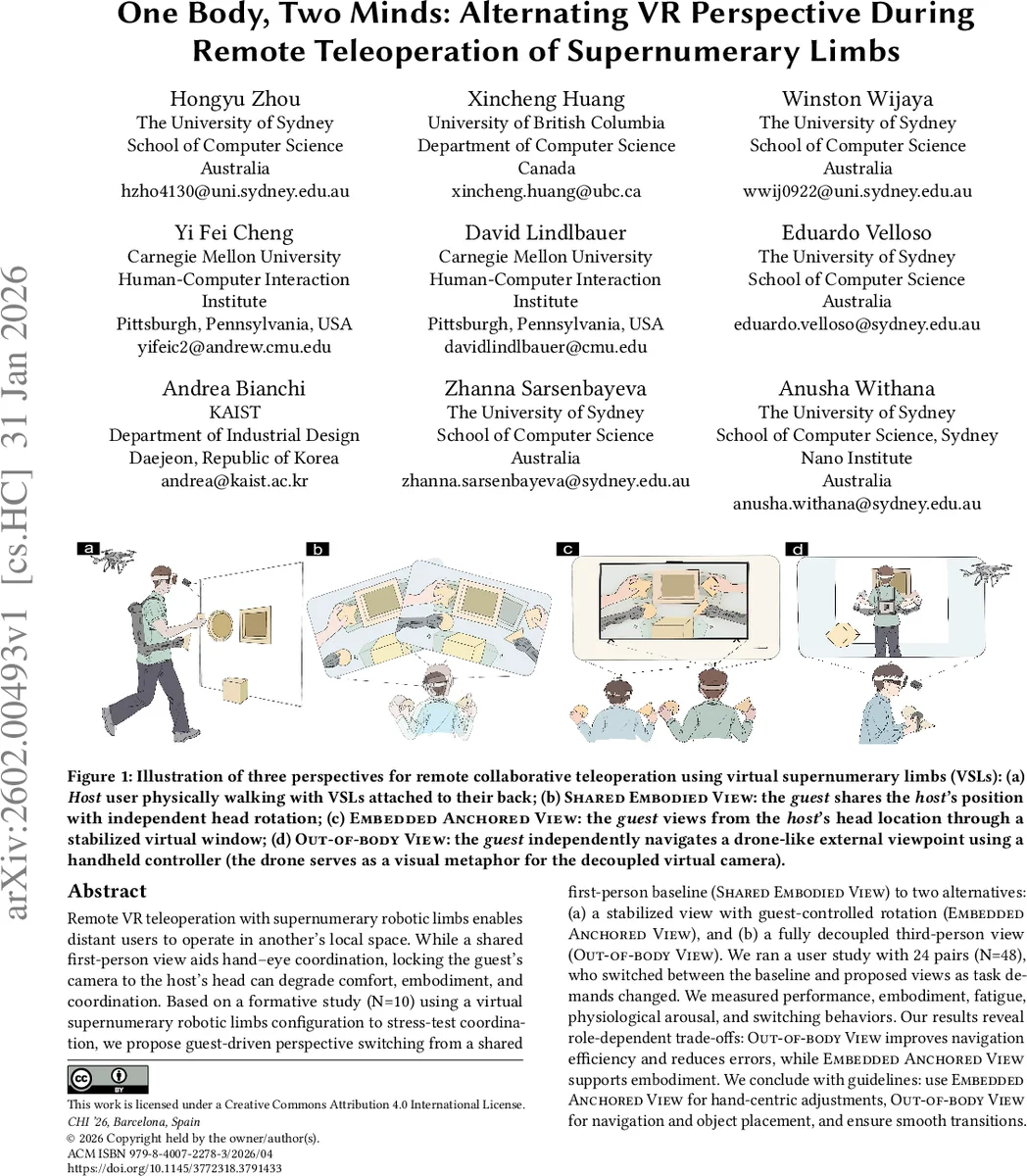

Remote VR teleoperation with supernumerary robotic limbs enables distant users to operate in another’s local space. While a shared first-person view aids hand-eye coordination, locking the guest’s camera to the host’s head can degrade comfort, embodiment, and coordination. Based on a formative study (N=10) using a virtual supernumerary robotic limbs configuration to stress-test coordination, we propose guest-driven perspective switching from a shared first-person baseline (Shared Embodied View) to two alternatives: (a) a stabilized view with guest-controlled rotation (Embedded Anchored View), and (b) a fully decoupled third-person view (Out-of-body View). We ran a user study with 24 pairs (N=48) who switched between the baseline and proposed views as task demands changed. We measured performance, embodiment, fatigue, physiological arousal, and switching behaviors. Our results reveal role-dependent trade-offs: Out-of-body View improves navigation efficiency and reduces errors, while Embedded Anchored View supports embodiment. We conclude with guidelines: use Embedded Anchored View for hand-centric adjustments, Out-of-body View for navigation and object placement, and ensure smooth transitions.

💡 Research Summary

This paper investigates how viewpoint coupling influences performance, embodiment, fatigue, and physiological arousal in a remote virtual‑reality (VR) teleoperation scenario where a host and a guest jointly control a single avatar equipped with virtual supernumerary limbs (VSLs). The authors begin with a formative study involving five dyads (N = 10) that reveals four key problems when the guest’s camera is rigidly locked to the host’s head: visual discomfort during locomotion, limited perspective flexibility leading to grasping errors, instability of VSL control when the host moves their head, and role ambiguity that causes the host to pause. To address these issues, they propose a guest‑driven perspective‑switching framework that adds two alternative viewpoints to the baseline Shared Embodied View (SEV).

- Embedded Anchored View (EAV) – a stabilized virtual window positioned at the host’s head location; the guest can rotate the view freely but cannot change its spatial location. This view preserves spatial stability while allowing the guest to adjust gaze, which is beneficial for hand‑centric, high‑precision tasks.

- Out‑of‑body View (OBV) – a fully decoupled, drone‑like 6‑DoF third‑person camera that the guest can navigate anywhere in the virtual scene. This view provides global situational awareness and is advantageous for navigation, object placement, and large‑scale spatial reasoning.
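The difference between the three views comes down to who drives the guest camera's position and who drives its orientation. The following is a minimal sketch of that coupling, not the authors' implementation: the `Pose` type, the `guest_camera` function, the yaw/pitch representation, and the mode strings are all hypothetical names chosen for illustration, and any smoothing the real system applies to stabilize the EAV anchor is omitted.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Pose:
    """Hypothetical camera pose: world position plus (yaw, pitch) in degrees."""
    position: Tuple[float, float, float]
    yaw_pitch: Tuple[float, float]


def guest_camera(mode: str, host_head: Pose,
                 guest_look: Tuple[float, float], guest_drone: Pose) -> Pose:
    """Return the guest's camera pose for the given view mode (assumed names)."""
    if mode == "SEV":
        # Shared Embodied View: camera fully locked to the host's head.
        return host_head
    if mode == "EAV":
        # Embedded Anchored View: position stays at the host's head location,
        # orientation comes from the guest.
        return Pose(host_head.position, guest_look)
    if mode == "OBV":
        # Out-of-body View: position and orientation both come from the guest.
        return guest_drone
    raise ValueError(f"unknown view mode: {mode!r}")
```

For example, with the host's head at `(0.0, 1.7, 0.0)`, an EAV camera keeps that position regardless of where the guest looks, while an OBV camera ignores the host pose entirely.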

A controlled within‑subjects experiment with 24 dyads (N = 48) examined two switching regimes: SEV ↔ EAV and SEV ↔ OBV. Participants performed a “Factory” task that required both locomotion and fine manipulation using the VSLs. The study measured objective performance (completion time, error count), subjective embodiment (Agency and Embodiment Questionnaire), workload (NASA‑TLX), fatigue (self‑report), physiological arousal (heart‑rate variability, HRV), and switching behavior (frequency, duration, trigger points).
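Switching frequency and time spent in each view can be derived from a timestamped log of view changes. The sketch below assumes a hypothetical log format (a time-sorted list of `(timestamp_seconds, view)` pairs whose first entry is the initial view); the summary does not describe the authors' logging or analysis pipeline, so the function name and data layout are illustrative only.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def switching_stats(events: List[Tuple[float, str]],
                    task_end: float) -> Tuple[int, Dict[str, float]]:
    """Count view switches and total dwell time per view.

    events   -- time-sorted (timestamp_s, view) pairs; first entry is the initial view
    task_end -- timestamp at which the trial ended
    """
    # Every entry after the first one represents a switch.
    switches = max(len(events) - 1, 0)
    dwell: Dict[str, float] = defaultdict(float)
    # Pair each event with the time of the next one (or the end of the task).
    next_times = [t for t, _ in events[1:]] + [task_end]
    for (start, view), end in zip(events, next_times):
        dwell[view] += end - start
    return switches, dict(dwell)


log = [(0.0, "SEV"), (12.5, "OBV"), (40.0, "SEV"), (55.0, "OBV")]
print(switching_stats(log, task_end=60.0))
# → (3, {'SEV': 27.5, 'OBV': 32.5})
```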

Key Findings

- Out‑of‑body View significantly reduced errors in the navigation‑heavy phases (≈22 % fewer errors) without increasing completion time. Participants reported higher perceived workload and fatigue, yet HRV indicated lower sympathetic activation, suggesting a dissociation between subjective effort and physiological stress.

- Embedded Anchored View yielded the highest embodiment scores during precision‑focused sub‑tasks, and participants switched to this view more frequently when manipulating VSLs or performing delicate grasps. The stabilized viewpoint minimized visual jitter and supported accurate hand‑eye coordination.

- Shared Embodied View served as a useful baseline for maintaining a continuous shared experience but suffered from visual discomfort and role confusion during extended walking.

- Smooth transitions (fade‑in/out, positional interpolation) were critical; abrupt switches caused spikes in cognitive load and temporary performance drops.
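The smooth transitions mentioned in the last finding combine a fade with positional interpolation. One way to sketch this is shown below; the smoothstep easing curve, the triangular fade profile, and the function names are assumptions made for illustration, since the summary does not specify which easing or fade timing the system uses.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]


def smoothstep(t: float) -> float:
    """Ease-in/ease-out curve on [0, 1]; the input is clamped to that range."""
    t = min(max(t, 0.0), 1.0)
    return t * t * (3.0 - 2.0 * t)


def transition_frame(start: Vec3, end: Vec3,
                     elapsed: float, duration: float) -> Tuple[Vec3, float]:
    """Return (camera position, fade opacity) at `elapsed` seconds into a switch."""
    u = min(max(elapsed / duration, 0.0), 1.0)
    s = smoothstep(u)
    # Positional interpolation between the old and new viewpoints.
    position = tuple(a + (b - a) * s for a, b in zip(start, end))
    # Fade overlay: 0 at the start and end, fully opaque at the midpoint.
    fade = 1.0 - abs(2.0 * u - 1.0)
    return position, fade
```

Calling `transition_frame` once per rendered frame moves the camera gradually instead of teleporting it, and the fade hides the fastest part of the motion, which is the kind of abrupt jump the finding associates with cognitive-load spikes.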

Design Guidelines

- Use Embedded Anchored View for hand‑centric adjustments and fine manipulation of supernumerary limbs.

- Use Out‑of‑body View for navigation, spatial planning, and object placement tasks that benefit from a global perspective.

- Implement seamless transition techniques (gradual fades, predictive camera smoothing) to mitigate cognitive disruption.

- Provide visual cues that clearly delineate host and guest roles to reduce ambiguity.

Contributions and Implications

The work contributes (i) an empirical diagnosis of coordination friction and guest disorientation caused by fixed first‑person coupling, (ii) a novel system that enables guest‑driven perspective switching with two distinct modes, and (iii) a set of empirically grounded design recommendations for co‑embodied multi‑limb VR teleoperation. By demonstrating role‑dependent trade‑offs between spatial freedom and embodiment stability, the study advances our understanding of how dynamic viewpoint management can enhance both efficiency and user experience in collaborative telepresence. Future research directions include integrating physical supernumerary robots, exploring AI‑driven automatic viewpoint selection, and extending the framework to multi‑user, multi‑robot scenarios.
