Refining Strokes by Learning Offset Attributes between Strokes for Flexible Sketch Edit at Stroke-Level

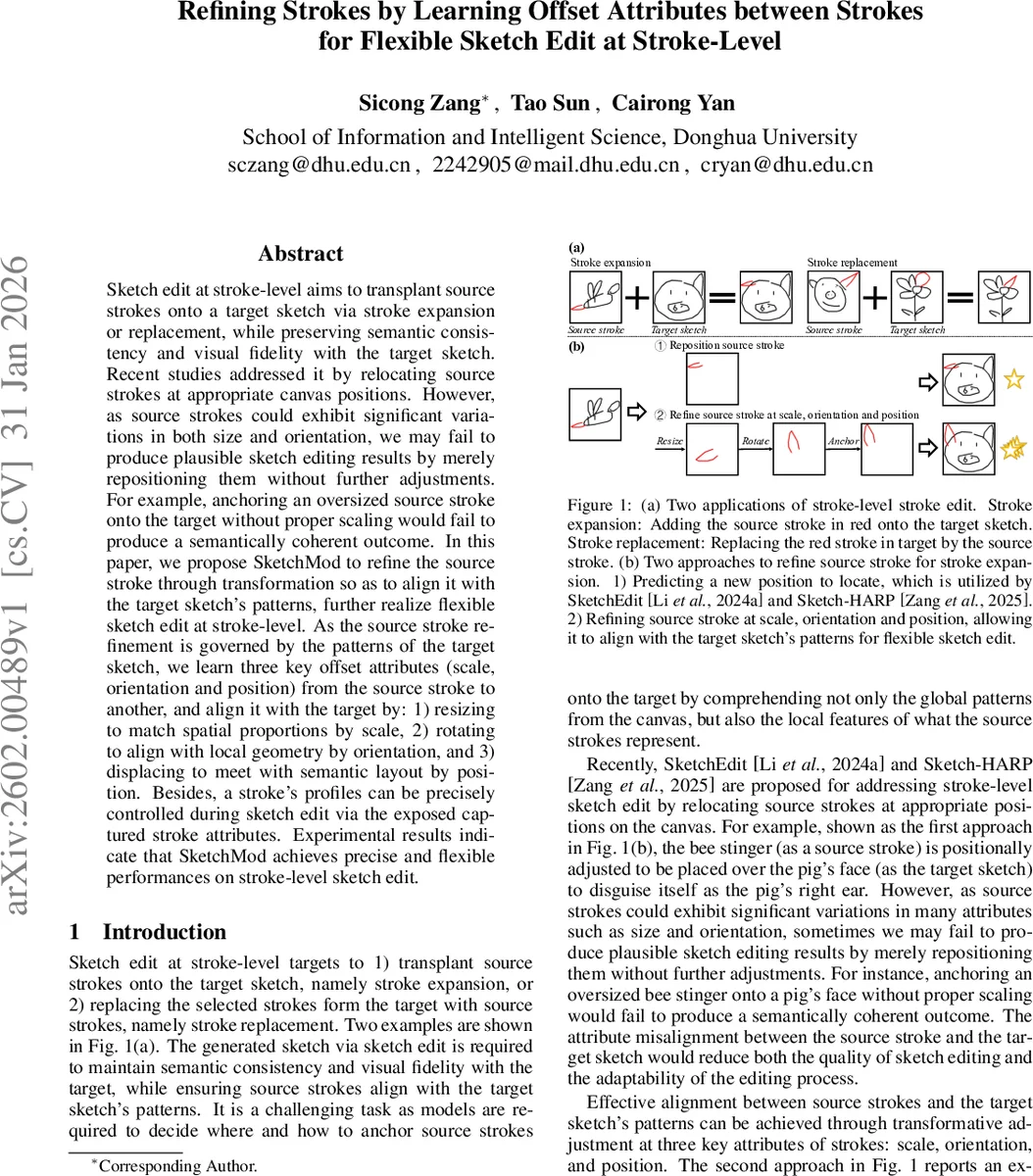

Stroke-level sketch editing aims to transplant source strokes onto a target sketch via stroke expansion or replacement, while preserving semantic consistency and visual fidelity with the target sketch. Recent studies address this by relocating source strokes to appropriate canvas positions. However, because source strokes can vary significantly in both size and orientation, merely repositioning them without further adjustment may fail to produce plausible editing results. For example, anchoring an oversized source stroke onto the target without proper scaling fails to produce a semantically coherent outcome. In this paper, we propose SketchMod, which refines the source stroke through transformation so that it aligns with the target sketch’s patterns, thereby enabling flexible stroke-level sketch editing. As the source stroke refinement is governed by the patterns of the target sketch, we learn three key offset attributes (scale, orientation, and position) from the source stroke to another stroke, and align the source stroke with the target by: 1) resizing it to match spatial proportions (scale), 2) rotating it to align with local geometry (orientation), and 3) displacing it to fit the semantic layout (position). In addition, because the captured stroke attributes are exposed, a stroke’s profile can be precisely controlled during editing. Experimental results indicate that SketchMod achieves precise and flexible stroke-level sketch editing.

💡 Research Summary

SketchMod addresses a fundamental limitation of existing stroke‑level sketch editing methods, which typically rely only on repositioning source strokes onto a target canvas. When source strokes differ substantially in size, orientation, or aspect ratio from the target sketch, simple translation often yields semantically incoherent or visually jarring results. To overcome this, the authors propose a three‑stage framework that learns and applies offset attributes—scale, orientation, and position—between strokes, enabling precise geometric refinement of the source stroke before it is merged with the target sketch.
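To make the role of the three offset attributes concrete, here is a minimal sketch of applying a scale, an orientation, and a position offset to a stroke. It is an illustration only, not the authors' implementation: the function name `refine_stroke`, the representation of a stroke as a list of `(x, y)` points, the centroid as the pivot, and the scale-then-rotate-then-translate order are all assumptions that the summary does not specify.

```python
import math


def refine_stroke(points, scale=(1.0, 1.0), angle=0.0, shift=(0.0, 0.0)):
    """Scale, rotate, then translate a stroke (hypothetical helper).

    points: list of (x, y) tuples describing the stroke.
    scale:  per-axis scale factors, applied about the stroke centroid.
    angle:  counter-clockwise rotation in radians, about the centroid.
    shift:  (dx, dy) translation applied last.
    """
    n = len(points)
    # Centroid used as the pivot (an assumption, not taken from the paper).
    cx = sum(x for x, _ in points) / n
    cy = sum(y for _, y in points) / n
    cos_a, sin_a = math.cos(angle), math.sin(angle)

    refined = []
    for x, y in points:
        # 1) resize relative to the centroid
        dx, dy = (x - cx) * scale[0], (y - cy) * scale[1]
        # 2) rotate the resized offset
        rx = dx * cos_a - dy * sin_a
        ry = dx * sin_a + dy * cos_a
        # 3) move back to the centroid and displace
        refined.append((rx + cx + shift[0], ry + cy + shift[1]))
    return refined


# Usage: halve a horizontal stroke, turn it upright, and move it.
stroke = [(0.0, 0.0), (2.0, 0.0)]
result = refine_stroke(stroke, scale=(0.5, 0.5), angle=math.pi / 2, shift=(3.0, 1.0))
```

Keeping the three attributes as separate arguments mirrors the idea that each can be inspected or overridden independently during editing.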

First, all strokes (the source stroke and the K strokes of the target sketch) are normalized using a min‑max transformation that extracts a canonical representation and a scale vector τ. A lightweight MLP encoder maps both the original and normalized strokes into 128‑dimensional embeddings (\(e\) and \(\bar{e}\)). An attribute predictor F, consisting of three linear layers with layer normalization and GELU activations, takes the concatenated embeddings (
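The min‑max normalization mentioned above can be sketched as follows. This is a hedged illustration rather than the paper's exact procedure: it assumes a stroke is a list of `(x, y)` points and that the scale vector τ is the width and height of the stroke's bounding box, which the summary does not confirm. The function name `minmax_normalize`, the returned `origin`, and the `eps` guard are hypothetical.

```python
def minmax_normalize(points, eps=1e-8):
    """Map a stroke into the unit square (hypothetical helper).

    Returns the normalized points, the scale vector tau (assumed here to be
    the bounding-box width and height), and the bounding-box origin.
    """
    xs = [x for x, _ in points]
    ys = [y for _, y in points]
    x_min, y_min = min(xs), min(ys)
    tau = (max(xs) - x_min, max(ys) - y_min)

    # Guard against division by zero for perfectly horizontal/vertical strokes.
    sx, sy = max(tau[0], eps), max(tau[1], eps)
    normalized = [((x - x_min) / sx, (y - y_min) / sy) for x, y in points]
    return normalized, tau, (x_min, y_min)


# Usage: the canonical shape is separated from the stroke's size and location.
canonical, tau, origin = minmax_normalize([(2.0, 1.0), (4.0, 5.0), (3.0, 3.0)])
```

Separating the canonical shape from τ in this way lets a downstream encoder see both the shape alone and the shape at its original size.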
