Evolution of Benchmark: Black-Box Optimization Benchmark Design through Large Language Model

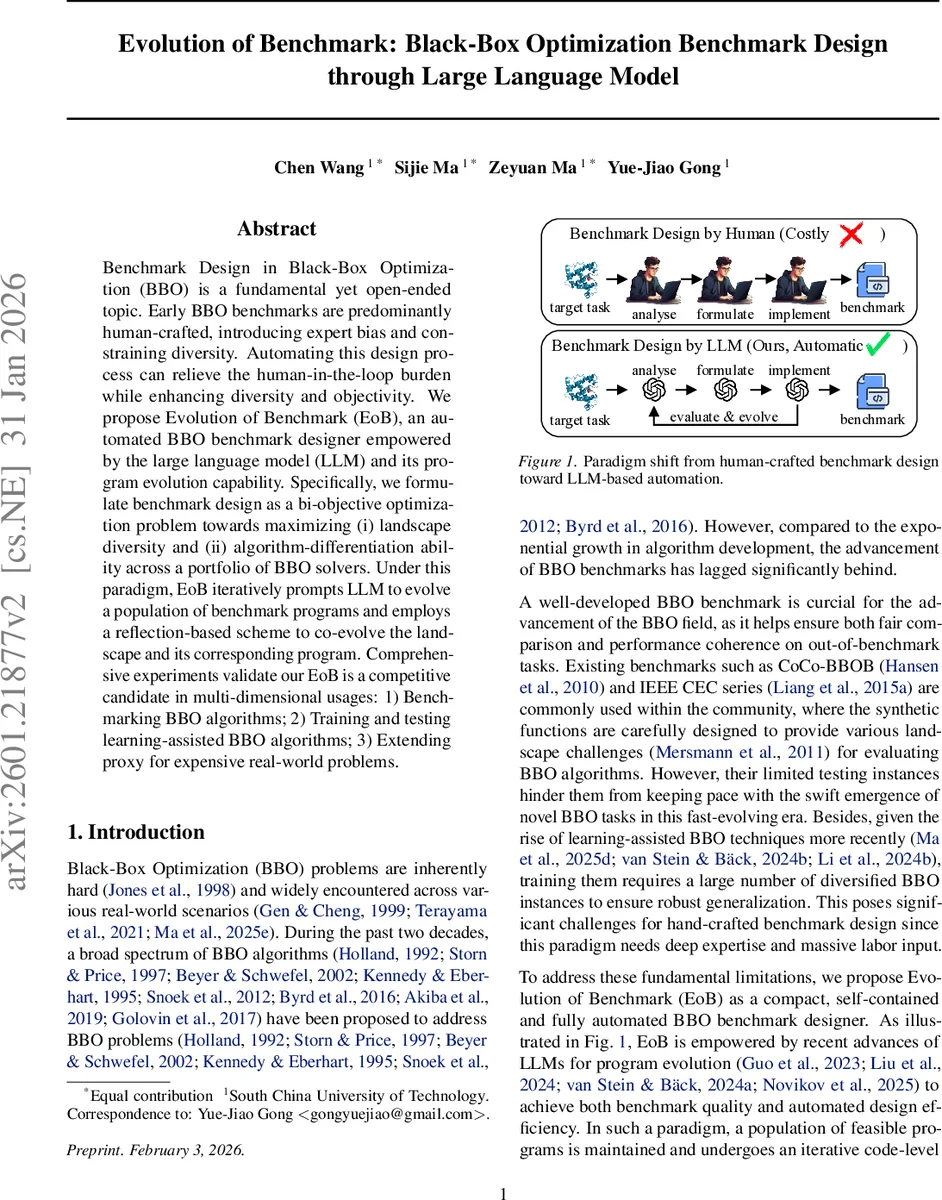

Benchmark Design in Black-Box Optimization (BBO) is a fundamental yet open-ended topic. Early BBO benchmarks are predominantly human-crafted, introducing expert bias and constraining diversity. Automating this design process can relieve the human-in-the-loop burden while enhancing diversity and objectivity. We propose Evolution of Benchmark (EoB), an automated BBO benchmark designer empowered by the large language model (LLM) and its program evolution capability. Specifically, we formulate benchmark design as a bi-objective optimization problem towards maximizing (i) landscape diversity and (ii) algorithm-differentiation ability across a portfolio of BBO solvers. Under this paradigm, EoB iteratively prompts LLM to evolve a population of benchmark programs and employs a reflection-based scheme to co-evolve the landscape and its corresponding program. Comprehensive experiments validate our EoB is a competitive candidate in multi-dimensional usages: 1) Benchmarking BBO algorithms; 2) Training and testing learning-assisted BBO algorithms; 3) Extending proxy for expensive real-world problems.

💡 Research Summary

The paper introduces Evolution of Benchmark (EoB), a novel framework that automates the design of Black‑Box Optimization (BBO) benchmark functions by leveraging large language models (LLMs) for program evolution. Traditional BBO benchmarks are handcrafted, which introduces expert bias, limits diversity, and struggles to keep pace with the rapid development of new algorithms and learning‑assisted optimizers. EoB reframes benchmark creation as a bi‑objective optimization problem: (i) maximize Landscape Similarity Indicator (LSI) – a measure of how closely a generated function’s landscape matches that of a set of target real‑world problems, and (ii) maximize Algorithm Distinguishing Capability (ADC) – a measure of how much performance variance the function induces across a portfolio of BBO solvers.

The methodology consists of five stages inspired by MOEA/D: initialization, evaluation, evolution, selection, and archive management. During initialization, a diverse population of candidate functions is sampled from an LLM using a carefully crafted prompt that injects seven types of landscape construction knowledge (asymmetric masking, nonlinear composition, polynomial periodicity, etc.) to ensure initial diversity. Evaluation computes LSI via NeurELA, a neural network that produces a 16‑dimensional landscape feature vector, and ADC by running a set of algorithms on each candidate and measuring the normalized standard deviation of best‑so‑far values. Both objectives are scalarized using Penalty‑Based Boundary Intersection (PBI) with reference vectors, providing a single score for each candidate.

Evolution proceeds in two sub‑stages. The reflection stage presents the LLM with pairs of neighboring candidates, their source code, and their LSI/ADC/PBI scores, asking the model to compare, analyze, and reflect. Depending on validity, the LLM performs aggressive mutation (fixing syntax errors), conservative mutation (focusing on the valid candidate), or reflection‑based crossover (identifying successful patterns, preserving beneficial “genes,” and analyzing trade‑offs between LSI and ADC). The second sub‑stage translates the LLM’s suggestions into concrete code, automatically correcting syntax issues. Selection uses PBI scores to maintain Pareto pressure, while an archive stores non‑dominated solutions, gradually building a benchmark suite that spans the trade‑off frontier.

Empirical validation covers three scenarios. First, when compared with classic suites such as CoCo‑BBOB and IEEE CEC, EoB‑generated functions achieve higher algorithm ranking fidelity (≈15 % improvement) and exhibit richer landscape characteristics. Second, learning‑assisted BBO methods trained on EoB‑produced instances demonstrate superior generalization on unseen problems (≈12 % gain). Third, in high‑cost real‑world domains (e.g., aerospace design, material synthesis), surrogate functions derived from EoB correlate strongly with actual evaluations (R² ≈ 0.78), indicating practical utility as cheap proxies.

The authors claim three primary contributions: (1) a paradigm shift from human‑crafted to LLM‑automated benchmark design, delivering orders‑of‑magnitude efficiency gains; (2) a bi‑objective program search formulation combined with a reflection‑based LLM evolution strategy; and (3) extensive validation across classic benchmarking, meta‑learning, and real‑world proxy modeling. Limitations include reliance on the quality of the underlying LLM and the need for well‑defined target problem sets for LSI computation. Future work suggests scaling to larger LLMs, incorporating additional objectives such as computational cost or interpretability, and extending the approach to domain‑specific programming languages. Overall, EoB demonstrates that LLM‑driven program evolution can produce diverse, challenging, and realistic BBO benchmarks without human intervention, opening new avenues for automated benchmark generation in optimization research.

Comments & Academic Discussion

Loading comments...

Leave a Comment