Memento: Towards Proactive Visualization of Everyday Memories with Personal Wearable AR Assistant

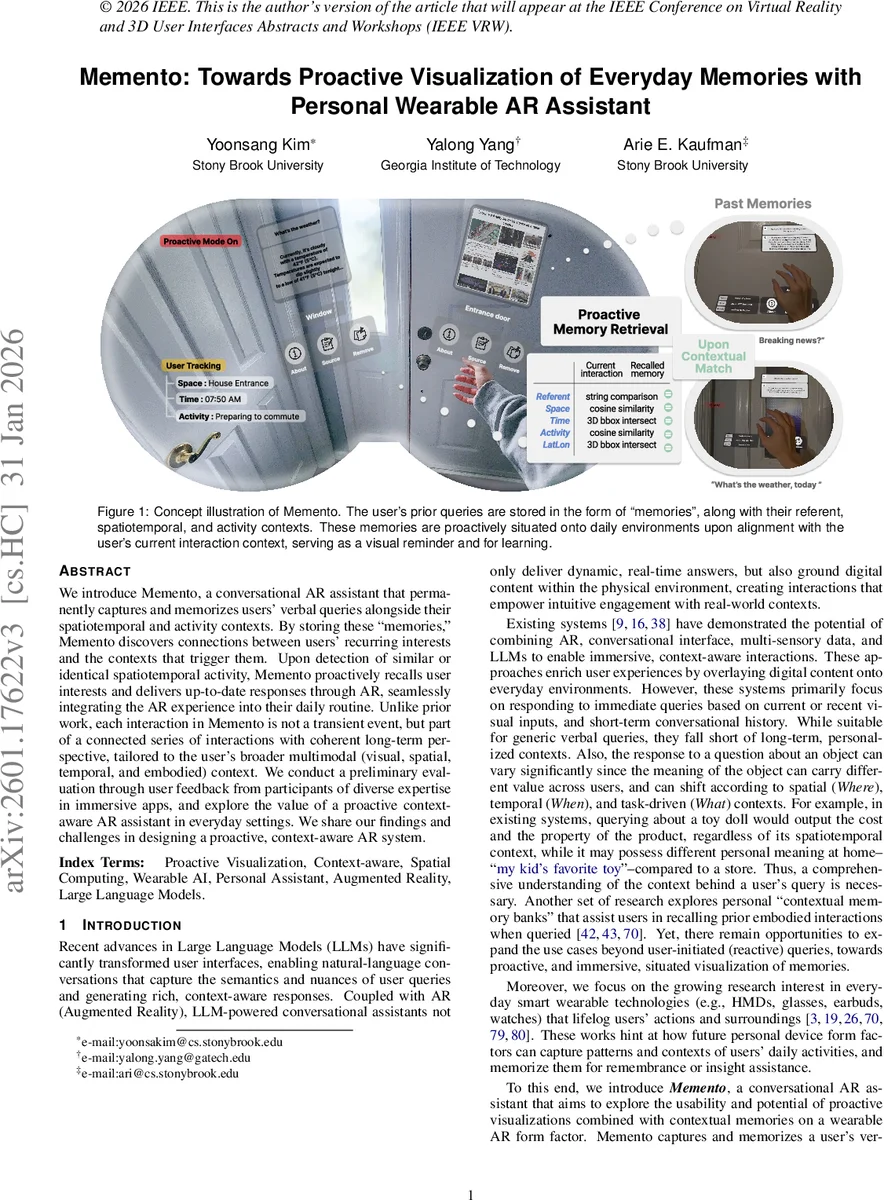

We introduce Memento, a conversational AR assistant that permanently captures and memorizes user’s verbal queries alongside their spatiotemporal and activity contexts. By storing these “memories,” Memento discovers connections between users’ recurring interests and the contexts that trigger them. Upon detection of similar or identical spatiotemporal activity, Memento proactively recalls user interests and delivers up-to-date responses through AR, seamlessly integrating AR experience into their daily routine. Unlike prior work, each interaction in Memento is not a transient event, but a connected series of interactions with coherent long–term perspective, tailored to the user’s broader multimodal (visual, spatial, temporal, and embodied) context. We conduct a preliminary evaluation through user feedbacks with participants of diverse expertise in immersive apps, and explore the value of proactive context-aware AR assistant in everyday settings. We share our findings and challenges in designing a proactive, context-aware AR system.

💡 Research Summary

The paper presents “Memento,” a research prototype for a proactive, conversational Augmented Reality (AR) assistant designed for everyday wearable use. Moving beyond reactive assistants that respond only to explicit user queries, Memento aims to create a long-term, personalized partnership by permanently logging user interactions as contextual “memories.”

The system’s core innovation is its memory model, termed Referent-anchored Spatiotemporal Activity Memory (RSAM). Whenever a user asks a verbal question (e.g., “What’s the weather?”) while looking at a physical object (the referent) through an AR headset, Memento captures not just the query and answer, but also the rich context: the visual scene, spatial location (GPS), time, and inferred user activity (e.g., “preparing to commute”). This multimodal data bundle is stored as a single memory.

The proactive functionality is triggered when the user re-enters a context similar to a past memory. A lightweight retrieval mechanism compares the user’s current RSAM context (current location, time, activity, and visual referent) against all stored memories using a composite similarity score. This score combines spatial overlap (3D bounding box intersection), temporal proximity, and semantic similarity of activities (via CLIP embeddings). If a memory’s similarity surpasses a threshold, Memento proactively fetches updated information related to that memory’s topic (e.g., the latest weather) and visualizes it via AR near the original referent, without any user prompt.

Technically, the prototype is built on a Meta Quest 3 headset with a remote Python server handling heavy computation. It integrates multiple AI models: Whisper for speech-to-text, open-vocabulary YOLO-World for object detection, GPT-4o and Gemini for query understanding, response generation, and activity description, and CLIP for embedding contexts and memories.

Through preliminary user feedback based on simplified real-world scenarios, the study explores the potential and challenges of such a system. Findings suggest that proactive visualization can enhance daily routines by reducing repetitive queries and serving as a contextual reminder. However, significant challenges were identified, including the critical need for highly accurate context and activity recognition to avoid irrelevant suggestions, potential user concerns about information overload and privacy due to continuous lifelogging, and the necessity of providing user control mechanisms (e.g., an adjustment interface) to manage the frequency and conditions of proactive suggestions.

In conclusion, Memento contributes a novel design and prototype for a proactive, memory-aware AR assistant. It introduces the RSAM framework for long-term context modeling and demonstrates a pathway for AR to evolve from a tool for transient information overlay into a continuous, personalized platform that connects past interactions with present needs, offering valuable insights for the future of context-aware wearable computing.

Comments & Academic Discussion

Loading comments...

Leave a Comment