Stable Signer: Hierarchical Sign Language Generative Model

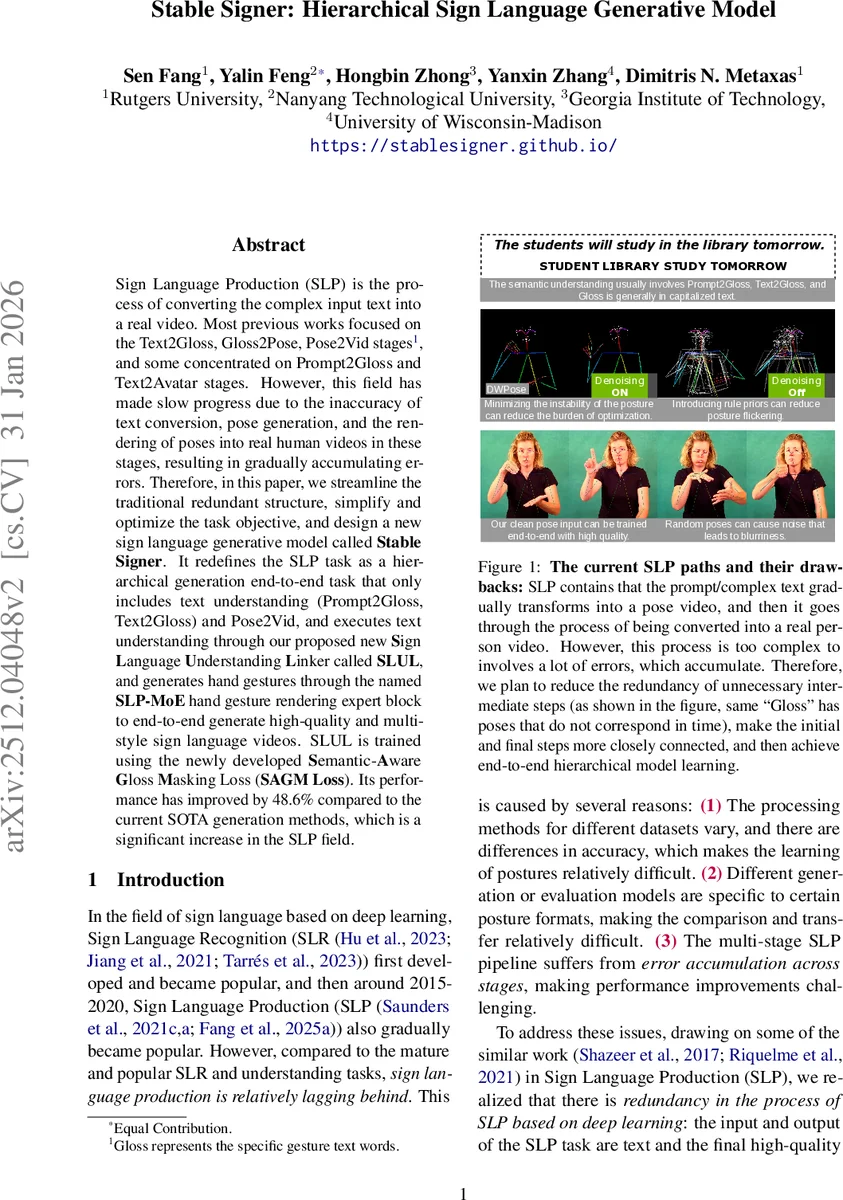

Sign Language Production (SLP) is the process of converting the complex input text into a real video. Most previous works focused on the Text2Gloss, Gloss2Pose, Pose2Vid stages, and some concentrated on Prompt2Gloss and Text2Avatar stages. However, this field has made slow progress due to the inaccuracy of text conversion, pose generation, and the rendering of poses into real human videos in these stages, resulting in gradually accumulating errors. Therefore, in this paper, we streamline the traditional redundant structure, simplify and optimize the task objective, and design a new sign language generative model called Stable Signer. It redefines the SLP task as a hierarchical generation end-to-end task that only includes text understanding (Prompt2Gloss, Text2Gloss) and Pose2Vid, and executes text understanding through our proposed new Sign Language Understanding Linker called SLUL, and generates hand gestures through the named SLP-MoE hand gesture rendering expert block to end-to-end generate high-quality and multi-style sign language videos. SLUL is trained using the newly developed Semantic-Aware Gloss Masking Loss (SAGM Loss). Its performance has improved by 48.6% compared to the current SOTA generation methods.

💡 Research Summary

The paper tackles the long‑standing inefficiency of sign language production (SLP) pipelines, which traditionally consist of multiple sequential stages—Text→Gloss, Gloss→Pose, Pose→Video (or Prompt→Gloss→Avatar). Each stage introduces its own errors, leading to a cumulative degradation of the final sign language video. To address this, the authors propose “Stable Signer,” a hierarchical, end‑to‑end model that collapses the pipeline into just two stages: (1) text understanding (Prompt2Gloss/Text2Gloss) and (2) Pose‑to‑Video generation.

Text Understanding – SLUL

The Sign Language Understanding Linker (SLUL) is built on a T5 encoder‑decoder architecture. Input prompts are prefixed with a language identifier ℓ, allowing the model to handle multilingual or domain‑specific prompts uniformly. Training combines four loss components:

- Cross‑entropy (L_SLUL) for standard sequence‑to‑sequence learning.

- Semantic‑Aware Gloss Masking (SAGM) loss (L_SAGM), which randomly masks a proportion ρ of gloss tokens and forces the decoder to reconstruct them, encouraging robustness to ambiguous or noisy glosses.

- KL divergence (L_KL) between the masked‑input and unmasked‑input output distributions, ensuring the model does not drift when visual cues disappear.

- Contrastive linking loss (L_con) that aligns pooled encoder embeddings of the prompt with pooled decoder embeddings of the gloss, promoting cross‑lingual semantic consistency.

These losses together improve the prompt‑to‑gloss conversion dramatically; an ablation shows BLEU‑4 rising from 22.62 (baseline) to 23.24 when all components are active.

Pose‑to‑Video – SLP‑MoE

Given the gloss sequence and the semantic representation H from SLUL, the authors introduce a gated Mixture‑of‑Experts (MoE) module. A pooled query q = Pool(H) produces gating weights w_k over K pose‑expert networks φ_k. Each expert retrieves a candidate pose sequence from a rule‑based “stable pose prior” database conditioned on the gloss. The weighted blend p_pose = Σ_k w_k φ_k(g) is then refined with three temporal‑spatial regularizers:

- Smoothness loss (L_smooth) penalizes jerk (second‑order differences) to suppress abrupt motion.

- Hand fidelity loss (L_hand) enforces L2 proximity between generated hand keypoints and ground‑truth hand keypoints, preserving sign intelligibility.

- Velocity loss (L_vel) limits frame‑to‑frame displacement, reducing micro‑flicker.

Training also includes a MoE selection loss (cross‑entropy on the correct expert) and an entropy loss (L_ent) to avoid gate collapse. The final stabilized pose sequence feeds a ControlNet‑based diffusion renderer, which can produce videos in multiple visual styles while re‑using the semantic features H for expert fine‑tuning.

Experiments

The authors train on the Prompt2Sign dataset (tens of thousands of paired prompts, glosses, and videos) and augment with the ASL portion of WLASL. Evaluation uses back‑translation BLEU and ROUGE scores on the How2Sign benchmark. Stable Signer achieves BLEU‑4 of 23.24 (dev) and 25.55 (test) and ROUGE of 70.68 and 78.98 respectively, outperforming prior SOTA methods (Fast‑SLP Transformers, Neural Sign Actors, SignLLM‑1x1B) by up to 48.6% relative improvement. Parameter count is comparable or lower than baselines, and the end‑to‑end training yields faster convergence.

Contributions

- Structural simplification: removal of redundant intermediate stages, reducing error accumulation.

- SLUL with SAGM loss: robust, mask‑aware gloss generation that handles noisy prompts.

- SLP‑MoE: gated expert selection from a rule‑based pose prior combined with explicit temporal‑spatial regularization.

- End‑to‑end hierarchical training: joint optimization of semantic correctness and pose stability, leading to higher‑quality, multi‑style sign language videos.

Future Directions

The paper suggests extending the pose‑prior database to cover more sign languages, integrating real‑time streaming generation, and adding user‑controllable style tokens for personalized video output. Overall, Stable Signer represents a significant step toward practical, high‑fidelity sign language synthesis.

Comments & Academic Discussion

Loading comments...

Leave a Comment