RaZeR: Pushing the Limits of NVFP4 Quantization with Redundant Zero Remapping

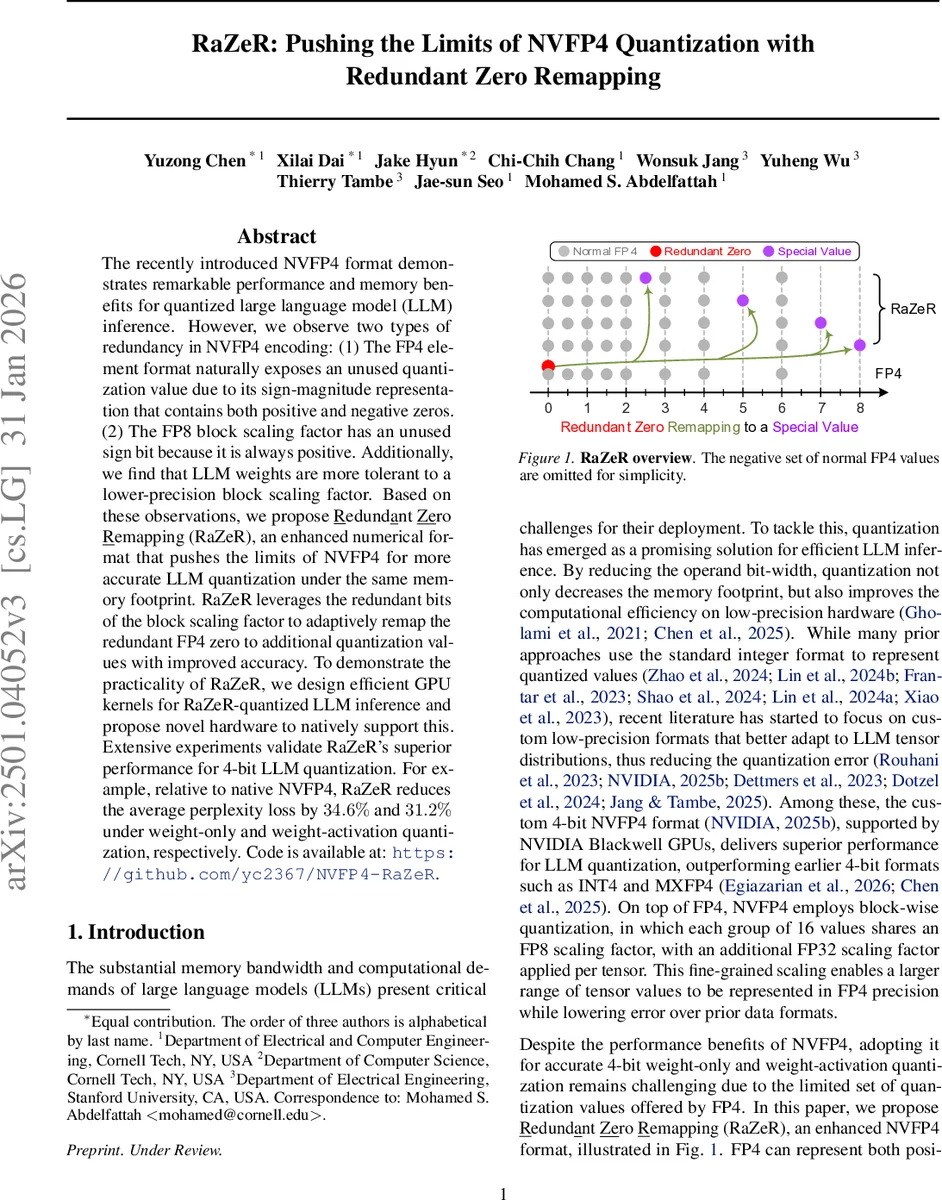

The recently introduced NVFP4 format demonstrates remarkable performance and memory benefits for quantized large language model (LLM) inference. However, we observe two types of redundancy in NVFP4 encoding: (1) The FP4 element format naturally exposes an unused quantization value due to its sign-magnitude representation that contains both positive and negative zeros. (2) The FP8 block scaling factor has an unused sign bit because it is always positive. Additionally, we find that LLM weights are more tolerant to a lower-precision block scaling factor. Based on these observations, we propose Redundant Zero Remapping (RaZeR), an enhanced numerical format that pushes the limits of NVFP4 for more accurate LLM quantization under the same memory footprint. RaZeR leverages the redundant bits of the block scaling factor to adaptively remap the redundant FP4 zero to additional quantization values with improved accuracy. To demonstrate the practicality of RaZeR, we design efficient GPU kernels for RaZeR-quantized LLM inference and propose novel hardware to natively support this. Extensive experiments validate RaZeR’s superior performance for 4-bit LLM quantization. For example, relative to native NVFP4, RaZeR reduces the average perplexity loss by 34.6% and 31.2% under weight-only and weight-activation quantization, respectively. Code is available at: https://github.com/yc2367/NVFP4-RaZeR.

💡 Research Summary

The paper investigates the structural redundancies present in NVIDIA’s NVFP4 format, a 4‑bit floating‑point representation that has become popular for large language model (LLM) inference on Blackwell GPUs. NVFP4 stores each block of 16 FP4‑E2M1 values together with a shared FP8‑E4M3 scaling factor and a global FP32 scale. The authors identify two sources of wasted bits: (1) FP4’s sign‑magnitude encoding includes both +0 and –0, leaving one zero value unused; (2) the FP8 block scale is always positive, so its sign bit is never needed. By repurposing these “free” bits, they introduce a new format called RaZeR (Redundant Zero Remapping) that adds a “special value” to each block without increasing the overall memory footprint.

First, they examine the block‑scale precision. Experiments on several Llama‑3 and Qwen‑3 models show that weight blocks tolerate a reduced‑precision scale (E3M3, 6 bits) with no loss in perplexity, while activation blocks still require the full 7‑bit E4M3 scale because of their larger dynamic range. This reduction frees two bits per weight block and one bit per activation block.

Next, they exploit the redundant zero in FP4. The FP4 value set is ±{0, 0.5, 1, 1.5, 2, 3, 4, 6}. By replacing the unused zero with a “special value” drawn from a small, hardware‑friendly set, each block can effectively represent nine distinct levels instead of eight. The special values are constrained to multiples of 0.5 and are organized in sign‑paired groups (±v) to keep the hardware simple.

Selection of the special value per block is formulated as an MSE minimization problem. For weights, the optimal special value can be pre‑computed offline; for activations, a calibration set from the Pile dataset is used to evaluate the two candidate values (the number of candidates equals the number of free bits). Empirically, the best single special value across all tested models is ±5, which sits midway between the two largest FP4 magnitudes (±4 and ±6) and thus bridges the quantization gap for near‑maximal values. Additional special values are explored, and the configuration yielding the lowest quantization error is chosen per model.

Implementation-wise, the authors extend the existing NVFP4 GPU kernels on Blackwell GPUs. The kernels load the per‑block special‑value metadata (encoded in the freed bits) and perform a lightweight lookup during quantization. The extra work amounts to at most two quantization passes per block, adding less than 2 % overhead compared to the baseline NVFP4 quantizer.

On the hardware side, they propose a modest modification to the NVFP4 tensor core: a small selector circuit that reads the extra bit(s) and maps the FP4 zero code to the chosen special value before the MAC operation. Architectural simulations indicate negligible area and power increase, preserving the energy‑efficiency advantage of low‑precision arithmetic.

Extensive evaluation on four LLM families (Llama‑3‑7B/13B, Qwen‑3‑7B/14B) shows that RaZeR reduces average perplexity loss by 34.6 % in weight‑only quantization and by 31.2 % in weight‑activation quantization relative to native NVFP4. Throughput remains comparable to the original kernels, and memory consumption is unchanged.

In summary, RaZeR demonstrates that careful exploitation of latent redundancy in a custom low‑precision format can yield a higher‑fidelity representation without sacrificing the memory or hardware benefits that made the original format attractive. This work provides both a practical software stack and a plausible hardware path for next‑generation 4‑bit LLM inference, narrowing the gap between extreme compression and model accuracy.

Comments & Academic Discussion

Loading comments...

Leave a Comment