E2CAR: An Efficient 2D-CNN Framework for Real-Time EEG Artifact Removal on Edge Devices

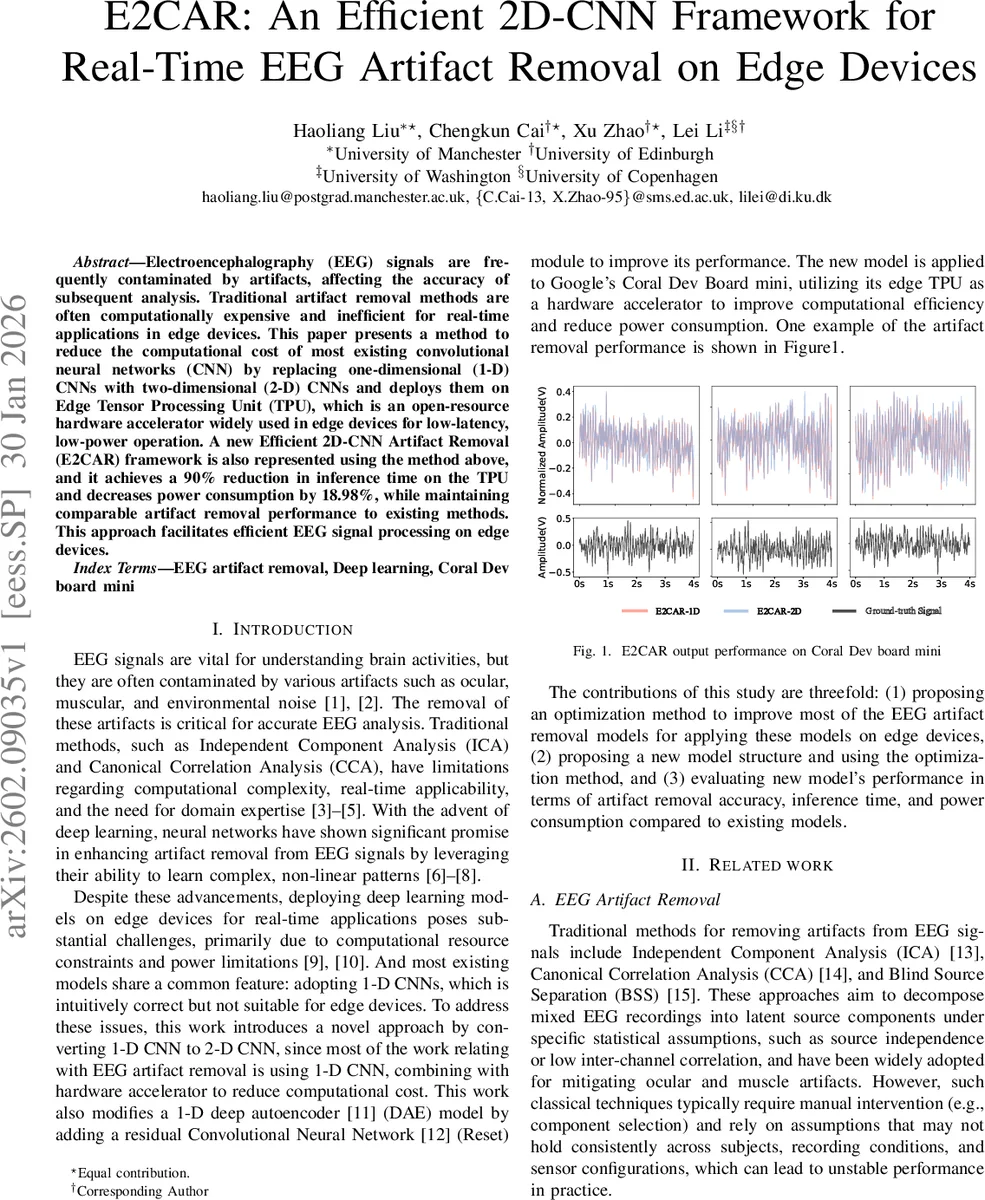

Electroencephalography (EEG) signals are frequently contaminated by artifacts, which degrade the accuracy of subsequent analysis. Traditional artifact removal methods are often computationally expensive and inefficient for real-time applications on edge devices. This paper presents a method to reduce the computational cost of most existing convolutional neural networks (CNNs) by replacing one-dimensional (1-D) CNNs with two-dimensional (2-D) CNNs and deploying them on the Edge Tensor Processing Unit (TPU), an open-resource hardware accelerator widely used in edge devices for low-latency, low-power operation. A new Efficient 2D-CNN Artifact Removal (E2CAR) framework built on this method is also presented; it achieves a 90% reduction in inference time on the TPU and decreases power consumption by 18.98%, while maintaining artifact removal performance comparable to existing methods. This approach facilitates efficient EEG signal processing on edge devices.

💡 Research Summary

The paper addresses the need for real‑time EEG artifact removal on resource‑constrained edge devices. Traditional artifact‑removal techniques such as ICA and CCA are computationally expensive, and recent deep‑learning models rely heavily on one‑dimensional (1‑D) convolutional neural networks (CNNs). While 1‑D CNNs are a natural fit for time‑series data, they are not optimal for hardware accelerators that are designed for matrix‑multiplication‑centric workloads.

To overcome this mismatch, the authors propose converting the 1‑D EEG segment (800 samples at 200 Hz) into a “pseudo‑image” of size 1 × 800 and feeding it to a two‑dimensional (2‑D) CNN. Modern edge processors such as the ARM Cortex‑M7 (found on Google’s Coral Dev Board mini) and the Edge Tensor Processing Unit (TPU) contain DSP instructions and specialized systolic arrays that execute 2‑D convolutions far more efficiently than 1‑D equivalents. By exploiting this hardware characteristic, the authors achieve a dramatic reduction in inference latency.
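The reshaping step can be checked numerically. The following is a minimal sketch in plain Python, not the authors' code: it assumes a stride-1, unpadded convolution, and the names `conv1d_valid`, `conv2d_valid`, the toy waveform, and the 3-tap kernel are all hypothetical. It shows that treating the segment as a 1 × 800 pseudo-image and applying a 1 × 3 kernel gives the same numbers as the 1-D convolution, so the change affects how the hardware executes the layer rather than what the layer computes.

```python
def conv1d_valid(signal, kernel):
    """Cross-correlate a 1-D signal with a 1-D kernel (no padding, stride 1)."""
    k = len(kernel)
    return [
        sum(signal[i + j] * kernel[j] for j in range(k))
        for i in range(len(signal) - k + 1)
    ]


def conv2d_valid(image, kernel):
    """Cross-correlate a 2-D image with a 2-D kernel (no padding, stride 1)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(
                image[r + dr][c + dc] * kernel[dr][dc]
                for dr in range(kh)
                for dc in range(kw)
            )
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


# Toy stand-in for one 4 s segment at 200 Hz (800 samples); not real EEG.
segment = [((7 * n) % 13) / 13.0 for n in range(800)]
kernel = [0.25, 0.5, 0.25]  # illustrative 3-tap kernel, not a trained weight

pseudo_image = [segment]  # 1 x 800: a single row holding the whole segment
kernel_2d = [kernel]      # 1 x 3: the same taps as a height-1 2-D kernel

out_1d = conv1d_valid(segment, kernel)
out_2d = conv2d_valid(pseudo_image, kernel_2d)
```

In a deep-learning framework the same idea would typically amount to adding a height-1 axis to the input tensor and swapping 1-D convolution layers for 2-D layers with height-1 kernels.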

The core model, named E2CAR (Efficient 2D‑CNN Artifact Removal), builds upon a denoising autoencoder (DAE) architecture but augments it with six residual blocks arranged in a 3 × 2 grid. Each block contains convolutional kernels of sizes 1 × 3, 1 × 5, and 1 × 7, deliberately chosen to capture the spectral diversity of common EEG artifacts: large kernels for low‑frequency, long‑duration EOG (eye‑movement) artifacts; small kernels for high‑frequency, short‑duration EMG (muscle) artifacts; and medium kernels for motion‑related disturbances that span a broader frequency range. The residual connections mitigate vanishing‑gradient problems and accelerate convergence.
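To make the multi-kernel idea concrete, here is a rough sketch of one residual block in plain Python. The summary does not say how the 1 × 3, 1 × 5, and 1 × 7 kernels are combined inside a block, so everything structural here is an assumption: the three kernels run as parallel branches, each followed by a ReLU, the branches are averaged, and the input is added back through the skip connection. The function names and the moving-average weights are hypothetical placeholders, not trained parameters.

```python
def conv_same(signal, kernel):
    """Zero-padded cross-correlation; output length equals input length."""
    half = len(kernel) // 2
    padded = [0.0] * half + list(signal) + [0.0] * half
    return [
        sum(padded[i + j] * kernel[j] for j in range(len(kernel)))
        for i in range(len(signal))
    ]


def relu(values):
    """Element-wise rectified linear unit."""
    return [max(0.0, v) for v in values]


def multi_scale_residual_block(signal, kernels):
    """Assumed layout: parallel branches, averaged, plus an identity skip."""
    branches = [relu(conv_same(signal, k)) for k in kernels]
    merged = [sum(vals) / len(branches) for vals in zip(*branches)]
    # Residual connection: the block only has to learn a correction to the input.
    return [x + m for x, m in zip(signal, merged)]


# Placeholder weights: one moving-average kernel per width (3, 5, 7 taps).
kernels = [[1.0 / w] * w for w in (3, 5, 7)]

# Toy stand-in for an 800-sample segment; not real EEG.
segment = [((5 * n) % 11) / 11.0 - 0.5 for n in range(800)]
block_out = multi_scale_residual_block(segment, kernels)
```

Because of the skip path, a block whose convolution weights are all zero passes its input through unchanged, which is one intuition for why residual connections ease training.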

Data preprocessing follows a standardized pipeline. Three publicly available datasets are used: an EOG set (54 clean‑corrupted pairs from 27 subjects), a motion‑artifact set (23 pairs), and an EMG set (5,598 segments). All recordings are down‑sampled to 200 Hz, detrended, and band‑pass filtered (1–50 Hz) only for the clean references; the corrupted signals remain unfiltered to preserve artifact characteristics. Segments of 4 seconds (800 points) are extracted with 50 % overlap, and each segment is min‑max normalized to
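The windowing arithmetic in this pipeline can be sketched as follows. This is an illustrative plain-Python example, not the authors' preprocessing code; it omits down-sampling, detrending, and filtering. At 200 Hz a 4 s window is 800 samples, and 50 % overlap means a hop of 400 samples. The target range of the min-max normalization is cut off in the text above, so it is left as a required argument (`low`, `high`); the 0 to 1 range used in the example call is only a placeholder, and the function names and toy recording are hypothetical.

```python
def segment_signal(samples, fs=200, seconds=4, overlap=0.5):
    """Split a recording into fixed-length windows with fractional overlap."""
    length = int(fs * seconds)         # 4 s * 200 Hz = 800 samples
    hop = int(length * (1 - overlap))  # 50 % overlap -> 400-sample hop
    return [
        samples[start:start + length]
        for start in range(0, len(samples) - length + 1, hop)
    ]


def min_max_scale(window, low, high):
    """Linearly rescale one window so its minimum maps to low, maximum to high."""
    w_min, w_max = min(window), max(window)
    if w_max == w_min:
        return [low for _ in window]  # flat window: map everything to low
    scale = (high - low) / (w_max - w_min)
    return [low + (v - w_min) * scale for v in window]


# Toy 10 s recording at 200 Hz (2000 samples); not real EEG.
recording = [((3 * n) % 17) - 8.0 for n in range(2000)]

windows = segment_signal(recording)
# Placeholder range only: the range used in the paper is not given above.
scaled = [min_max_scale(w, 0.0, 1.0) for w in windows]
```

With these settings a 2000-sample recording yields four windows starting at samples 0, 400, 800, and 1200; any trailing samples that do not fill a whole window are dropped in this sketch.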
