Experience-Driven Multi-Agent Systems Are Training-free Context-aware Earth Observers

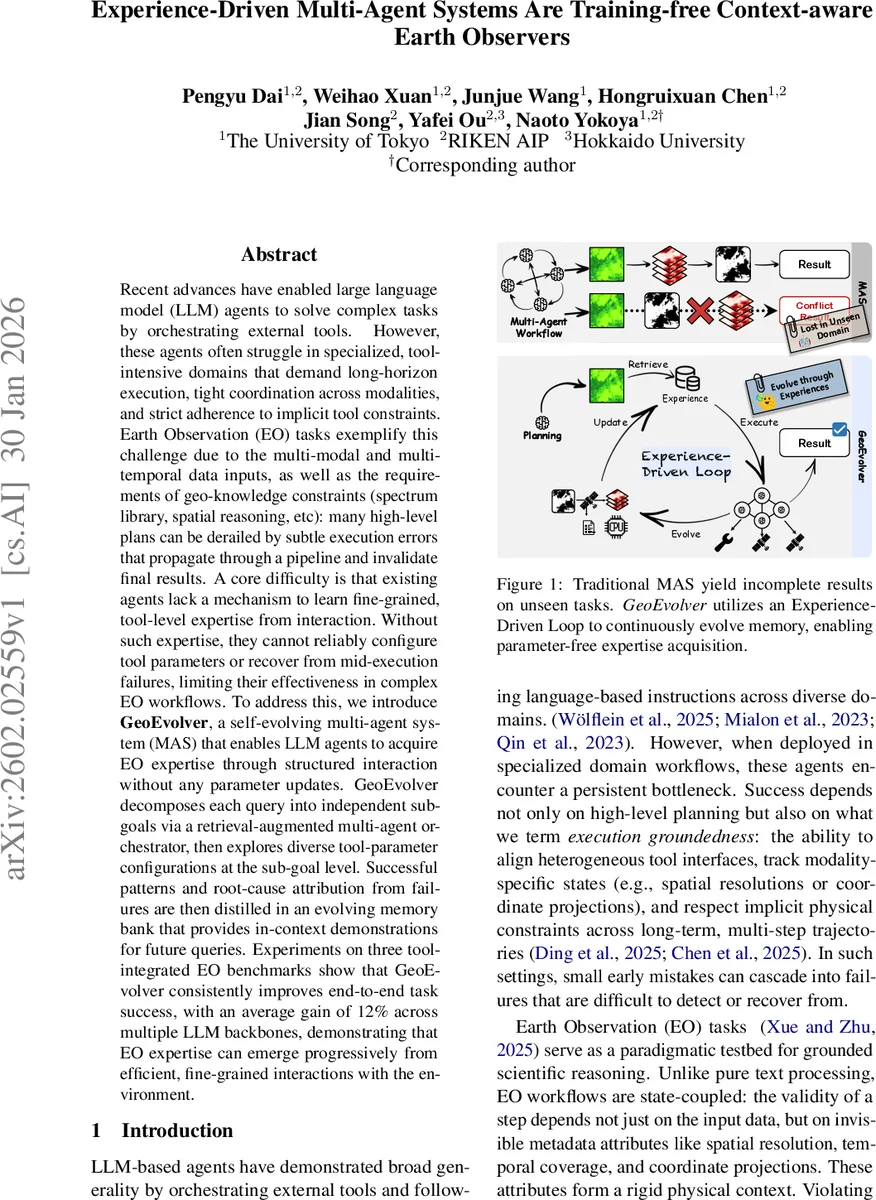

Recent advances have enabled large language model (LLM) agents to solve complex tasks by orchestrating external tools. However, these agents often struggle in specialized, tool-intensive domains that demand long-horizon execution, tight coordination across modalities, and strict adherence to implicit tool constraints. Earth Observation (EO) tasks exemplify this challenge due to the multi-modal and multi-temporal data inputs, as well as the requirements of geo-knowledge constraints (spectrum library, spatial reasoning, etc): many high-level plans can be derailed by subtle execution errors that propagate through a pipeline and invalidate final results. A core difficulty is that existing agents lack a mechanism to learn fine-grained, tool-level expertise from interaction. Without such expertise, they cannot reliably configure tool parameters or recover from mid-execution failures, limiting their effectiveness in complex EO workflows. To address this, we introduce \textbf{GeoEvolver}, a self-evolving multi-agent system~(MAS) that enables LLM agents to acquire EO expertise through structured interaction without any parameter updates. GeoEvolver decomposes each query into independent sub-goals via a retrieval-augmented multi-agent orchestrator, then explores diverse tool-parameter configurations at the sub-goal level. Successful patterns and root-cause attribution from failures are then distilled in an evolving memory bank that provides in-context demonstrations for future queries. Experiments on three tool-integrated EO benchmarks show that GeoEvolver consistently improves end-to-end task success, with an average gain of 12% across multiple LLM backbones, demonstrating that EO expertise can emerge progressively from efficient, fine-grained interactions with the environment.

💡 Research Summary

The paper addresses a critical limitation of large‑language‑model (LLM) agents when they are applied to highly specialized, tool‑intensive domains such as Earth Observation (EO). While recent advances have shown that LLM‑based agents can orchestrate external tools to solve complex tasks, they often fail in EO pipelines because subtle, low‑level execution errors (e.g., mismatched coordinate reference systems, incorrect spatial resolutions, or improper temporal slicing) cascade and invalidate downstream results. Existing agents lack a mechanism to acquire fine‑grained, tool‑level expertise from interaction, and therefore cannot reliably configure tool parameters or recover from mid‑execution failures without costly fine‑tuning.

To overcome this gap, the authors propose GeoEvolver, a self‑evolving multi‑agent system that learns EO expertise purely from interaction, without any parameter updates. GeoEvolver follows three design principles: (1) Decomposition – a query is split into independent sub‑goals, each with explicit I/O contracts and success criteria; (2) Accumulation – for each sub‑goal the system launches multiple parallel exploration variants that try diverse tool‑parameter configurations, collecting detailed feedback (error messages, logs, format checks) from every attempt; (3) Self‑Evolution – successful patterns and root‑cause analyses of failures are distilled into a hierarchical memory bank that supplies in‑context demonstrations for future queries.

The architecture consists of four operators forming a retrieve‑plan‑execute‑judge loop. The Retriever queries a global memory bank B to assemble a “strategy context” c by pulling the most similar past experiences (both successful workflows and failure guardrails). Conditioned on (q, c), the Orchestrator decomposes the query into N sub‑goals {gₙ} and dispatches each to a specialized Executor. Executors run independently, allowing parallelism and precise failure attribution. The Judge aggregates all sub‑trajectories, emits a binary success flag Y and auxiliary validity signals v (e.g., format compliance, numeric matching). Multiple exploration variants (K of them) are evaluated, and the best solution is selected based on success and the validity score.

Memory is organized in two tiers. The Global Memory Bank stores distilled procedural knowledge: reusable tool‑chain patterns, parameter ranges, and contrastive failure patterns. Retrieval uses embedding similarity and applies a leakage filter to avoid contaminating benchmark evaluation. The Working Memory compresses the episode‑level interaction history, summarizing older steps while keeping the most recent L raw actions, thus staying within LLM context limits.

Experience‑driven evolution proceeds at two levels. First, the best‑performing variant is extracted and its successful steps are added to the bank as new templates. Second, a contrastive distillation step compares all variants, extracting common successful strategies (C_strat) and systematic failure causes (C_fail). These are stored as separate entries, enabling the system to pre‑emptively avoid known pitfalls and to prioritize high‑probability configurations in future queries.

The authors evaluate GeoEvolver on three tool‑integrated EO benchmarks (e.g., TVDI computation, annual average calculation, linear trend analysis) using multiple LLM backbones (GPT‑4, Claude‑2, Llama‑2). Across all settings GeoEvolver improves end‑to‑end task success by an average of 12 % relative to baseline agents that lack the experience‑driven loop. Detailed ablations show that the gain is primarily due to increased sub‑goal success probabilities pₙ, which stem from the reuse of distilled execution priors. Failure analysis confirms that most baseline errors arise from violations of implicit physical constraints rather than from high‑level planning mistakes.

In the discussion, the paper contrasts GeoEvolver with prior multi‑agent systems that rely on static workflows (debate, role‑playing) or on fine‑tuned skill libraries. Those approaches become brittle when faced with new domains or novel toolchains, whereas GeoEvolver’s dynamic decomposition and memory‑based specialization make it adaptable without any gradient updates. The authors also note that their memory design deliberately captures both successes and failures, addressing a common shortcoming in existing experience‑based methods that focus only on positive examples.

Overall, the contribution is a novel paradigm: by treating tool‑level interaction as a source of reusable knowledge and by feeding that knowledge back into the LLM via in‑context demonstrations, an agent can progressively acquire domain expertise “for free.” The paper suggests future work on scaling the memory mechanisms, extending to more complex multimodal pipelines, and applying the framework to other scientific domains such as climate modeling or bioinformatics.

Comments & Academic Discussion

Loading comments...

Leave a Comment