PA-MIL: Phenotype-Aware Multiple Instance Learning Guided by Language Prompting and Genotype-to-Phenotype Relationships

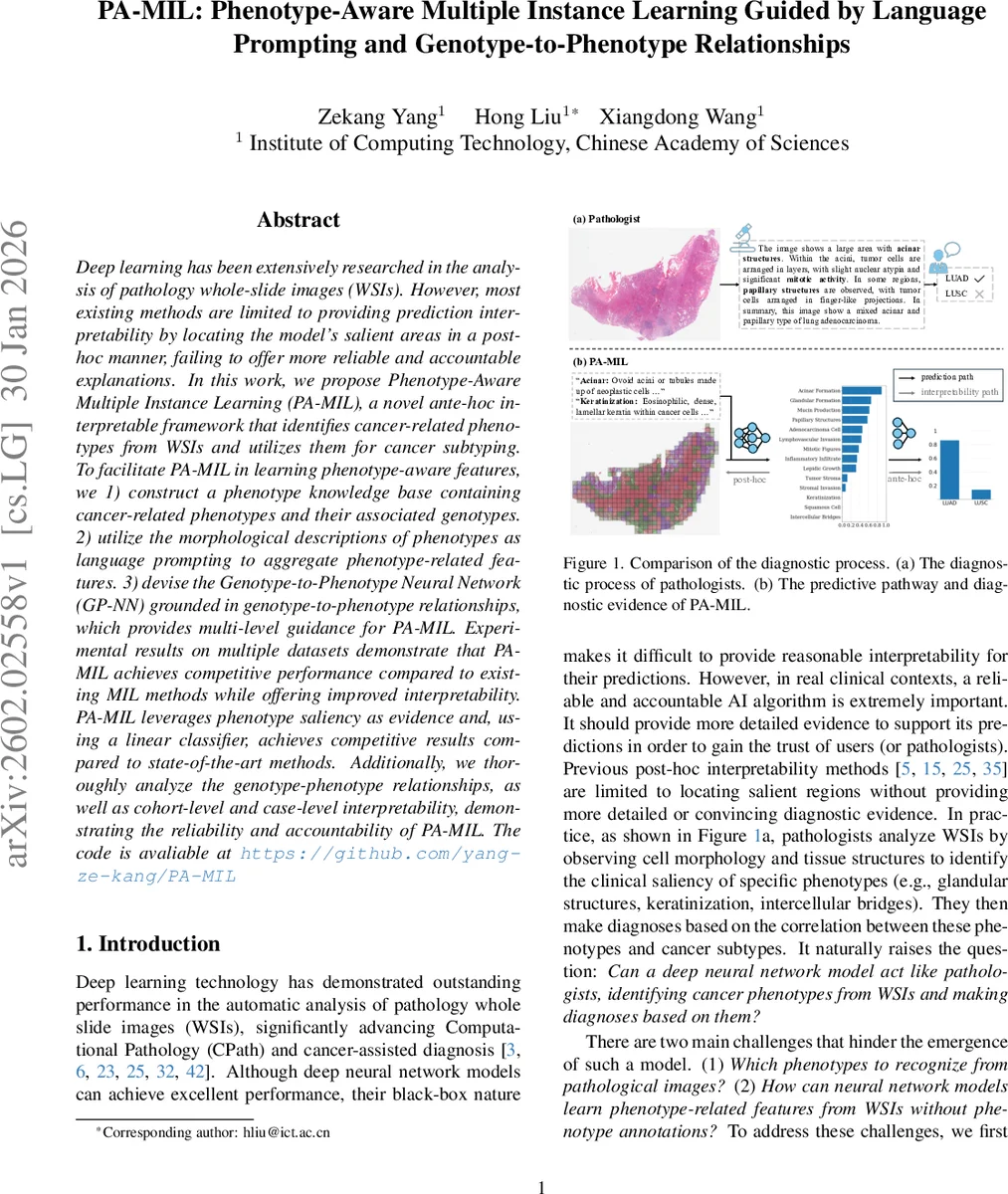

Deep learning has been extensively researched in the analysis of pathology whole-slide images (WSIs). However, most existing methods are limited to providing prediction interpretability by locating the model’s salient areas in a post-hoc manner, failing to offer more reliable and accountable explanations. In this work, we propose Phenotype-Aware Multiple Instance Learning (PA-MIL), a novel ante-hoc interpretable framework that identifies cancer-related phenotypes from WSIs and utilizes them for cancer subtyping. To facilitate PA-MIL in learning phenotype-aware features, we 1) construct a phenotype knowledge base containing cancer-related phenotypes and their associated genotypes; 2) utilize the morphological descriptions of phenotypes as language prompting to aggregate phenotype-related features; and 3) devise the Genotype-to-Phenotype Neural Network (GP-NN), grounded in genotype-to-phenotype relationships, which provides multi-level guidance for PA-MIL. Experimental results on multiple datasets demonstrate that PA-MIL achieves competitive performance compared to existing MIL methods while offering improved interpretability. PA-MIL leverages phenotype saliency as evidence and, using a linear classifier, achieves competitive results compared to state-of-the-art methods. Additionally, we thoroughly analyze the genotype-phenotype relationships, as well as cohort-level and case-level interpretability, demonstrating the reliability and accountability of PA-MIL.

💡 Research Summary

The paper introduces PA‑MIL (Phenotype‑Aware Multiple Instance Learning), a novel ante‑hoc interpretable framework for computational pathology that directly models cancer‑related phenotypes from whole‑slide images (WSIs) and uses these phenotypes as evidence for cancer subtyping. The authors first construct a phenotype knowledge base by prompting large language models (LLMs) and consulting pathologists to identify a set of N phenotypes relevant to a given cancer type. For each phenotype they collect (i) a textual morphological description and (ii) a gene set associated with the phenotype, retrieved via the GenCLiP tool from PubMed. This knowledge base supplies both language prompts and genotype‑phenotype links that guide the learning process.

PA‑MIL processes a WSI by dividing it into M patches, extracting patch embeddings with a pretrained image encoder (e.g., ResNet‑50), and encoding phenotype names plus descriptions with a frozen CLIP‑style text encoder. A learnable cross‑attention module aligns the textual embeddings (queries) with the patch embeddings (keys/values), producing N phenotype‑specific feature vectors V_j for each slide. To enforce that the same phenotype across different slides yields similar embeddings while different phenotypes stay distinct, a contrastive loss is applied, with cluster centers updated via a momentum scheme.
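The text-to-patch aggregation described above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the authors' implementation: single-head scaled dot-product attention is assumed, the projection matrices `W_q`, `W_k`, `W_v` and all dimensions are hypothetical, and random arrays stand in for the real text and patch embeddings.

```python
import numpy as np

def softmax(x, axis=-1):
    """Numerically stable softmax along the given axis."""
    shifted = x - x.max(axis=axis, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=axis, keepdims=True)

def phenotype_cross_attention(text_emb, patch_emb, W_q, W_k, W_v):
    """Aggregate patch features into one vector per phenotype.

    text_emb:  (N, d_text)  phenotype prompt embeddings, used as queries
    patch_emb: (M, d_patch) patch embeddings, used as keys and values
    Returns V of shape (N, d) and attention weights of shape (N, M).
    """
    Q = text_emb @ W_q                          # (N, d)
    K = patch_emb @ W_k                         # (M, d)
    values = patch_emb @ W_v                    # (M, d)
    scores = Q @ K.T / np.sqrt(Q.shape[-1])     # (N, M) scaled dot products
    attn = softmax(scores, axis=-1)             # each phenotype attends over patches
    V = attn @ values                           # (N, d) phenotype-specific features
    return V, attn

# Hypothetical sizes: 4 phenotypes, 50 patches (random stand-in embeddings).
rng = np.random.default_rng(0)
N, M, d_text, d_patch, d = 4, 50, 16, 32, 8
text_emb = rng.normal(size=(N, d_text))
patch_emb = rng.normal(size=(M, d_patch))
W_q = rng.normal(size=(d_text, d)) * 0.1
W_k = rng.normal(size=(d_patch, d)) * 0.1
W_v = rng.normal(size=(d_patch, d)) * 0.1

V, attn = phenotype_cross_attention(text_emb, patch_emb, W_q, W_k, W_v)
print(V.shape, attn.shape)
```

Each row of `attn` is a distribution over patches, so it can double as a per-phenotype heatmap over the slide.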

The phenotype feature vectors are passed through a bottleneck linear layer followed by layer‑normalization to produce saliency scores S_j for each phenotype. These scores serve as interpretable concepts: a linear classifier then predicts the cancer subtype from S_j, making the contribution of each phenotype directly readable. Because the classifier is linear, the weight of each phenotype reflects its diagnostic importance.
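To make the "directly readable" claim concrete, the sketch below computes saliency scores and splits each subtype logit into per-phenotype contributions. It rests on assumptions the summary does not confirm: the bottleneck is taken to be a single linear map shared across phenotypes that reduces each V_j to a scalar, layer normalization is applied across the N scalars, and all weights are random placeholders.

```python
import numpy as np

def layer_norm(x, eps=1e-5):
    """Normalize a 1-D vector to zero mean and unit variance."""
    return (x - x.mean()) / np.sqrt(x.var() + eps)

def saliency_scores(V, w, b):
    """Map phenotype features V (N, d) to N scalar saliency scores.

    Assumption: one bottleneck weight vector `w` shared by all phenotypes.
    """
    return layer_norm(V @ w + b)

def linear_subtype_classifier(S, W_cls, b_cls):
    """Linear classifier on saliency scores.

    Returns the logits (C,) and the per-phenotype contributions (C, N),
    where contributions[c, j] = W_cls[c, j] * S[j].
    """
    contributions = W_cls * S                    # broadcast S over classes
    logits = contributions.sum(axis=1) + b_cls
    return logits, contributions

# Hypothetical sizes: 4 phenotypes, feature dim 8, 2 subtypes.
rng = np.random.default_rng(0)
N, d, C = 4, 8, 2
V = rng.normal(size=(N, d))
w, b = rng.normal(size=d), 0.0
W_cls, b_cls = rng.normal(size=(C, N)), np.zeros(C)

S = saliency_scores(V, w, b)
logits, contributions = linear_subtype_classifier(S, W_cls, b_cls)
predicted = int(np.argmax(logits))
# Index of the phenotype that moves the predicted logit the most.
most_influential = int(np.argmax(np.abs(contributions[predicted])))
```

Because the logit is an exact sum of the contribution terms plus a bias, the explanation is the computation itself rather than a post-hoc approximation of it.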

To further guide the model, the authors design a Genotype‑to‑Phenotype Neural Network (GP‑NN) that consumes RNA‑Seq data. For each phenotype, the expression values of the corresponding gene set are fed into a dedicated MLP, yielding genotype‑derived phenotype features Z_j. The same bottleneck‑LN pipeline predicts saliency scores Ŝ_j, which are used to classify the subtype. From the phenotype features onward, GP‑NN mirrors the architecture of PA‑MIL, allowing it to act as a teacher: its phenotype features and saliency predictions provide multi‑level supervision (feature‑level and concept‑level) to PA‑MIL during training.
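The per-phenotype routing of gene expression can be illustrated as follows. This is a sketch under assumptions: the two-layer ReLU MLP, the hidden and output sizes, and the gene indices in `gene_sets` are hypothetical placeholders (the real gene sets come from the knowledge base), and a random vector stands in for an RNA‑Seq profile.

```python
import numpy as np

class GeneSetMLP:
    """Two-layer MLP applied to the expression values of one gene set."""

    def __init__(self, n_genes, hidden_dim, out_dim, rng):
        self.W1 = rng.normal(size=(n_genes, hidden_dim)) * 0.1
        self.b1 = np.zeros(hidden_dim)
        self.W2 = rng.normal(size=(hidden_dim, out_dim)) * 0.1
        self.b2 = np.zeros(out_dim)

    def __call__(self, x):
        hidden = np.maximum(x @ self.W1 + self.b1, 0.0)   # ReLU
        return hidden @ self.W2 + self.b2

rng = np.random.default_rng(0)

# Hypothetical mapping from phenotype name to gene indices in the profile.
gene_sets = {
    "acinar structure": [0, 3, 7],
    "keratinization": [1, 2, 3, 9, 11],
    "intercellular bridges": [4, 5, 6, 8, 10],
}
expression = rng.normal(size=12)   # stand-in for one RNA-Seq profile

# One dedicated MLP per phenotype, sized to that phenotype's gene set.
mlps = {name: GeneSetMLP(len(idx), 16, 8, rng) for name, idx in gene_sets.items()}

# Z[j] is the genotype-derived feature vector for phenotype j.
Z = np.stack([mlps[name](expression[idx]) for name, idx in gene_sets.items()])
print(Z.shape)
```

Gene sets may overlap (index 3 appears twice above), which is why each phenotype gets its own input slice rather than a partition of the profile.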

Training optimizes three components: (1) contrastive loss for phenotype feature separation, (2) a loss aligning PA‑MIL saliency scores with GP‑NN predictions, and (3) cross‑entropy loss for the final subtype classification. The combined objective enables PA‑MIL to learn phenotype‑aware representations that are biologically grounded and visually discriminative.
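One way to picture how the three terms combine is the sketch below. The summary does not give the exact formulas, so every choice here is an assumption: an InfoNCE-style contrastive term against momentum-updated phenotype centers, mean-squared-error alignment on both saliency scores and features, and hypothetical weights `lam_con` and `lam_align`.

```python
import numpy as np

def log_softmax(x, axis=-1):
    shifted = x - x.max(axis=axis, keepdims=True)
    return shifted - np.log(np.exp(shifted).sum(axis=axis, keepdims=True))

def l2_normalize(x, eps=1e-8):
    return x / (np.linalg.norm(x, axis=-1, keepdims=True) + eps)

def contrastive_loss(V, centers, tau=0.1):
    """Pull each phenotype feature toward its own center, away from others."""
    logits = l2_normalize(V) @ l2_normalize(centers).T / tau   # (N, N)
    return -np.mean(np.diag(log_softmax(logits, axis=-1)))

def momentum_update(centers, V, m=0.9):
    """Exponential moving average of phenotype centers."""
    return m * centers + (1.0 - m) * V

def alignment_loss(S, S_hat, V, Z):
    """Concept-level (saliency) plus feature-level agreement with GP-NN."""
    return np.mean((S - S_hat) ** 2) + np.mean((V - Z) ** 2)

def cross_entropy(logits, label):
    return -log_softmax(logits)[label]

def total_loss(V, centers, S, S_hat, Z, logits, label,
               lam_con=1.0, lam_align=1.0):
    return (cross_entropy(logits, label)
            + lam_con * contrastive_loss(V, centers)
            + lam_align * alignment_loss(S, S_hat, V, Z))

# Random stand-ins: 4 phenotypes, feature dim 8, 2 subtypes.
rng = np.random.default_rng(0)
N, d, C = 4, 8, 2
V, Z = rng.normal(size=(N, d)), rng.normal(size=(N, d))
centers = rng.normal(size=(N, d))
S, S_hat = rng.normal(size=N), rng.normal(size=N)
logits, label = rng.normal(size=C), 1

loss = total_loss(V, centers, S, S_hat, Z, logits, label)
centers = momentum_update(centers, V)   # centers drift toward current features
```

In an actual training loop the GP‑NN outputs `S_hat` and `Z` would typically be treated as fixed targets when computing the alignment term, though the summary does not state whether the teacher is frozen.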

Experiments on several TCGA cohorts (lung adenocarcinoma vs. squamous cell carcinoma, gastric cancer, breast cancer) show that PA‑MIL achieves AUCs comparable to or slightly better than state‑of‑the‑art MIL methods such as ABMIL, DSMIL, and CLAM. Importantly, the phenotype saliency maps align with pathologists’ diagnostic cues (e.g., acinar structures, keratinization, intercellular bridges). The GP‑NN analysis further reveals biologically plausible genotype‑phenotype links, such as KRAS mutations correlating with acinar morphology and EGFR alterations with keratinization, confirming that the model’s internal reasoning mirrors known molecular pathology.

The authors discuss limitations: the phenotype knowledge base relies on expert‑curated LLM outputs, requiring reconstruction for new cancer types; large paired WSI‑transcriptome datasets are scarce; and the linear classifier, while interpretable, may limit performance on more nuanced subtyping tasks. Future work could explore automated phenotype discovery, integration of single‑cell transcriptomics, and hybrid models balancing interpretability with non‑linear predictive power.

In summary, PA‑MIL bridges language, genomics, and image analysis to embed clinically meaningful phenotypes within a MIL framework, delivering competitive diagnostic accuracy together with transparent, concept‑level explanations, an important step toward trustworthy AI in pathology.
