ToolTok: Tool Tokenization for Efficient and Generalizable GUI Agents

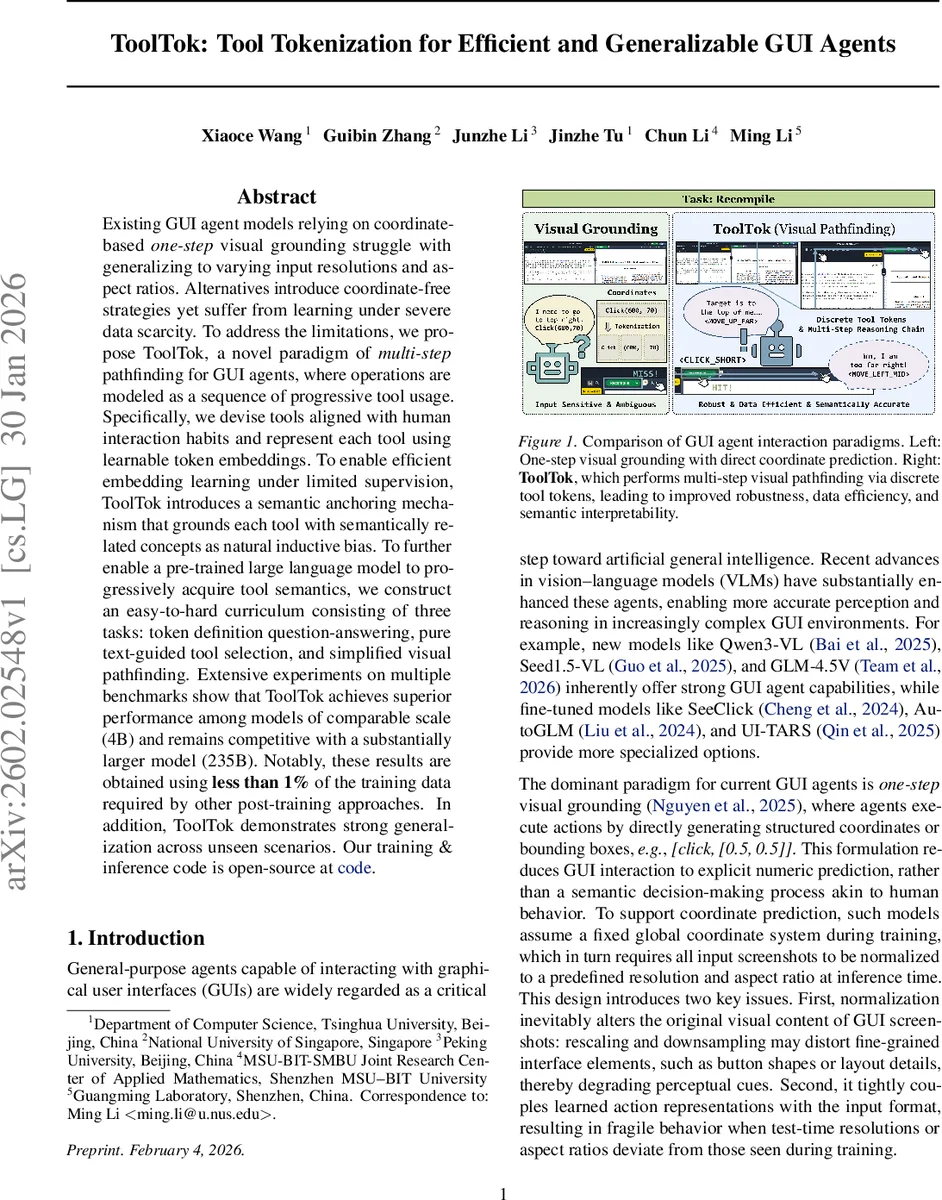

Existing GUI agent models relying on coordinate-based one-step visual grounding struggle with generalizing to varying input resolutions and aspect ratios. Alternatives introduce coordinate-free strategies yet suffer from learning under severe data scarcity. To address the limitations, we propose ToolTok, a novel paradigm of multi-step pathfinding for GUI agents, where operations are modeled as a sequence of progressive tool usage. Specifically, we devise tools aligned with human interaction habits and represent each tool using learnable token embeddings. To enable efficient embedding learning under limited supervision, ToolTok introduces a semantic anchoring mechanism that grounds each tool with semantically related concepts as natural inductive bias. To further enable a pre-trained large language model to progressively acquire tool semantics, we construct an easy-to-hard curriculum consisting of three tasks: token definition question-answering, pure text-guided tool selection, and simplified visual pathfinding. Extensive experiments on multiple benchmarks show that ToolTok achieves superior performance among models of comparable scale (4B) and remains competitive with a substantially larger model (235B). Notably, these results are obtained using less than 1% of the training data required by other post-training approaches. In addition, ToolTok demonstrates strong generalization across unseen scenarios. Our training & inference code is open-source at https://github.com/ZephinueCode/ToolTok.

💡 Research Summary

ToolTok introduces a fundamentally new approach to GUI‑agent interaction by replacing one‑step coordinate regression with a multi‑step, token‑based decision process that mirrors how humans manipulate graphical interfaces. Instead of predicting absolute screen coordinates, the agent selects from a discrete vocabulary of “tool” tokens that encode high‑level actions such as moving the cursor, navigating the OS, clicking, scrolling, and entering text. Movement tokens are further decomposed into direction (up, down, left, right) and scale (far, mid, close), enabling a coarse‑to‑fine search strategy akin to human cursor control.

A central technical contribution is “Spherical Semantic Anchoring.” For each tool token, a small set of natural‑language anchors (e.g., {move, up, north, far} for <MOVE UP FAR>) is curated. The pre‑trained vision‑language model’s embedding function is used to compute the average embedding of these anchors, which is then projected onto the hypersphere defined by the average norm of the original vocabulary. This yields an initialization that places new tokens within the semantic manifold of the pre‑trained model, dramatically reducing the cold‑start problem and allowing effective learning with extremely limited supervision.

Training follows a three‑stage curriculum. Stage I uses 5,000 synthetic examples—token‑definition QA, pure‑text tool selection, and simplified visual path‑finding—to teach the model the pure linguistic semantics of each tool. Stage II introduces “oracle trajectories”: given a random start cursor position and a target bounding box, a greedy algorithm selects the optimal tool token at each step to minimize Euclidean distance, thereby converting static (image, bbox) pairs into multi‑step supervision sequences. Stage III fine‑tunes on real‑world GUI datasets (e.g., ScreenSpot, ScreenSpot‑Pro) using these synthesized trajectories, augmented with procedurally generated Chain‑of‑Thought (CoT) explanations that force the model to articulate why a particular tool was chosen.

Extensive evaluation shows that a 4‑billion‑parameter model trained with ToolTok outperforms both coordinate‑based and coordinate‑free baselines across multiple benchmarks, and remains competitive with a 235‑billion‑parameter model. Crucially, ToolTok’s performance is robust to drastic changes in screenshot resolution and aspect ratio, and it achieves these results using less than 1 % of the training data required by prior post‑training methods (approximately 5 k synthetic samples).

The paper acknowledges limitations: the current tool vocabulary covers only basic actions and does not yet support complex gestures such as drag‑and‑drop, multi‑touch, or dynamic UI elements. Moreover, the oracle trajectory generation relies on a heuristic distance‑minimization that may be suboptimal for non‑linear or highly dynamic interfaces. Future work is suggested to expand the tool set dynamically, integrate reinforcement‑learning‑based trajectory optimization, and incorporate multimodal feedback (audio, haptic) to handle richer interaction scenarios. Overall, ToolTok demonstrates that semantic anchoring combined with curriculum learning can yield data‑efficient, generalizable GUI agents that operate through interpretable, discrete tool tokens.

Comments & Academic Discussion

Loading comments...

Leave a Comment