Auto-Augmentation Contrastive Learning for Wearable-based Human Activity Recognition

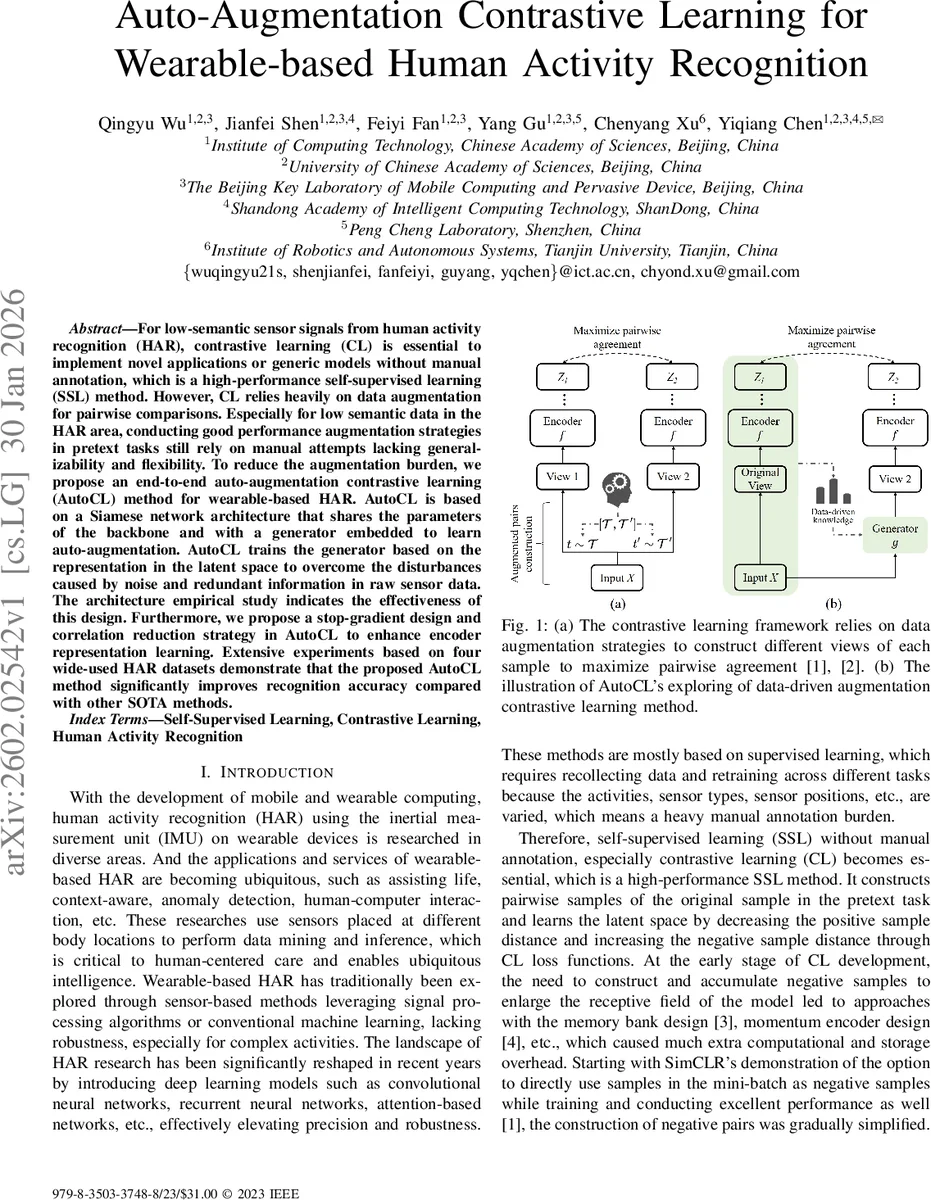

For low-semantic sensor signals from human activity recognition (HAR), contrastive learning (CL) is essential to implement novel applications or generic models without manual annotation, which is a high-performance self-supervised learning (SSL) method. However, CL relies heavily on data augmentation for pairwise comparisons. Especially for low semantic data in the HAR area, conducting good performance augmentation strategies in pretext tasks still rely on manual attempts lacking generalizability and flexibility. To reduce the augmentation burden, we propose an end-to-end auto-augmentation contrastive learning (AutoCL) method for wearable-based HAR. AutoCL is based on a Siamese network architecture that shares the parameters of the backbone and with a generator embedded to learn auto-augmentation. AutoCL trains the generator based on the representation in the latent space to overcome the disturbances caused by noise and redundant information in raw sensor data. The architecture empirical study indicates the effectiveness of this design. Furthermore, we propose a stop-gradient design and correlation reduction strategy in AutoCL to enhance encoder representation learning. Extensive experiments based on four wide-used HAR datasets demonstrate that the proposed AutoCL method significantly improves recognition accuracy compared with other SOTA methods.

💡 Research Summary

The paper addresses a fundamental bottleneck in contrastive learning (CL) for wearable‑based human activity recognition (HAR): the reliance on manually designed data augmentation strategies. Sensor signals from inertial measurement units (IMU) are low‑semantic and highly susceptible to noise, making it difficult to define generic augmentations that work across devices, sampling rates, and activity sets. To eliminate this “augmentation burden,” the authors propose Auto‑augmentation Contrastive Learning (AutoCL), an end‑to‑end self‑supervised framework that learns how to augment data automatically while training the encoder.

Core Architecture

AutoCL builds on a Siamese network (two identical branches sharing parameters) composed of an encoder fθ and a projector pθ, similar to SimCLR. A novel generator gξ is embedded between the two branches. Instead of feeding raw time‑series into the generator, AutoCL first passes the raw sample x through the encoder and projector to obtain a latent embedding yθ. This embedding is duplicated over the temporal dimension and fed into a three‑layer bidirectional GRU (BiGRU). The BiGRU’s hidden size is set to 4 × C (C = number of sensor channels). A final 1‑D group convolution maps the hidden representation back to the original channel dimension, producing an auto‑augmented sample x′θ,ξ. By operating in the latent space, the generator can focus on meaningful transformations while being less affected by sensor noise and redundant information.

Training Objectives

The primary loss is the normalized temperature‑scaled cross‑entropy (NT‑Xent) used in SimCLR, which encourages the projection of the original sample (yθ) and its auto‑augmented counterpart (y′θ,ξ) to be close while pushing away all other samples in the batch. To further improve the quality of positive pairs, the authors add a correlation reduction term that penalizes high Pearson correlation between the raw signal x and its generated version x′. This term forces the generator to produce sufficiently diverse augmentations, preventing the model from collapsing into an auto‑encoder‑like behavior where x′≈x.

A stop‑gradient operation is applied to yθ before it is fed into the generator. This ensures that gradients from the generator do not flow back into the encoder‑projector path for the original view, keeping the two learning streams distinct: the encoder learns from both views, while the generator learns solely from the frozen latent representation.

The final loss is: L_AutoCL = L_NTXent + λ · R_corr, where R_corr is the correlation reduction term and λ is a hyper‑parameter tuned empirically.

Implementation Details

- Encoder: 3‑layer fully convolutional network (FCN) with 1‑D convolutions (kernel = 8, stride = 1), batch normalization, ReLU, max‑pool (size = 2), and 10 % dropout.

- Projector: Two‑layer MLP (256 → 128 units) with batch normalization, ReLU, and a softmax‑like final activation for representation normalization.

- Generator: Feature duplication → BiGRU (3 layers) → 1‑D group convolution (kernel = 1) to reconstruct the time‑series shape.

- Optimizer: Adam with learning rate = 1e‑3 and weight decay = 1e‑3.

Experimental Evaluation

Four widely used HAR datasets were used: UCI HAR, PAMAP2, HHAR, and WISDM. For each dataset, the authors performed self‑supervised pre‑training on the unlabeled portion (D_pre) and then fine‑tuned the encoder on a labeled subset (D_tune). Baselines included SimCLR, BYOL, MoCo, and several handcrafted augmentation pipelines (scale, permutation, jitter, etc.).

Results show that AutoCL consistently outperforms all baselines, achieving absolute accuracy gains of 3–5 percentage points across datasets. The improvement is especially pronounced on datasets with fewer sensor channels or shorter windows, indicating that the learned augmentations adapt well to limited information scenarios. Ablation studies confirm that both the stop‑gradient and correlation reduction components are essential: removing stop‑gradient leads to unstable training, while omitting the correlation term reduces accuracy by ~1 %.

t‑SNE visualizations of the learned embeddings reveal clearer class separation for AutoCL compared with SimCLR, and qualitative inspection of generated samples demonstrates a richer variety of transformations than manually designed augmentations, while still preserving activity semantics.

Discussion and Limitations

The paper acknowledges that the current generator design is tailored to 1‑D IMU data; extending to multimodal inputs (e.g., video + IMU) would require architectural changes, possibly replacing the BiGRU with a Transformer‑based generator. Training time is modestly higher (≈30 % longer) due to on‑the‑fly generation of augmented samples. Moreover, the correlation reduction term introduces an extra hyper‑parameter λ that may need tuning for new domains.

Future work suggested includes: (1) exploring more expressive generators (e.g., Temporal Convolutional Networks, Transformers), (2) integrating meta‑learning to adapt augmentation policies across devices, and (3) evaluating the framework in fully unsupervised settings where downstream tasks are unknown at pre‑training time.

Conclusion

AutoCL introduces a principled way to learn data augmentations automatically for low‑semantic wearable sensor streams, eliminating the need for handcrafted augmentation pipelines. By coupling a latent‑space generator with stop‑gradient and correlation‑reduction mechanisms, the method achieves superior self‑supervised representation learning, leading to state‑of‑the‑art performance on multiple HAR benchmarks. This work significantly advances the practicality of self‑supervised learning in wearable‑based activity recognition, where labeling costs are high and sensor characteristics vary widely.

Comments & Academic Discussion

Loading comments...

Leave a Comment