DETOUR: An Interactive Benchmark for Dual-Agent Search and Reasoning

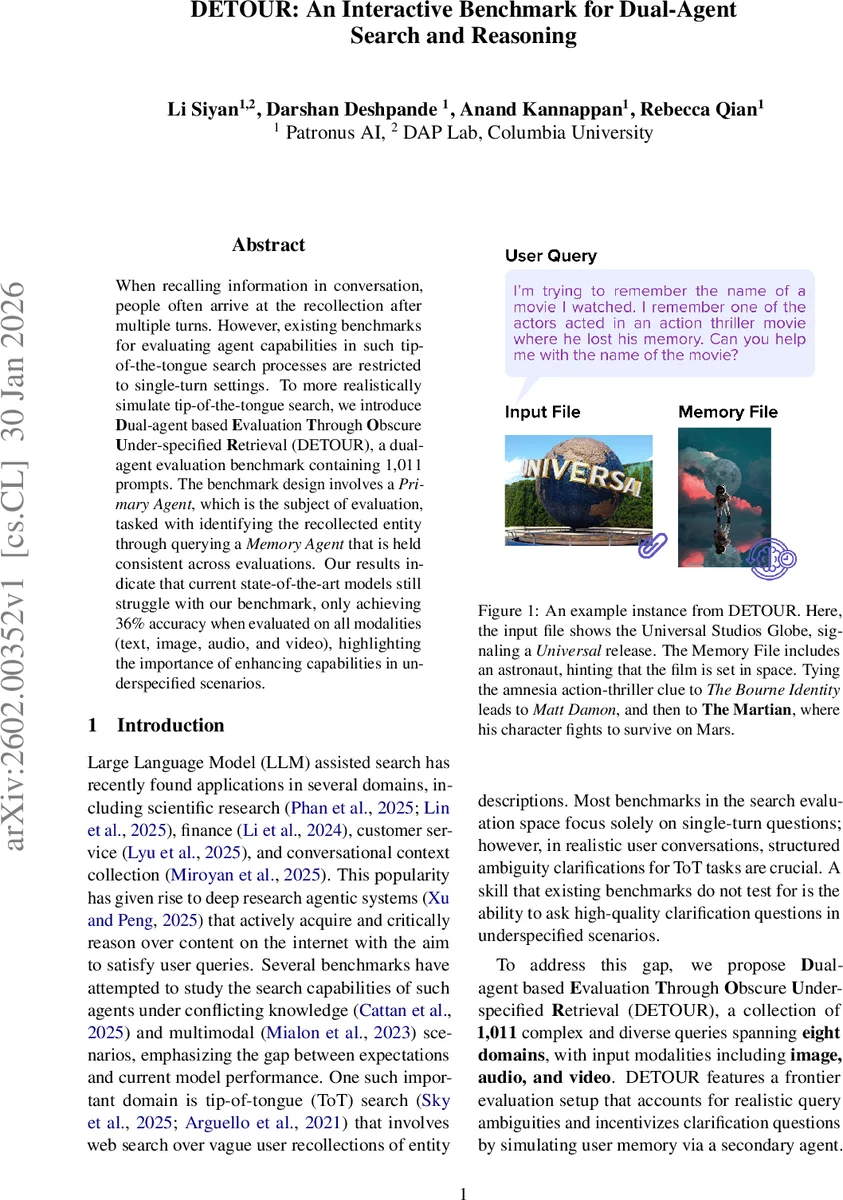

When recalling information in conversation, people often arrive at the recollection after multiple turns. However, existing benchmarks for evaluating agent capabilities in such tip-of-the-tongue search processes are restricted to single-turn settings. To more realistically simulate tip-of-the-tongue search, we introduce Dual-agent based Evaluation Through Obscure Under-specified Retrieval (DETOUR), a dual-agent evaluation benchmark containing 1,011 prompts. The benchmark design involves a Primary Agent, which is the subject of evaluation, tasked with identifying the recollected entity through querying a Memory Agent that is held consistent across evaluations. Our results indicate that current state-of-the-art models still struggle with our benchmark, only achieving 36% accuracy when evaluated on all modalities (text, image, audio, and video), highlighting the importance of enhancing capabilities in underspecified scenarios.

💡 Research Summary

**

The paper introduces DETOUR, a novel interactive benchmark designed to evaluate dual‑agent search and reasoning capabilities in tip‑of‑the‑tongue (ToT) scenarios. Traditional ToT benchmarks focus on single‑turn queries, which fail to capture the multi‑turn clarification process that humans naturally employ when trying to recall an entity. DETOUR addresses this gap by modeling a conversation between two agents: a Primary Agent, which is the system under test, and a Memory Agent, which holds a “memory file” containing abstract cues (images, audio, video, or text) about the target entity but never the answer itself. The Primary Agent can call two tools—web search and a memory‑query tool—to iteratively ask clarification questions and retrieve information, mimicking a realistic multi‑turn search interaction.

The dataset consists of 1,011 carefully curated prompts spanning eight domains (film, music, history, science, technology, sports, culture, everyday life). Input modalities are diverse: 25 % text‑only, 37 % image, 19 % audio, and 19 % video. Memory files are overwhelmingly visual (96 % images), with a small proportion of audio and video, ensuring that multimodal reasoning is required. Professional annotators constructed each instance by recalling a vague memory, creating the underspecified query, gathering the appropriate media files, and writing a “thought chain” that demonstrates how a competent agent would solve the problem using both tools. Quality control included a second annotator verification, a 30‑minute time‑budget per query, and a GPT‑5 sanity‑check that forced annotators to revise any prompt that could be solved without the Memory Agent.

Evaluation uses a consistent prompting scheme for the Primary Agent (derived from Sky et al., 2025) and a fixed Memory Agent implemented with Gemini‑2.5‑Pro. The Memory Agent is constrained to answer solely from its memory file; a prompt‑optimization loop (using DSPy’s MIPR Ov2) raised the grounding rate from 83.1 % to 97.5 %. Primary Agent answers are judged by GPT‑4o (LLM‑as‑judge) for correctness against the human‑authored ground truth.

Experiments cover a range of tool‑calling capable models: GPT‑5, Gemini‑2.5‑Pro, Claude‑4.5, Llama‑4‑Scout, Llama‑4‑Maverick, Qwen3‑Next‑80B‑A3B‑Thinking, and Kimi‑K2‑Thinking. Results are reported for text‑only, text + image, and full multimodal (text + image + audio + video) settings. GPT‑5 achieves the highest text‑only accuracy (66.9 %) but drops to 36 % when all modalities are present. Other models stay below 70 % even in the easiest setting, and performance degrades markedly when images are added, highlighting the added reasoning burden of visual content. A detailed analysis of memory‑agent query counts shows a negative correlation between the number of queries and accuracy (e.g., GPT‑5: –0.336 Spearman ρ), indicating that excessive or irrelevant clarification attempts hurt performance.

Error analysis reveals two dominant failure modes: (1) agents frequently ask redundant or irrelevant questions to the Memory Agent, leading to “question fatigue” and wasted interaction steps; (2) agents rely heavily on web search and under‑utilize the multimodal cues present in the memory files, especially when those cues are non‑textual. Sample interaction logs (included in the appendix) illustrate how Claude‑4.5 repeatedly asks “Who are the actors?” despite receiving an image that contains no facial details, eventually aborting without a correct answer.

The authors discuss mitigation strategies: improving prompt design to encourage more targeted memory queries, employing reinforcement‑learning‑based policies that reward efficient clarification, and enhancing multimodal encoders to better extract semantic information from images, audio, and video. They also propose future work on human‑in‑the‑loop evaluation of clarification question quality, scaling DETOUR to larger domains, and integrating more sophisticated tool‑use (e.g., code execution, database lookup).

In conclusion, DETOUR provides the first large‑scale, multimodal, multi‑turn benchmark for assessing how well LLM‑based agents can handle ambiguous, under‑specified queries through interactive clarification. The stark performance gap—state‑of‑the‑art models achieving only ~36 % accuracy in the full setting—highlights the urgent need for research on uncertainty detection, high‑quality question generation, and robust multimodal reasoning. DETOUR thus serves as a valuable testbed for the next generation of search agents that must operate effectively in realistic conversational environments.

Comments & Academic Discussion

Loading comments...

Leave a Comment