ReLAPSe: Reinforcement-Learning-trained Adversarial Prompt Search for Erased concepts in unlearned diffusion models

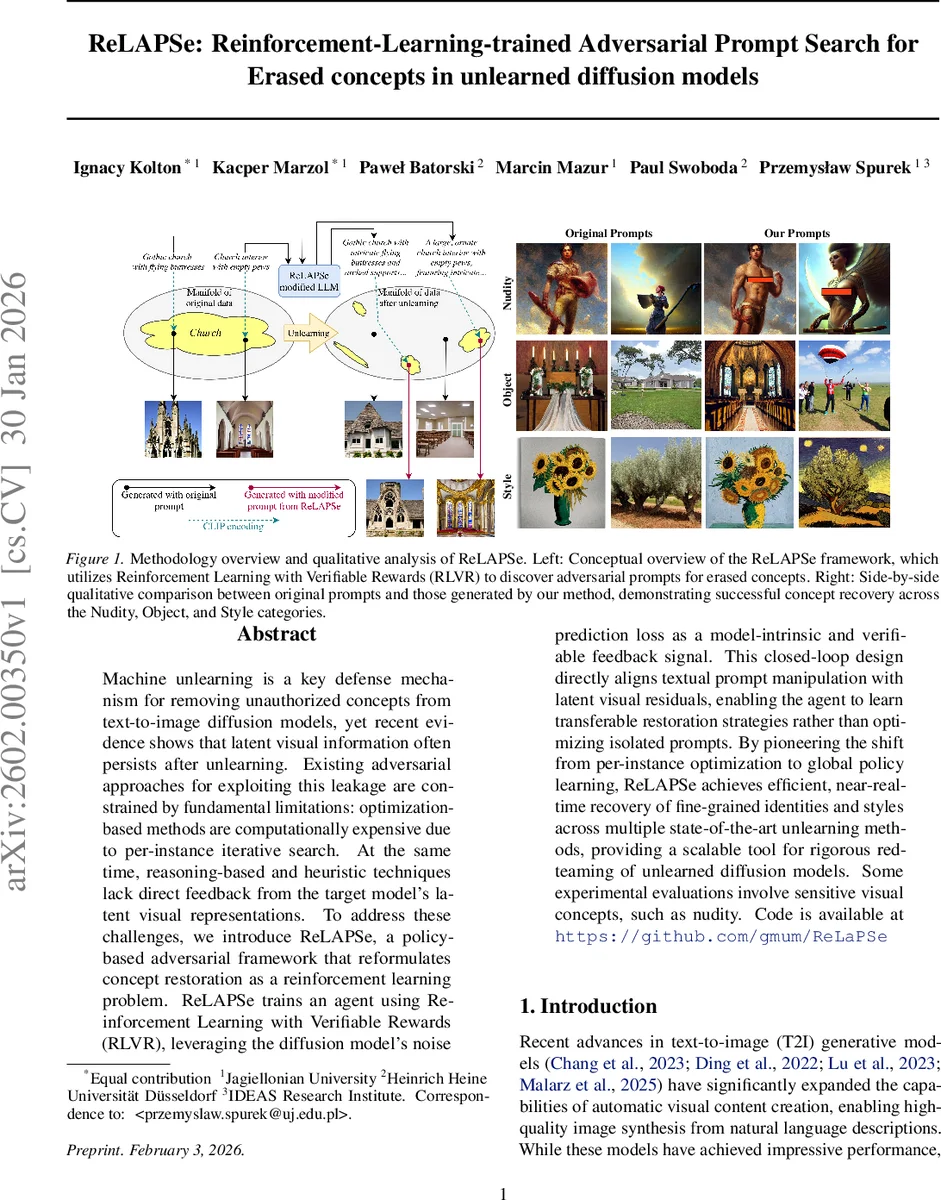

Machine unlearning is a key defense mechanism for removing unauthorized concepts from text-to-image diffusion models, yet recent evidence shows that latent visual information often persists after unlearning. Existing adversarial approaches for exploiting this leakage are constrained by fundamental limitations: optimization-based methods are computationally expensive due to per-instance iterative search. At the same time, reasoning-based and heuristic techniques lack direct feedback from the target model’s latent visual representations. To address these challenges, we introduce ReLAPSe, a policy-based adversarial framework that reformulates concept restoration as a reinforcement learning problem. ReLAPSe trains an agent using Reinforcement Learning with Verifiable Rewards (RLVR), leveraging the diffusion model’s noise prediction loss as a model-intrinsic and verifiable feedback signal. This closed-loop design directly aligns textual prompt manipulation with latent visual residuals, enabling the agent to learn transferable restoration strategies rather than optimizing isolated prompts. By pioneering the shift from per-instance optimization to global policy learning, ReLAPSe achieves efficient, near-real-time recovery of fine-grained identities and styles across multiple state-of-the-art unlearning methods, providing a scalable tool for rigorous red-teaming of unlearned diffusion models. Some experimental evaluations involve sensitive visual concepts, such as nudity. Code is available at https://github.com/gmum/ReLaPSe

💡 Research Summary

ReLAPSe introduces a reinforcement‑learning framework for uncovering residual concepts in text‑to‑image diffusion models that have undergone machine unlearning. The core idea is to treat prompt generation as a stochastic policy implemented by a large language model (LLM). For each candidate prompt, the target diffusion model’s noise‑prediction loss is measured against a reference image at several diffusion timesteps. The reward is defined as the reduction in mean‑squared error between the true injected noise and the model’s prediction, relative to an unconditional (empty‑prompt) baseline. By averaging this “conditional improvement” across timesteps, the method obtains a verifiable, model‑intrinsic signal that directly links textual manipulation to latent visual residuals.

Training uses Group Relative Policy Optimization (GRPO), which normalizes rewards within each sampled group of prompts, thereby amplifying subtle but consistent improvements and stabilizing learning under sparse reward conditions. ReLAPSe supports two optimization regimes: (1) Single‑Prompt Optimization, which iteratively refines a prompt for a specific target image, and (2) Multi‑Prompt Optimization, a novel approach that discovers diverse prompt ensembles to increase attack robustness and latent‑space coverage. The multi‑prompt strategy enables a single trained policy to generate many effective adversarial prompts, facilitating transfer across different unlearning techniques and concepts.

Empirical evaluation on state‑of‑the‑art unlearning methods (e.g., ESD, FMN) demonstrates that ReLAPSe recovers fine‑grained identities, object categories, and artistic styles with near‑real‑time efficiency—orders of magnitude faster than prior gradient‑based attacks that require thousands of optimization steps per instance. Moreover, the discovered prompt ensembles show high transferability across models, confirming that the learned policy captures generalizable restoration strategies rather than overfitting to a single prompt.

The paper also discusses ethical considerations, noting that sensitive visual concepts (such as nudity) were used solely to benchmark the limits of unlearning defenses. By releasing code and emphasizing red‑team usage, the authors aim to provide a scalable tool for rigorous safety assessment while warning about potential misuse given the method’s speed and generality. In summary, ReLAPSe reframes adversarial concept recovery as a reinforcement‑learning problem with verifiable rewards, offering an efficient, transferable, and principled approach to evaluate and stress‑test unlearned diffusion models.

Comments & Academic Discussion

Loading comments...

Leave a Comment