When RAG Hurts: Diagnosing and Mitigating Attention Distraction in Retrieval-Augmented LVLMs

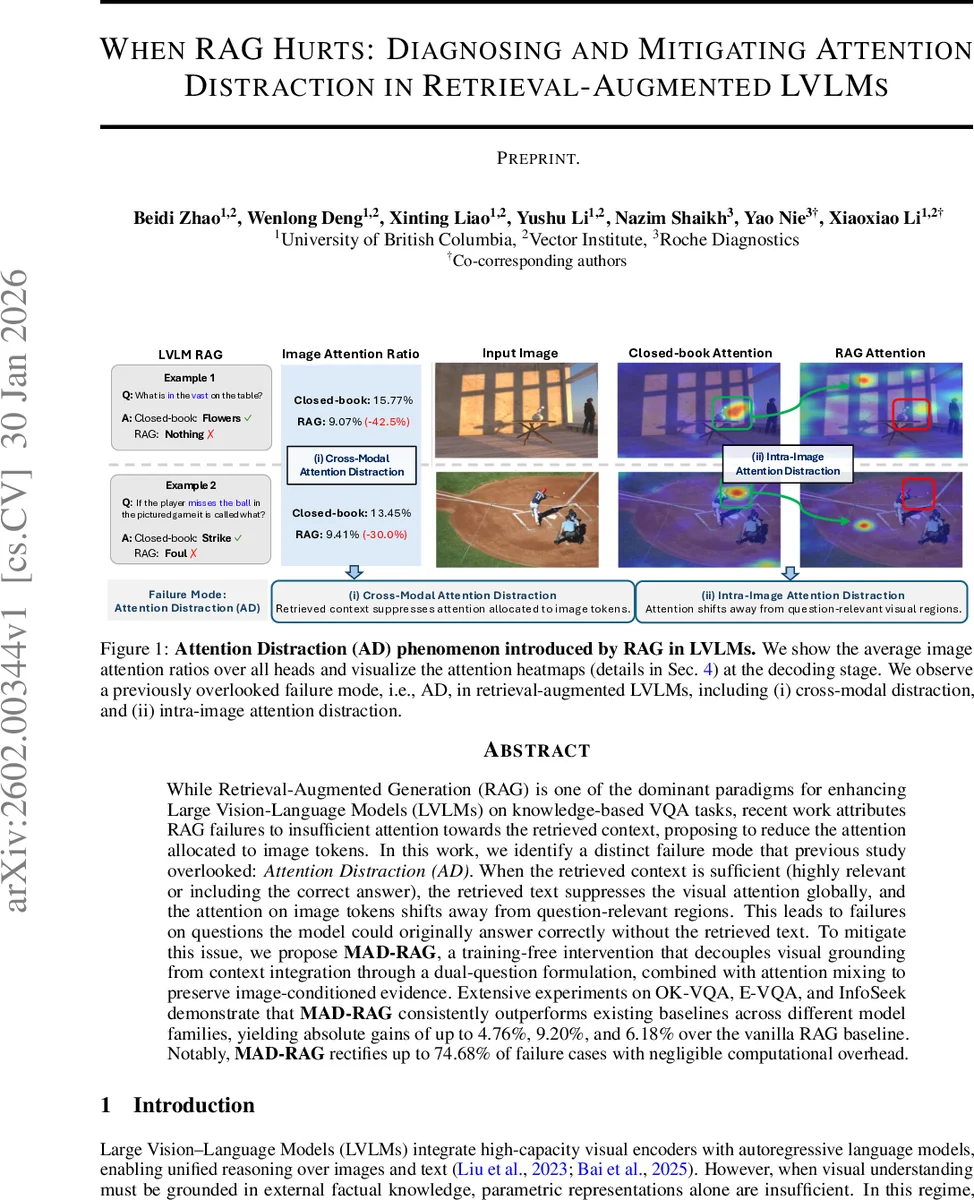

While Retrieval-Augmented Generation (RAG) is one of the dominant paradigms for enhancing Large Vision-Language Models (LVLMs) on knowledge-based VQA tasks, recent work attributes RAG failures to insufficient attention towards the retrieved context, proposing to reduce the attention allocated to image tokens. In this work, we identify a distinct failure mode that previous study overlooked: Attention Distraction (AD). When the retrieved context is sufficient (highly relevant or including the correct answer), the retrieved text suppresses the visual attention globally, and the attention on image tokens shifts away from question-relevant regions. This leads to failures on questions the model could originally answer correctly without the retrieved text. To mitigate this issue, we propose MAD-RAG, a training-free intervention that decouples visual grounding from context integration through a dual-question formulation, combined with attention mixing to preserve image-conditioned evidence. Extensive experiments on OK-VQA, E-VQA, and InfoSeek demonstrate that MAD-RAG consistently outperforms existing baselines across different model families, yielding absolute gains of up to 4.76%, 9.20%, and 6.18% over the vanilla RAG baseline. Notably, MAD-RAG rectifies up to 74.68% of failure cases with negligible computational overhead.

💡 Research Summary

**

The paper investigates a previously overlooked failure mode of Retrieval‑Augmented Generation (RAG) in large vision‑language models (LVLMs) called Attention Distraction (AD). While prior work (e.g., ALF‑AR) argued that RAG under‑utilizes retrieved text because models attend too much to image tokens, this study shows the opposite: when high‑quality, answer‑containing text is added, the model’s attention to visual inputs is globally suppressed and, more critically, the spatial focus on the image shifts away from question‑relevant regions. The authors decompose AD into two phenomena:

- Cross‑modal attention distraction – the proportion of attention allocated to image tokens drops by 12‑41 % across heads, while textual tokens dominate the attention distribution.

- Intra‑image attention distraction – visual attention heatmaps reveal a migration from salient, question‑related patches to background or semantically irrelevant areas.

Importantly, this effect occurs even when the retrieved passage is perfectly relevant, indicating that the degradation stems from how the multimodal decoder integrates long textual contexts, not from retrieval quality.

To counter AD, the authors propose MAD‑RAG (Mitigating Attention Distraction in Retrieval‑Augmented Generation), a training‑free, inference‑time intervention. MAD‑RAG introduces a dual‑question format: the original question is duplicated into two token groups—(Q_I) placed immediately after the image tokens and (Q_C) placed after the retrieved documents. The final input sequence becomes (

Comments & Academic Discussion

Loading comments...

Leave a Comment