Faithful-Patchscopes: Understanding and Mitigating Model Bias in Hidden Representations Explanation of Large Language Models

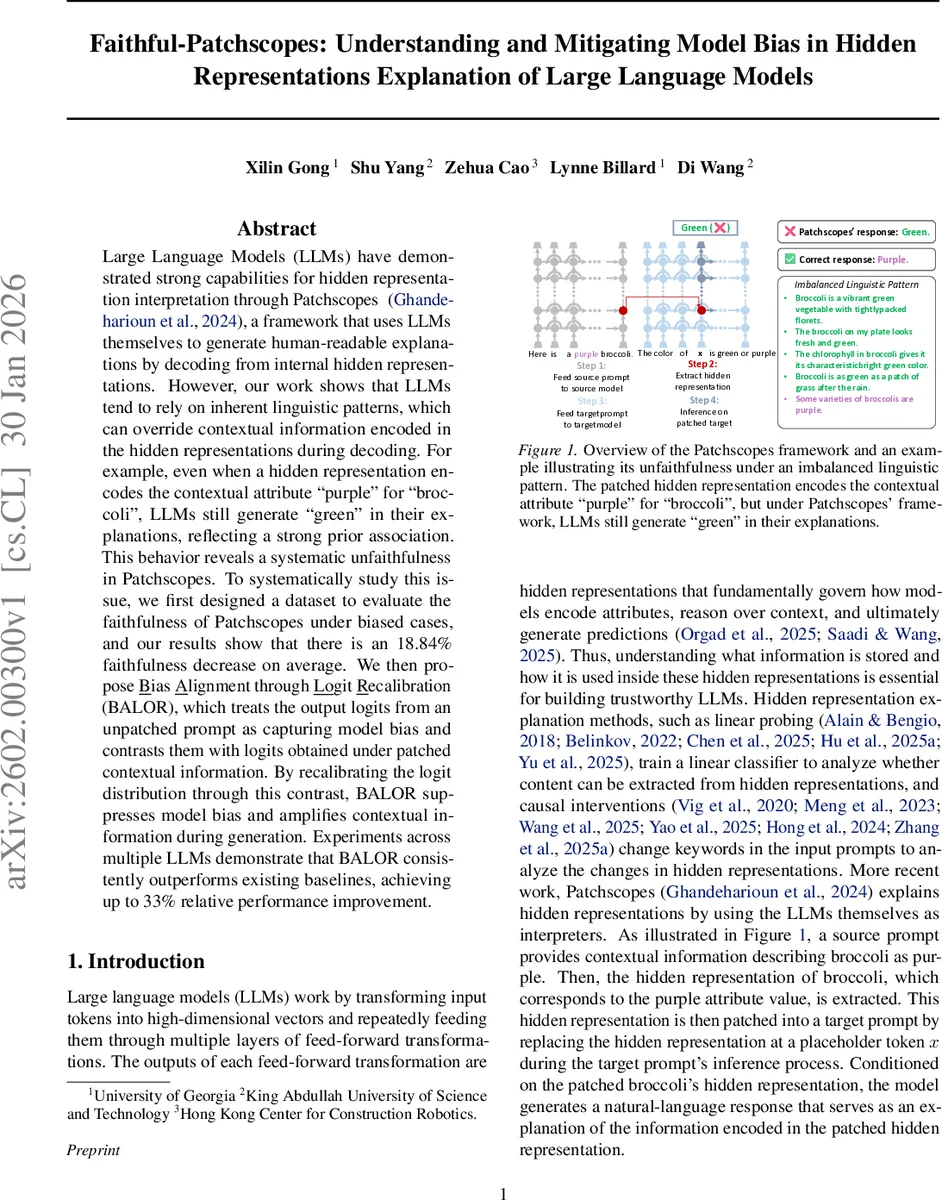

Large Language Models (LLMs) have demonstrated strong capabilities for hidden representation interpretation through Patchscopes, a framework that uses LLMs themselves to generate human-readable explanations by decoding from internal hidden representations. However, our work shows that LLMs tend to rely on inherent linguistic patterns, which can override contextual information encoded in the hidden representations during decoding. For example, even when a hidden representation encodes the contextual attribute “purple” for “broccoli”, LLMs still generate “green” in their explanations, reflecting a strong prior association. This behavior reveals a systematic unfaithfulness in Patchscopes. To systematically study this issue, we first designed a dataset to evaluate the faithfulness of Patchscopes under biased cases, and our results show that there is an 18.84% faithfulness decrease on average. We then propose Bias Alignment through Logit Recalibration (BALOR), which treats the output logits from an unpatched prompt as capturing model bias and contrasts them with logits obtained under patched contextual information. By recalibrating the logit distribution through this contrast, BALOR suppresses model bias and amplifies contextual information during generation. Experiments across multiple LLMs demonstrate that BALOR consistently outperforms existing baselines, achieving up to 33% relative performance improvement.

💡 Research Summary

Patchscopes is a recently proposed framework that interprets hidden representations of large language models (LLMs) by “patching” a source hidden vector into a target prompt and letting the model generate a natural‑language explanation. While this approach promises faithful read‑outs of what the model has encoded, the authors demonstrate a systematic failure: the generated explanations are heavily biased by the model’s learned linguistic priors rather than the actual content of the hidden vector. A concrete example is given with the noun “broccoli”. Even when the hidden representation encodes the color “purple”, the model almost always answers “green”, reflecting the high‑frequency association of broccoli with green in the training data.

To quantify this problem, the authors construct a controlled dataset covering four attribute‑prediction tasks (color, gender, culture, age). Each task contains a biased subset—where the model shows a strong prior preference for one attribute—and a non‑biased subset. They evaluate faithfulness using a “Show Rate” metric, which measures the proportion of generated answers that match the contextual attribute encoded in the hidden vector. Results show a substantial drop in Show Rate for biased cases, ranging from about 11 % to 28 % relative to non‑biased cases, confirming that linguistic bias directly harms explanation fidelity.

The paper then investigates where in the network this bias emerges. Two layer‑wise diagnostics are introduced: Logit Difference (LD), which measures the gap between logits for the contextual versus prior attribute at each layer, and Gradient Similarity Alignment (GSA), which computes the cosine similarity between the output gradient and a linear probe direction for the attribute. Both metrics identify intermediate layers as “bias‑sensitive” – layers where the model begins to encode the prior and where downstream decisions become increasingly dominated by it.

Building on this analysis, the authors propose Bias Alignment through Logit Recalibration (BALOR). BALOR operates at inference time: it first runs the unpatched (original) prompt to obtain a baseline logit distribution, then runs the patched prompt to obtain a second distribution. By subtracting the baseline logits from the patched logits, BALOR effectively removes the component of the prediction that stems from the model’s prior bias, amplifying the signal coming from the patched hidden representation. This logit‑level correction is simple, training‑free, and can be applied to any LLM without altering its parameters.

Extensive experiments on four modern LLMs (Llama‑2‑7B‑Chat, Llama‑3.2‑1B‑Instruct, Llama‑3‑8B‑Instruct, Qwen‑3‑4B‑Instruct‑2507) show that BALOR consistently improves explanation faithfulness. Relative gains reach up to 33 % over the vanilla Patchscopes baseline, and the method remains robust across different decoding temperatures and hyper‑parameter settings. The authors also present an efficient O(n) procedure for locating bias‑sensitive layers, avoiding the quadratic cost of exhaustive search.

In summary, the paper makes three main contributions: (1) a diagnostic dataset and analysis that reveal how linguistic frequency biases degrade hidden‑representation explanations; (2) the BALOR logit‑recalibration technique that mitigates this bias at inference time; and (3) comprehensive empirical validation demonstrating substantial, model‑agnostic improvements in explanation fidelity. The work highlights the importance of accounting for model bias when interpreting internal representations and opens avenues for extending logit‑level debiasing to other types of attributes such as gender or cultural stereotypes.

Comments & Academic Discussion

Loading comments...

Leave a Comment