Divide and Conquer: Multimodal Video Deepfake Detection via Cross-Modal Fusion and Localization

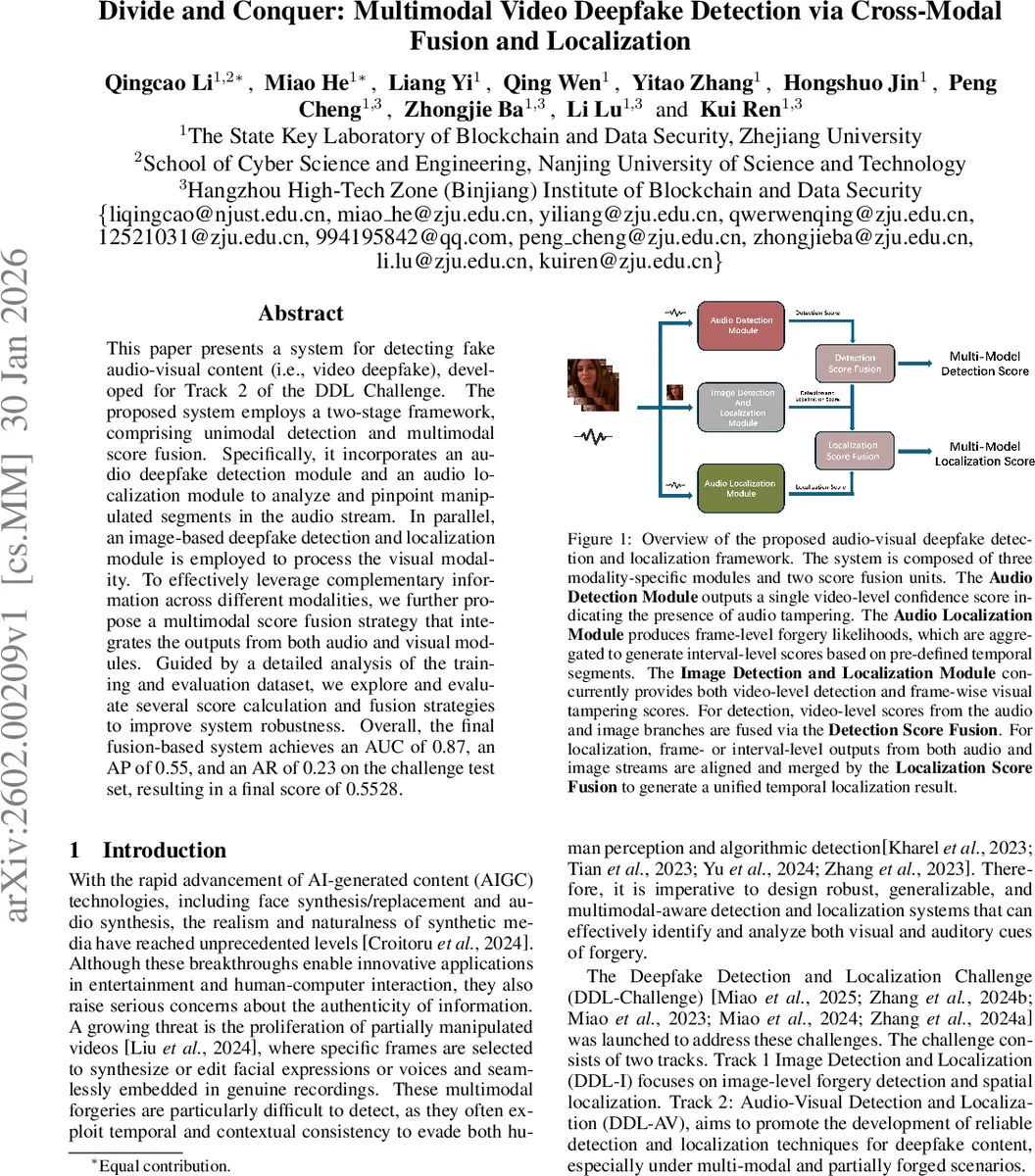

This paper presents a system for detecting fake audio-visual content (i.e., video deepfake), developed for Track 2 of the DDL Challenge. The proposed system employs a two-stage framework, comprising unimodal detection and multimodal score fusion. Specifically, it incorporates an audio deepfake detection module and an audio localization module to analyze and pinpoint manipulated segments in the audio stream. In parallel, an image-based deepfake detection and localization module is employed to process the visual modality. To effectively leverage complementary information across different modalities, we further propose a multimodal score fusion strategy that integrates the outputs from both audio and visual modules. Guided by a detailed analysis of the training and evaluation dataset, we explore and evaluate several score calculation and fusion strategies to improve system robustness. Overall, the final fusion-based system achieves an AUC of 0.87, an AP of 0.55, and an AR of 0.23 on the challenge test set, resulting in a final score of 0.5528.

💡 Research Summary

The paper presents a two‑stage multimodal deepfake detection and localization system designed for Track 2 of the Deepfake Detection and Localization Challenge (DDL‑Challenge). In the first stage, separate unimodal modules process audio and visual streams. The audio detection component builds on the WAV2VEC2‑VEC2.0‑AASIST architecture: XLS‑R extracts multilingual acoustic features, and an AASIST graph neural network classifies 2‑second audio segments. A dynamic labeling scheme re‑assigns segment labels according to overlap with annotated forged intervals, allowing the model to learn partial forgeries. To counter class imbalance and noisy conditions, the loss is re‑weighted and a suite of augmentations (Rawboost, SpecAugment, MUSAN‑based noise, music, and speech) is applied to half of the training data. During inference, a sliding window (2 s length, 1 s stride) generates segment scores that are aggregated by max‑pooling to produce a video‑level confidence. For audio localization, the authors adopt a Boundary‑aware Attention Mechanism (BAM) that uses the self‑supervised WaveLM‑Large front‑end, a Boundary Enhancement Module (BEM) and a Boundary Frame‑wise Attention Module (BFAM). BEM learns intra‑ and inter‑frame boundary features, while BFAM uses a boundary adjacency matrix to suppress information flow across predicted boundaries, yielding frame‑wise forgery probabilities and explicit start/end predictions. The visual branch employs a standard image deepfake detection and localization network (referencing Chollet 2017) to output both video‑level scores and frame‑wise spatial tampering likelihoods. In the second stage, the system fuses the unimodal outputs. Detection Score Fusion combines the audio and visual video‑level confidences (via averaging or weighted averaging) into a single deepfake likelihood. Localization Score Fusion aligns the temporal predictions from audio and visual streams, merges them, and produces unified intervals indicating manipulated segments. This hierarchical cross‑modal fusion exploits complementary cues while preserving modality‑specific anomalies, which is crucial for partially forged or temporally misaligned attacks. The authors also conduct a detailed dataset analysis: the training/validation set contains 325 k videos, with only 34 % authentic and the majority of forged segments lasting ≤0.5 s (57 % audio, 46 % video). Various interference factors such as background noise, blurring, and multi‑speaker speech are prevalent, motivating robust augmentation and short‑segment detection strategies. Experimental results on the challenge test set show an AUC of 0.87 for detection, an Average Precision of 0.55 and an Average Recall of 0.23 for localization, yielding a final composite score of 0.5528. While detection performance is strong, the relatively modest localization scores indicate room for improvement, suggesting future work on finer temporal alignment, multi‑scale attention, and specialized modeling of asynchronous multimodal forgeries.

Comments & Academic Discussion

Loading comments...

Leave a Comment