Intra-Class Subdivision for Pixel Contrastive Learning: Application to Semi-supervised Cardiac Image Segmentation

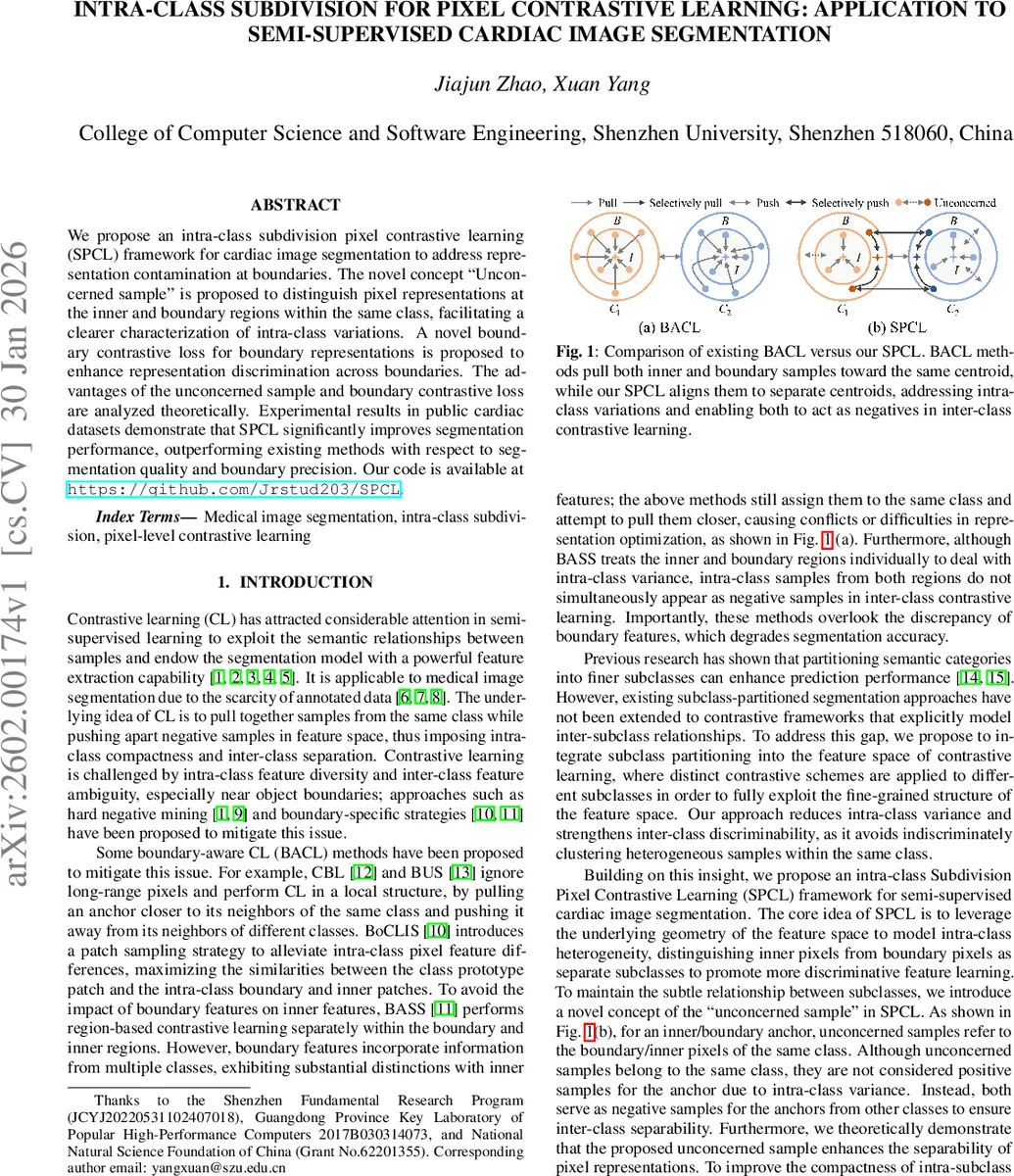

We propose an intra-class subdivision pixel contrastive learning (SPCL) framework for cardiac image segmentation to address representation contamination at boundaries. The novel concept of the “unconcerned sample” is proposed to distinguish pixel representations in the inner and boundary regions of the same class, facilitating a clearer characterization of intra-class variations. A novel boundary contrastive loss for boundary representations is proposed to enhance representation discrimination across boundaries. The advantages of the unconcerned sample and the boundary contrastive loss are analyzed theoretically. Experimental results on public cardiac datasets demonstrate that SPCL significantly improves segmentation performance, outperforming existing methods in both segmentation quality and boundary precision. Our code is available at https://github.com/Jrstud203/SPCL.

💡 Research Summary

The paper introduces a semi-supervised segmentation framework called intra-class Subdivision Pixel Contrastive Learning (SPCL), aimed at improving cardiac MRI segmentation, particularly at object boundaries where conventional pixel contrastive learning suffers from representation contamination. The authors first partition each semantic class into two subclasses, inner pixels and boundary pixels: a Canny edge detector produces a binary boundary mask (B_c) for each class c, and the inner mask (I_c) is defined as its complement. With C semantic classes, this yields 2C subclasses.
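The inner/boundary split can be illustrated with a minimal sketch. To stay NumPy-only, it swaps in a simple 4-neighbour erosion for the Canny detector described above, so it is a stand-in rather than the authors' procedure. The function name `split_inner_boundary` and the choice to take the complement within each class region are assumptions.

```python
import numpy as np

def split_inner_boundary(label_map, num_classes):
    """Return dicts of per-class boundary masks (B_c) and inner masks (I_c).

    Stand-in for the Canny step: a pixel of class c counts as boundary if
    any of its 4-connected neighbours belongs to a different class.
    """
    boundary, inner = {}, {}
    for c in range(num_classes):
        mask = label_map == c
        # Edge padding so the image border itself is not treated as a boundary.
        padded = np.pad(mask, 1, mode="edge")
        # A pixel survives erosion only if all four neighbours share its class.
        eroded = (
            padded[1:-1, 1:-1]
            & padded[:-2, 1:-1] & padded[2:, 1:-1]
            & padded[1:-1, :-2] & padded[1:-1, 2:]
        )
        inner[c] = eroded
        boundary[c] = mask & ~eroded
    return boundary, inner

# Toy label map: a 3x3 block of class 1 on a class-0 background.
label_map = np.zeros((5, 5), dtype=int)
label_map[1:4, 1:4] = 1
boundary, inner = split_inner_boundary(label_map, num_classes=2)
```

Each class region is covered exactly once: every pixel of class c falls in either `boundary[c]` or `inner[c]`, which is what gives 2C subclasses.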

A central concept is the “unconcerned sample.” For an inner anchor, boundary pixels of the same class are treated as unconcerned samples, and vice versa. Such samples are neither positives nor negatives for anchors of the same class, but they still act as negatives for anchors of other classes. This design reduces intra-class variance while preserving inter-class separability.
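The role assignment can be written as a small lookup. This is an illustrative sketch: the function `sample_role` and the `(class_id, region)` tuple encoding are hypothetical, and treating same-subclass samples as positives is an assumption based on the usual pixel contrastive setup.

```python
def sample_role(anchor, sample):
    """Classify `sample` relative to `anchor`.

    Both arguments are (class_id, region) tuples, with region being
    either "inner" or "boundary".
    """
    anchor_class, anchor_region = anchor
    sample_class, sample_region = sample
    if anchor_class != sample_class:
        # Any pixel of another class is a negative, inner or boundary alike.
        return "negative"
    if anchor_region == sample_region:
        # Same class and same subclass (assumed positive).
        return "positive"
    # Same class but the other subclass: neither pulled closer nor pushed away.
    return "unconcerned"

print(sample_role((1, "inner"), (1, "boundary")))  # unconcerned
print(sample_role((1, "inner"), (2, "boundary")))  # negative
```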

Training follows a teacher‑student paradigm. The teacher network, updated by exponential moving average (β = 0.999) of the student, provides pseudo‑labels for unlabeled images. Anchors are selected as hard examples: pixels where the student’s confidence is low (≤ γ_s = 0.75) but the teacher’s confidence is high (≥ γ_t = 0.95). This hard‑example mining encourages the model to focus on ambiguous regions. To alleviate the scarcity of negative samples for certain cardiac structures, the method maintains subclass‑wise memory banks Q^I_c and Q^B_c that store feature centroids computed over a FIFO queue.
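The three mechanisms in this paragraph can be sketched as follows. The thresholds and β are the values quoted above; everything else is an assumption made for illustration: parameters are held as a dict of NumPy arrays, confidence is taken as the maximum softmax probability, and the hypothetical `SubclassBank` reads "centroids computed over a FIFO queue" as the mean of the queued feature vectors.

```python
from collections import deque
import numpy as np

BETA, GAMMA_S, GAMMA_T = 0.999, 0.75, 0.95  # values quoted in the summary

def ema_update(teacher, student, beta=BETA):
    """teacher <- beta * teacher + (1 - beta) * student, per parameter array."""
    return {k: beta * teacher[k] + (1.0 - beta) * student[k] for k in teacher}

def select_hard_anchors(student_probs, teacher_probs):
    """Boolean (H, W) mask of pixels where the student is unsure but the teacher is confident.

    Inputs have shape (C, H, W) and hold softmax probabilities.
    Confidence = max probability over classes (an assumption).
    """
    student_conf = student_probs.max(axis=0)
    teacher_conf = teacher_probs.max(axis=0)
    return (student_conf <= GAMMA_S) & (teacher_conf >= GAMMA_T)

class SubclassBank:
    """FIFO queue of feature vectors for one subclass (e.g. Q^I_c or Q^B_c)."""

    def __init__(self, max_size):
        # deque with maxlen drops the oldest entry automatically when full.
        self.queue = deque(maxlen=max_size)

    def push(self, feature):
        self.queue.append(np.asarray(feature, dtype=float))

    def centroid(self):
        # Mean of the features currently held in the queue.
        return np.mean(np.stack(list(self.queue)), axis=0)
```

In a full pipeline one bank would be kept per subclass, so a class with few pixels in the current batch can still draw negatives from earlier iterations.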

Two contrastive losses are defined. Inner Contrastive Learning (ICL) uses the standard InfoNCE formulation but explicitly excludes unconcerned samples from the negative set. The authors show analytically that introducing the unconcerned-sample mechanism tightens the lower bound of the InfoNCE loss, leading to more compact inner features and clearer separation from other classes. Boundary Contrastive Learning (BCL) adopts a ReLU-based margin loss that forces the minimum similarity between a boundary anchor and its positive samples to exceed its maximum similarity with negative samples. This loss is less “greedy” than InfoNCE, which makes it better suited to the small and heterogeneous set of boundary pixels.
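A minimal per-anchor sketch of the two losses, working directly on precomputed similarities. It is not taken from the authors' code: `icl_loss` uses a common pixel-wise InfoNCE form averaged over positives, the default `tau=0.1` is a placeholder (the summary gives no temperature value), and the `margin` argument of `bcl_loss` is a hypothetical extra that defaults to zero.

```python
import numpy as np

def icl_loss(pos_sims, neg_sims, tau=0.1):
    """InfoNCE for one anchor, averaged over its positives.

    `neg_sims` must already exclude unconcerned samples, i.e. it only
    holds similarities to samples of other classes.
    """
    pos = np.exp(np.asarray(pos_sims, dtype=float) / tau)
    neg_sum = np.exp(np.asarray(neg_sims, dtype=float) / tau).sum()
    return float(np.mean(-np.log(pos / (pos + neg_sum))))

def bcl_loss(pos_sims, neg_sims, margin=0.0):
    """ReLU margin loss for one boundary anchor.

    Zero once the smallest positive similarity exceeds the largest
    negative similarity by at least `margin`.
    """
    gap = float(np.max(neg_sims)) - float(np.min(pos_sims)) + margin
    return max(0.0, gap)

# Positives already dominate the negatives, so the boundary loss vanishes.
print(bcl_loss([0.8, 0.6], [0.5, 0.1]))  # 0.0
```

Because `bcl_loss` only looks at the worst positive and the hardest negative, it stops producing gradient once that single ordering constraint holds, whereas InfoNCE keeps pushing every pair apart.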

The overall objective combines supervised cross-entropy and Dice losses (L_sup), an unsupervised Dice loss on confident pseudo-labels (L_unsup), and the SPCL loss L_spcl = (1-α)L_icl + αL_bcl. The loss weights are λ_u = 0.3 and λ_c = 0.1, and α increases linearly from 0 to 0.1 over training.
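The combination can be sketched as below. The summary does not spell out the full formula, so pairing λ_u with L_unsup and λ_c with L_spcl, and ramping α per training step, are assumptions; the function names are hypothetical.

```python
LAMBDA_U, LAMBDA_C, ALPHA_MAX = 0.3, 0.1, 0.1  # values quoted in the summary

def alpha_at(step, total_steps, alpha_max=ALPHA_MAX):
    """Linear ramp from 0 at step 0 to alpha_max at the final step."""
    return alpha_max * min(step, total_steps) / total_steps

def total_loss(l_sup, l_unsup, l_icl, l_bcl, step, total_steps):
    """Assumed form: L = L_sup + lambda_u * L_unsup + lambda_c * L_spcl."""
    alpha = alpha_at(step, total_steps)
    l_spcl = (1.0 - alpha) * l_icl + alpha * l_bcl
    return l_sup + LAMBDA_U * l_unsup + LAMBDA_C * l_spcl
```

With α capped at 0.1, the inner loss always carries at least 90% of the contrastive term, and the boundary loss is phased in gradually as pseudo-labels become more reliable.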

Experiments were conducted on three public cardiac datasets: SCD, ACDC, and M&Ms. The semi-supervised settings used only 10%/20% (SCD), 5%/10% (ACDC), and 2.5%/5% (M&Ms) of the training scans as labeled data. SPCL consistently outperformed recent semi-supervised methods (ADMT, ABD), contrastive-learning baselines (UGPCL, DGCL), and boundary-aware contrastive approaches (BUS, BASS) on Dice, Jaccard, and 95th-percentile Hausdorff distance (HD95). Notably, SPCL achieved the lowest HD95 values, indicating superior boundary precision. t-SNE visualizations showed tighter intra-class clusters and clearer inter-class separation than competing methods.
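For readers unfamiliar with the overlap metrics, the standard definitions of Dice and Jaccard for binary masks can be sketched as follows. This is a generic illustration, not the paper's evaluation code; returning 1.0 when both masks are empty is a convention chosen here, and HD95 is omitted because it needs a surface-distance computation.

```python
import numpy as np

def dice_and_jaccard(pred, target):
    """Dice = 2|A∩B| / (|A| + |B|), Jaccard = |A∩B| / |A∪B| for binary masks."""
    pred = np.asarray(pred, dtype=bool)
    target = np.asarray(target, dtype=bool)
    intersection = np.logical_and(pred, target).sum()
    union = np.logical_or(pred, target).sum()
    total = pred.sum() + target.sum()
    # Two empty masks are treated as a perfect match (a convention).
    dice = 2.0 * intersection / total if total else 1.0
    jaccard = intersection / union if union else 1.0
    return float(dice), float(jaccard)
```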

The authors acknowledge limitations: reliance on Canny edge detection may be sensitive to noise and resolution; hyper‑parameters such as memory‑bank size and temperature τ were not exhaustively studied; and the current implementation operates on 2‑D slices, leaving 3‑D volumetric extension as future work. Nonetheless, the subdivision of semantic classes into inner and boundary subclasses, together with the unconcerned‑sample mechanism, provides a compelling strategy for enhancing contrastive learning in medical image segmentation.
