VideoGPA: Distilling Geometry Priors for 3D-Consistent Video Generation

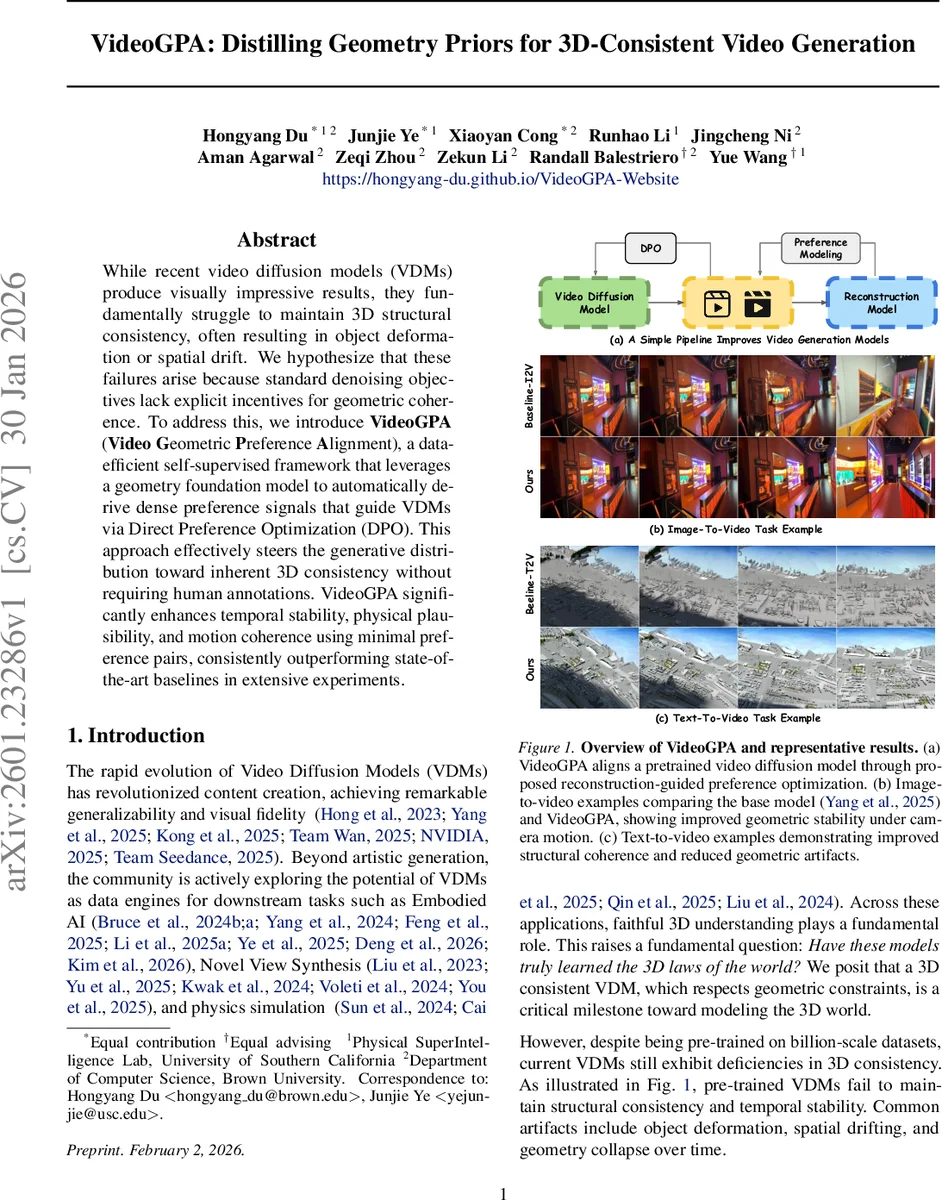

While recent video diffusion models (VDMs) produce visually impressive results, they fundamentally struggle to maintain 3D structural consistency, often resulting in object deformation or spatial drift. We hypothesize that these failures arise because standard denoising objectives lack explicit incentives for geometric coherence. To address this, we introduce VideoGPA (Video Geometric Preference Alignment), a data-efficient self-supervised framework that leverages a geometry foundation model to automatically derive dense preference signals that guide VDMs via Direct Preference Optimization (DPO). This approach effectively steers the generative distribution toward inherent 3D consistency without requiring human annotations. VideoGPA significantly enhances temporal stability, physical plausibility, and motion coherence using minimal preference pairs, consistently outperforming state-of-the-art baselines in extensive experiments.

💡 Research Summary

VideoGPA (Video Geometric Preference Alignment) tackles a fundamental shortcoming of current video diffusion models (VDMs): the inability to preserve 3‑dimensional structural consistency across frames. While VDMs excel at producing high‑fidelity, photorealistic video, their denoising objectives are purely pixel‑level and provide no explicit incentive for geometric coherence. Consequently, generated videos often exhibit object deformation, spatial drift, or geometry collapse when the virtual camera moves or objects rotate.

The authors hypothesize that the lack of a geometric regularizer is the root cause and propose a self‑supervised, data‑efficient framework that leverages a Geometry Foundation Model (GFM) as an automatic reward model. The pipeline consists of four stages. First, a pretrained GFM (e.g., DUST3R, MASt3R, or later multi‑view variants) processes each frame of a generated video to predict dense depth maps and camera poses (R, t). From these predictions a colored point cloud is assembled by back‑projecting each pixel into world coordinates using the intrinsic matrix K.

Second, the point cloud is re‑projected into each original view, producing a set of reconstructed frames ˆIₜ. The discrepancy between ˆIₜ and the original frames Iₜ is measured with a combination of Mean‑Squared Error (MSE) and Learned Perceptual Image Patch Similarity (LPIPS). This reconstruction loss, denoted E_Recon, serves as a proxy for 3‑D consistency: lower values indicate that a single 3‑D structure explains all views well, while higher values reveal violations of geometric coherence.

Third, for a given conditioning input (either an image prompt for I2V or a textual caption for T2V), the base VDM is sampled multiple times with different random seeds, yielding a set of videos that share semantic content but differ in geometry. The videos are ranked by their E_Recon scores, and pairs (x_w, x_l) are formed where the “winner” x_w has a significantly lower reconstruction error than the “loser” x_l. This creates a large collection of preference pairs without any human annotation.

Fourth, the preference pairs are fed into Direct Preference Optimization (DPO), a recent method that reframes preference learning as a log‑likelihood ratio maximization derived from the Bradley‑Terry model. In the context of v‑prediction diffusion models, the DPO loss reduces to a weighted difference between the velocity‑prediction errors of the winner and loser samples. The authors fine‑tune only a small LoRA adapter (≈1 % of total parameters), leaving the bulk of the pretrained VDM untouched. This lightweight adaptation preserves the original model’s visual quality while nudging the latent distribution toward the manifold of geometrically consistent videos.

Experiments cover two major scenarios. In the Image‑to‑Video (I2V) setting, the authors use initial frames from the DL3D V‑10K dataset as visual prompts and design structured camera‑motion primitives (pull‑back, roll, orbit, etc.) to provoke geometric failures. In the Text‑to‑Video (T2V) setting, they employ captions generated by CogVLM2‑Video, introducing broader semantic diversity. Across both settings, only ~2,500 preference pairs are required, and training consists of a few thousand LoRA updates.

Quantitative results are reported on a suite of metrics: 3‑D reconstruction error, Epipolar consistency, Multi‑View Consistency Score (MVCS), 3‑D Consistency Score (3DCS), as well as traditional image quality measures (PSNR, SSIM, LPIPS) and a human‑aligned VideoReward win‑rate. VideoGPA consistently achieves the lowest reconstruction errors while dramatically improving Epipolar and MVCS scores (e.g., Epipolar win‑rate rises from ~45 % for a supervised fine‑tune baseline to 74 % for VideoGPA in I2V). Perceptual quality remains on par or slightly better than the base model, and the VideoReward win‑rate climbs to 76 % in T2V, far surpassing both supervised fine‑tuning and prior Epipolar‑DPO approaches.

Ablation studies confirm that (1) the dense, self‑supervised preference signal is essential—random or noisy preferences degrade performance; (2) using only a few thousand LoRA parameters is sufficient; (3) the method is robust to the choice of GFM, though higher‑quality depth/pose estimators yield stronger signals.

The paper’s contributions are threefold: (i) introducing a fully self‑supervised preference generation pipeline that converts 3‑D reconstruction error into a learning signal; (ii) demonstrating that DPO can be seamlessly adapted to video diffusion models with v‑prediction; (iii) showing that minimal data and parameter updates can substantially improve geometric fidelity without sacrificing visual realism.

Limitations include dependence on the accuracy of the underlying GFM—if depth or pose estimates are noisy, the preference signal may be misleading. Dynamic scenes with non‑rigid motion (e.g., fluids, smoke) produce higher reconstruction errors that could be misinterpreted as geometric inconsistency. The current framework also focuses on relatively short clips (≈10 frames) and does not explicitly model long‑range temporal dependencies.

Future directions suggested by the authors involve (a) integrating multi‑modal GFMs that predict normals, illumination, or optical flow to provide richer geometric supervision; (b) designing reconstruction losses that are robust to non‑rigid motion; (c) scaling the preference generation pipeline to millions of pairs using distributed GFM inference, potentially enabling end‑to‑end training of VDMs with built‑in geometric regularizers.

In summary, VideoGPA presents a novel paradigm where a geometry foundation model acts as an automatic critic, turning dense 3‑D consistency assessments into preference pairs that steer a pretrained video diffusion model toward physically plausible, temporally stable outputs. The approach bridges the gap between 2‑D generative prowess and 3‑D world understanding, offering a practical and scalable path toward truly 3‑D‑consistent video generation.

Comments & Academic Discussion

Loading comments...

Leave a Comment