FOCUS: DLLMs Know How to Tame Their Compute Bound

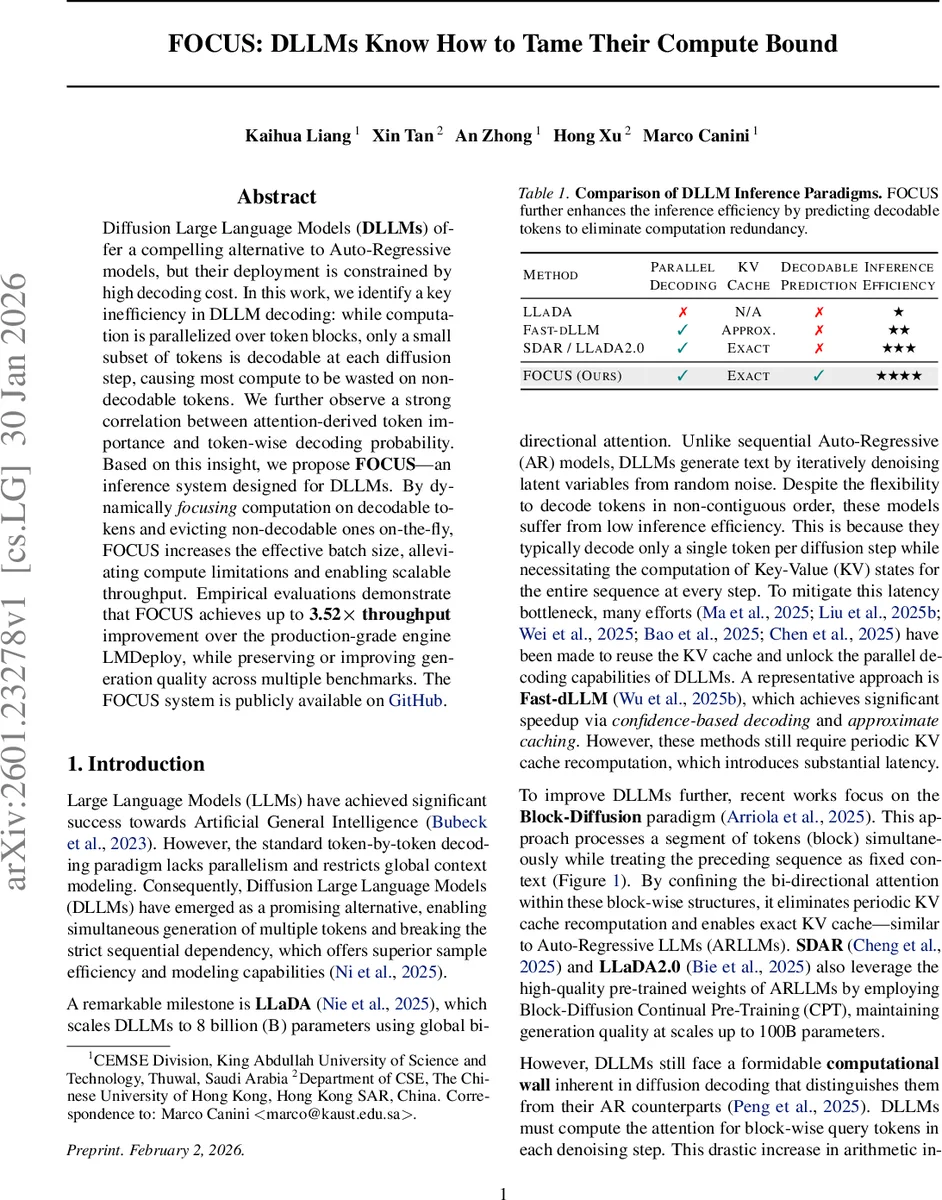

Diffusion Large Language Models (DLLMs) offer a compelling alternative to Auto-Regressive models, but their deployment is constrained by high decoding cost. In this work, we identify a key inefficiency in DLLM decoding: while computation is parallelized over token blocks, only a small subset of tokens is decodable at each diffusion step, causing most compute to be wasted on non-decodable tokens. We further observe a strong correlation between attention-derived token importance and token-wise decoding probability. Based on this insight, we propose FOCUS – an inference system designed for DLLMs. By dynamically focusing computation on decodable tokens and evicting non-decodable ones on-the-fly, FOCUS increases the effective batch size, alleviating compute limitations and enabling scalable throughput. Empirical evaluations demonstrate that FOCUS achieves up to 3.52$\times$ throughput improvement over the production-grade engine LMDeploy, while preserving or improving generation quality across multiple benchmarks. The FOCUS system is publicly available on GitHub: https://github.com/sands-lab/FOCUS.

💡 Research Summary

The paper addresses a fundamental inefficiency in diffusion‑based large language models (DLLMs) during inference. While DLLMs process entire blocks of tokens in parallel, only a small fraction (≈10 %) of those tokens are actually decoded at each diffusion step. Consequently, the model spends the majority of its FLOPs recomputing attention and KV caches for tokens that will not be emitted, turning the inference workload from memory‑bound (as in auto‑regressive LLMs) to compute‑bound.

The authors first investigate token‑wise attention patterns and discover that the change in attention importance between the first two layers (ΔI) is a strong predictor of whether a token will be decoded later. Tokens that quickly acquire high attention mass in layer 1 (relative to layer 0) tend to be decoded, whereas tokens whose importance remains low are almost never emitted. This “importance delta” provides a cheap, training‑free signal that can be computed on‑the‑fly.

Building on this insight, they propose FOCUS, an inference system that dynamically focuses computation on promising tokens and evicts the rest. FOCUS consists of two main components: (1) Dynamic Budgeting, which determines a per‑step retention budget K based on historical decoded‑token counts and a single hyper‑parameter α, ensuring that the system adapts to the varying number of decodable tokens across diffusion steps; (2) Delta Calculation and Token Eviction, which computes ΔI for each token after the first layer and discards those with low deltas, thereby removing them from all subsequent attention and feed‑forward operations. Because evicted tokens are excluded from KV cache updates, the FLOP reduction propagates through the entire remaining depth of the model.

Experiments are conducted on the SDAR‑8B‑Chat model with a block size of 32 and a confidence threshold of 0.9. Across four benchmarks (GSM8K, Math500, HumanEval, MBPP) the system eliminates roughly 65 %–80 % of tokens per step, cutting total FLOPs by about 70 %. Compared to the production‑grade engine LMDeploy, FOCUS achieves up to 3.52× higher throughput, especially at large batch sizes where conventional DLLM pipelines saturate the compute units. Importantly, generation quality is preserved or slightly improved, as measured by standard metrics (BLEU, ROUGE, exact match, etc.).

FOCUS requires only minimal changes to the existing DLLM inference pipeline and is released as open‑source software on GitHub, facilitating immediate adoption. The work not only provides a practical solution to the compute‑bound bottleneck of DLLM decoding but also uncovers a general principle: early‑layer attention dynamics can serve as reliable proxies for downstream decoding decisions. This opens new avenues for further research into token‑level pruning, adaptive diffusion schedules, and hybrid AR/DLLM systems that can combine the parallelism of diffusion with the efficiency of auto‑regressive decoding.

Comments & Academic Discussion

Loading comments...

Leave a Comment