UPA: Unsupervised Prompt Agent via Tree-Based Search and Selection

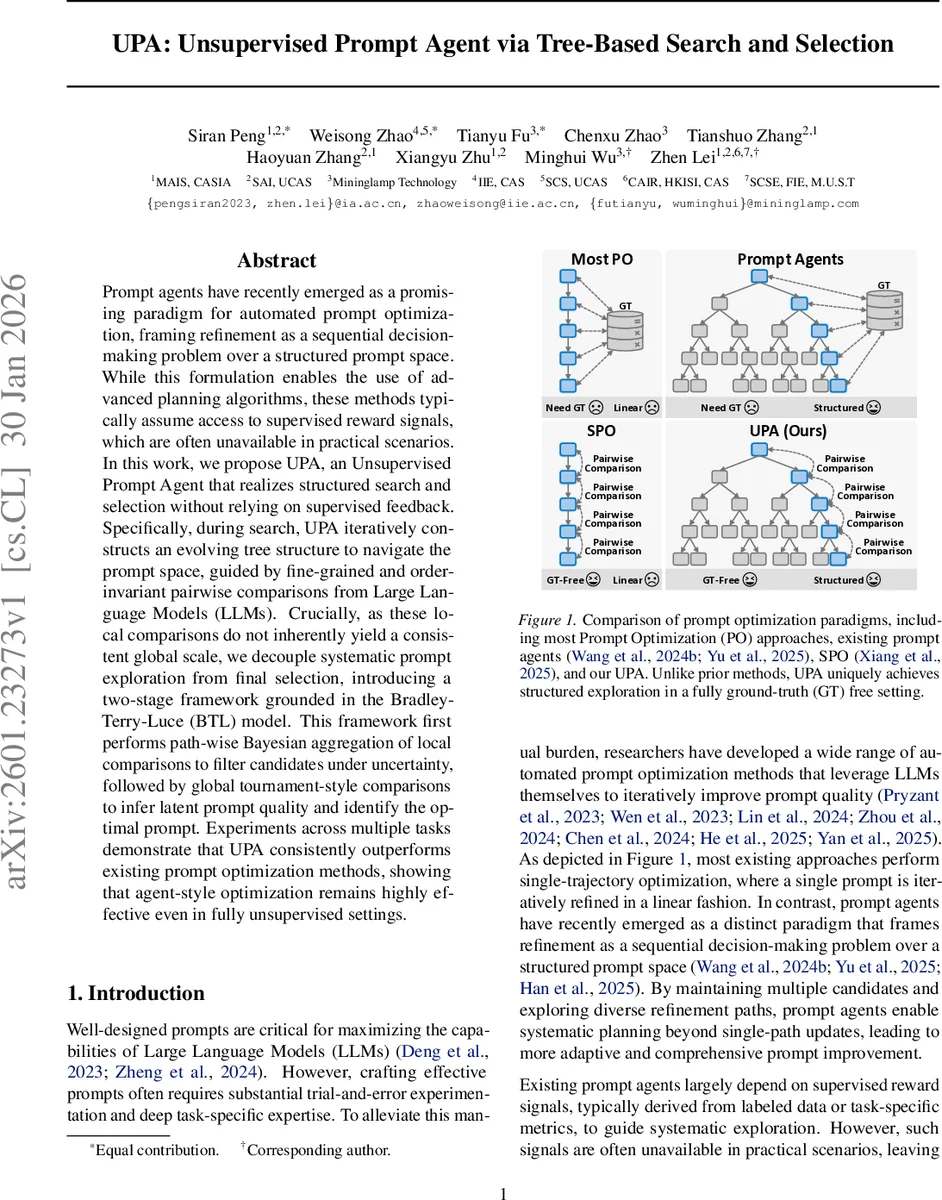

Prompt agents have recently emerged as a promising paradigm for automated prompt optimization, framing refinement as a sequential decision-making problem over a structured prompt space. While this formulation enables the use of advanced planning algorithms, these methods typically assume access to supervised reward signals, which are often unavailable in practical scenarios. In this work, we propose UPA, an Unsupervised Prompt Agent that realizes structured search and selection without relying on supervised feedback. Specifically, during search, UPA iteratively constructs an evolving tree structure to navigate the prompt space, guided by fine-grained and order-invariant pairwise comparisons from Large Language Models (LLMs). Crucially, as these local comparisons do not inherently yield a consistent global scale, we decouple systematic prompt exploration from final selection, introducing a two-stage framework grounded in the Bradley-Terry-Luce (BTL) model. This framework first performs path-wise Bayesian aggregation of local comparisons to filter candidates under uncertainty, followed by global tournament-style comparisons to infer latent prompt quality and identify the optimal prompt. Experiments across multiple tasks demonstrate that UPA consistently outperforms existing prompt optimization methods, showing that agent-style optimization remains highly effective even in fully unsupervised settings.

💡 Research Summary

The paper introduces UPA (Unsupervised Prompt Agent), a novel framework for automatically optimizing prompts without any supervised reward signal. Traditional prompt agents rely on labeled data or task‑specific metrics to guide planning algorithms such as Monte‑Carlo Tree Search (MCTS). In many real‑world scenarios such signals are unavailable, especially for new domains or open‑ended tasks. UPA addresses this gap by (1) constructing a dynamic tree that explores multiple refinement trajectories in parallel, and (2) selecting the best prompt through a two‑stage statistical pipeline grounded in the Bradley‑Terry‑Luce (BTL) model.

During the search phase, the algorithm starts from an initial prompt (the root) and iteratively expands the tree. Each node stores a concrete prompt, while each edge corresponds to a refinement generated by an “optimization LLM”. Because there is no absolute reward, UPA uses a separate “judge LLM” to perform fine‑grained, order‑invariant pairwise comparisons between a child prompt and its parent on a batch of sampled queries. The judge returns a 5‑point Likert score; to mitigate positional bias the order of the two responses is swapped and the scores are averaged, yielding a debiased ordinal score. This score is linearly mapped to a soft‑win value in

Comments & Academic Discussion

Loading comments...

Leave a Comment