Segment Any Events with Language

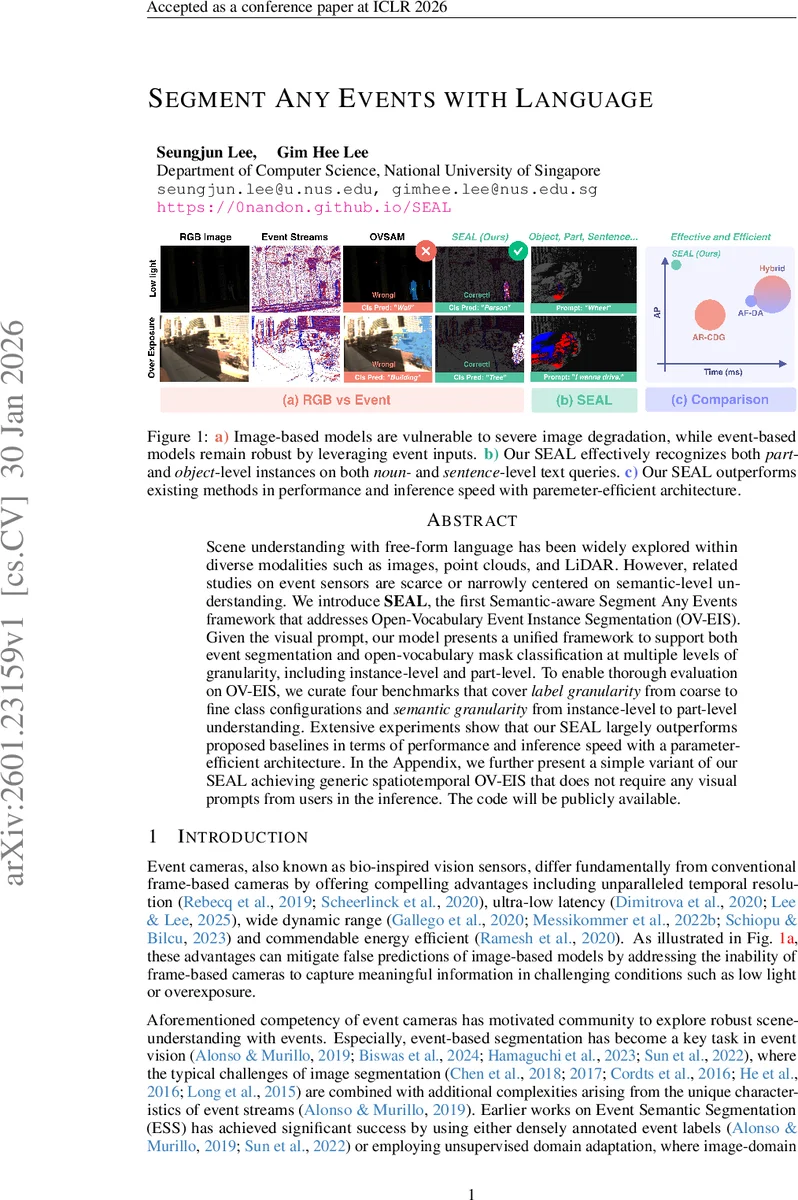

Scene understanding with free-form language has been widely explored within diverse modalities such as images, point clouds, and LiDAR. However, related studies on event sensors are scarce or narrowly centered on semantic-level understanding. We introduce SEAL, the first Semantic-aware Segment Any Events framework that addresses Open-Vocabulary Event Instance Segmentation (OV-EIS). Given the visual prompt, our model presents a unified framework to support both event segmentation and open-vocabulary mask classification at multiple levels of granularity, including instance-level and part-level. To enable thorough evaluation on OV-EIS, we curate four benchmarks that cover label granularity from coarse to fine class configurations and semantic granularity from instance-level to part-level understanding. Extensive experiments show that our SEAL largely outperforms proposed baselines in terms of performance and inference speed with a parameter-efficient architecture. In the Appendix, we further present a simple variant of our SEAL achieving generic spatiotemporal OV-EIS that does not require any visual prompts from users in the inference. Check out our project page in https://0nandon.github.io/SEAL

💡 Research Summary

The paper introduces SEAL (Segment Any Events with Language), the first framework that tackles Open‑Vocabulary Event Instance Segmentation (OV‑EIS). Unlike prior work that either performs closed‑set semantic segmentation on event streams or generates class‑agnostic masks, SEAL simultaneously produces instance‑level and part‑level masks while assigning open‑vocabulary labels derived from free‑form textual queries.

The architecture consists of two novel components. First, the Multimodal Hierarchical Semantic Guidance (MHSG) module leverages pretrained vision‑language models—SAM for hierarchical mask generation (semantic, instance, part) and CLIP for text embeddings. By aligning these image‑derived masks with event‑image pairs, MHSG provides rich, multi‑granular semantic supervision without requiring dense event annotations. Second, a lightweight Multimodal Fusion Network refines event features through three sub‑modules: (1) Backbone Feature Enhancer injects language‑derived semantic priors directly into the event backbone; (2) Spatial Encoding transfers SAM’s spatial priors to the event feature space, preserving positional cues; (3) Mask Feature Enhancer combines the enriched semantic and spatial information to produce CLIP‑aligned mask embeddings for open‑vocabulary classification.

Training is annotation‑free: only event‑image pairs are needed. Event streams are converted into voxel grids, reconstructed frames, or spike tensors, while corresponding conventional images are processed by SAM and CLIP. The loss jointly optimizes mask alignment, feature consistency, and text‑mask similarity, enabling the model to learn rich representations across multiple granularity levels.

To evaluate OV‑EIS, the authors construct four benchmarks that vary both label granularity (coarse to fine) and semantic granularity (instance‑level to part‑level). They compare SEAL against three families of baselines: (i) Annotation‑Rich Cross‑Domain Generalization (AR‑CDG) that adapts image‑trained SAM to events via E2VID reconstruction; (ii) Annotation‑Free Domain Adaptation (AF‑DA) that uses EventSAM for mask generation and OpenESS for mask classification without text supervision; (iii) Hybrid approaches that combine AF‑DA mask generation with CLIP‑based classification (crop‑based or feature‑pooling).

Across all benchmarks, SEAL achieves 8–12 % higher average precision than the strongest baselines, while using fewer than 30 % of the parameters and delivering more than a 2× speed‑up at inference time. Notably, SEAL excels at part‑level queries such as “the small sensor on the wrist,” demonstrating true open‑vocabulary understanding beyond mere object categories.

The paper also discusses a prompt‑free variant (detailed in the appendix) that removes the need for visual prompts at inference, moving toward fully automatic spatiotemporal OV‑EIS. However, the main model still relies on image‑derived masks as auxiliary guidance, and the alignment between asynchronous event streams and reconstructed images can introduce noise, especially under fast motion or low event rates. Future work is suggested to address temporal misalignment with attention mechanisms and to explore fully text‑driven mask generation.

In summary, SEAL bridges the gap between event‑based vision and multimodal language models, delivering a parameter‑efficient, high‑performance solution for open‑vocabulary instance and part segmentation on event data. This advancement opens new possibilities for robotics, autonomous driving, and AR/VR applications where low‑light, high‑speed perception combined with natural‑language interaction is essential.

Comments & Academic Discussion

Loading comments...

Leave a Comment