From Similarity to Vulnerability: Key Collision Attack on LLM Semantic Caching

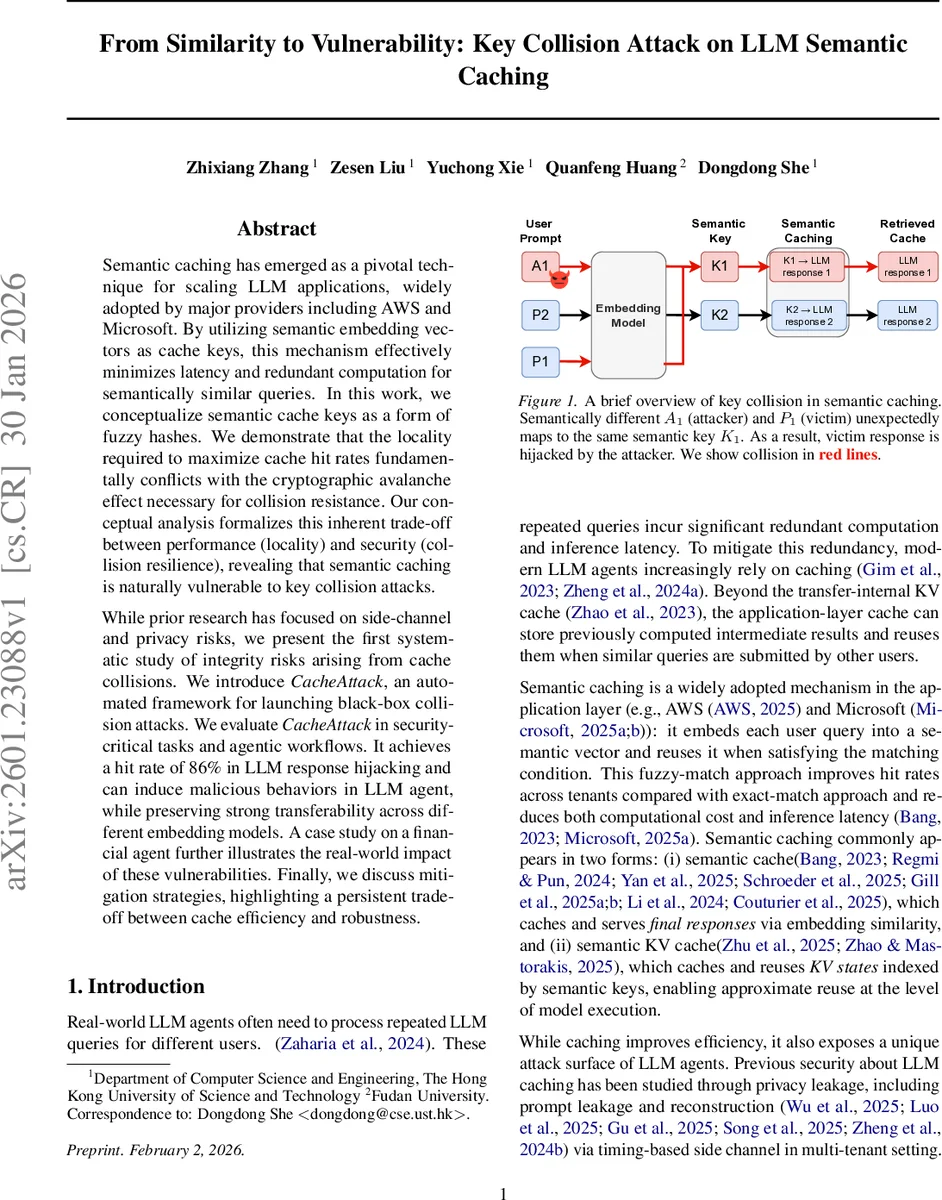

Semantic caching has emerged as a pivotal technique for scaling LLM applications, widely adopted by major providers including AWS and Microsoft. By utilizing semantic embedding vectors as cache keys, this mechanism effectively minimizes latency and redundant computation for semantically similar queries. In this work, we conceptualize semantic cache keys as a form of fuzzy hashes. We demonstrate that the locality required to maximize cache hit rates fundamentally conflicts with the cryptographic avalanche effect necessary for collision resistance. Our conceptual analysis formalizes this inherent trade-off between performance (locality) and security (collision resilience), revealing that semantic caching is naturally vulnerable to key collision attacks. While prior research has focused on side-channel and privacy risks, we present the first systematic study of integrity risks arising from cache collisions. We introduce CacheAttack, an automated framework for launching black-box collision attacks. We evaluate CacheAttack in security-critical tasks and agentic workflows. It achieves a hit rate of 86% in LLM response hijacking and can induce malicious behaviors in LLM agent, while preserving strong transferability across different embedding models. A case study on a financial agent further illustrates the real-world impact of these vulnerabilities. Finally, we discuss mitigation strategies.

💡 Research Summary

The paper investigates a previously overlooked security weakness in semantic caching, a technique widely used to accelerate large language model (LLM) services. Semantic caches store the result of a previous query and reuse it when a new query is “similar” according to an embedding‑based key. The authors argue that such keys behave like fuzzy hashes: they deliberately map semantically close inputs to the same value to maximize cache hit rates. This design conflicts with the avalanche property required of cryptographic hash functions, which ensures that tiny input changes produce wildly different outputs and thus provides collision resistance. By formalizing the matching rule (similarity ≥ τ or LSH bucket equality) they show that the very locality that yields performance gains also creates an intrinsic susceptibility to key collisions.

A threat model is defined where an attacker can submit arbitrary prompts to a multi‑tenant LLM system but has no knowledge of the internal embedding model or similarity threshold. The attacker therefore trains a surrogate embedding model (using publicly released models such as BAAI/bge‑small‑en‑v1.5) and employs a generator‑validator pipeline to craft adversarial prompts that produce the same semantic key as a victim’s benign prompt. Two attack variants are presented: CacheAttack‑1, which directly queries the target system for validation (high success, high detection risk), and CacheAttack‑2, which relies on the surrogate to pre‑filter candidates before sending them to the target (more efficient, lower detection probability).

The authors build a dataset of 4,185 indirect prompt‑injection examples (SC‑IPI) and evaluate the attacks against several LLM back‑ends (Claude, GPT‑4, LLaMA‑2) and embedding models (BGE, MiniLM, OpenAI embeddings). Results show an average cache‑hit rate of 86 % and an injection success rate above 80 %. In agentic workflows, hijacked cache entries cause tool‑invocation errors and a drop of more than 30 % in overall answer accuracy, demonstrating cascading failures. A financial‑agent case study illustrates real‑world impact: a malicious cache entry can trigger unauthorized trade orders, leading to potential monetary loss. Importantly, the attack transfers across different embedding models, with surrogate‑based attacks retaining >70 % success even when the target model differs structurally.

Mitigation strategies are discussed, including (1) normalizing embeddings and dynamically adjusting similarity thresholds, (2) attaching cryptographic signatures to cache entries and verifying integrity before reuse, and (3) performing a secondary LLM verification step on cache hits (e.g., sampling multiple responses, comparing with a temperature‑adjusted generation). All defenses incur a trade‑off, reducing cache efficiency or increasing latency, and none fully eliminate the underlying locality‑vs‑collision‑resistance dilemma.

The paper concludes that semantic caching, while beneficial for performance, is fundamentally vulnerable to key‑collision attacks. The proposed CacheAttack framework demonstrates that an adversary can reliably hijack LLM responses and manipulate agent behavior without ever modifying cached values or the underlying model. The authors call for future work on designing cryptographically‑secure semantic hash functions, policy‑based isolation in multi‑tenant caches, and anomaly‑detection mechanisms that can flag suspicious cache reuse. This study highlights the urgent need to balance efficiency with robustness in the next generation of LLM serving infrastructures.

Comments & Academic Discussion

Loading comments...

Leave a Comment