When Meanings Meet: Investigating the Emergence and Quality of Shared Concept Spaces during Multilingual Language Model Training

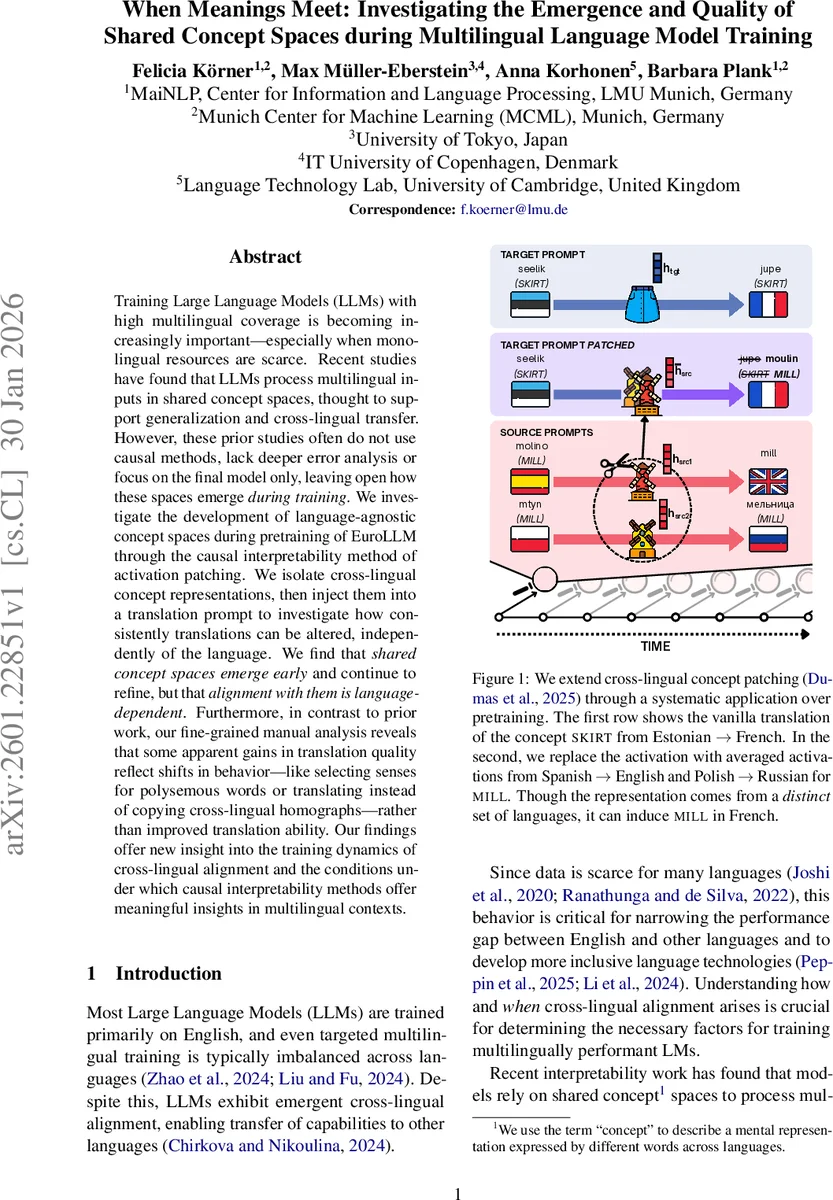

Training Large Language Models (LLMs) with high multilingual coverage is becoming increasingly important – especially when monolingual resources are scarce. Recent studies have found that LLMs process multilingual inputs in shared concept spaces, thought to support generalization and cross-lingual transfer. However, these prior studies often do not use causal methods, lack deeper error analysis or focus on the final model only, leaving open how these spaces emerge during training. We investigate the development of language-agnostic concept spaces during pretraining of EuroLLM through the causal interpretability method of activation patching. We isolate cross-lingual concept representations, then inject them into a translation prompt to investigate how consistently translations can be altered, independently of the language. We find that shared concept spaces emerge early} and continue to refine, but that alignment with them is language-dependent}. Furthermore, in contrast to prior work, our fine-grained manual analysis reveals that some apparent gains in translation quality reflect shifts in behavior – like selecting senses for polysemous words or translating instead of copying cross-lingual homographs – rather than improved translation ability. Our findings offer new insight into the training dynamics of cross-lingual alignment and the conditions under which causal interpretability methods offer meaningful insights in multilingual contexts.

💡 Research Summary

This paper investigates how language‑agnostic concept representations—often referred to as shared concept spaces—emerge and evolve during the pre‑training of a multilingual large language model (LLM). The authors focus on EuroLLM‑1.7B, a model released with 26 intermediate checkpoints, allowing a fine‑grained view of training dynamics. Their central methodological tool is an adaptation of activation patching, termed cross‑lingual concept patching, which provides causal evidence for the existence of shared spaces.

The procedure works as follows. First, a set of “source” language pairs (e.g., Spanish→English, Polish→Russian) is used to translate a particular concept C_src (e.g., the noun “MILL”). During this translation, the activations at the final token of the target word and all subsequent layers are recorded. These activations are averaged across the source language pairs to create a “patch”. Next, a completely different “target” language pair (e.g., Estonian→French) is prompted to translate a distinct concept C_tgt (e.g., “SKIRT”). While the model processes this prompt, the previously computed patch is injected at the same token position and subsequent layers. If the model outputs the source concept (“MILL”) in the target output language (French) instead of the intended “SKIRT”, the intervention demonstrates that the model relies on a language‑independent representation of the concept.

The authors evaluate this intervention across three output languages (English, Russian, Chinese) and a variety of source language configurations: (1) “Seen” languages that appear in EuroLLM’s training data but are not the target languages, (2) an “en_en” control where both input and output are English (a copying task), and (3) a “tgt” ablation that removes the target language from the source set. They also construct a large multilingual dataset from Multi‑SimLex, selecting 256 compatible noun pairs (398 distinct concepts) to avoid confounds from function words or overlapping translations.

Key findings:

-

Early emergence of shared spaces – Even at checkpoint 5–10 (roughly the first 20 % of training tokens), the patched activations cause the model to output the source concept with a noticeable success rate (>30 %). This indicates that language‑agnostic concept representations begin to form very early in training, rather than only after extensive exposure to multilingual data.

-

Alignment depends on data proportion – Source languages that are heavily represented in the training corpus (English, Spanish, French) yield high patch success (often >70 %). In contrast, languages with scarce data (Estonian, Finnish) produce much lower success (<20 %). Thus, while a shared space exists, each language’s alignment to that space is strongly mediated by its training data volume.

-

Phase‑wise dynamics – EuroLLM is trained in two phases: Phase 1 (0–90 % of tokens) dominated by web data and English‑centric parallel corpora, and Phase 2 (final 10 %) enriched with high‑quality multilingual parallel data. After Phase 2 begins, the success rate for output languages with different scripts (Russian, Chinese) improves markedly, suggesting that the later multilingual parallel data helps the model map the shared concept space to diverse orthographies.

-

Control task validates methodology – The en_en copying condition achieves near‑perfect success, confirming that the patching mechanism works when the source concept is well‑defined in the dominant language.

-

Fine‑grained error analysis reveals nuanced behavior – Relying solely on first‑token probability (a common metric in activation‑patching studies) proved insufficient because many concepts share the same initial token but diverge later (e.g., “organ” vs. “organizer”). The authors therefore evaluate full‑sequence word‑level accuracy and conduct a manual inspection of 400 patched outputs. They identify three primary failure modes: (a) Sense selection shifts for polysemous words, where the model chooses a different meaning after patching; (b) Homograph copying vs. translation, where the model decides to copy a cross‑lingual homograph rather than translate it; and (c) Partial concept leakage, where the patched representation influences the output but does not fully replace the target concept, leading to hybrid translations. Notably, some apparent gains in translation quality are actually due to these sense‑selection changes rather than genuine improvements.

Overall, the study demonstrates that shared concept spaces are not a late‑stage emergent property but arise early, and their alignment to specific languages is heavily contingent on the amount and type of multilingual data the model sees. The causal patching framework proves to be a powerful tool for probing the internal mechanics of multilingual LLMs, uncovering subtle behavioral shifts that standard probing or performance metrics would miss.

Implications: For practitioners aiming to build more inclusive multilingual models, the results suggest that increasing the proportion of high‑quality parallel data for low‑resource languages—especially in later training phases—can improve alignment of those languages to the shared space, thereby enhancing cross‑lingual transfer. Moreover, the paper highlights the importance of using full‑sequence evaluation and manual error analysis when interpreting mechanistic interventions, as simplistic token‑probability metrics may mask meaningful changes in meaning.

In sum, this work provides the first causal, checkpoint‑level analysis of shared concept space emergence, offers a robust experimental framework for future mechanistic studies, and cautions against conflating apparent translation improvements with genuine semantic fidelity.

Comments & Academic Discussion

Loading comments...

Leave a Comment