Decomposing and Composing: Towards Efficient Vision-Language Continual Learning via Rank-1 Expert Pool in a Single LoRA

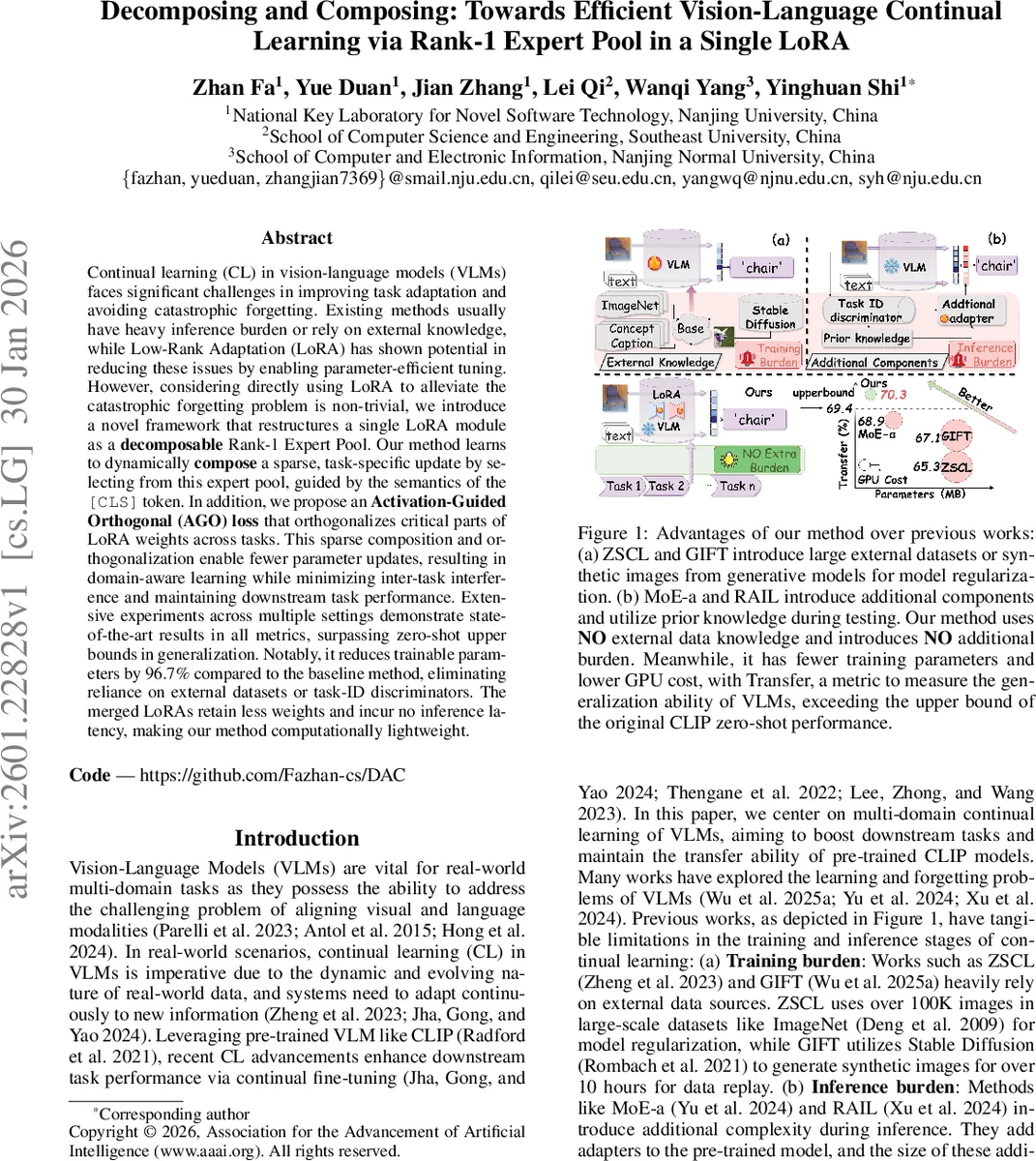

Continual learning (CL) in vision-language models (VLMs) faces significant challenges in improving task adaptation and avoiding catastrophic forgetting. Existing methods usually have heavy inference burden or rely on external knowledge, while Low-Rank Adaptation (LoRA) has shown potential in reducing these issues by enabling parameter-efficient tuning. However, considering directly using LoRA to alleviate the catastrophic forgetting problem is non-trivial, we introduce a novel framework that restructures a single LoRA module as a decomposable Rank-1 Expert Pool. Our method learns to dynamically compose a sparse, task-specific update by selecting from this expert pool, guided by the semantics of the [CLS] token. In addition, we propose an Activation-Guided Orthogonal (AGO) loss that orthogonalizes critical parts of LoRA weights across tasks. This sparse composition and orthogonalization enable fewer parameter updates, resulting in domain-aware learning while minimizing inter-task interference and maintaining downstream task performance. Extensive experiments across multiple settings demonstrate state-of-the-art results in all metrics, surpassing zero-shot upper bounds in generalization. Notably, it reduces trainable parameters by 96.7% compared to the baseline method, eliminating reliance on external datasets or task-ID discriminators. The merged LoRAs retain less weights and incur no inference latency, making our method computationally lightweight.

💡 Research Summary

The paper tackles the persistent problem of catastrophic forgetting in continual learning (CL) for vision‑language models (VLMs) by leveraging Low‑Rank Adaptation (LoRA) in a novel, highly efficient manner. Traditional CL approaches for VLMs either rely on massive external datasets (e.g., ImageNet, synthetic images from diffusion models) for regularization, or they introduce additional adapters, mixture‑of‑experts (MoE) modules, and task‑ID discriminators that increase training and inference overhead. In contrast, the authors propose to restructure a single LoRA module into a decomposable pool of rank‑1 experts.

Core Idea – Rank‑1 Expert Pool

A LoRA matrix ΔW of rank r can be expressed as ΔW = BA, where B∈ℝ^{d×r} and A∈ℝ^{r×d}. This factorization is equivalent to a sum of r rank‑1 matrices b_i a_i^T. The authors treat each rank‑1 component as an independent “expert”. Instead of updating the whole low‑rank matrix for every new task, they dynamically select a small subset of experts that best match the current data distribution, thereby creating a sparse, task‑specific update.

**Dynamic Routing via the

Comments & Academic Discussion

Loading comments...

Leave a Comment