AR-BENCH: Benchmarking Legal Reasoning with Judgment Error Detection, Classification and Correction

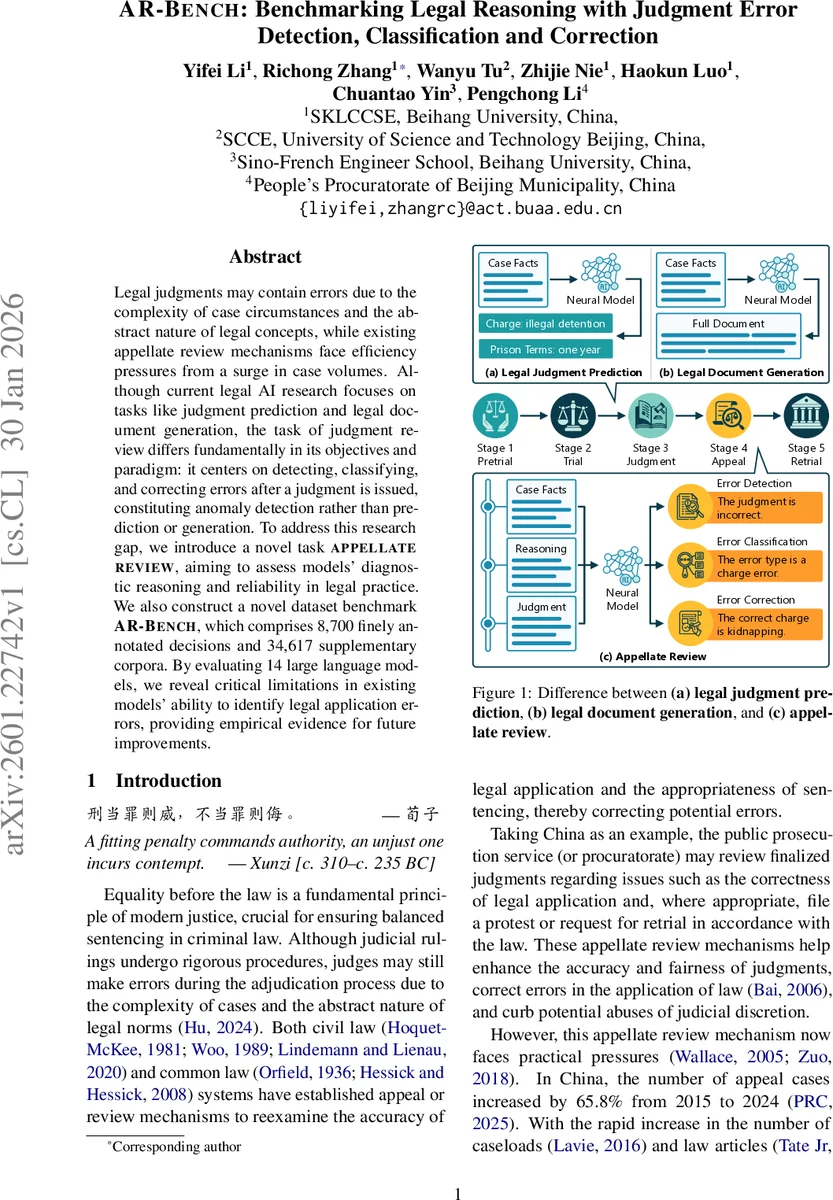

Legal judgments may contain errors due to the complexity of case circumstances and the abstract nature of legal concepts, while existing appellate review mechanisms face efficiency pressures from a surge in case volumes. Although current legal AI research focuses on tasks like judgment prediction and legal document generation, the task of judgment review differs fundamentally in its objectives and paradigm: it centers on detecting, classifying, and correcting errors after a judgment is issued, constituting anomaly detection rather than prediction or generation. To address this research gap, we introduce a novel task APPELLATE REVIEW, aiming to assess models’ diagnostic reasoning and reliability in legal practice. We also construct a novel dataset benchmark AR-BENCH, which comprises 8,700 finely annotated decisions and 34,617 supplementary corpora. By evaluating 14 large language models, we reveal critical limitations in existing models’ ability to identify legal application errors, providing empirical evidence for future improvements.

💡 Research Summary

This paper addresses a critical gap in legal artificial intelligence by focusing on the post‑verdict phase of judicial work: the detection, classification, and correction of errors in finalized judgments. While most prior legal‑AI research has concentrated on judgment prediction or legal document generation—tasks that assist practitioners before a decision is rendered—this study introduces a fundamentally different task called “Appellate Review.” The authors decompose this task into three sequential subtasks: (1) error detection, where a model must decide whether a given judgment contains any legal‑application error; (2) error classification, where the model must identify the specific type(s) of error from a predefined taxonomy; and (3) error correction, where the model must generate a legally valid amendment to the erroneous portion of the judgment.

To evaluate models on this new task, the authors construct a large‑scale benchmark named AR‑BENCH. The dataset is derived from China Judgments Online and consists of 8,700 meticulously annotated judgments plus a supplementary corpus of 34,617 related documents. Each case is enriched with 20 fine‑grained annotations covering factual description, reasoning steps, cited law articles, sentencing factors, and more. Errors are manually injected to reflect realistic appellate scenarios, resulting in six well‑defined error categories: (E1) Erroneous charge, (E2) Omitted charge, (E3) Sentencing outside statutory limits, (E4) Failure to consider sentencing factors, (E5) Fixed‑amount fine outside limits, and (E6) Percentage‑based fine outside limits. The taxonomy allows for single or multiple concurrent errors, mirroring the complexity of real cases.

The authors evaluate fourteen contemporary large language models (LLMs), spanning general‑purpose, reasoning‑enhanced, and domain‑specific variants. For detection and classification, standard classification metrics—accuracy, macro‑precision, macro‑recall, and macro‑F1—are reported. For correction, the authors adopt classification metrics for charge‑related errors and the ImpScore and Acc@0.1 metrics (the latter measuring whether the corrected numeric value falls within a 0.1 tolerance) for sentencing‑term and fine corrections.

Results reveal a stark performance gap. Most models achieve moderate accuracy (≈70‑80 %) on the binary detection task, indicating that LLMs can often spot that something is wrong. However, when required to pinpoint the exact error type, macro‑F1 scores drop below 40 %, especially for the more nuanced sentencing and fine categories. In the correction stage, generated amendments frequently violate statutory limits or ignore critical sentencing factors; overall ImpScore hovers around 0.35, and Acc@0.1 remains low. These findings suggest that current LLMs excel at surface‑level language understanding but struggle with the rigorous logical and quantitative reasoning demanded by legal rule application.

The paper also discusses why simply providing the full text of relevant statutes as auxiliary input does not substantially improve performance, highlighting the need for more sophisticated knowledge integration—such as legal knowledge graphs, structured law‑article embeddings, or retrieval‑augmented generation tailored to the error type.

In summary, the authors make three major contributions: (1) they define the novel “Appellate Review” task, shifting the focus of legal AI from prediction to post‑verdict error diagnosis; (2) they release AR‑BENCH, a richly annotated benchmark that supports fine‑grained evaluation of detection, classification, and correction capabilities; and (3) they provide a comprehensive empirical analysis exposing the current limitations of state‑of‑the‑art LLMs in handling complex legal reasoning. This work lays the groundwork for future research aimed at building AI assistants that can reliably support judges in appellate review, thereby enhancing the accuracy, fairness, and efficiency of judicial systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment