StreamSense: Streaming Social Task Detection with Selective Vision-Language Model Routing

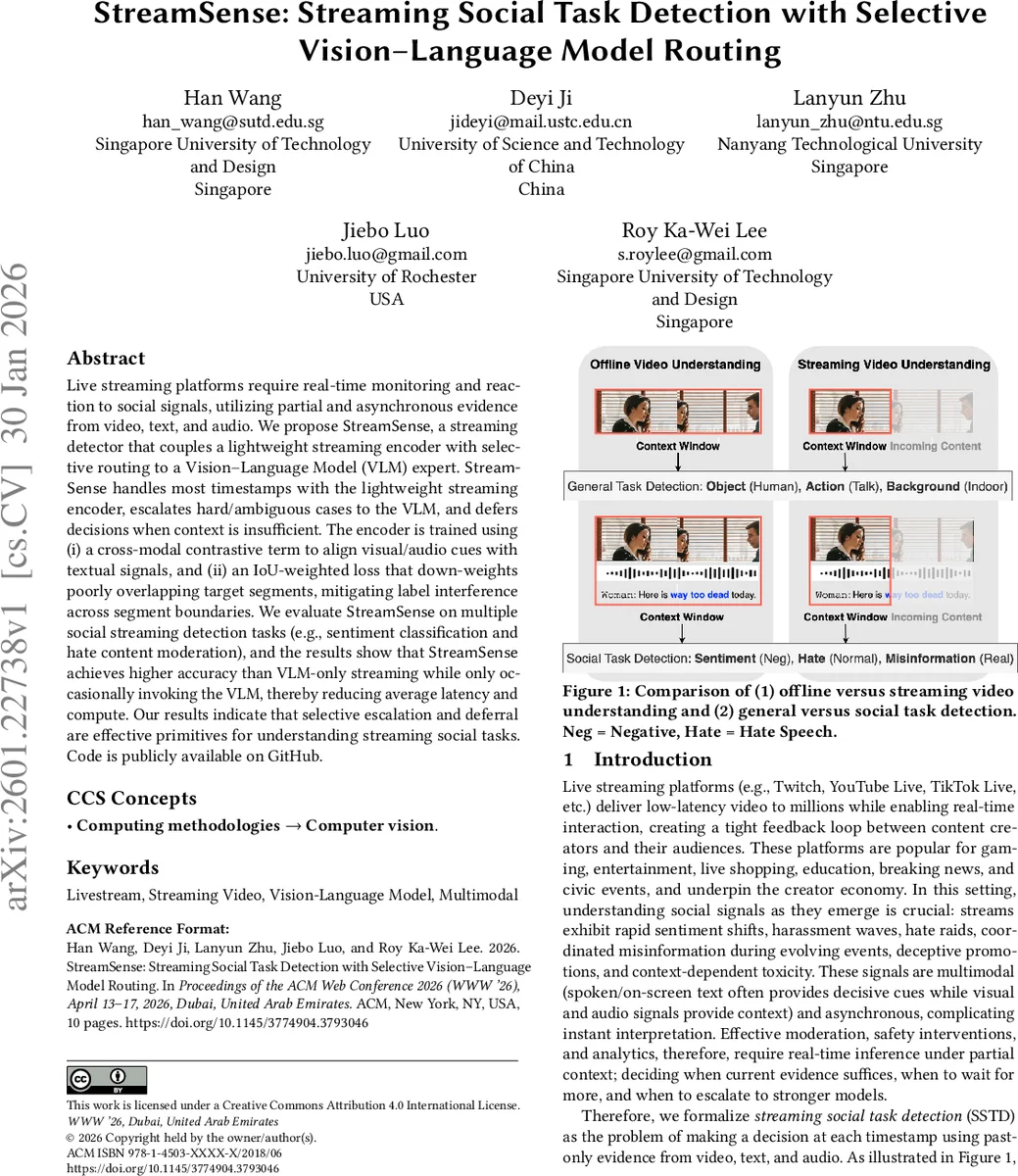

Live streaming platforms require real-time monitoring and reaction to social signals, utilizing partial and asynchronous evidence from video, text, and audio. We propose StreamSense, a streaming detector that couples a lightweight streaming encoder with selective routing to a Vision-Language Model (VLM) expert. StreamSense handles most timestamps with the lightweight streaming encoder, escalates hard/ambiguous cases to the VLM, and defers decisions when context is insufficient. The encoder is trained using (i) a cross-modal contrastive term to align visual/audio cues with textual signals, and (ii) an IoU-weighted loss that down-weights poorly overlapping target segments, mitigating label interference across segment boundaries. We evaluate StreamSense on multiple social streaming detection tasks (e.g., sentiment classification and hate content moderation), and the results show that StreamSense achieves higher accuracy than VLM-only streaming while only occasionally invoking the VLM, thereby reducing average latency and compute. Our results indicate that selective escalation and deferral are effective primitives for understanding streaming social tasks. Code is publicly available on GitHub.
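The IoU-weighted loss mentioned in the abstract can be illustrated with a minimal sketch. This is not the authors' implementation: the function names (`temporal_iou`, `iou_weighted_loss`), the use of temporal IoU between a past-only window and its annotated segment as the weight, and the normalization by the sum of weights are all assumptions made for illustration.

```python
import math
from typing import List, Sequence, Tuple

Interval = Tuple[float, float]  # (start, end) in seconds; hypothetical representation


def temporal_iou(window: Interval, segment: Interval) -> float:
    """Intersection-over-union of two time intervals."""
    inter = max(0.0, min(window[1], segment[1]) - max(window[0], segment[0]))
    union = (window[1] - window[0]) + (segment[1] - segment[0]) - inter
    return inter / union if union > 0 else 0.0


def cross_entropy(logits: Sequence[float], label: int) -> float:
    """Numerically stable softmax cross-entropy for a single example."""
    m = max(logits)
    log_z = m + math.log(sum(math.exp(x - m) for x in logits))
    return log_z - logits[label]


def iou_weighted_loss(
    batch_logits: List[Sequence[float]],
    labels: List[int],
    windows: List[Interval],
    segments: List[Interval],
) -> float:
    """Cross-entropy averaged with per-window IoU weights.

    Windows that barely overlap their target segment contribute little,
    so conflicting labels near segment boundaries are down-weighted.
    Normalizing by the weight sum is an assumption of this sketch.
    """
    weights = [temporal_iou(w, s) for w, s in zip(windows, segments)]
    losses = [cross_entropy(l, y) for l, y in zip(batch_logits, labels)]
    total = sum(weights)
    if total == 0:
        return 0.0
    return sum(w * l for w, l in zip(weights, losses)) / total
```

In this sketch a window that lies entirely outside its target segment gets weight zero and is effectively ignored, while a window that coincides with the segment counts fully.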

💡 Research Summary

StreamSense tackles the emerging problem of Streaming Social Task Detection (SSTD), where a live multimodal stream (video, on-screen text, and audio) must be interpreted in real time using only past information. The authors argue that SSTD differs fundamentally from conventional offline video tasks along three axes: causality (no future look-ahead), multimodality (social cues often reside in speech or text rather than pure vision), and social inference (understanding intent, toxicity, or sentiment requires background knowledge). To meet these constraints, StreamSense combines three components: (1) a lightweight streaming encoder that processes past-only windows of multimodal data; (2) a router that decides, for each timestamp, whether the encoder's confidence is sufficient to emit a label, whether the instance is hard/ambiguous and should be escalated to a high-capacity Vision-Language Model (VLM) expert, or whether evidence is insufficient and the decision should be deferred; and (3) an explicit deferral policy that postpones prediction until more context arrives.

Training is complicated by the fact that publicly available datasets provide only segment-level annotations created after viewing the whole clip. Propagating these labels to every frame creates label interference at segment boundaries and forces models to predict even when evidence is absent. StreamSense addresses this mismatch with two loss mechanisms: (i) a cross-modal contrastive loss that aligns visual and audio cues with decisive textual signals, improving multimodal fusion under partial context; and (ii) an IoU-weighted cross-entropy loss that down-weights windows with low overlap to the target segment, thereby reducing conflicting gradients near boundaries.

Experiments on two representative social tasks (sentiment classification and hate-speech detection) show that StreamSense outperforms VLM-only baselines in both accuracy and macro-F1 while invoking the VLM in less than 10% of timestamps. This selective escalation dramatically cuts average latency and computational cost, confirming that most timestamps can be handled by the lightweight encoder. Ablation studies demonstrate the importance of the IoU weighting and the deferral mechanism for stabilizing training and improving real-time performance.

In summary, StreamSense presents a practical framework that leverages a small, fast encoder for the common case, escalates only the difficult instances to a powerful VLM, and intelligently defers when context is lacking, thereby achieving high-quality, low-latency detection of social signals in live streams.
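The three-way decision described in the summary (emit, escalate, or defer) can be sketched as a simple threshold rule. This is only an illustration under stated assumptions: the summary does not say how the router is implemented, so the threshold values, the `evidence_score` input, and all names below (`Action`, `RouterConfig`, `route`, `step`) are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Optional, Sequence


class Action(Enum):
    EMIT = "emit"          # trust the lightweight encoder's label
    ESCALATE = "escalate"  # hand the timestamp to the VLM expert
    DEFER = "defer"        # wait for more context before predicting


@dataclass
class RouterConfig:
    # Both thresholds are hypothetical values chosen for illustration.
    emit_threshold: float = 0.8
    evidence_threshold: float = 0.3


def route(probs: Sequence[float], evidence_score: float, cfg: RouterConfig) -> Action:
    """Pick an action from encoder class probabilities and an evidence score.

    `evidence_score` stands in for whatever signal indicates how much
    usable context has arrived so far; it is an assumption of this sketch.
    """
    if evidence_score < cfg.evidence_threshold:
        return Action.DEFER
    if max(probs) >= cfg.emit_threshold:
        return Action.EMIT
    return Action.ESCALATE


def step(
    probs: Sequence[float],
    evidence_score: float,
    vlm_expert: Callable[[], int],
    cfg: RouterConfig,
) -> Optional[int]:
    """Return a label for this timestamp, or None when the decision is deferred."""
    action = route(probs, evidence_score, cfg)
    if action is Action.DEFER:
        return None
    if action is Action.EMIT:
        return max(range(len(probs)), key=lambda i: probs[i])
    return vlm_expert()  # the expensive call happens only on this path
```

Because `vlm_expert` is invoked only on the escalation path, the average per-timestamp cost in such a design is dominated by the encoder whenever escalations are rare.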
