Pushing the Boundaries of Natural Reasoning: Interleaved Bonus from Formal-Logic Verification

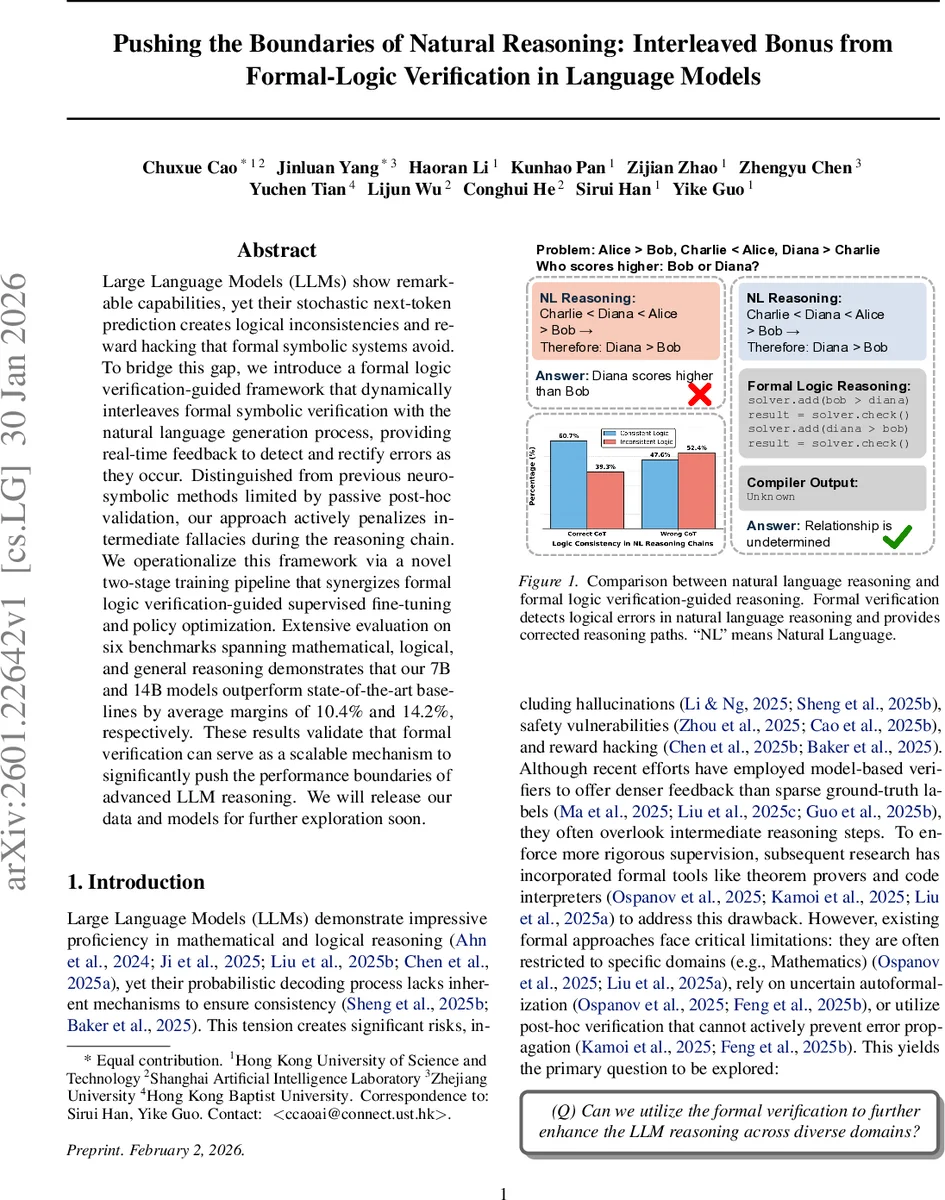

Large Language Models (LLMs) show remarkable capabilities, yet their stochastic next-token prediction creates logical inconsistencies and reward hacking that formal symbolic systems avoid. To bridge this gap, we introduce a formal logic verification-guided framework that dynamically interleaves formal symbolic verification with the natural language generation process, providing real-time feedback to detect and rectify errors as they occur. Distinguished from previous neuro-symbolic methods limited by passive post-hoc validation, our approach actively penalizes intermediate fallacies during the reasoning chain. We operationalize this framework via a novel two-stage training pipeline that synergizes formal logic verification-guided supervised fine-tuning and policy optimization. Extensive evaluation on six benchmarks spanning mathematical, logical, and general reasoning demonstrates that our 7B and 14B models outperform state-of-the-art baselines by average margins of 10.4% and 14.2%, respectively. These results validate that formal verification can serve as a scalable mechanism to significantly push the performance boundaries of advanced LLM reasoning.

💡 Research Summary

The paper addresses the persistent problem of logical inconsistency and reward hacking in large language models (LLMs) that arise from their stochastic next‑token generation. To bridge the gap between probabilistic natural‑language reasoning and the rigor of formal symbolic systems, the authors introduce a novel framework that interleaves formal logic verification directly into the generation process. Unlike prior neuro‑symbolic approaches that rely on static, post‑hoc checks or are confined to specific domains such as mathematics, this method provides real‑time feedback at each reasoning step, allowing the model to detect and correct errors on the fly.

The training pipeline consists of two synergistic stages. In the supervised fine‑tuning (SFT) stage, a strong teacher model generates multiple chain‑of‑thought (CoT) candidates for each problem. Each natural‑language step is automatically translated into a formal specification (e.g., SMT, Lean, Z3) and an expected execution result. A three‑stage validation—exact match, semantic equivalence, and proof rewriting—ensures that only high‑fidelity (natural‑language, formal proof, execution output) triples are retained for training. This mitigates the noise inherent in auto‑formalization and aligns the model’s output distribution with a verifiable reasoning format.

In the reinforcement learning (RL) stage, the policy model generates interleaved natural‑language and formal steps. A formal verifier evaluates each formal component, returning satisfiability results, counterexamples, or execution traces. These signals are incorporated into a composite reward function via Group Relative Policy Optimization (GRPO), penalizing intermediate logical fallacies and rewarding verified steps. The loop of verification → feedback → regeneration enables iterative self‑correction during inference.

Empirical evaluation on six benchmarks spanning logical puzzles, mathematical problems, and general reasoning shows substantial gains. The 7‑billion‑parameter model improves average accuracy by 10.4 % over state‑of‑the‑art baselines, while the 14‑billion‑parameter model achieves a 14.2 % boost. Detailed analysis reveals that even when final answers are correct, up to 39.3 % of intermediate steps are formally disproved; this rate rises to 52.4 % for incorrect answers, underscoring the necessity of in‑process verification.

Key contributions include: (1) the first dynamic interleaving of formal verification with LLM reasoning across diverse domains, (2) a hierarchical data synthesis pipeline with execution‑based validation to produce high‑quality training triples, and (3) a reinforcement learning scheme that directly leverages verification feedback to enforce structural integrity. Limitations involve the domain coverage and speed of current theorem provers, and residual errors from imperfect auto‑formalization. Future work should explore broader formal systems, lightweight verifiers, and hybrid human‑in‑the‑loop feedback to further scale and accelerate verification‑guided reasoning.

Comments & Academic Discussion

Loading comments...

Leave a Comment