MC-GRPO: Median-Centered Group Relative Policy Optimization for Small-Rollout Reinforcement Learning

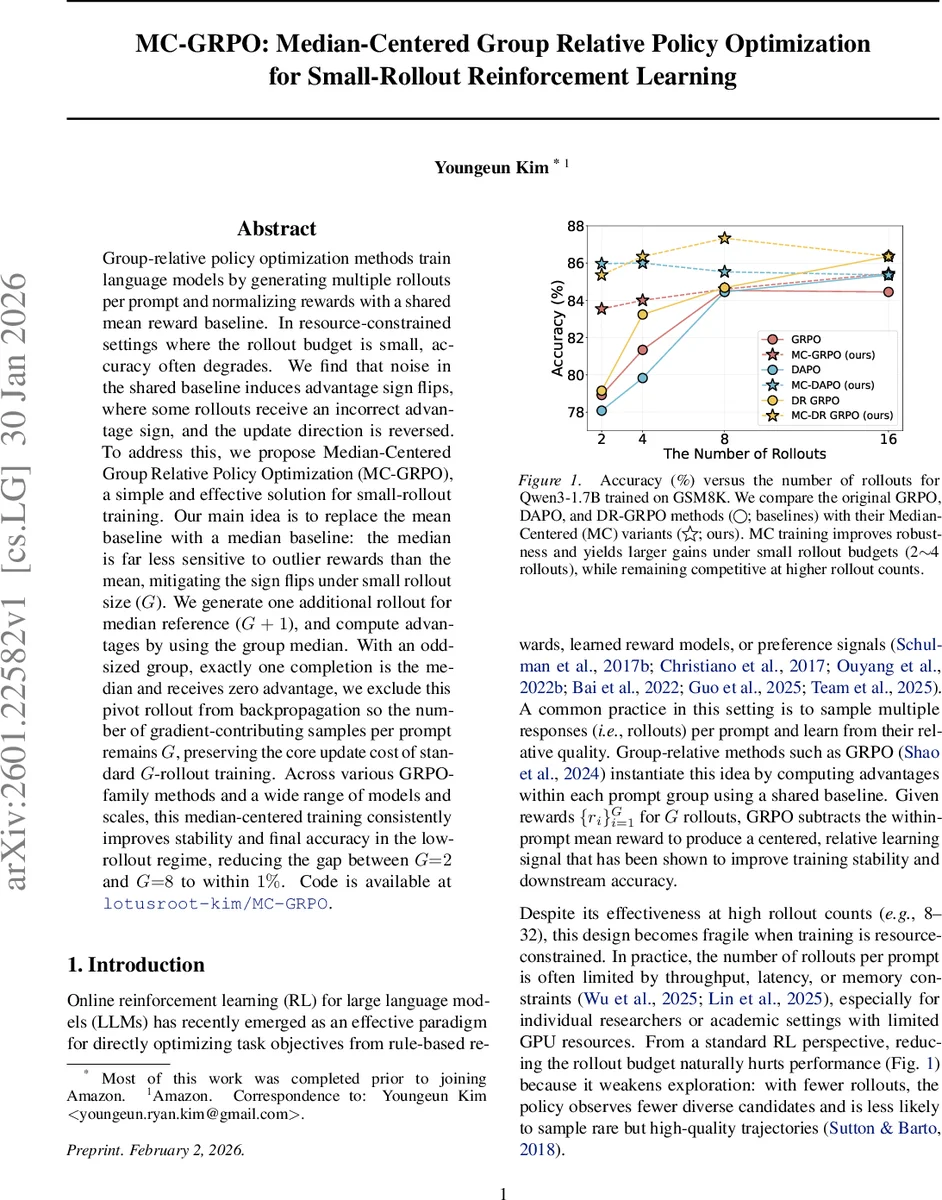

Group-relative policy optimization methods train language models by generating multiple rollouts per prompt and normalizing rewards with a shared mean reward baseline. In resource-constrained settings where the rollout budget is small, accuracy often degrades. We find that noise in the shared baseline induces advantage sign flips, where some rollouts receive an incorrect advantage sign, and the update direction is reversed. To address this, we propose Median-Centered Group Relative Policy Optimization (MC-GRPO), a simple and effective solution for small-rollout training. Our main idea is to replace the mean baseline with a median baseline: the median is far less sensitive to outlier rewards than the mean, mitigating the sign flips under small rollout size (G). We generate one additional rollout for median reference (G+1), and compute advantages by using the group median. With an odd-sized group, exactly one completion is the median and receives zero advantage, we exclude this pivot rollout from backpropagation so the number of gradient-contributing samples per prompt remains G, preserving the core update cost of standard G-rollout training. Across various GRPO-family methods and a wide range of models and scales, this median-centered training consistently improves stability and final accuracy in the low-rollout regime, reducing the gap between G=2 and G=8 to within 1%. Code is available at https://github.com/lotusroot-kim/MC-GRPO

💡 Research Summary

The paper addresses a critical failure mode in group‑relative policy optimization (GRPO) methods for large language model (LLM) reinforcement learning when the rollout budget per prompt is small. Standard GRPO‑style algorithms generate G responses for each prompt, compute rewards, and subtract the within‑prompt mean reward to obtain advantages. With few rollouts, a single outlier reward can shift the mean dramatically, causing many advantages to flip sign. This “advantage sign flip” reverses the intended gradient direction, reinforcing poor completions and penalizing good ones, which empirically leads to a noticeable drop in downstream accuracy (e.g., a 5 % sign‑flip rate yields roughly a 4 % accuracy loss).

To mitigate this, the authors propose Median‑Centered GRPO (MC‑GRPO). The key idea is to replace the mean baseline with a robust median baseline. For each prompt they sample G + 1 responses (an odd number), compute the median of the G + 1 rewards, and use this median as the shared baseline. The median sample receives zero advantage; it is excluded from back‑propagation, so the effective number of gradient‑contributing rollouts remains G, preserving the original computational budget. Advantages are normalized using the Median Absolute Deviation (MAD) rather than the standard deviation, further reducing sensitivity to outliers. The resulting advantage formula is

Aᵢ = (rᵢ − median) / (MAD + ε).

Because the change is confined to the advantage estimator, MC‑GRPO can be dropped into any GRPO‑family loss (GRPO, DAPO, DR‑GRPO) without altering other components such as importance‑sampling ratios, clipping, or KL regularization. The algorithm adds only one extra inference pass per prompt, which is negligible on modern high‑throughput inference engines (e.g., vLLM).

The authors evaluate MC‑GRPO across five LLMs (Qwen3‑1.7B, Llama‑3.2‑3B, Qwen2.5‑Math‑1.5B, Qwen3‑4B‑Instruct, Qwen2.5‑7B‑Instruct) on two math‑reasoning benchmarks (GSM8K and Math‑500) and also test generalization on AMC 2023 and AIME 2024. Experiments vary the rollout count G ∈ {2, 4, 8}. Results show that in the low‑budget regime (G = 2 or 4) MC‑GRPO consistently narrows the performance gap to the high‑budget (G = 8) setting, often achieving 4–6 % absolute accuracy improvements. For example, Qwen3‑1.7B on GSM8K improves from 78.9 % (GRPO, G=2) to 83.5 % (MC‑GRPO, G=2), reducing the gap to the G=8 baseline (84.5 %) to within 1 %. Sign‑flip rates drop dramatically when using the median baseline, confirming the causal link between sign flips and degraded learning. At larger rollout counts, MC‑GRPO matches or slightly exceeds the original methods, demonstrating that the median approach does not harm performance when more samples are available.

Additional analysis highlights that MAD provides a robust scale estimate, preventing a single high‑reward outlier from inflating the denominator of the advantage normalization. The exclusion of the median sample (zero advantage) does not affect the gradient because its contribution is identically zero, allowing the update size to stay fixed at G samples.

In summary, MC‑GRPO offers a simple, computationally cheap modification—adding one extra rollout and using median‑based centering—that dramatically improves stability and final accuracy for reinforcement‑learning fine‑tuning of LLMs under tight rollout budgets. Its compatibility with existing GRPO‑family algorithms and negligible overhead make it an attractive solution for researchers and practitioners constrained by GPU memory, latency, or throughput limits.

Comments & Academic Discussion

Loading comments...

Leave a Comment