PhoStream: Benchmarking Real-World Streaming for Omnimodal Assistants in Mobile Scenarios

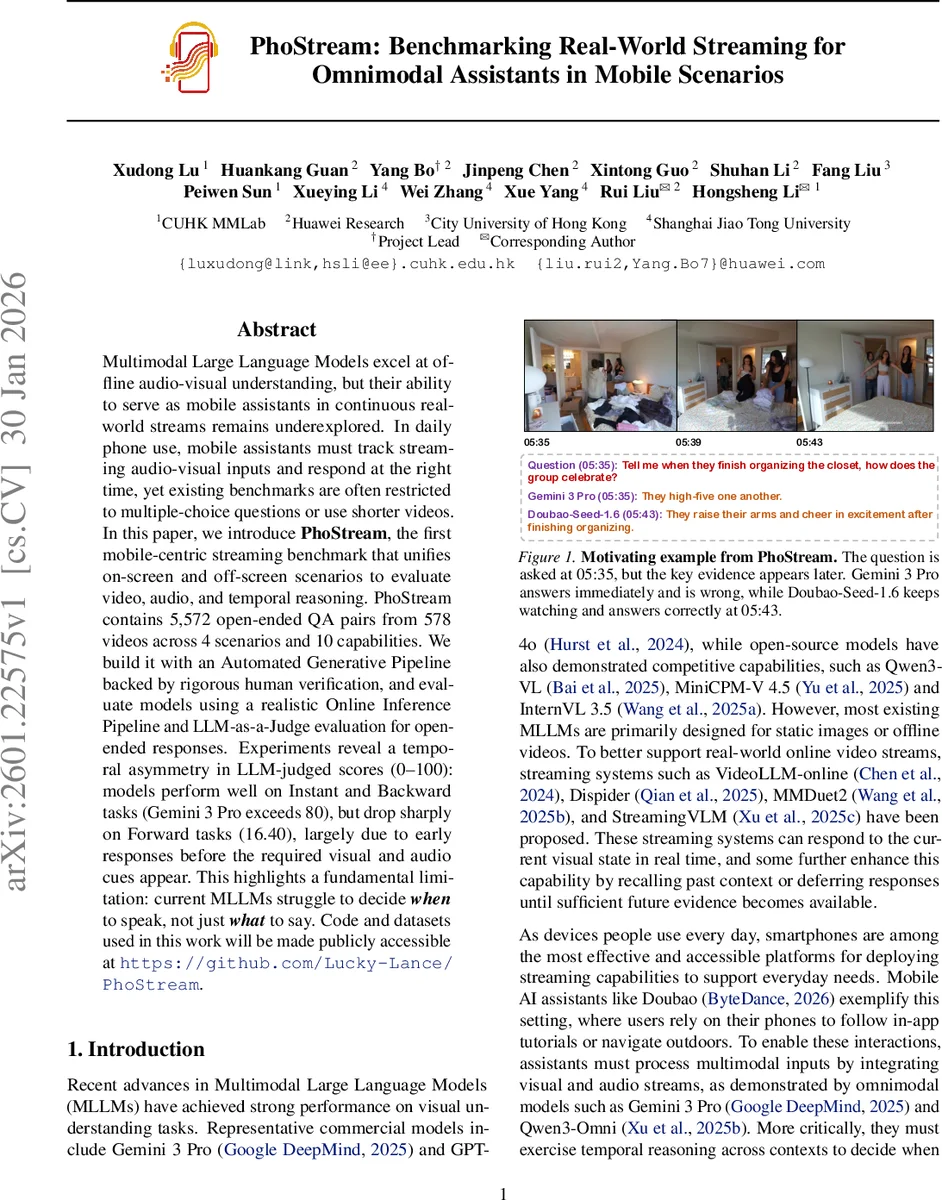

Multimodal Large Language Models excel at offline audio-visual understanding, but their ability to serve as mobile assistants in continuous real-world streams remains underexplored. In daily phone use, mobile assistants must track streaming audio-visual inputs and respond at the right time, yet existing benchmarks are often restricted to multiple-choice questions or use shorter videos. In this paper, we introduce PhoStream, the first mobile-centric streaming benchmark that unifies on-screen and off-screen scenarios to evaluate video, audio, and temporal reasoning. PhoStream contains 5,572 open-ended QA pairs from 578 videos across 4 scenarios and 10 capabilities. We build it with an Automated Generative Pipeline backed by rigorous human verification, and evaluate models using a realistic Online Inference Pipeline and LLM-as-a-Judge evaluation for open-ended responses. Experiments reveal a temporal asymmetry in LLM-judged scores (0-100): models perform well on Instant and Backward tasks (Gemini 3 Pro exceeds 80), but drop sharply on Forward tasks (16.40), largely due to early responses before the required visual and audio cues appear. This highlights a fundamental limitation: current MLLMs struggle to decide when to speak, not just what to say. Code and datasets used in this work will be made publicly accessible at https://github.com/Lucky-Lance/PhoStream.

💡 Research Summary

The paper introduces PhoStream, the first benchmark specifically designed to evaluate multimodal large language models (MLLMs) in real‑world mobile streaming scenarios. While recent MLLMs such as Gemini 3 Pro and Qwen3‑Omni have demonstrated impressive offline video and audio‑visual understanding, their ability to act as mobile assistants that continuously monitor a live video‑audio stream and decide the correct moment to respond has not been systematically measured. PhoStream fills this gap by providing a large‑scale, open‑ended question‑answer dataset that reflects both on‑screen (app UI tutorials) and off‑screen (daily vlogs, egocentric recordings) use cases.

Dataset construction: 578 videos with an average duration of 13.3 minutes are collected from four categories—YouTube vlogs (58.5 %), phone tutorials (24.1 %), phone recordings of app usage (10.3 %), and EgoBlind first‑person videos (7.1 %). From these videos, 5,572 open‑ended QA pairs are generated, covering ten capability dimensions ranging from UI navigation to audio‑visual event recognition. The authors employ an automated generative pipeline powered by Gemini 3 Pro to propose timestamped questions, candidate answers, and provisional verification. Ten human experts then perform a two‑round review, correcting errors, discarding ambiguous items, and ensuring that each answer is fully supported by the video content up to a defined cutoff timestamp.

Task taxonomy: Questions are divided into three temporal categories. Instant questions can be answered immediately using the current 1–2 second window. Backward questions require only past context; they are further split into short‑range retrospectives and long‑range comprehensive queries that may span the entire video up to the question timestamp. Forward questions are asked before the necessary evidence appears; the model must wait and answer only when the evidence becomes available. For Forward items, the cutoff timestamp is set to the earliest moment the answer can be justified. This design explicitly tests “when to speak” rather than merely “what to say.”

Evaluation protocol: An online inference pipeline streams each video at a 1‑second granularity. At the exact moment a question appears, the model receives the query once and may either respond immediately or continue watching. Early responses (answering before the cutoff) and non‑responses are penalized with a score of zero, making the metric highly sensitive to timing errors. Answers are judged by an LLM‑as‑a‑Judge framework that scores on a 0–100 scale, calibrated to align with human judgments.

Experimental findings: State‑of‑the‑art commercial (Gemini 3 Pro) and open‑source models (Qwen3‑Omni, MiniCPM‑V, InternVL) are evaluated. On Instant and Backward tasks, Gemini 3 Pro achieves scores above 80, indicating strong perception and reasoning when the required evidence is already present. However, on Forward tasks the same model drops to 16.40, and Qwen3‑Omni falls to around 12.3. Early‑response rates are alarming: Gemini 3 Pro answers prematurely in 79.12 % of Forward cases, while Qwen3‑Omni does so in 97.89 % of cases. This “impatience” reveals a fundamental limitation: current MLLMs lack mechanisms to defer answers until sufficient multimodal cues have accumulated.

Analysis: The benchmark’s high question density (average 9.6 questions per video) and long video lengths force models to maintain long‑term memory and perform temporal integration across many seconds or minutes. The results suggest that while perception modules (vision, audio, OCR) are mature, the decision‑making component that governs response timing is underdeveloped. The authors propose future directions such as reinforcement‑learning policies that explicitly learn when to answer, dedicated “answer‑time prediction” modules, and on‑device memory‑efficient architectures to support real‑time streaming on smartphones.

In summary, PhoStream provides a comprehensive, realistic, and publicly available platform for assessing multimodal LLMs in mobile streaming contexts. It uncovers a critical, previously overlooked failure mode—early, ill‑timed responses—and sets the stage for research aimed at building truly conversational, temporally aware mobile assistants.

Comments & Academic Discussion

Loading comments...

Leave a Comment