Darwinian Memory: A Training-Free Self-Regulating Memory System for GUI Agent Evolution

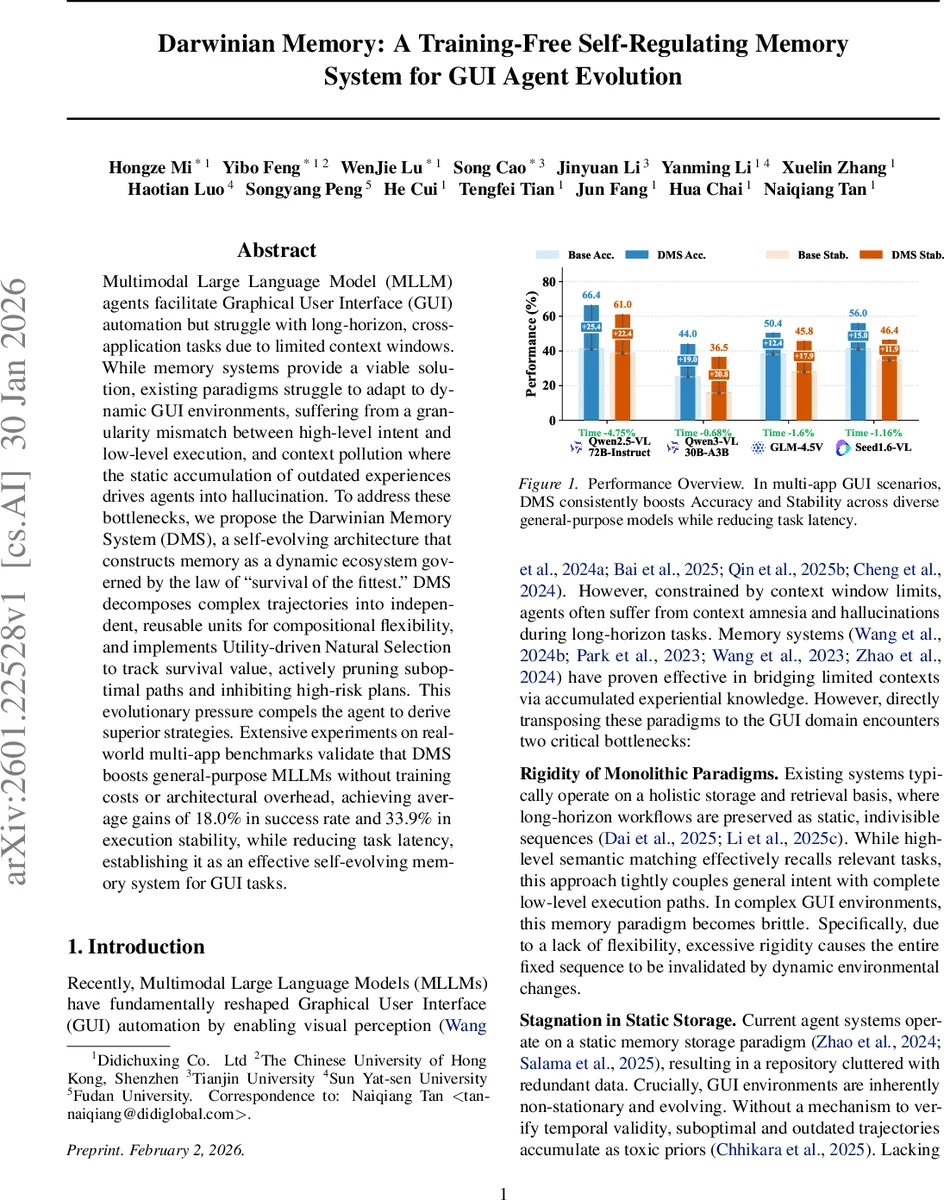

Multimodal Large Language Model (MLLM) agents facilitate Graphical User Interface (GUI) automation but struggle with long-horizon, cross-application tasks due to limited context windows. While memory systems provide a viable solution, existing paradigms struggle to adapt to dynamic GUI environments, suffering from a granularity mismatch between high-level intent and low-level execution, and context pollution where the static accumulation of outdated experiences drives agents into hallucination. To address these bottlenecks, we propose the Darwinian Memory System (DMS), a self-evolving architecture that constructs memory as a dynamic ecosystem governed by the law of survival of the fittest. DMS decomposes complex trajectories into independent, reusable units for compositional flexibility, and implements Utility-driven Natural Selection to track survival value, actively pruning suboptimal paths and inhibiting high-risk plans. This evolutionary pressure compels the agent to derive superior strategies. Extensive experiments on real-world multi-app benchmarks validate that DMS boosts general-purpose MLLMs without training costs or architectural overhead, achieving average gains of 18.0% in success rate and 33.9% in execution stability, while reducing task latency, establishing it as an effective self-evolving memory system for GUI tasks.

💡 Research Summary

The paper tackles two fundamental limitations of multimodal large language model (MLLM)‑based GUI agents: (1) limited context windows that cause “context amnesia” on long‑horizon tasks, and (2) static memory accumulation that leads to “context pollution” and hallucinations. Existing memory approaches store entire interaction histories as monolithic sequences and retrieve them via similarity matching. This tightly couples high‑level intent with low‑level actions, making the system brittle to UI changes and allowing outdated trajectories to persist as toxic priors.

To overcome these issues, the authors introduce the Darwinian Memory System (DMS), a training‑free, self‑evolving memory architecture integrated into a hierarchical Planner‑Actor framework. DMS reshapes memory from a single long sequence into a collection of independent, reusable sub‑plans. Each sub‑plan is expressed as a <Precondition, Goal> pair that describes the required UI state and the desired state transformation. A memory entry consists of (i) the natural‑language plan (semantic index), (ii) the dense execution trajectory τ = {(observation, action)…}, and (iii) meta‑data such as success flag, creation time, reuse count, and failure count. Single‑action trajectories are filtered out to keep the memory dense and meaningful.

Dual‑Factor Retrieval is used to match the current planner instruction with stored sub‑plans. The similarity score is the product of cosine similarities between the precondition embeddings and the goal embeddings, ensuring that both the starting UI context and the intended outcome must align for a hit. This dramatically reduces false positives compared with naïve whole‑sequence matching. The semantic indices are kept in memory while the heavy trajectories are stored on disk, cutting token usage and latency.

To prevent the system from stagnating on sub‑optimal solutions, DMS adds two evolutionary mechanisms:

-

ε‑Mutation – With a small probability ε (e.g., 0.05), the agent ignores a high‑confidence retrieved trajectory and re‑plans from scratch. If the newly generated trajectory τ′ is successful and shorter than the retrieved one, it replaces the existing memory entry in‑place, continuously pushing the memory pool toward higher efficiency.

-

Utility‑Driven Natural Selection – Each memory m_i receives a survival value S(m_i) that combines three factors:

- Marginal Utility: ln(1 + n_i) + V_new, where n_i is the reuse count and V_new boosts newly created memories to protect them from early pruning.

- Adaptive Temporal Decay: a sigmoid 1/(1 + e^{β(Δt − T_half(n_i))}) that penalizes long periods of inactivity. The half‑life T_half grows logarithmically with usage, so frequently used memories decay more slowly.

- Reliability Penalty: 1/(1 + γK_i), where K_i counts verification failures; this quickly demotes error‑prone trajectories.

The final survival score is the product of these three components. Periodically, an Elbow‑Method threshold prunes entries whose scores fall below the elbow point, keeping the memory bank compact and high‑quality.

The authors evaluate DMS on a suite of real‑world multi‑application benchmarks (e.g., file navigation → email composition → web search) using eight state‑of‑the‑art MLLMs (Qwen2.5‑VL, Qwen3‑VL, GLM‑4.5V, etc.) without any fine‑tuning or architectural changes. Results show an average +18 % increase in task success rate and +33.9 % improvement in execution stability. Because the agent reuses stored trajectories, token consumption drops, leading to 4 %–2 % reductions in overall latency. Moreover, the self‑pruning mechanism eliminates outdated or risky memories, markedly reducing hallucination incidents.

Key contributions are:

- A structural shift from monolithic to compositional memory, enabling flexible plan recombination.

- A biologically inspired self‑regulating ecosystem that autonomously prunes toxic priors and applies evolutionary pressure via utility, decay, and reliability signals.

- Demonstration that such a system can be added as a plug‑in to existing models, incurring no training cost while delivering substantial performance gains.

In summary, Darwinian Memory presents a novel, training‑free, self‑evolving memory framework that equips GUI agents with lifelong learning capabilities, allowing them to adapt to dynamic interfaces, avoid stale knowledge, and continuously improve their problem‑solving strategies. This work paves the way for more robust, long‑horizon multimodal agents that can operate reliably across ever‑changing software ecosystems.

Comments & Academic Discussion

Loading comments...

Leave a Comment