Controllable Information Production

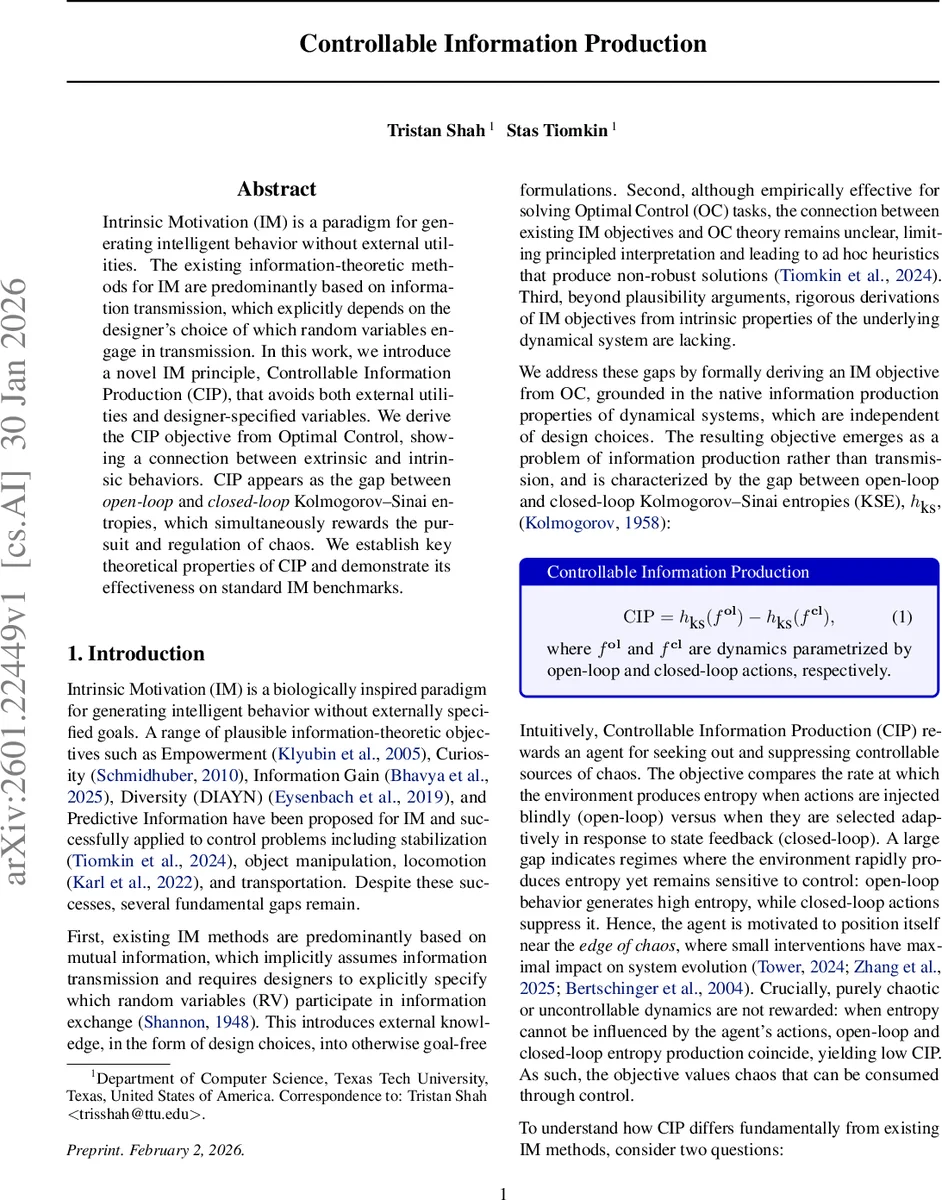

Intrinsic Motivation (IM) is a paradigm for generating intelligent behavior without external utilities. The existing information-theoretic methods for IM are predominantly based on information transmission, which explicitly depends on the designer’s choice of which random variables engage in transmission. In this work, we introduce a novel IM principle, Controllable Information Production (CIP), that avoids both external utilities and designer-specified variables. We derive the CIP objective from Optimal Control, showing a connection between extrinsic and intrinsic behaviors. CIP appears as the gap between open-loop and closed-loop Kolmogorov-Sinai entropies, which simultaneously rewards the pursuit and regulation of chaos. We establish key theoretical properties of CIP and demonstrate its effectiveness on standard IM benchmarks.

💡 Research Summary

The paper introduces a novel intrinsic‑motivation (IM) principle called Controllable Information Production (CIP), which departs from the prevailing information‑transmission framework that requires designers to specify source and target random variables. Instead of measuring how much pre‑existing information is conveyed through the dynamics, CIP quantifies how much new information (entropy) the environment generates and how much of that entropy can be suppressed by an agent’s feedback control.

Formally, the authors consider a discrete‑time dynamical system \(x_{t+1}=f(x_t,u_t)\) and linearize it around a nominal trajectory. Using a standard optimal‑control cost \(J=\sum_{t=0}^{T-1}c_t(x_t,u_t)+c_T(x_T)\), they derive the discrete‑time Algebraic Riccati Equation (D‑ARE) for the value‑function Hessian \(V^{\pi}_{xx}\). Lemma 4.1 shows that the optimal linear feedback policy naturally decomposes into an extrinsic drift term (goal‑directed) and an intrinsic feedback term that stabilizes unstable perturbations.
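To make the Riccati step concrete, the following is a minimal sketch of a finite‑horizon backward recursion for a linearized system. It is not the paper's derivation: it assumes a time‑invariant linearization `A`, `B`, purely quadratic costs with hypothetical Hessians `Q`, `R`, and `Q_T`, and it omits the drift (feedforward) term, keeping only the feedback gain. The matrix `P` plays the role of the value‑function Hessian.

```python
import numpy as np

def lqr_backward_pass(A, B, Q, R, Q_T, T):
    """Finite-horizon discrete-time Riccati recursion (illustrative sketch).

    Returns the feedback gains K_0..K_{T-1} and the value Hessians P_0..P_T
    for the policy u_t = -K_t x_t.
    """
    P = Q_T
    gains, hessians = [], [P]
    for _ in range(T):
        # Feedback gain from the current value Hessian.
        K = np.linalg.solve(R + B.T @ P @ B, B.T @ P @ A)
        # Riccati update, symmetrized to limit numerical drift.
        P = Q + A.T @ P @ (A - B @ K)
        P = 0.5 * (P + P.T)
        gains.append(K)
        hessians.append(P)
    gains.reverse()
    hessians.reverse()
    return gains, hessians

# Hypothetical linearization with one unstable mode (eigenvalue 1.2).
A = np.array([[1.2, 0.1],
              [0.0, 0.8]])
B = np.array([[0.0],
              [1.0]])
Q = np.eye(2)   # assumed state-cost Hessian
R = np.eye(1)   # assumed control-cost Hessian

gains, hessians = lqr_backward_pass(A, B, Q, R, Q, T=200)
K0 = gains[0]

rho_open = max(abs(np.linalg.eigvals(A)))
rho_closed = max(abs(np.linalg.eigvals(A - B @ K0)))
print("open-loop spectral radius:  ", rho_open)
print("closed-loop spectral radius:", rho_closed)
```

In this toy setting the open‑loop spectral radius exceeds one while the closed‑loop one falls below it, which illustrates the feedback term stabilizing an unstable perturbation.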

The key insight is that the Hessian \(V^{\pi}_{xx}\) contains two hidden entropy rates. By selectively removing control‑dependent terms from the D‑ARE recursion, the authors define two auxiliary matrices \(X_t\) and \(Y_t\). The asymptotic growth rates of \(\log\det X_0\) and \(\log\det Y_0\) are proved (Lemma 4.3) to equal the Kolmogorov‑Sinai entropy (KSE) of the closed‑loop dynamics \(h_{KS}(f_{cl})\) and the open‑loop dynamics \(h_{KS}(f_{ol})\), respectively. Consequently, the difference between these two entropy rates, that is, the gap between the open‑loop and closed‑loop KSE, is the CIP objective.
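As a rough illustration of an open‑loop versus closed‑loop entropy gap, the sketch below works on a linear time‑invariant toy system. It does not implement the paper's \(X_t\) and \(Y_t\) construction. Instead it assumes a Pesin‑type identity, under which the entropy rate of a linear map is the sum of \(\log|\lambda_i|\) over eigenvalues with \(|\lambda_i|>1\). The matrices `A` and `B`, the hand‑picked gain `K`, and the sign convention (open‑loop minus closed‑loop) are all assumptions made for this example.

```python
import numpy as np

def linear_entropy_rate(M):
    """Sum of log|lambda| over unstable eigenvalues of M (Pesin-type surrogate)."""
    mags = np.abs(np.linalg.eigvals(M))
    return float(np.sum(np.log(mags[mags > 1.0])))

# Hypothetical open-loop linearization with one unstable mode (eigenvalue 1.2).
A = np.array([[1.2, 0.1],
              [0.0, 0.8]])
B = np.array([[0.0],
              [1.0]])

# Hand-picked stabilizing gain (assumption): places both closed-loop
# eigenvalues of A - B K at approximately 0.5.
K = np.array([[4.9, 1.0]])

h_open = linear_entropy_rate(A)            # entropy rate without feedback
h_closed = linear_entropy_rate(A - B @ K)  # entropy rate under feedback
gap = h_open - h_closed                    # assumed sign convention

print("open-loop entropy rate:  ", h_open)
print("closed-loop entropy rate:", h_closed)
print("gap:                     ", gap)
```

Here the feedback removes the single unstable direction, so the gap equals the open‑loop rate \(\log 1.2\); a gain that left the unstable mode untouched would give a gap of zero.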
