Beyond Activation Patterns: A Weight-Based Out-of-Context Explanation of Sparse Autoencoder Features

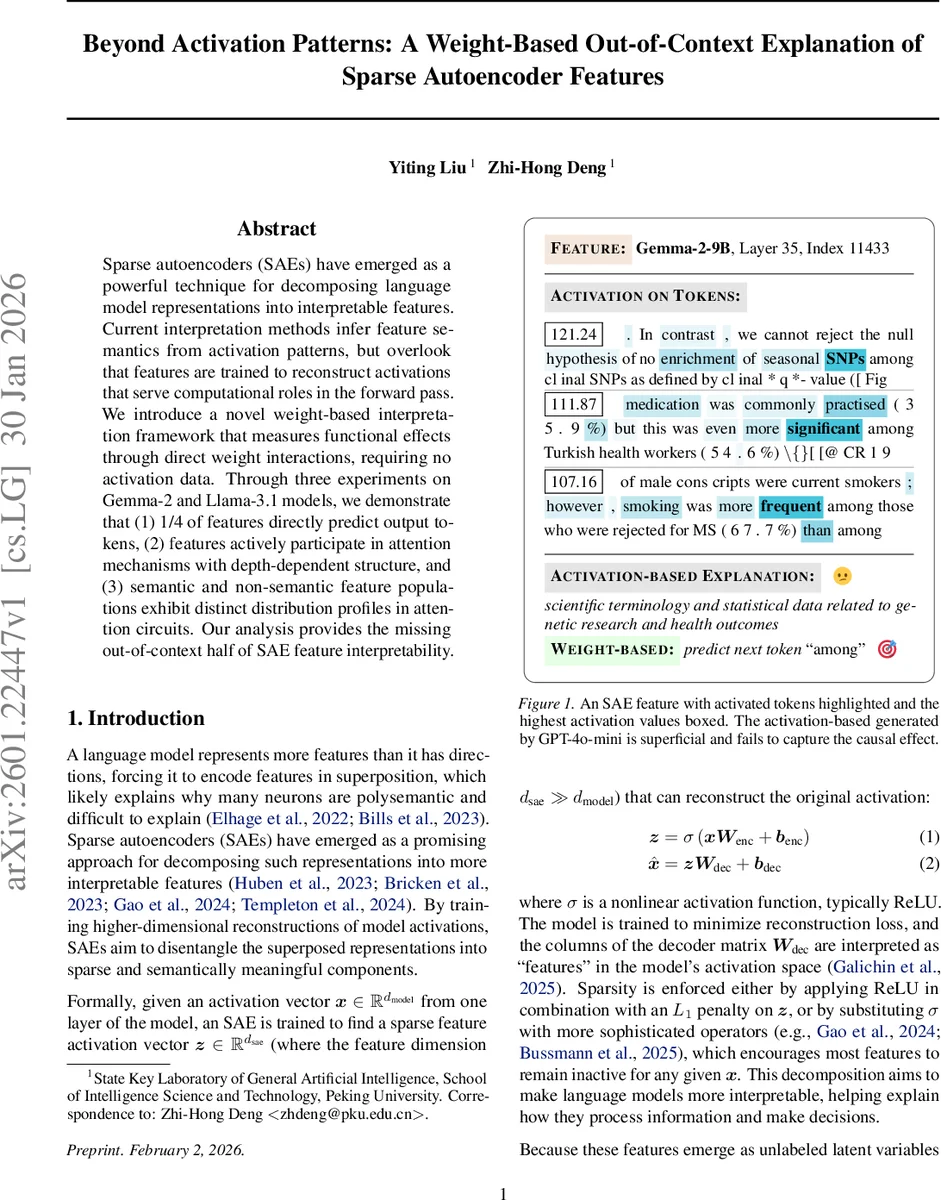

Sparse autoencoders (SAEs) have emerged as a powerful technique for decomposing language model representations into interpretable features. Current interpretation methods infer feature semantics from activation patterns, but overlook that features are trained to reconstruct activations that serve computational roles in the forward pass. We introduce a novel weight-based interpretation framework that measures functional effects through direct weight interactions, requiring no activation data. Through three experiments on Gemma-2 and Llama-3.1 models, we demonstrate that (1) 1/4 of features directly predict output tokens, (2) features actively participate in attention mechanisms with depth-dependent structure, and (3) semantic and non-semantic feature populations exhibit distinct distribution profiles in attention circuits. Our analysis provides the missing out-of-context half of SAE feature interpretability.

💡 Research Summary

Sparse autoencoders (SAEs) have become a popular tool for dissecting the high‑dimensional hidden states of large language models (LLMs) into more interpretable components. Existing interpretation work, however, relies almost exclusively on “activation‑based” analyses: researchers collect input contexts that strongly activate a given feature, feed those contexts to a language model, and ask a separate LLM to generate a human‑readable label. While this approach tells us when a feature fires, it does not reveal what effect the feature has on the model’s computation when it is turned on or off. In other words, activation‑based methods capture correlation but miss causality.

The present paper proposes a complementary “weight‑based out‑of‑context” framework that bypasses activation data entirely. The key insight is that each SAE feature consists of an encoder vector (W^{enc}_{·,i}) and a decoder vector (W^{dec}_i). When the feature is active, its decoder vector is added linearly to the residual stream and eventually passes through the model’s unembedding matrix (W_U). By multiplying (W^{dec}_i) with (W_U) (and applying the final layer norm) the authors obtain a logit vector (l_i^D) that directly shows which vocabulary tokens the feature promotes or suppresses. Symmetrically, multiplying the encoder vector with the embedding matrix (W_E) yields an “input logit” (l_i^E), indicating how the feature aligns with input tokens.

To decide whether a feature is semantically meaningful, the authors design 23 candidate metrics and settle on three that are only moderately correlated: (1) Levenshtein similarity among the top‑10 tokens (low distance indicates lexical variants of a single concept), (2) cosine similarity of the embeddings of those tokens (high similarity indicates semantic cohesion), and (3) entropy of the top‑100 logits (low entropy means the feature’s influence is concentrated on a few tokens). They set each metric’s threshold at the 50th percentile of a representative middle layer (layer 27 for Gemma‑2‑9B) and label a feature as “semantic” only if it passes all three thresholds.

Experiment 1 – Output‑centric analysis

Applying the three‑metric filter to SAEs trained on Gemma‑2‑2B, Gemma‑2‑9B, and Llama‑3.1‑8B reveals that roughly one‑quarter of all features directly predict output tokens. In Gemma models the pass‑rate follows a U‑shaped curve across depth: high in early layers (close to the input embedding), low in the middle, and high again in the final layers (close to the logits). This pattern matches the intuition that early layers encode concrete lexical information, middle layers perform abstract composition, and late layers specialize for token generation.

In Llama‑3.1 the picture is different because the model unties its embedding and unembedding matrices. The decoder‑unembedding analysis shows a monotonic increase: virtually no semantic alignment in the first few layers, rising steadily to over 60 % in the top layer. The symmetric encoder‑embedding analysis shows the opposite trend: strong alignment in the first third of the network, then a rapid drop to near zero. Together these trajectories suggest that untied matrices allow the network to specialize depth‑wise: early layers focus on input‑space representations, while later layers become dedicated to output‑space predictions.

Experiment 2 – Interaction with attention

Beyond token prediction, the authors probe whether features influence the attention mechanism. By multiplying each decoder vector with the query, key, and value matrices of every attention head, they compute a “head‑impact score.” Approximately 25 % of features have high scores for at least one head, indicating that those features can reshape attention weights and thus affect how information flows between tokens. This demonstrates that SAE features are not passive reconstructions but active participants in the model’s computational graph.

Experiment 3 – Semantic vs. non‑semantic populations

The authors compare the distribution of semantic and non‑semantic features across layers. Non‑semantic features tend to cluster in the middle layers, exhibit higher entropy logits, and have flatter token distributions, suggesting they serve more diffuse, possibly regularizing roles. Semantic features, by contrast, have sharply peaked logits and low entropy, confirming their direct causal role in token selection.

Overall, the paper establishes that (1) a sizable fraction of SAE features are genuine output predictors, (2) these features are organized in depth‑dependent patterns that differ across architectures, and (3) many features actively modulate attention, revealing a mechanistic role that activation‑based methods miss. By releasing the code and trained SAEs, the authors enable reproducibility and invite the community to extend weight‑based analysis to other model families (e.g., multimodal transformers, encoder‑decoder systems). This work shifts interpretability research from merely describing when a neuron fires to understanding how it shapes the model’s computation, thereby bridging the gap between semantic labeling and causal explanation.

Comments & Academic Discussion

Loading comments...

Leave a Comment