Towards Resiliency in Large Language Model Serving with KevlarFlow

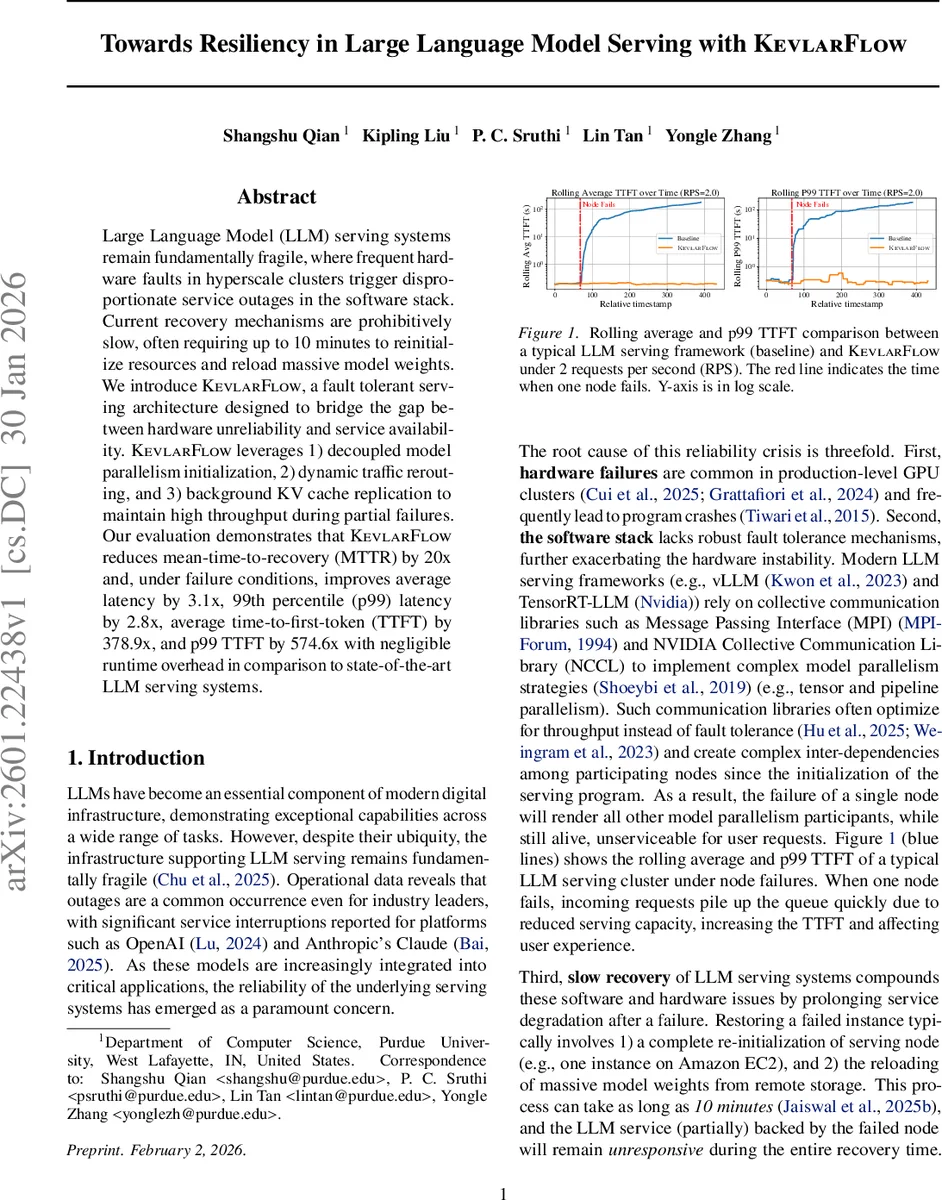

Large Language Model (LLM) serving systems remain fundamentally fragile, where frequent hardware faults in hyperscale clusters trigger disproportionate service outages in the software stack. Current recovery mechanisms are prohibitively slow, often requiring up to 10 minutes to reinitialize resources and reload massive model weights. We introduce KevlarFlow, a fault tolerant serving architecture designed to bridge the gap between hardware unreliability and service availability. KevlarFlow leverages 1) decoupled model parallelism initialization, 2) dynamic traffic rerouting, and 3) background KV cache replication to maintain high throughput during partial failures. Our evaluation demonstrates that KevlarFlow reduces mean-time-to-recovery (MTTR) by 20x and, under failure conditions, improves average latency by 3.1x, 99th percentile (p99) latency by 2.8x, average time-to-first-token (TTFT) by 378.9x, and p99 TTFT by 574.6x with negligible runtime overhead in comparison to state-of-the-art LLM serving systems.

💡 Research Summary

The paper “Towards Resiliency in Large Language Model Serving with KevlarFlow” addresses a critical weakness in current LLM serving infrastructures: extreme fragility in the face of hardware failures. Modern serving stacks such as vLLM or Nvidia’s TensorRT‑LLM rely on collective communication libraries (MPI, NCCL) to implement tensor and pipeline parallelism. These libraries are optimized for throughput, not fault tolerance, and they bind the entire model parallel group to a static communicator created at startup. Consequently, the failure of a single GPU node collapses the whole parallel group, forcing a full service restart and a costly reload of multi‑gigabyte model weights—a process that can take up to ten minutes, dramatically inflating mean‑time‑to‑recovery (MTTR) and violating latency Service Level Objectives (SLOs).

KevlarFlow proposes a fundamentally different architecture that eliminates the single point of failure and decouples logical availability from physical node health. Its design rests on three novel mechanisms:

-

Decoupled Model Parallelism Initialization – Instead of a monolithic startup sequence (state sharing → communicator creation → weight loading), each node autonomously discovers peers, validates health, and only then constructs a communicator. This permits dynamic re‑configuration: when a node crashes, the remaining healthy nodes can quickly form a new communicator and continue inference using already‑loaded weights, avoiding a full restart.

-

Dynamic Traffic Rerouting – KevlarFlow treats a load‑balancer group as a pool of identical model replicas. If a pipeline stage becomes unavailable, traffic is rerouted around the failed node to other replicas. Only the capacity of the failed node is lost; the rest of the GPUs keep serving requests, preserving throughput and preventing request queues from exploding.

-

Background KV‑Cache Replication – The KV cache stores attention keys and values for each token, dramatically accelerating generation. Traditional systems keep this cache solely in GPU memory; a node failure erases it, forcing a full request restart. KevlarFlow continuously replicates each request’s KV cache to the GPU memory of other nodes in the same pool. Upon failure, the replicated cache is used to resume the in‑flight request instantly, eliminating the latency spike associated with cache loss.

The authors evaluate KevlarFlow on a four‑stage pipeline‑parallel model under a modest 2 RPS load. When a single node fails, KevlarFlow achieves:

- Average latency improvement of 3.1× and p99 latency improvement of 2.8×.

- Average time‑to‑first‑token (TTFT) improvement of 378.9× and p99 TTFT improvement of 574.6×.

- MTTR reduction from ~10 minutes to ~30 seconds (20× faster).

These gains come with “negligible” overhead during normal operation; the background KV‑cache replication consumes minimal bandwidth and memory, and the decoupled initialization adds only a small constant to startup time. Compared to state‑of‑the‑art serving systems, KevlarFlow’s performance degradation under failure is dramatically lower, while its steady‑state throughput remains within 1–2 % of the baseline.

In the related‑work discussion, the paper distinguishes itself from fault‑tolerance techniques used in LLM training (checkpointing, pipeline bubble redistribution) which are unsuitable for inference because training runs a single forward pass per iteration and does not maintain per‑request KV state. Existing serving‑focused fault‑tolerance approaches (DejaVu, SpotServe, AnchorTP, R2CCL) either require a full instance restart after topology changes, handle only intra‑node GPU failures, or target NIC failures. None of them can keep a serving instance alive after an entire node crash. KevlarFlow’s key innovation is to split a serving instance into multiple independent fault domains (the nodes of a multi‑node pipeline), allowing the system to survive node loss without restarting the whole service.

The paper concludes that KevlarFlow transforms LLM serving from a “fail‑stop” paradigm to a “fail‑stutter” model, where service gracefully degrades rather than collapses. This self‑healing fabric is especially valuable for large‑scale AI deployments where hardware failures are frequent and latency SLOs are strict. Future work could explore scaling to many‑model, many‑tenant environments, integration with heterogeneous interconnects (NVLink, PCIe, Ethernet), and quantitative cost‑benefit analyses for cloud providers.

Overall, KevlarFlow represents a significant step toward robust, production‑grade LLM inference, offering orders‑of‑magnitude reductions in recovery latency and tail‑latency spikes while preserving near‑optimal throughput.

Comments & Academic Discussion

Loading comments...

Leave a Comment