MARE: Multimodal Alignment and Reinforcement for Explainable Deepfake Detection via Vision-Language Models

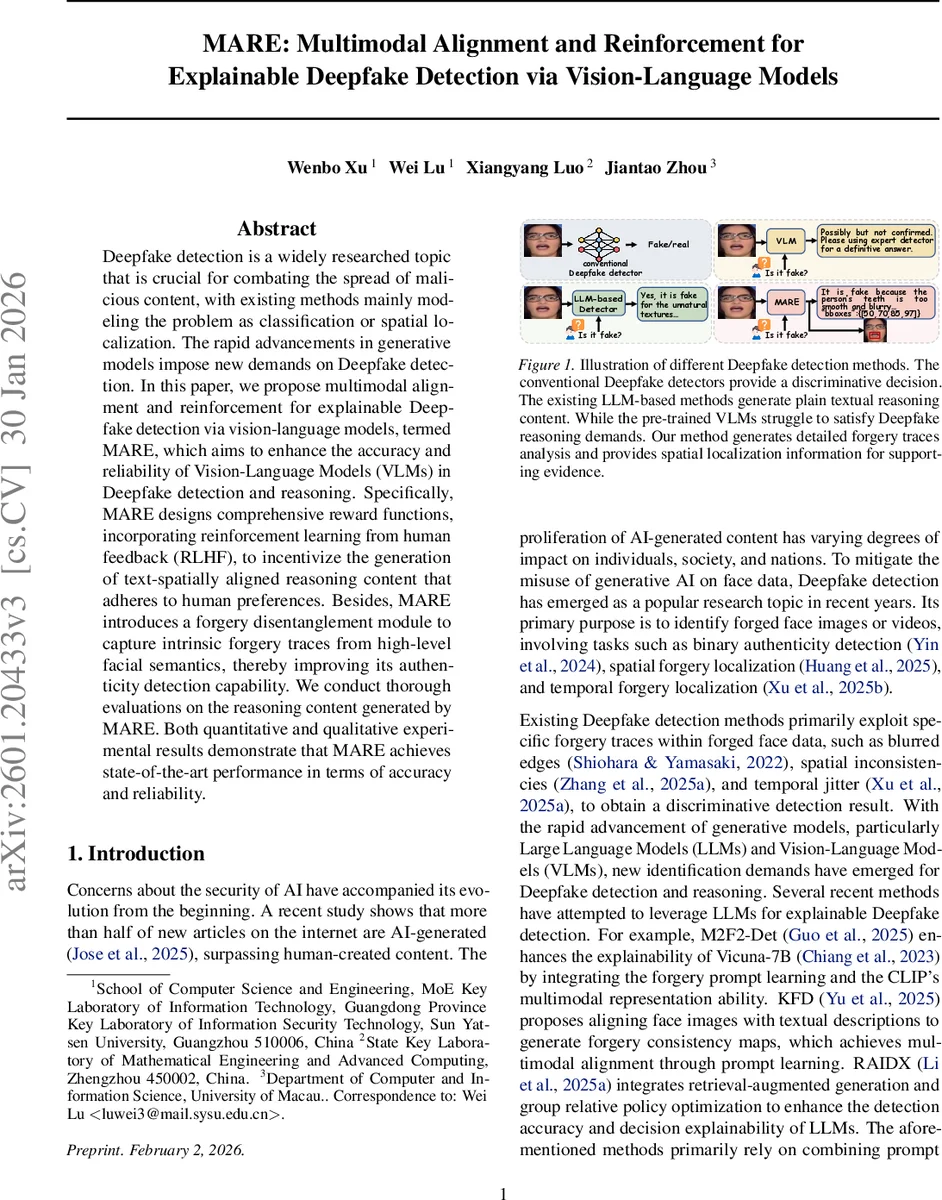

Deepfake detection is a widely researched topic that is crucial for combating the spread of malicious content, with existing methods mainly modeling the problem as classification or spatial localization. The rapid advancements in generative models impose new demands on Deepfake detection. In this paper, we propose multimodal alignment and reinforcement for explainable Deepfake detection via vision-language models, termed MARE, which aims to enhance the accuracy and reliability of Vision-Language Models (VLMs) in Deepfake detection and reasoning. Specifically, MARE designs comprehensive reward functions, incorporating reinforcement learning from human feedback (RLHF), to incentivize the generation of text-spatially aligned reasoning content that adheres to human preferences. Besides, MARE introduces a forgery disentanglement module to capture intrinsic forgery traces from high-level facial semantics, thereby improving its authenticity detection capability. We conduct thorough evaluations on the reasoning content generated by MARE. Both quantitative and qualitative experimental results demonstrate that MARE achieves state-of-the-art performance in terms of accuracy and reliability.

💡 Research Summary

The paper introduces MARE, a novel framework that leverages vision‑language models (VLMs) for explainable deepfake detection by integrating multimodal alignment, reinforcement learning from human feedback (RLHF), and a dedicated forgery disentanglement module. Traditional deepfake detectors focus on binary classification or spatial localization, providing little insight into why a media piece is fake. Recent attempts to use large language models (LLMs) for textual explanations suffer from two major drawbacks: (1) they struggle to capture subtle forgery cues that are often imperceptible to the naked eye, and (2) the generated explanations are not tightly linked to visual evidence, leading to hallucinations and reduced trustworthiness.

MARE addresses these issues in three interconnected components. First, the Forgery Disentanglement Module (FDM) decomposes an input face into three latent sub‑spaces—identity, structural, and forgery‑trace features—using contrastive learning and representation disentanglement. The forgery‑trace branch explicitly encodes subtle artifacts (e.g., blurred eyes, inconsistent mouth geometry) that later guide the VLM’s reasoning process.

Second, the authors construct a Deepfake Multimodal Alignment (DMA) dataset by augmenting existing image‑text deepfake corpora with spatial bounding boxes for facial regions mentioned in the textual annotations. A two‑stage pipeline extracts keywords (eyes, nose, mouth, etc.) from the ground‑truth description and then applies a landmark detector to obtain precise coordinates, resulting in tuples ⟨image, query, text, boxes⟩ that serve as supervision for multimodal alignment.

Third, under the RLHF paradigm, MARE defines a composite reward system comprising five dimensions:

- Format Reward (R_f) – enforces a strict output schema using

… and… tags, and requires an “explanation” field together with a “bboxes” field. - Accuracy Reward (R_a) – grants a unit reward when the extracted label (“real” or “fake”) matches the ground truth.

- Text Relevance Reward (R_t) – measures cosine similarity between the generated explanation and the human‑written description via a Sentence‑Transformer encoder.

- Region‑of‑Interest Reward (R_r) – evaluates the overlap (IoU) between generated bounding boxes and the true forged regions.

- Alignment Reward (R_align) – checks that each facial region referenced in the text has a corresponding bounding box, encouraging a one‑to‑one mapping.

These rewards are incorporated into a Generalized Relative Policy Optimization (GRPO) loop, which updates the VLM’s policy to maximize the weighted sum of the five signals. Consequently, the model learns to produce explanations that are not only factually correct but also visually grounded.

Experimental evaluation spans three major public deepfake benchmarks (FaceForensics++, DFDC, Celeb‑DF). MARE achieves an average detection accuracy of 92.4 % and AUROC improvements of 3–5 % over the strongest baselines (M2F2‑Det, KFD, RAIDX). In terms of explanation quality, human evaluators rate MARE’s outputs higher on persuasiveness, reliability, and spatial consistency. Ablation studies reveal that removing the forgery disentanglement module drops accuracy by ~8 % points, while omitting the alignment reward leads to a sharp decline in text‑spatial consistency.

The authors acknowledge several limitations: the disentanglement currently captures only three predefined feature types, potentially missing more complex manipulation patterns; collecting high‑quality human preference data for RLHF is costly and may bias the model toward overly rigid formats; and inference latency remains a concern for real‑time video streams. Future work is suggested to expand the feature space, develop lightweight RLHF pipelines, and extend alignment to temporal sequences.

In summary, MARE demonstrates that a carefully engineered combination of multimodal alignment, reinforcement learning, and forensic feature disentanglement can substantially improve both the detection performance and explainability of VLM‑based deepfake detectors, paving the way for more trustworthy AI‑driven media forensics.

Comments & Academic Discussion

Loading comments...

Leave a Comment