Supporting Informed Self-Disclosure: Design Recommendations for Presenting AI-Estimates of Privacy Risks to Users

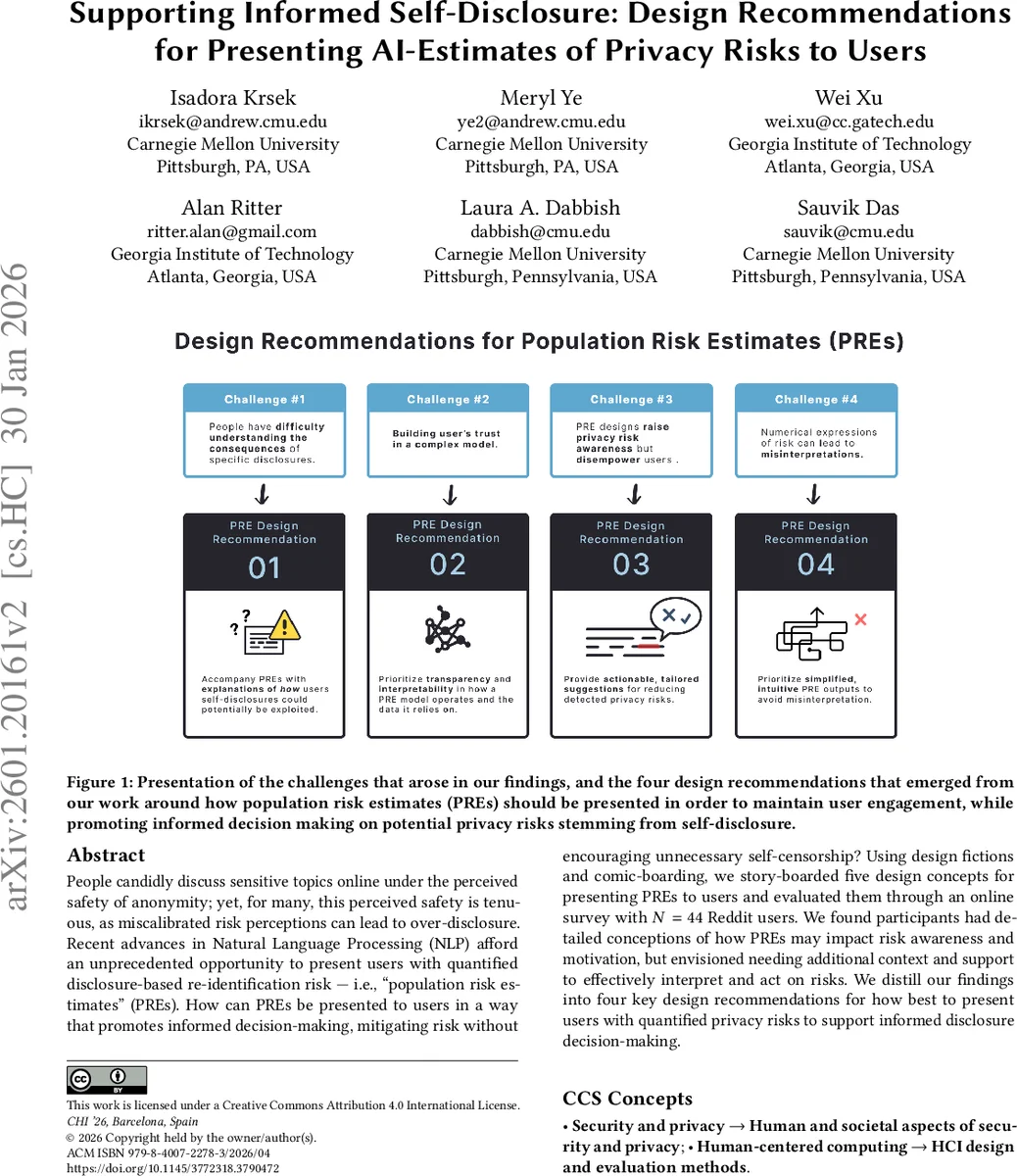

People candidly discuss sensitive topics online under the perceived safety of anonymity; yet, for many, this perceived safety is tenuous, as miscalibrated risk perceptions can lead to over-disclosure. Recent advances in Natural Language Processing (NLP) afford an unprecedented opportunity to present users with quantified disclosure-based re-identification risk (i.e., “population risk estimates”, PREs). How can PREs be presented to users in a way that promotes informed decision-making, mitigating risk without encouraging unnecessary self-censorship? Using design fictions and comic-boarding, we story-boarded five design concepts for presenting PREs to users and evaluated them through an online survey with N = 44 Reddit users. We found participants had detailed conceptions of how PREs may impact risk awareness and motivation, but envisioned needing additional context and support to effectively interpret and act on risks. We distill our findings into four key design recommendations for how best to present users with quantified privacy risks to support informed disclosure decision-making.

💡 Research Summary

The paper investigates how to present AI‑generated “population risk estimates” (PREs), quantitative estimates of how many people share a set of disclosed attributes, to users who are about to share sensitive information online. The authors argue that while self‑disclosure on platforms such as Reddit provides social support, users often misjudge the privacy risks because re‑identification threats are abstract. Recent advances in large language model reasoning make it possible to compute PREs in real time, but the way these numbers are displayed may determine whether users become more aware, motivated, and capable of managing their disclosures.
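To make the idea of a PRE concrete, the following minimal sketch counts how many records in a small, made-up population match every attribute a post discloses. It is an illustration only: the paper describes PREs as estimated with NLP models, whereas this sketch swaps in an exact count over a toy table. The function name `population_risk_estimate`, the `TOY_POPULATION` data, and the attribute names are all hypothetical.

```python
from typing import Mapping, Sequence

# Hypothetical toy population; a real system would estimate this count
# rather than look it up in a table.
TOY_POPULATION = [
    {"city": "Springfield", "sport": "tennis", "job": "nurse"},
    {"city": "Springfield", "sport": "tennis", "job": "teacher"},
    {"city": "Springfield", "sport": "golf", "job": "nurse"},
    {"city": "Shelbyville", "sport": "tennis", "job": "nurse"},
]


def population_risk_estimate(
    population: Sequence[Mapping[str, str]],
    disclosed: Mapping[str, str],
) -> int:
    """Return k, the number of people who match every disclosed attribute.

    A smaller k means the disclosure narrows the author down to fewer
    people, i.e. a higher re-identification risk.
    """
    return sum(
        1
        for person in population
        if all(person.get(attr) == value for attr, value in disclosed.items())
    )


if __name__ == "__main__":
    post = {"city": "Springfield", "sport": "tennis", "job": "nurse"}
    print(population_risk_estimate(TOY_POPULATION, post))
```

In this toy data, disclosing only the city matches 3 people, adding the sport narrows it to 2, and adding the job narrows it to 1. Each extra detail shrinks k, which is the effect the PRE is meant to surface to the user.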

To explore the design space, the researchers created five visual‑feedback concepts using speculative design and comic‑boarding techniques. The concepts ranged from a bare numeric k‑value (the estimated number of people who match the disclosed attributes), to a colour‑coded risk bar, to a design that offers concrete editing suggestions (e.g., “replace ‘tennis’ with ‘sport’ to increase anonymity”), to a narrative explaining how an attacker could combine the disclosed facts, and finally a design that proposes alternative channels (e.g., a private forum) when risk is high. Each concept was embedded in a short illustrated scenario depicting a Reddit user preparing a post about a personal problem.

A “speed‑dating” study was conducted with 44 Reddit participants recruited via Prolific. Participants first answered baseline questions about their expectations of anonymity and prior privacy knowledge. Then, for each comic board, they rated perceived risk awareness, motivation to mitigate risk, and perceived ability to act, using five‑point Likert scales, and provided free‑form reflections. The authors analysed both quantitative ratings and qualitative comments.

Key findings: (1) PREs substantially raise risk awareness: 74% of participants reported a higher perception of danger after seeing a PRE. (2) Designs that pair the PRE with concrete, actionable suggestions most effectively boost both motivation and ability; users reported they would “tweak wording while preserving the core message” rather than delete the post entirely. (3) Explaining the derivation of the PRE (e.g., showing how an attacker could combine public datasets) improves users’ mental model of the threat and encourages more deliberate decision‑making. (4) Simple numeric displays or colour‑only cues can cause confusion or anxiety, especially for users with visual impairments or cultural differences in colour interpretation. (5) When risk is deemed high, offering an alternative, lower‑risk venue helps users avoid self‑censorship while still obtaining support.

From these insights the authors distilled four design recommendations: (i) accompany each PRE with actionable guidance that preserves communicative intent (e.g., specific wording changes, anonymisation techniques); (ii) transparently explain how the PRE was computed and illustrate plausible attacker scenarios; (iii) present risk information in a balanced way that warns without prompting excessive self‑censorship; (iv) use clear, jargon‑free language and intuitive visual metaphors (icons, simple charts) to ensure interpretability.
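As a rough illustration of recommendations (i) and (iv), the sketch below turns a PRE into a plain-language message with a text risk label (so the cue does not depend on colour alone) and an optional editing suggestion. Everything here is an assumption made for illustration: the `RiskFeedback` type, the `describe_risk` function, and especially the thresholds, which are not taken from the paper.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class RiskFeedback:
    level: str    # text label, usable alongside or instead of a colour cue
    message: str  # jargon-free explanation shown to the user


def describe_risk(
    k: int,
    suggestion: Optional[str] = None,
    high_below: int = 10,        # hypothetical threshold, not from the paper
    moderate_below: int = 1000,  # hypothetical threshold, not from the paper
) -> RiskFeedback:
    """Format a PRE (k matching people) as plain-language feedback."""
    if k < 1:
        raise ValueError("k must be at least 1: the author always matches")

    if k < high_below:
        level = "high"
    elif k < moderate_below:
        level = "moderate"
    else:
        level = "low"

    noun, verb = ("person", "matches") if k == 1 else ("people", "match")
    message = (
        f"About {k:,} {noun} {verb} the details in this post "
        f"({level} risk of being identified)."
    )
    if suggestion:
        # Pair the number with an action that keeps the post's meaning.
        message += f" Suggestion: {suggestion}"
    return RiskFeedback(level=level, message=message)


if __name__ == "__main__":
    feedback = describe_risk(3, "replace 'tennis' with 'sport'")
    print(feedback.message)
```

Keeping the label and the suggestion in the same message reflects the finding that a bare number on its own can confuse or alarm users; where the thresholds should sit is an open design question that the sketch does not settle.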

The paper also acknowledges limitations: the sample is limited to Reddit users, which may not represent other platforms; the study used static mock‑ups rather than a live system, so real‑time performance, latency, and false‑positive concerns remain untested; and the designs assume accurate PRE calculations, yet model errors could mislead users. Future work should prototype an integrated AI‑assistant that delivers PREs during composition, evaluate longitudinal effects on disclosure behaviour, and explore personalization of feedback based on user expertise.

Overall, the study demonstrates that quantified privacy risk estimates can make abstract re‑identification threats concrete, but their utility hinges on thoughtful presentation that supports awareness, motivation, and ability simultaneously. The four design guidelines provide a concrete roadmap for building user‑centred AI privacy assistants that help people share responsibly without unnecessary self‑censorship.
