VGGT-SLAM 2.0: Real-time Dense Feed-forward Scene Reconstruction

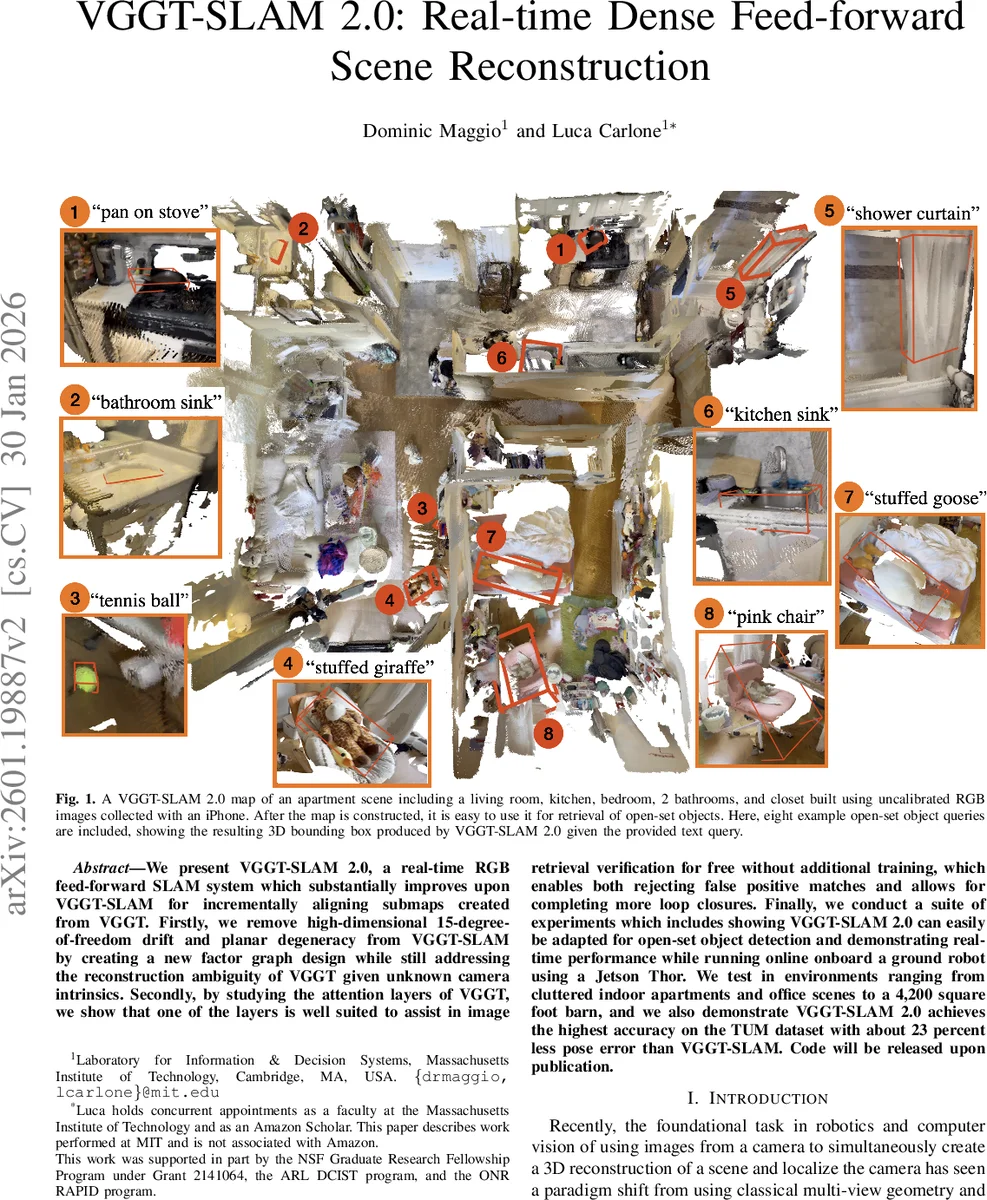

We present VGGT-SLAM 2.0, a real-time RGB feed-forward SLAM system which substantially improves upon VGGT-SLAM for incrementally aligning submaps created from VGGT. Firstly, we remove high-dimensional 15-degree-of-freedom drift and planar degeneracy from VGGT-SLAM by creating a new factor graph design while still addressing the reconstruction ambiguity of VGGT given unknown camera intrinsics. Secondly, by studying the attention layers of VGGT, we show that one of the layers is well suited to assist in image retrieval verification for free without additional training, which enables both rejecting false positive matches and allows for completing more loop closures. Finally, we conduct a suite of experiments which includes showing VGGT-SLAM 2.0 can easily be adapted for open-set object detection and demonstrating real-time performance while running online onboard a ground robot using a Jetson Thor. We test in environments ranging from cluttered indoor apartments and office scenes to a 4,200 square foot barn, and we also demonstrate VGGT-SLAM 2.0 achieves the highest accuracy on the TUM dataset with about 23 percent less pose error than VGGT-SLAM. Code will be released upon publication.

💡 Research Summary

VGGT‑SLAM 2.0 builds on the original VGGT‑SLAM framework by addressing three major limitations that hindered large‑scale, real‑time deployment. First, it eliminates the 15‑degree‑of‑freedom (DoF) SL(4) alignment used in the prior system, which caused rapid drift and planar degeneracy. By enforcing that overlapping frames between sub‑maps share identical position, orientation, and intrinsic calibration, the only remaining free variable is a global scale factor. This scale is estimated robustly as the median ratio of corresponding 3‑D point distances after warping the points to a common calibration.

Second, the authors redesign the factor graph: every keyframe becomes a node, intra‑sub‑map edges encode SE(3) pose relationships, and inter‑sub‑map edges encode only calibration and scale consistency for overlapping frames. This graph structure allows global optimization to directly correct translation and rotation errors that were previously handled only indirectly, resulting in substantially reduced drift before loop closure.

Third, an insightful analysis of VGGT’s internal attention layers reveals that layer 22 consistently produces a “spotlight” attention pattern that highlights true correspondences between two images. Leveraging this observation, the paper defines a match score (γₜ) and an aggregated confidence metric (α_match) that quantify how well layer 22 predicts overlap. When combined with an external image‑retrieval system (SALAD), this verification step filters out false positives, enabling more reliable loop closures even in texture‑poor or repetitive environments.

The system is demonstrated on a Jetson Thor platform, achieving real‑time performance (≈30 fps) on a ground robot. It also integrates open‑set object detection by projecting 3‑D bounding boxes onto the dense VGGT‑derived map. Experiments span cluttered indoor apartments, office spaces, a 4,200 sq ft barn, and outdoor KITTI sequences. On the TUM RGB‑D benchmark, VGGT‑SLAM 2.0 attains the lowest pose error among recent learning‑based SLAM methods, reducing the error by roughly 23 % compared to the original VGGT‑SLAM.

Overall, VGGT‑SLAM 2.0 showcases a practical hybrid approach that marries geometric foundation models with classical SLAM optimization, delivering memory‑efficient, accurate, and real‑time dense reconstruction without requiring prior camera calibration. The forthcoming code release is expected to further accelerate research and applications in autonomous robotics.

Comments & Academic Discussion

Loading comments...

Leave a Comment