Generalizable Multimodal Large Language Model Editing via Invariant Trajectory Learning

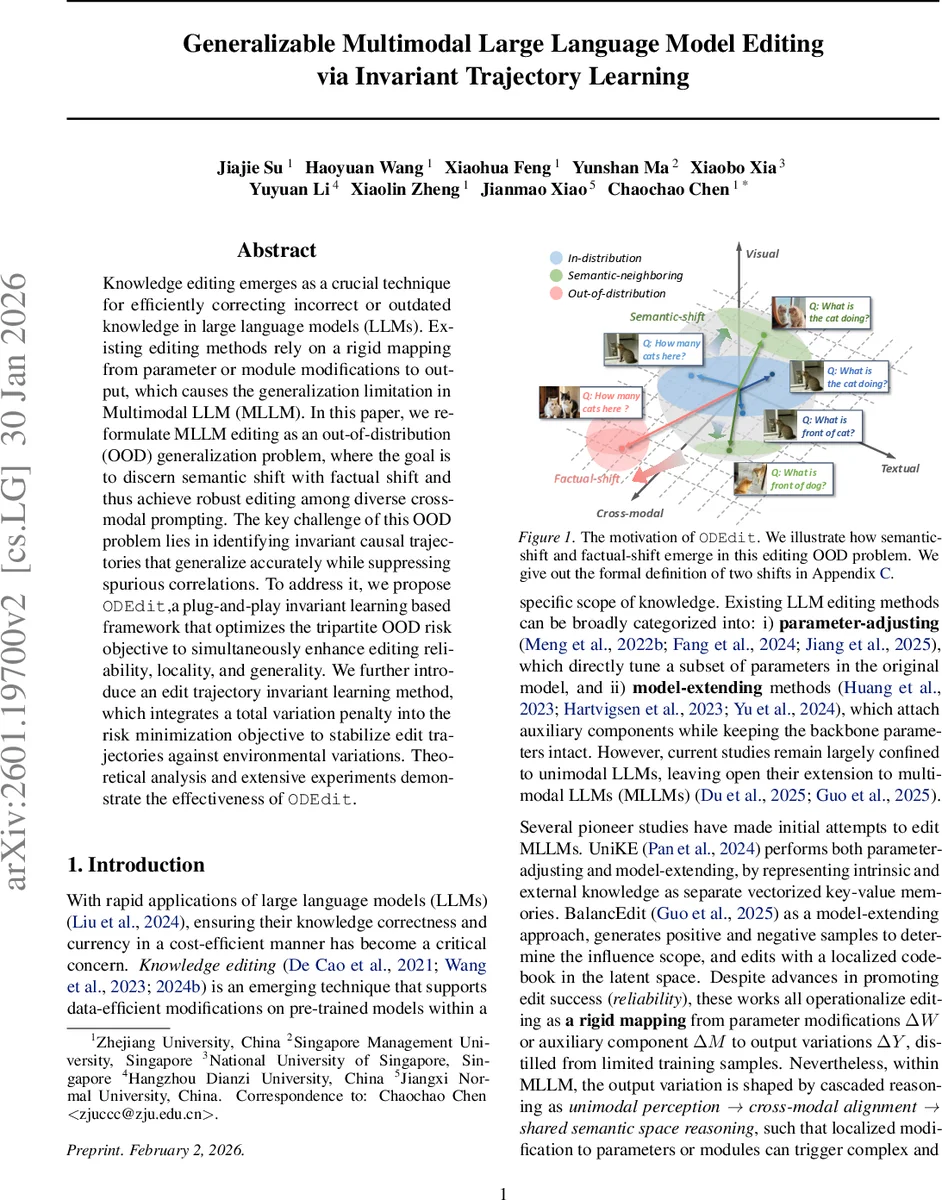

Knowledge editing has emerged as a crucial technique for efficiently correcting incorrect or outdated knowledge in large language models (LLMs). Existing editing methods rely on a rigid mapping from parameter or module modifications to outputs, which limits their generalization in multimodal LLMs (MLLMs). In this paper, we reformulate MLLM editing as an out-of-distribution (OOD) generalization problem, where the goal is to distinguish semantic shift from factual shift and thus achieve robust editing across diverse cross-modal prompts. The key challenge of this OOD problem lies in identifying invariant causal trajectories that generalize accurately while suppressing spurious correlations. To address it, we propose ODEdit, a plug-and-play framework based on invariant learning that optimizes a tripartite OOD risk objective to simultaneously enhance editing reliability, locality, and generality. We further introduce an edit trajectory invariant learning method, which integrates a total variation penalty into the risk minimization objective to stabilize edit trajectories against environmental variations. Theoretical analysis and extensive experiments demonstrate the effectiveness of ODEdit.

💡 Research Summary

The paper tackles knowledge editing in multimodal large language models (MLLMs). Existing methods, whether parameter-adjusting (e.g., ROME, MEMIT) or model-extending (e.g., LoRA, codebooks), are limited by a rigid mapping from parameter changes to output variations. This rigidity hampers generalization across the diverse cross-modal prompts that MLLMs encounter. The authors therefore reformulate MLLM editing as an out-of-distribution (OOD) generalization task and define three distinct environments: in-distribution (IN) prompts, semantic-neighbor (SE) prompts that are semantically related but not identical, and out-of-distribution (OUT) prompts that lie outside the intended editing scope.

To jointly optimize reliability (correctness on IN), locality (preserving unrelated knowledge on OUT), and generality (transfer to SE), they introduce a tripartite OOD risk:

- Reliability Risk – negative log‑likelihood of the target answer on the edited instance, ensuring the edited knowledge is correctly incorporated.

- Locality Risk – a KL‑divergence penalty between the model’s output distributions before and after editing on OUT prompts, limiting unintended side‑effects.

- Generality Risk – a Maximum Mean Discrepancy (MMD) loss that aligns hidden representations of edited prompts with those of their semantic re‑phrasings, encouraging the model to generalize the edit to semantically neighboring contexts.
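The three risk terms above can be sketched in a few lines of NumPy. This is a minimal illustration, not the authors' implementation: the function names, the direction of the KL term (before-edit distribution relative to after-edit), the RBF kernel and its `bandwidth`, the biased MMD estimator, and the weights `w_loc` and `w_gen` are all assumptions made for the example.

```python
import numpy as np

def log_softmax(logits):
    """Numerically stable log-softmax over the last axis."""
    shifted = logits - logits.max(axis=-1, keepdims=True)
    return shifted - np.log(np.exp(shifted).sum(axis=-1, keepdims=True))

def reliability_risk(logits, target_ids):
    """Mean negative log-likelihood of the target tokens.
    logits: (n, vocab); target_ids: (n,) integer token ids."""
    logp = log_softmax(logits)
    return float(-logp[np.arange(len(target_ids)), target_ids].mean())

def locality_risk(logits_before, logits_after):
    """Mean KL(p_before || p_after) on OUT prompts (direction is an assumption)."""
    logp = log_softmax(logits_before)
    logq = log_softmax(logits_after)
    return float((np.exp(logp) * (logp - logq)).sum(axis=-1).mean())

def rbf_kernel(x, y, bandwidth):
    """Pairwise RBF kernel between the rows of x and the rows of y."""
    sq_dist = ((x[:, None, :] - y[None, :, :]) ** 2).sum(axis=-1)
    return np.exp(-sq_dist / (2.0 * bandwidth ** 2))

def generality_risk(h_edit, h_rephrase, bandwidth=1.0):
    """Biased estimate of squared MMD between two sets of hidden states."""
    kxx = rbf_kernel(h_edit, h_edit, bandwidth).mean()
    kyy = rbf_kernel(h_rephrase, h_rephrase, bandwidth).mean()
    kxy = rbf_kernel(h_edit, h_rephrase, bandwidth).mean()
    return float(kxx + kyy - 2.0 * kxy)

def tripartite_risk(in_logits, target_ids, out_before, out_after,
                    h_edit, h_rephrase, w_loc=1.0, w_gen=1.0):
    """Weighted sum of the three terms; the weights are hypothetical."""
    return (reliability_risk(in_logits, target_ids)
            + w_loc * locality_risk(out_before, out_after)
            + w_gen * generality_risk(h_edit, h_rephrase))
```

In this sketch, the locality term is zero when the edit leaves OUT-prompt logits unchanged, and the generality term is zero when the two sets of hidden states coincide, which matches the intent described for each risk.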

The central methodological contribution is Edit Trajectory Invariant Learning (ETIL). Building on Invariant Risk Minimization (IRM), ETIL introduces an environment‑aware classifier and adds a Total Variation (TV) regularizer to the risk objective. The TV term penalizes fluctuations of the learned edit trajectory across environments, thereby suppressing spurious correlations and enforcing that the causal edit path remains stable under diverse visual and textual variations. Optimization proceeds via a primal‑dual scheme: the feature extractor (the “primal” variable) is updated to minimize the combined risk, while environment‑specific classifiers (the “dual” variables) are constrained to be optimal for each environment, ensuring invariance.
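To make the idea of an invariance penalty concrete, the sketch below follows the IRMv1 recipe of measuring, in each environment, the gradient of the risk with respect to a fixed dummy scalar classifier `w = 1`. IRMv1 squares that gradient; here its absolute value is used as a total-variation-flavored stand-in. The squared-error risk, the function names, the environment dictionary, and `lam` are assumptions for illustration; the summary does not give the exact form of the paper's TV term, and the primal-dual optimization is not shown.

```python
import numpy as np

def env_risk_and_grad(features, targets):
    """Squared-error risk of the dummy classifier w = 1 in one environment,
    together with d(risk)/dw evaluated at w = 1."""
    residual = features - targets                       # w * features - targets at w = 1
    risk = float(np.mean(residual ** 2))
    grad_w = float(np.mean(2.0 * residual * features))  # derivative w.r.t. w at w = 1
    return risk, grad_w

def tv_regularized_objective(envs, lam=1.0):
    """Mean risk over environments plus lam times the mean absolute gradient.
    envs maps an environment name (e.g. "IN", "SE", "OUT") to a
    (features, targets) pair of 1-D arrays."""
    risks, grads = zip(*(env_risk_and_grad(f, y) for f, y in envs.values()))
    penalty = float(np.mean(np.abs(grads)))
    return float(np.mean(risks)) + lam * penalty, penalty
```

A representation whose optimal classifier is the same in every environment yields a zero gradient at `w = 1` everywhere, so the penalty vanishes; a representation that needs a different scaling in some environment is penalized.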

The authors provide theoretical analysis showing that the TV regularizer reduces the worst‑case OOD risk bound, and that the combined tripartite risk yields a provable upper bound on editing error across all environments.

Extensive experiments are conducted on several state‑of‑the‑art multimodal LLMs (e.g., BLIP‑2, LLaVA, MiniGPT‑4). The ODEdit framework is applied as a plug‑and‑play module on top of both parameter‑adjusting and model‑extending editors. Results demonstrate that ODEdit consistently improves editing reliability (higher success rates on IN prompts), reduces locality violations (lower degradation on unrelated OUT prompts), and boosts generality (better performance on SE prompts) compared to baselines. Notably, the method remains robust under visual perturbations (lighting changes, background swaps) and textual re‑phrasings (synonyms, syntactic variations).
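The three evaluation axes can be illustrated with a small scoring helper. This is a hypothetical sketch of how such metrics are commonly computed, not the paper's evaluation code: `edited` and `original` stand for any callables that map a prompt (for an MLLM, typically an image-text pair treated here as an opaque object) to an answer string, and exact string match is an assumed scoring rule.

```python
from typing import Any, Callable, Dict, Sequence

Model = Callable[[Any], str]  # hypothetical: maps a prompt to an answer string

def match_rate(model: Model, prompts: Sequence[Any], expected: Sequence[str]) -> float:
    """Fraction of prompts for which the model's answer equals the expected one."""
    hits = sum(model(p) == e for p, e in zip(prompts, expected))
    return hits / len(prompts)

def edit_report(edited: Model, original: Model,
                in_prompts: Sequence[Any], se_prompts: Sequence[Any],
                out_prompts: Sequence[Any], target: str) -> Dict[str, float]:
    """Reliability and generality compare against the edit target;
    locality compares against the pre-edit model's own answers."""
    return {
        "reliability": match_rate(edited, in_prompts, [target] * len(in_prompts)),
        "generality": match_rate(edited, se_prompts, [target] * len(se_prompts)),
        "locality": match_rate(edited, out_prompts, [original(p) for p in out_prompts]),
    }
```

Locality is scored against the unedited model rather than against ground truth because the goal on OUT prompts is to leave behavior unchanged, whether or not the original answer was correct.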

In conclusion, the paper presents a novel perspective that treats multimodal knowledge editing as an OOD generalization problem and introduces invariant trajectory learning to achieve robust, localized, and semantically generalizable edits. ODEdit’s plug‑and‑play nature makes it applicable to a wide range of existing editing techniques, and the theoretical and empirical evidence suggests it is a significant step forward for maintaining and updating knowledge in evolving multimodal AI systems. Future directions include automated discovery of editing environments, meta‑learning of edit policies, and scaling the approach to real‑time, large‑scale deployment.
