LLM-ForcedAligner: A Non-Autoregressive and Accurate LLM-Based Forced Aligner for Multilingual and Long-Form Speech

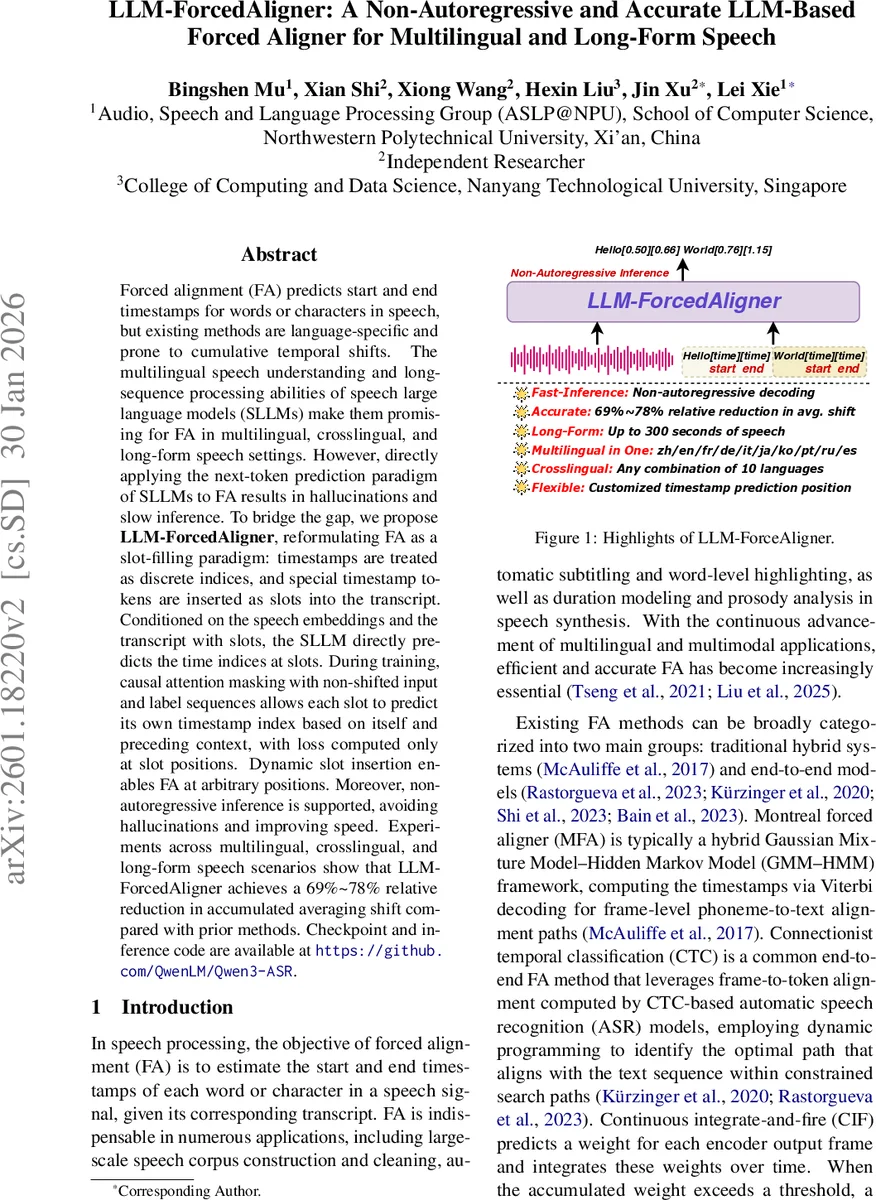

Forced alignment (FA) predicts start and end timestamps for words or characters in speech, but existing methods are language-specific and prone to cumulative temporal shifts. The multilingual speech understanding and long-sequence processing abilities of speech large language models (SLLMs) make them promising for FA in multilingual, cross-lingual, and long-form speech settings. However, directly applying the next-token prediction paradigm of SLLMs to FA results in hallucinations and slow inference. To bridge the gap, we propose LLM-ForcedAligner, reformulating FA as a slot-filling paradigm: timestamps are treated as discrete indices, and special timestamp tokens are inserted as slots into the transcript. Conditioned on the speech embeddings and the transcript with slots, the SLLM directly predicts the time indices at slots. During training, causal attention masking with non-shifted input and label sequences allows each slot to predict its own timestamp index based on itself and preceding context, with loss computed only at slot positions. Dynamic slot insertion enables FA at arbitrary positions. Moreover, non-autoregressive inference is supported, avoiding hallucinations and improving speed. Experiments across multilingual, cross-lingual, and long-form speech scenarios show that LLM-ForcedAligner achieves a 69% to 78% relative reduction in accumulated average shift (AAS) compared with prior methods. Checkpoint and inference code are available at https://github.com/QwenLM/Qwen3-ASR.
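A minimal Python sketch of how the slot-filling training targets described above could be laid out, under stated assumptions: `<ts>` is a hypothetical slot token (the real token names are not given on this page), both slots for a word are placed after it, timestamps are quantized with a hypothetical 80 ms resolution, and `logits` stands in for the SLLM's per-position scores over time indices. The causal-attention model itself is not shown. The sketch only illustrates the two training details the abstract stresses: labels are not shifted, so position i is scored against label i, and non-slot positions are masked out of the loss.

```python
import math
from typing import List, Sequence, Tuple

SLOT = "<ts>"   # HYPOTHETICAL slot token name, for illustration only
IGNORE = -100   # label excluded from the loss (PyTorch CrossEntropyLoss's default ignore_index)


def build_slotted_sequence(
    words: Sequence[str],
    spans: Sequence[Tuple[float, float]],
    frame_s: float = 0.08,  # HYPOTHETICAL time-index resolution in seconds
) -> Tuple[List[str], List[int]]:
    """Insert a start slot and an end slot after each word (placement is an assumption).

    Returns (tokens, labels). Labels hold a discrete time index at slot
    positions and IGNORE elsewhere; they are aligned with, not shifted
    against, the input tokens.
    """
    tokens: List[str] = []
    labels: List[int] = []
    for word, (start, end) in zip(words, spans):
        tokens.append(word)
        labels.append(IGNORE)  # text positions carry no loss
        for t in (start, end):
            tokens.append(SLOT)
            labels.append(round(t / frame_s))  # timestamp -> discrete time index
    return tokens, labels


def slot_loss(logits: Sequence[Sequence[float]], labels: Sequence[int]) -> float:
    """Mean cross-entropy over slot positions only.

    logits[i] are the scores over time indices at position i and are compared
    with labels[i] directly (no next-token shift).
    """
    total, count = 0.0, 0
    for row, y in zip(logits, labels):
        if y == IGNORE:
            continue
        m = max(row)  # subtract the max for a numerically stable log-sum-exp
        log_z = m + math.log(sum(math.exp(v - m) for v in row))
        total += log_z - row[y]
        count += 1
    return total / max(count, 1)


tokens, labels = build_slotted_sequence(["hello", "world"], [(0.0, 0.4), (0.48, 0.8)])
print(tokens)  # ['hello', '<ts>', '<ts>', 'world', '<ts>', '<ts>']
print(labels)  # [-100, 0, 5, -100, 6, 10]
```

Because only slot positions carry labels, slots could in principle be placed at any subset of words in the same sequence, which is one way to picture the "dynamic slot insertion" mentioned in the abstract.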

💡 Research Summary

Forced alignment (FA) is the task of assigning precise start and end timestamps to each word or character in a speech signal given its transcript. Traditional FA approaches fall into two families: hybrid GMM‑HMM systems (e.g., Montreal Forced Aligner) that rely on language‑specific phoneme lexicons and Viterbi decoding, and end‑to‑end models (CTC‑based, CIF, WhisperX) that compute frame‑level acoustic similarities and then perform monotonic path search. While these methods work reasonably well on short utterances, they suffer from two major drawbacks. First, they are tightly coupled to language‑specific resources, making multilingual deployment costly and complex. Second, small local alignment errors accumulate over long recordings, producing substantial systematic temporal shifts, as measured by the accumulated average shift (AAS).
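To make the AAS metric named above concrete, here is a small Python sketch. It ASSUMES AAS is the mean absolute difference, in milliseconds, between every predicted start/end boundary and its reference; the paper's exact definition may differ. The toy example mimics an aligner whose error grows by 10 ms per word, the kind of cumulative drift the paragraph describes.

```python
from typing import Sequence, Tuple

Span = Tuple[float, float]  # (start_ms, end_ms) for one word or character


def accumulated_average_shift(pred: Sequence[Span], ref: Sequence[Span]) -> float:
    """Mean absolute error (ms) over every start and end boundary.

    ASSUMED definition for illustration; consult the paper for the exact one.
    """
    if len(pred) != len(ref):
        raise ValueError("prediction and reference must cover the same units")
    errors = [abs(p - r) for ps, rs in zip(pred, ref) for p, r in zip(ps, rs)]
    return sum(errors) / len(errors) if errors else 0.0


# A toy aligner whose error grows by 10 ms per word, mimicking cumulative drift.
ref = [(500 * i, 500 * i + 400) for i in range(4)]
pred = [(s + 10 * i, e + 10 * i) for i, (s, e) in enumerate(ref)]
print(accumulated_average_shift(pred, ref))  # 15.0
```

With this kind of drift the averaged shift keeps rising as the recording gets longer, which is why long-form audio exposes the weakness so clearly.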

Speech large language models (SLLMs) have demonstrated strong multilingual speech understanding and the ability to process very long sequences. However, existing SLLM‑based FA methods treat timestamps as ordinary text tokens and generate them via the standard next‑token prediction paradigm. This autoregressive approach can produce non‑monotonic “hallucinations” and incurs high inference latency, especially for long audio.
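In contrast with that autoregressive generation, the slot formulation from the abstract lets all timestamps be read out at once. The Python sketch below decodes every slot from a single set of per-position scores, so no generated token is fed back into the model. Assumptions: `<ts>` is a hypothetical slot token, the hard-coded one-hot `logits` stand in for a real SLLM forward pass, and the 80 ms resolution is illustrative. `is_monotonic` checks the property that, per the paragraph, autoregressive decoding can violate.

```python
from typing import List, Sequence

SLOT = "<ts>"  # HYPOTHETICAL slot token name, for illustration only


def read_slots(tokens: Sequence[str], logits: Sequence[Sequence[float]],
               frame_s: float = 0.08) -> List[float]:
    """Decode every slot from ONE forward pass (non-autoregressive readout).

    logits[i] stands in for the SLLM's scores over time indices at position i;
    no predicted value is fed back as input, so one pass yields all timestamps.
    frame_s is a HYPOTHETICAL index resolution in seconds.
    """
    times: List[float] = []
    for tok, row in zip(tokens, logits):
        if tok == SLOT:
            idx = max(range(len(row)), key=row.__getitem__)  # argmax over time indices
            times.append(idx * frame_s)
    return times


def is_monotonic(times: Sequence[float]) -> bool:
    """True if timestamps never move backwards in time."""
    return all(a <= b for a, b in zip(times, times[1:]))


def one_hot(k: int, n: int = 12) -> List[float]:
    """Toy score vector that puts all mass on time index k."""
    return [1.0 if j == k else 0.0 for j in range(n)]


tokens = ["hi", SLOT, SLOT, "there", SLOT, SLOT]
logits = [one_hot(0), one_hot(0), one_hot(3), one_hot(0), one_hot(4), one_hot(9)]
times = read_slots(tokens, logits)
print(is_monotonic(times))  # True
```

Nothing in this readout forces monotonic output; the check only makes the property concrete. The speed benefit comes from replacing one decoding step per timestamp with a single pass over the slotted sequence.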

The paper introduces LLM‑ForcedAligner, a novel framework that reframes FA as a slot‑filling problem. The key ideas are:

- Timestamp Slots – For each word/character, two special tokens `
