BibAgent: An Agentic Framework for Traceable Miscitation Detection in Scientific Literature

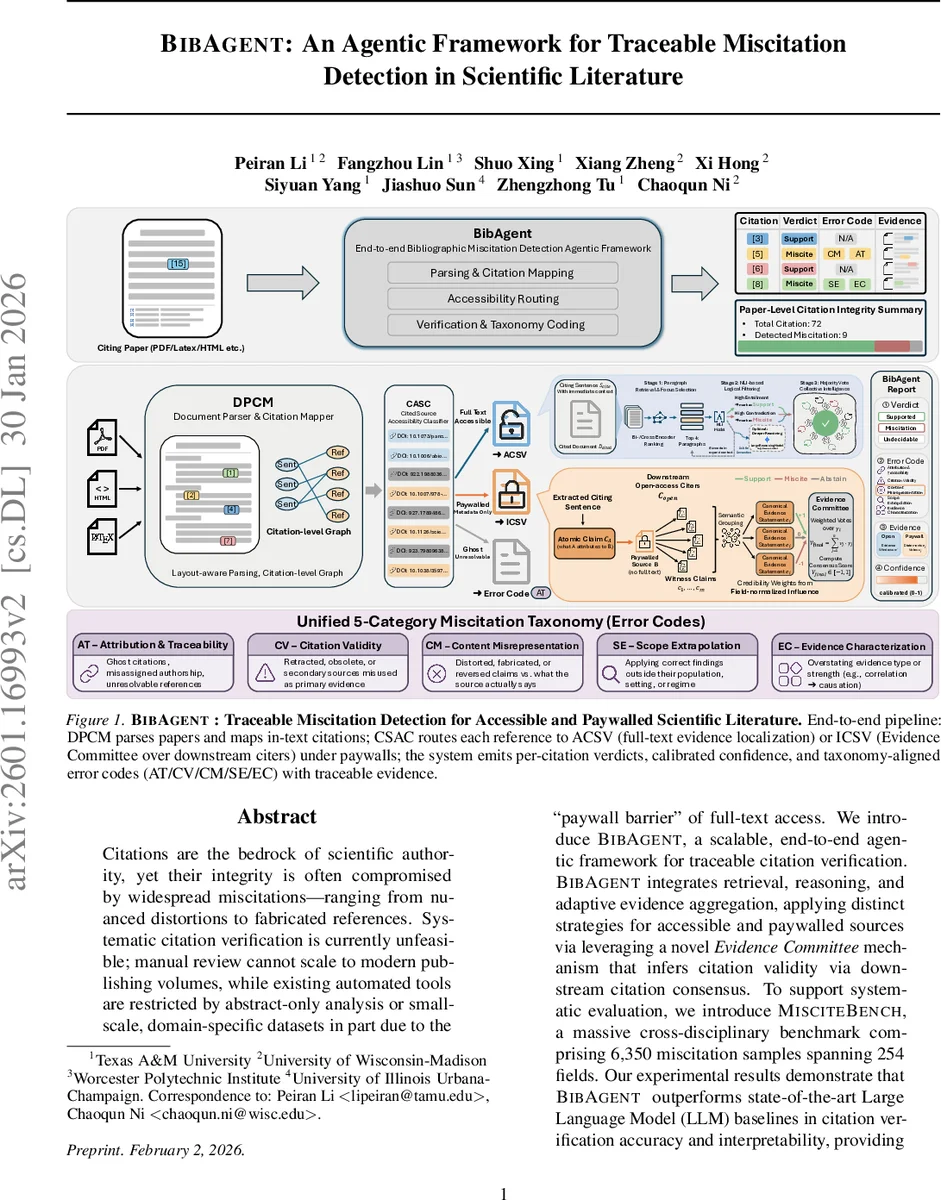

Citations are the bedrock of scientific authority, yet their integrity is compromised by widespread miscitations: ranging from nuanced distortions to fabricated references. Systematic citation verification is currently unfeasible; manual review cannot scale to modern publishing volumes, while existing automated tools are restricted by abstract-only analysis or small-scale, domain-specific datasets in part due to the “paywall barrier” of full-text access. We introduce BibAgent, a scalable, end-to-end agentic framework for automated citation verification. BibAgent integrates retrieval, reasoning, and adaptive evidence aggregation, applying distinct strategies for accessible and paywalled sources. For paywalled references, it leverages a novel Evidence Committee mechanism that infers citation validity via downstream citation consensus. To support systematic evaluation, we contribute a 5-category Miscitation Taxonomy and MisciteBench, a massive cross-disciplinary benchmark comprising 6,350 miscitation samples spanning 254 fields. Our results demonstrate that BibAgent outperforms state-of-the-art Large Language Model (LLM) baselines in citation verification accuracy and interpretability, providing scalable, transparent detection of citation misalignments across the scientific literature.

💡 Research Summary

The paper tackles the pervasive problem of miscitations—instances where a cited source is distorted, fabricated, or applied outside its valid evidential role—by introducing BibAgent, an end‑to‑end, agentic framework that can operate both when the full text of a cited paper is freely accessible and when it lies behind a paywall. BibAgent decomposes the citation‑verification task into four cooperating modules. First, the Document Parser & Citation Mapper (DPCM) normalizes heterogeneous input formats (LaTeX, PDF, HTML) into a unified hierarchical markdown representation, preserving discourse structure, mathematics, and in‑text citation anchors, and builds a sentence‑level citation graph linking each citing context to its bibliography entry. Second, the Cited Source Accessibility Classifier (CSA C) determines whether the referenced work is open‑access or paywalled, routing the citation to either the Accessible Citation Verification (A‑CSV) branch or the Inaccessible Citation Verification (I‑CSV) branch. In the A‑CSV pipeline, a bi‑encoder followed by a cross‑encoder ranks the top‑k paragraphs most relevant to the citing sentence. These paragraphs are filtered through a Natural Language Inference (NLI) model that classifies the relationship as entailment (support), contradiction (miscite), or neutral (uncertain). When the NLI confidence is low or the case is semantically subtle, a large reasoning model such as Gemini‑2.5‑Pro is invoked for deeper reasoning. The I‑CSV branch cannot read the source directly; instead it reconstructs community consensus by harvesting downstream citations that reference the same source. Each downstream citing sentence is processed by the same NLI pipeline, yielding a vote of support, refutation, or neutrality. Votes are weighted by field‑specific credibility scores, and a consensus score V_final is computed as a weighted average, producing a calibrated confidence in the inferred verdict. The final decision module maps the outcome onto a unified five‑category miscitation taxonomy: Attribution & Traceability (AT), Citation Validity (CV), Content Misrepresentation (CM), Scope Extrapolation (SE), and Evidence Characterization (EC). A Dependency Precedence Rule enforces a human‑like verification order—first traceability, then validity, then content, then scope, then evidence strength—ensuring each citation receives a single primary error code. To evaluate BibAgent, the authors construct MisciteBench, a contamination‑controlled benchmark comprising 6,350 expert‑validated miscitation instances across all 254 Clarivate Journal Citation Reports (JCR) subject categories. For each category, the most‑cited 2024–2025 research article from the top‑impact journal is selected, and a knowledge‑blank protocol guarantees that no LLM can answer forensic questions about the paper without accessing its full text. Large reasoning models then generate adversarial miscitations covering both surface‑level and deep‑semantic errors for each of the five taxonomy categories, which are cross‑validated by a second LLM and domain experts. Experiments show that BibAgent outperforms strong full‑text LLM baselines by an average of 12 percentage points in accuracy and achieves a 0.15 improvement in calibrated confidence (ECE) on MisciteBench. The I‑CSV branch, powered by the Evidence Committee, maintains >78 % accuracy despite lacking direct access to the source, demonstrating robustness to paywall constraints. Error‑type analysis reveals that BibAgent excels particularly on AT and CV categories, while also delivering superior performance on the more nuanced SE and EC categories where prior models struggled. Moreover, BibAgent provides traceable evidence windows, error codes, and confidence scores for each citation, enabling transparent audit trails. The authors conclude that BibAgent is the first scalable, taxonomy‑aware, paywall‑robust, and auditable system for miscitation detection across disciplines, and they outline future work on refining the evidence‑committee voting mechanism and integrating the framework into real‑time manuscript submission pipelines.

Comments & Academic Discussion

Loading comments...

Leave a Comment