Identity, Cooperation and Framing Effects within Groups of Real and Simulated Humans

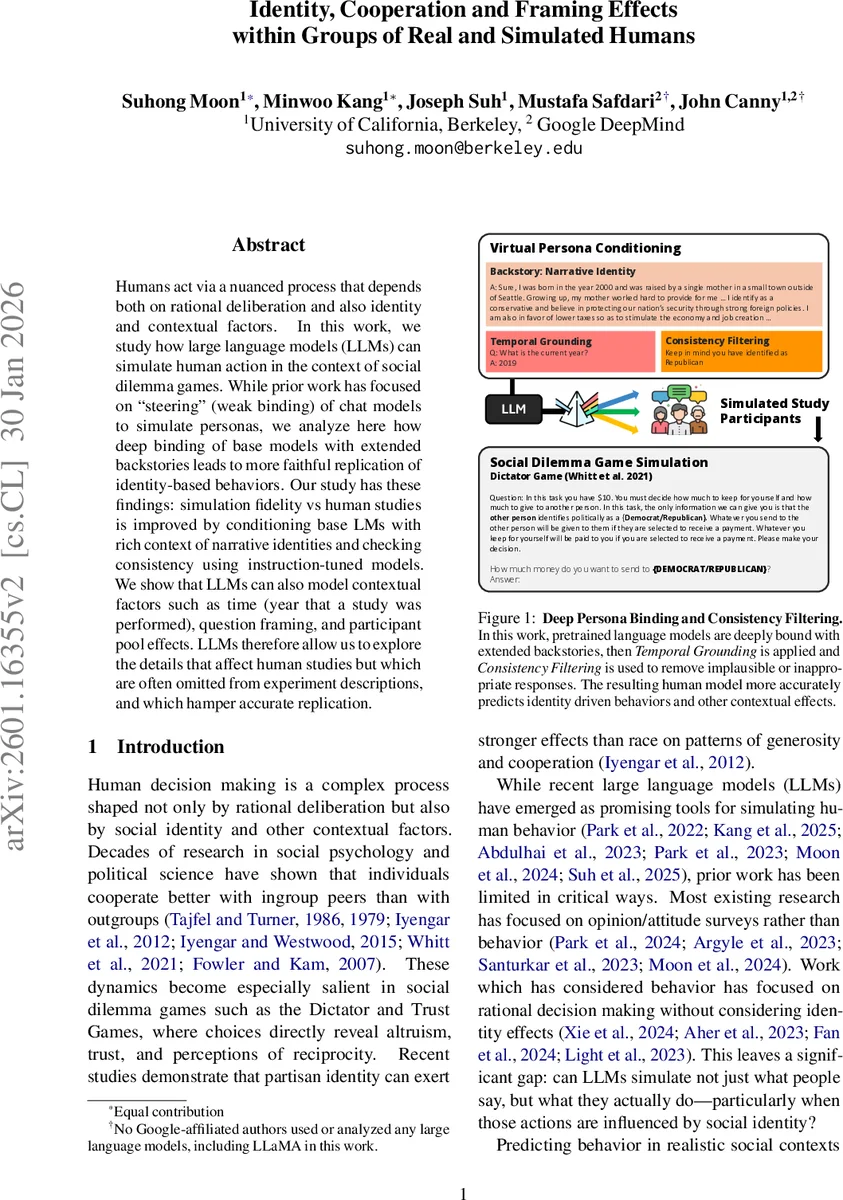

Humans act via a nuanced process that depends both on rational deliberation and also on identity and contextual factors. In this work, we study how large language models (LLMs) can simulate human action in the context of social dilemma games. While prior work has focused on “steering” (weak binding) of chat models to simulate personas, we analyze here how deep binding of base models with extended backstories leads to more faithful replication of identity-based behaviors. Our study has these findings: simulation fidelity vs human studies is improved by conditioning base LMs with rich context of narrative identities and checking consistency using instruction-tuned models. We show that LLMs can also model contextual factors such as time (year that a study was performed), question framing, and participant pool effects. LLMs, therefore, allow us to explore the details that affect human studies but which are often omitted from experiment descriptions, and which hamper accurate replication.

💡 Research Summary

This paper investigates the potential of large language models (LLMs) to simulate human decision-making in social dilemmas, with a specific focus on how social identity and contextual factors influence behavior. The central thesis is that LLMs, when properly conditioned, can move beyond simulating simple survey responses to faithfully replicate complex, identity-driven actions observed in behavioral economics games.

The authors identify a critical limitation in prior work: an overreliance on superficially “steering” instruction-tuned chat models to create personas, which often fails to capture the depth and consistency of real human behavior rooted in identity. To address this, they propose a methodology centered on “deep binding” of pretrained base models (PT) with extensive narrative backstories. These backstories are generated by interviewing PT models using questions from real-world surveys, resulting in rich, first-person biographies that encapsulate demographics, personal history, and political beliefs.

The methodological innovation is threefold. First, it advocates for using pretrained base models over instruction-tuned (IT) models. The paper provides compelling evidence that PT models are inherently superior for human simulation because their training data contains a vast diversity of latent human “voices” and contexts. In contrast, IT models are fine-tuned to adopt a single “helpful agent” persona, which strips away diversity and leads to a “Lake Wobegon Effect” where simulated personas are unrealistically positive and homogeneous. This is quantified by showing IT models have 50-100% higher perplexity than their PT counterparts on human dialogue data.

Second, the paper introduces Temporal Grounding, a technique where the simulation is explicitly anchored to the year the original human study was conducted (e.g., “The current year is 2007”). This allows the model to reflect temporal shifts in societal attitudes, such as increasing partisan animosity over time.

Third, Consistency Filtering is employed during simulation. By periodically injecting prompts that remind the model of its core persona identity (e.g., “Remember, you are a Republican…”), the method mitigates semantic drift and ensures the model stays in character throughout extended interactions.

The proposed approach is evaluated using classic behavioral games: the Dictator Game and the Trust Game. The experiments meticulously replicate the designs and wording from prior human studies on partisan bias (Iyengar & Westwood, 2015; Whitt et al., 2021). The deep-binding method with temporal grounding and consistency filtering demonstrates significantly improved alignment with human data compared to baseline prompting strategies (simple QA, Bio, or Portray methods). The LLM-simulated personas successfully reproduce key phenomena like in-group favoritism, where simulated partisans allocate more money to co-partisans than to opposing partisans.

Beyond mere replication, the paper highlights the exploratory power of LLM simulation. It uses models to investigate why two similar human studies conducted in different years (2007 vs. 2021) found different effect sizes. The LLM simulations suggest that the “year” or temporal context itself can be a significant explanatory variable, mimicking the hypothesized increase in real-world polarization. This points to a novel application: using LLMs as a tool to systematically probe the impact of “hidden variables”—such as subtle differences in question framing, participant pool composition, or temporal context—that are often omitted from experimental descriptions but can hamper accurate replication across studies.

In conclusion, the research makes a strong case that LLMs, particularly pretrained base models conditioned with deep narrative identities and contextual controls, can serve as high-fidelity, flexible platforms for simulating human social behavior. This opens new avenues for theory testing, exploring experimental confounds, and ultimately improving the robustness and reproducibility of social science research.

Comments & Academic Discussion

Loading comments...

Leave a Comment