LogicScore: Fine-grained Logic Evaluation of Conciseness, Completeness, and Determinateness in Attributed Question Answering

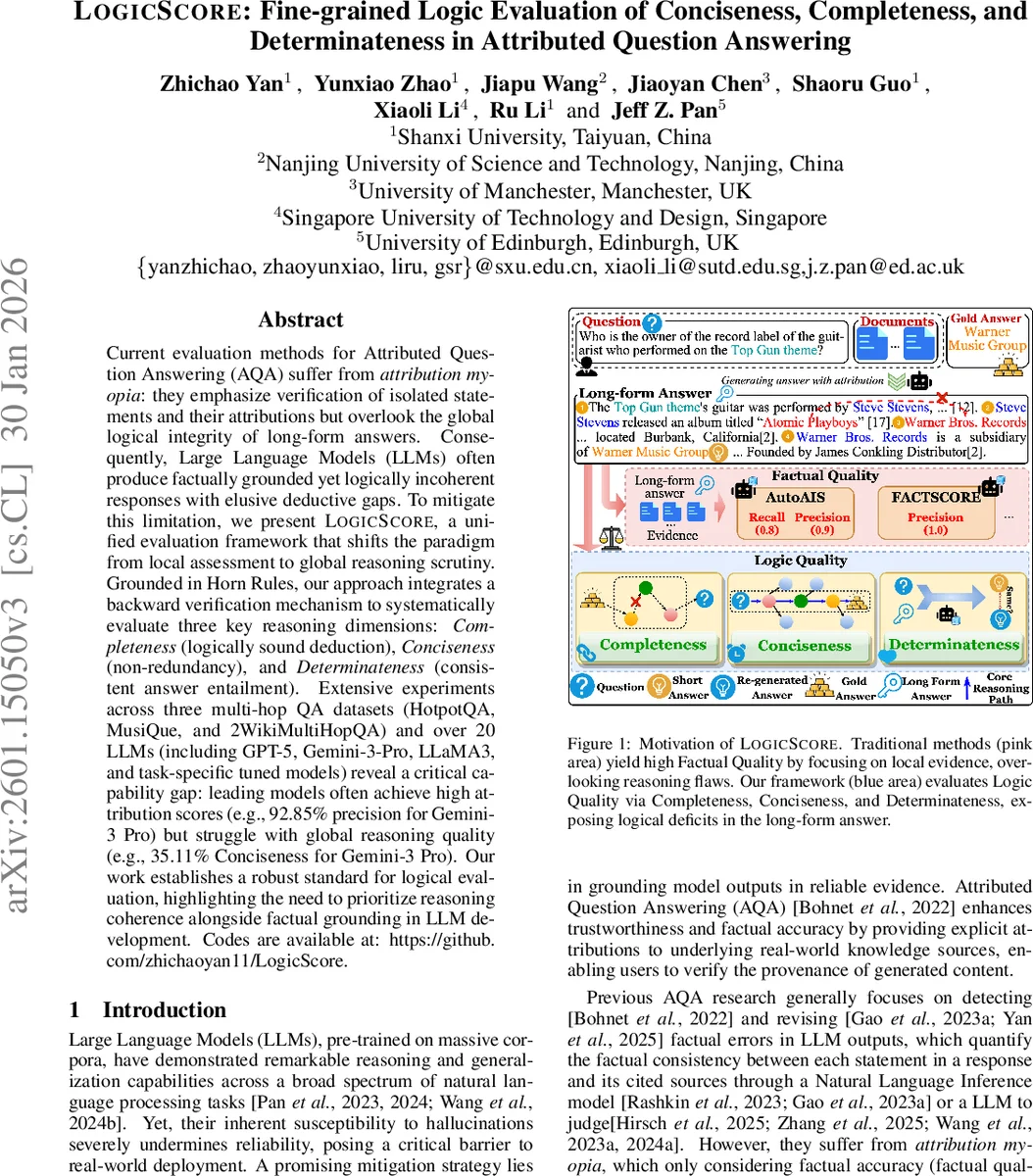

Current evaluation methods for Attributed Question Answering (AQA) suffer from \textit{attribution myopia}: they emphasize verification of isolated statements and their attributions but overlook the global logical integrity of long-form answers. Consequently, Large Language Models (LLMs) often produce factually grounded yet logically incoherent responses with elusive deductive gaps. To mitigate this limitation, we present \textsc{LogicScore}, a unified evaluation framework that shifts the paradigm from local assessment to global reasoning scrutiny. Grounded in Horn Rules, our approach integrates a backward verification mechanism to systematically evaluate three key reasoning dimensions: \textit{Completeness} (logically sound deduction), \textit{Conciseness} (non-redundancy), and \textit{Determinateness} (consistent answer entailment). Extensive experiments across three multi-hop QA datasets (HotpotQA, MusiQue, and 2WikiMultiHopQA) and over 20 LLMs (including GPT-5, Gemini-3-Pro, LLaMA3, and task-specific tuned models) reveal a critical capability gap: leading models often achieve high attribution scores (e.g., 92.85% precision for Gemini-3 Pro) but struggle with global reasoning quality (e.g., 35.11% Conciseness for Gemini-3 Pro). Our work establishes a robust standard for logical evaluation, highlighting the need to prioritize reasoning coherence alongside factual grounding in LLM development. Codes are available at: https://github.com/zhichaoyan11/LogicScore.

💡 Research Summary

The paper addresses a critical blind spot in the evaluation of Attributed Question Answering (AQA) systems: existing metrics focus almost exclusively on factual correctness and the accuracy of source attributions, while ignoring the global logical coherence of long‑form answers. The authors term this shortcoming “attribution myopia.” To remedy it, they introduce LogicScore, a unified framework that evaluates the logical quality of generated answers along three dimensions—Completeness, Conciseness, and Determinateness—using a formalism based on Definite Horn Rules.

Methodology

- Answer Generation: A question and a set of retrieved documents are fed to a large language model (LLM) with a chain‑of‑thought (CoT) prompt. The model produces a detailed long‑form answer (LA) containing citation markers and a concise short answer (SA).

- Logic Transformation: An LLM‑based transformation parses the LA into a set of atomic propositions (P_i). These are expressed as Horn clauses, yielding a logical chain of the form (SA \leftarrow P_1 \land P_2 \land \dots \land P_n). Each proposition is broken into a triple (subject, relation, object).

- Logic Quality Evaluation:

- Completeness is computed by a backward‑chaining algorithm that starts from propositions containing the gold answer entity and attempts to connect them to the question entity through shared intermediate entities. If a continuous path exists, Completeness = 1, otherwise 0.

- Conciseness measures the density of essential reasoning steps: it is the ratio (|P_{\text{min}}| / |P|), where (P_{\text{min}}) is the minimal sufficient set of propositions forming a valid path. If no path exists, Conciseness = 0.

- Determinateness checks inferential certainty via a re‑inference step. The LA is treated as the sole premise context, and the LLM is asked to generate a new short answer (\hat{SA}). Determinateness is 1 if (\hat{SA}=SA), otherwise 0. This mirrors self‑consistency verification.

Experiments

The authors evaluate LogicScore on three multi‑hop QA benchmarks—HotpotQA, MusiQue, and 2WikiMultiHopQA—using more than 20 contemporary LLMs, including proprietary models (GPT‑5, Gemini‑3‑Pro) and open‑source models (LLaMA‑3, Qwen‑3), as well as task‑specific supervised fine‑tuned (SFT) variants. Key findings include:

- High factual attribution scores do not guarantee logical quality. For example, Gemini‑3‑Pro achieves 92.85 % attribution precision but only 0.41 Completeness, 0.35 Conciseness, and 0.48 Determinateness.

- A “scaling paradox” emerges: larger models retrieve more correct facts yet tend to over‑produce evidence, inflating answer length and reducing Conciseness.

- SFT models, while sometimes lagging in raw factual recall, often produce more logically coherent answers, suggesting that targeted fine‑tuning can improve reasoning structure.

Analysis and Limitations

LogicScore’s reliance on LLM‑driven Horn‑rule extraction introduces potential transformation errors; the quality of the logical chain depends on the correctness of the intermediate parsing step. Moreover, the current framework handles only deterministic, conjunctive reasoning and cannot directly evaluate probabilistic or arithmetic reasoning, limiting its applicability to more complex domains. Human‑LLM correlation studies are needed to validate that LogicScore scores align with expert judgments.

Conclusion

The work establishes a robust, automated metric for assessing the logical integrity of AQA outputs, complementing existing factual metrics. By quantifying how well a model’s reasoning chain connects question to answer, eliminates redundancy, and deterministically entails the final answer, LogicScore highlights a crucial dimension for future LLM development. The authors suggest extending the framework to richer logical formalisms (first‑order or probabilistic logic) and integrating human‑in‑the‑loop verification to further enhance evaluation fidelity.

Comments & Academic Discussion

Loading comments...

Leave a Comment